Evaluating High-Resolution Piano Sustain Pedal Depth Estimation with Musically Informed Metrics

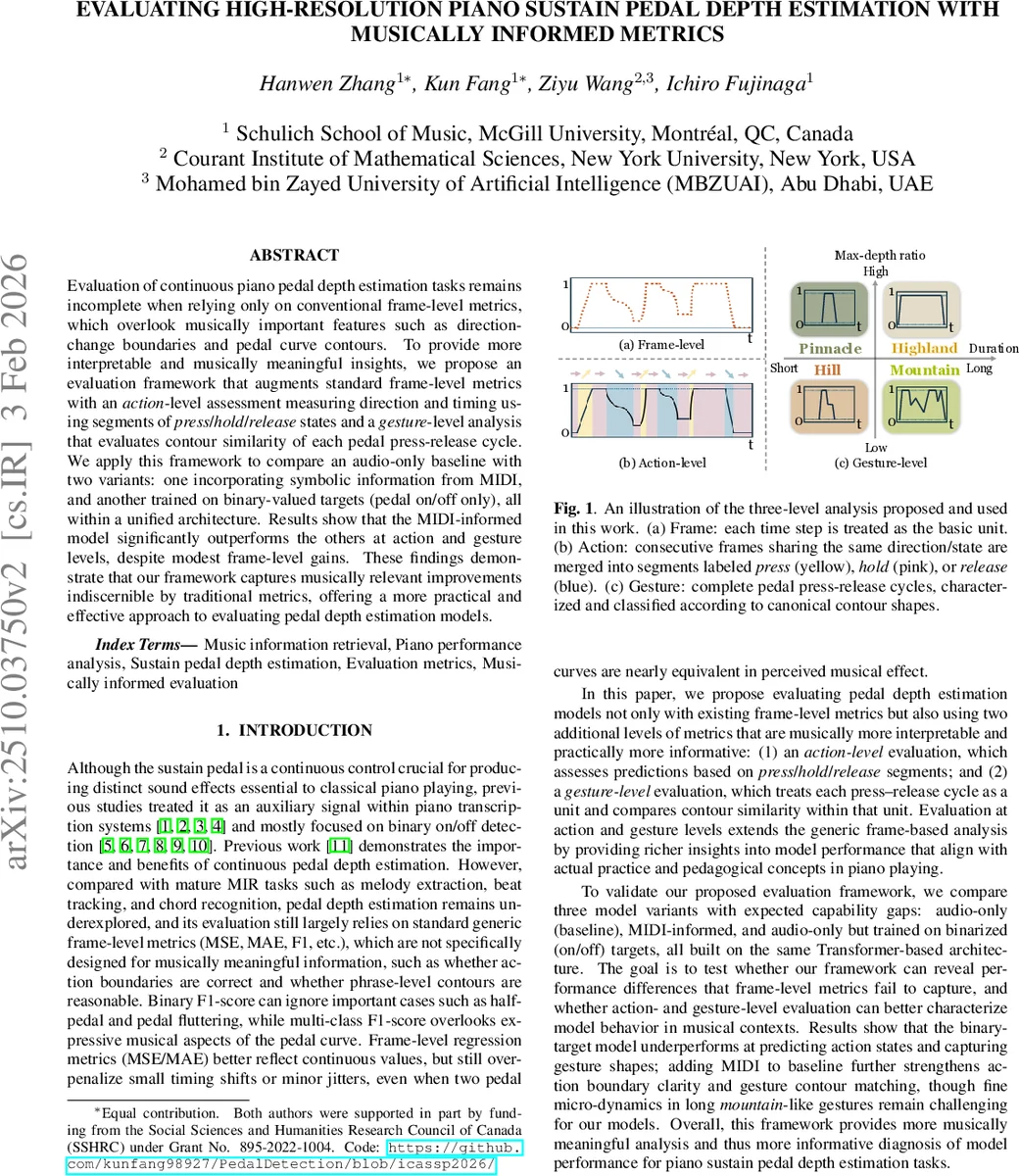

Evaluation for continuous piano pedal depth estimation tasks remains incomplete when relying only on conventional frame-level metrics, which overlook musically important features such as direction-change boundaries and pedal curve contours. To provide more interpretable and musically meaningful insights, we propose an evaluation framework that augments standard frame-level metrics with an action-level assessment measuring direction and timing using segments of press/hold/release states and a gesture-level analysis that evaluates contour similarity of each press-release cycle. We apply this framework to compare an audio-only baseline with two variants: one incorporating symbolic information from MIDI, and another trained in a binary-valued setting, all within a unified architecture. Results show that the MIDI-informed model significantly outperforms the others at action and gesture levels, despite modest frame-level gains. These findings demonstrate that our framework captures musically relevant improvements indiscernible by traditional metrics, offering a more practical and effective approach to evaluating pedal depth estimation models.

💡 Research Summary

This paper addresses a critical gap in the evaluation of continuous piano sustain‑pedal depth estimation: conventional frame‑level metrics such as mean‑squared error (MSE), mean absolute error (MAE), and binary or multi‑class F1 scores do not capture musically salient aspects of pedaling, such as the precise timing of press‑hold‑release transitions or the overall shape of a pedal gesture. To remedy this, the authors propose a three‑tiered evaluation framework that augments standard frame‑wise measures with (i) an action‑level assessment and (ii) a gesture‑level assessment, both of which are grounded in piano pedagogy and performance practice.

Action‑level evaluation converts the continuous pedal curve into a sequence of discrete directional states—press, hold, and release—by applying a sliding‑window linear regression (window size 19, slope threshold 0.005, minimum R² 0.5). The resulting three‑class sequence is treated as a classification problem, and precision, recall, and F1 are reported per class as well as macro‑averaged and weighted‑averaged scores. This level directly measures how well a model captures the boundaries that musicians consider most important.

Gesture‑level evaluation defines a gesture as a maximal interval where the pedal depth stays above a small threshold ε, from the first crossing upward to the subsequent crossing downward. Gestures are further categorized into four canonical shapes—Pinnacle, Hill, Highland, and Mountain—based on two axes: duration (short vs. long) and max‑depth ratio r (high vs. low). The max‑depth ratio quantifies how much of the gesture remains near its peak. For each gesture type, similarity between predicted and ground‑truth contours is measured using two complementary methods: (1) a Fourier‑descriptor approach that retains the first 11 coefficients to suppress high‑frequency noise, and (2) a 5‑point descriptor (start, end, mean, median, max) with duration‑weighted MSE. These metrics reward overall contour similarity while tolerating small local jitter, reflecting the musical notion that a “correct” gesture need not match every sample exactly.

To validate the framework, the authors train three variants of a Transformer‑based model on the MAESTRO v3.0 dataset, keeping architecture and hyper‑parameters constant:

- AUDIO (binary) – trained on binary on/off targets, using the raw sigmoid output as a continuous estimate.

- AUDIO – standard regression on audio features (log‑mel spectrogram + MFCC).

- AUDIO + MIDI – same audio regression plus frame‑aligned MIDI note‑velocity vectors as an additional modality.

All models predict frame‑wise depth, onset/offset binary events, and a segment‑level global depth, optimized with a weighted sum of MSE and binary cross‑entropy losses. Training uses AdamW, a OneCycle scheduler, and 15 epochs on a single NVIDIA H100.

Results:

- Frame‑level: AUDIO + MIDI achieves the lowest MSE (0.0280) and MAE (0.0986), outperforming AUDIO (0.0416/0.1237) and AUDIO (binary) (0.0582/0.1502).

- Action‑level: Macro‑F1 improves from 0.5739 (binary) → 0.6070 (audio) → 0.6964 (audio+MIDI). Weighted‑F1 shows a similar trend, indicating that MIDI information markedly enhances the detection of press, hold, and release states.

- Gesture‑level: For each of the four gesture categories, the Fourier‑based MSE and 5‑point MSE are consistently lowest for the MIDI‑infused model (e.g., Mountain: 0.0457 vs. 0.0689 vs. 0.0863). This demonstrates that the model not only predicts the correct timing but also reproduces the intended contour shapes (e.g., long “Highland” plateaus or short “Pinnacle” spikes).

The authors argue that the proposed evaluation framework uncovers performance differences invisible to traditional metrics. For instance, while the binary model’s frame‑wise errors are only modestly higher, its action‑level recall for “hold” drops dramatically, revealing a failure to sustain pedal depressions. Similarly, gesture‑level analysis shows that even the best model struggles with long “Mountain” gestures that contain subtle oscillations, pointing to a concrete direction for future model improvements.

In conclusion, the paper makes two substantive contributions: (1) a musically informed, multi‑scale evaluation suite that aligns model assessment with how pianists conceptualize pedaling, and (2) empirical evidence that multimodal input (audio + MIDI) yields measurable gains not only in raw regression error but, more importantly, in the musical fidelity of pedal actions and gestures. The framework is released publicly, enabling reproducible benchmarking and providing diagnostic feedback for subsequent research on continuous control signals in music information retrieval. Future work may extend the approach to other expressive controls (e.g., vibrato, dynamics) and explore real‑time deployment scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment