Evalet: Evaluating Large Language Models by Fragmenting Outputs into Functions

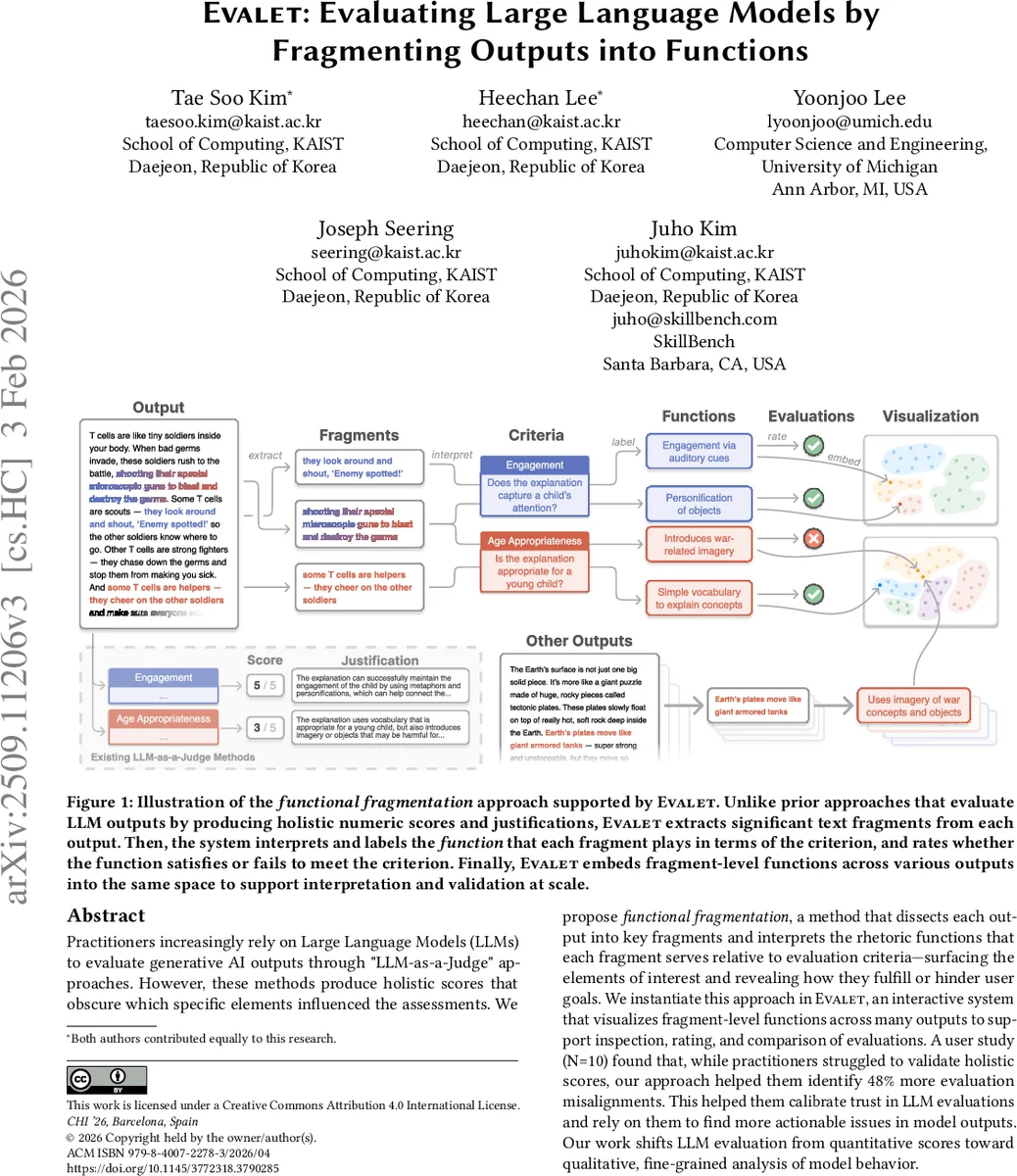

Practitioners increasingly rely on Large Language Models (LLMs) to evaluate generative AI outputs through “LLM-as-a-Judge” approaches. However, these methods produce holistic scores that obscure which specific elements influenced the assessments. We propose functional fragmentation, a method that dissects each output into key fragments and interprets the rhetoric functions that each fragment serves relative to evaluation criteria – surfacing the elements of interest and revealing how they fulfill or hinder user goals. We instantiate this approach in Evalet, an interactive system that visualizes fragment-level functions across many outputs to support inspection, rating, and comparison of evaluations. A user study (N=10) found that, while practitioners struggled to validate holistic scores, our approach helped them identify 48% more evaluation misalignments. This helped them calibrate trust in LLM evaluations and rely on them to find more actionable issues in model outputs. Our work shifts LLM evaluation from quantitative scores toward qualitative, fine-grained analysis of model behavior.

💡 Research Summary

The paper introduces Evalet, an interactive system that re‑imagines the “LLM‑as‑a‑Judge” paradigm by breaking down model outputs into meaningful text fragments and assigning each fragment a rhetorical function relative to user‑defined evaluation criteria. Traditional LLM‑based evaluators produce a single holistic score (e.g., 3 out of 5) and a short justification, which obscures which specific parts of the output drove the rating. Evalet’s functional fragmentation first extracts salient fragments—sentence‑level, clause‑level, or keyword‑based units—using an LLM that parses the output structure. For each fragment, a second LLM query asks what rhetorical function the fragment serves for a given criterion (e.g., “personification”, “war‑related imagery”, “engagement”). The system then labels the fragment as either aligned (satisfying the criterion) or misaligned (violating it).

All fragment‑function pairs are visualized in two complementary ways. A per‑output view lists the functions, allowing users to jump directly to the text that matters, while a global view embeds every fragment in a high‑dimensional semantic space (e.g., Sentence‑BERT) and projects it to 2‑D using t‑SNE/UMAP. Fragments sharing the same function cluster together, revealing systematic patterns such as over‑reliance on war metaphors across many outputs. Cluster‑level alignment ratios are displayed, enabling quick consistency checks across the dataset.

A within‑subjects user study with ten practitioners compared Evalet against a baseline that only shows holistic scores and justifications. Participants using Evalet identified 48 % more instances where the LLM evaluator’s judgment diverged from their own assessment, leading to higher calibration of trust in the automated evaluator. They reported that the fragment‑level view made verification easier, reduced the need to read entire outputs, and supported an inductive‑coding style workflow: starting from a broad criterion, the system surfaced previously unnoticed “codes” (functions) that highlighted hidden strengths or weaknesses.

The authors position Evalet relative to prior fine‑grained evaluation methods such as FactScore, Scarecrow, and BooookScore, which rely on pre‑defined error categories. In contrast, Evalet’s emergent function labeling lets the LLM generate novel, domain‑specific insights without a fixed taxonomy. The paper also discusses limitations: fragment extraction and function labeling depend heavily on prompt engineering; clustering large corpora can be computationally expensive; and the quality of function labels may vary across LLM versions. Future work includes building domain‑specific function ontologies, incorporating human‑in‑the‑loop validation to improve label reliability, and optimizing incremental embedding updates for real‑time analysis.

Overall, Evalet shifts LLM evaluation from opaque numeric scores toward a qualitative, fine‑grained analysis of model behavior. By surfacing the exact textual elements that fulfill or violate evaluation criteria, it enhances transparency, supports systematic error discovery, and improves practitioner trust in LLM‑based evaluation pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment