Fast Task Planning with Neuro-Symbolic Relaxation

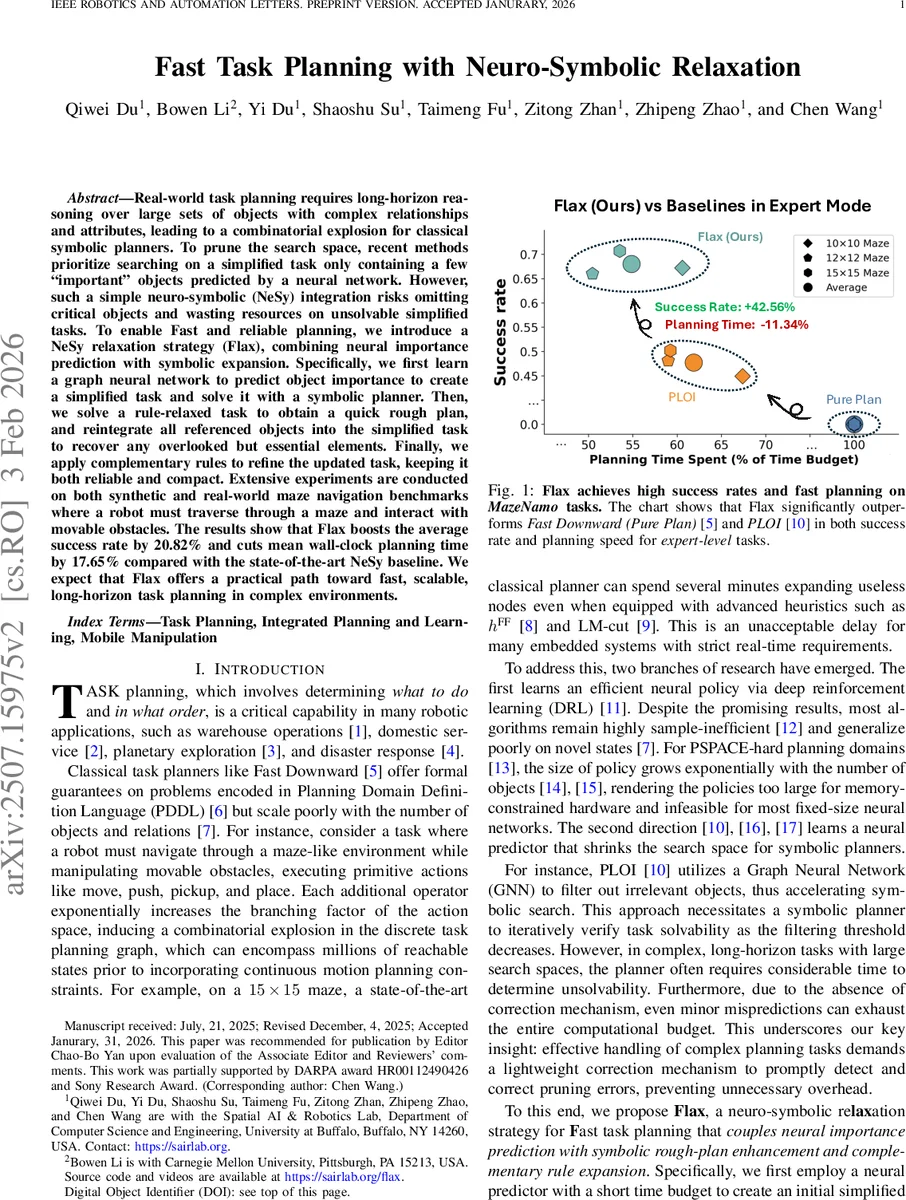

Real-world task planning requires long-horizon reasoning over large sets of objects with complex relationships and attributes, leading to a combinatorial explosion for classical symbolic planners. To prune the search space, recent methods prioritize searching on a simplified task only containing a few ``important" objects predicted by a neural network. However, such a simple neuro-symbolic (NeSy) integration risks omitting critical objects and wasting resources on unsolvable simplified tasks. To enable Fast and reliable planning, we introduce a NeSy relaxation strategy (Flax), combining neural importance prediction with symbolic expansion. Specifically, we first learn a graph neural network to predict object importance to create a simplified task and solve it with a symbolic planner. Then, we solve a rule-relaxed task to obtain a quick rough plan, and reintegrate all referenced objects into the simplified task to recover any overlooked but essential elements. Finally, we apply complementary rules to refine the updated task, keeping it both reliable and compact. Extensive experiments are conducted on both synthetic and real-world maze navigation benchmarks where a robot must traverse through a maze and interact with movable obstacles. The results show that Flax boosts the average success rate by 20.82% and cuts mean wall-clock planning time by 17.65% compared with the state-of-the-art NeSy baseline. We expect that Flax offers a practical path toward fast, scalable, long-horizon task planning in complex environments.

💡 Research Summary

The paper introduces Flax, a neuro‑symbolic relaxation framework designed to accelerate long‑horizon task planning for robots operating in complex environments with many objects and intricate relationships. Classical symbolic planners such as Fast Downward guarantee completeness and optimality but suffer from combinatorial explosion as the number of objects grows. Recent neuro‑symbolic approaches mitigate this by using neural networks to predict “important” objects and prune the search space (e.g., PLOI). However, a single neural filter can discard critical objects, leading to unsolvable reduced problems and wasted computation.

Flax tackles this issue through a three‑stage pipeline that combines fast neural filtering with lightweight symbolic correction mechanisms:

-

Neural Importance Prediction – A graph neural network (GNN) is trained to assign an importance score to every object in a PDDL task. Supervision comes from optimal plans generated by a symbolic planner: objects that appear in the optimal plan are labeled as important (1), all others as unimportant (0). The GNN minimizes binary cross‑entropy loss on these labels. During inference, objects with scores above a threshold q are retained, forming a simplified task τ_O1. The threshold is gradually lowered until a plan is found or a time budget Δt₁ expires.

-

Rule‑Based Relaxation and Rough Planning – If τ_O1 cannot be solved within Δt₁, Flax constructs a relaxed version of the original problem by applying domain‑specific relaxation rules (e.g., temporarily ignoring weight constraints, allowing objects to overlap, or removing stacking restrictions). The relaxed problem is solved quickly within a second time budget Δt₂, producing a rough plan μ_r. All objects referenced in μ_r are merged back into the importance set, yielding O₂. This step does not aim for a valid full plan; its purpose is to surface any objects that the neural filter missed, thereby correcting pruning errors with minimal overhead.

-

Complementary Rule Expansion – Finally, a set of complementary rules re‑introduces essential domain constraints that were relaxed in step 2 (e.g., “a held object requires a free adjacent cell”, “heavy boxes must rest on the floor”). Applying these rules to O₂ produces a final object set O₃, which is used to instantiate the original PDDL problem τ_O₃. The symbolic planner then searches for a complete plan within the remaining time budget. The resulting plan is validated on the original task to ensure correctness.

The authors evaluate Flax on three tiers of environments:

- MazeNamo (MiniGrid) – A newly introduced benchmark where a robot must navigate a maze while manipulating heavy and light boxes, with stacking, pick‑and‑place, and orientation constraints. Experiments cover three map sizes (9×9, 12×12, 15×15) and four difficulty levels.

- Isaac Sim Forklift‑Warehouse – High‑fidelity simulation of a forklift moving pallets and obstacles, testing scalability to continuous‑space dynamics.

- Real‑World Unitree Go2 – A quadruped equipped with a D1 manipulator executing the same planning pipeline on physical mazes with movable obstacles.

Across all settings, Flax achieves a 20.82 % increase in average success rate and a 17.65 % reduction in mean wall‑clock planning time compared to the state‑of‑the‑art neuro‑symbolic baseline (PLOI). In the hardest expert‑level 15×15 mazes, Fast Downward alone exceeds 100 seconds per problem, whereas Flax consistently solves them within ~30 seconds, meeting real‑time constraints for embedded robotic systems. The method also transfers seamlessly to the real robot: the same model generates plans that are directly executed by the Go2, closing the loop from abstract PDDL reasoning to kinodynamic motion without additional tuning.

Key contributions highlighted by the authors are:

- A unified neuro‑symbolic framework that merges fast neural importance prediction with symbolic rough‑plan enhancement and complementary rule expansion, achieving both speed and reliability.

- Domain‑specific relaxation and complementarity mechanisms that act as a lightweight correction layer, quickly recovering from neural pruning errors without exhaustive re‑search.

- The MazeNamo benchmark, which extends classic Sokoban‑style tasks with multiple object categories, stacking, and orientation constraints, providing a rigorous testbed for future planners.

The paper also discusses limitations. Designing relaxation and complementary rules requires domain expertise and may not generalize automatically across disparate tasks. Over‑relaxation could produce meaningless rough plans, while insufficient relaxation may fail to expose missed objects. Future work is suggested in automatic rule learning, multi‑domain generalization, and tighter integration with continuous motion planning.

In summary, Flax demonstrates that a carefully orchestrated combination of neural filtering and symbolic correction can dramatically improve planning efficiency for long‑horizon, object‑rich robotic tasks, offering a practical pathway toward scalable, real‑time task planning in complex real‑world environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment