AI-Generated Video Detection via Perceptual Straightening

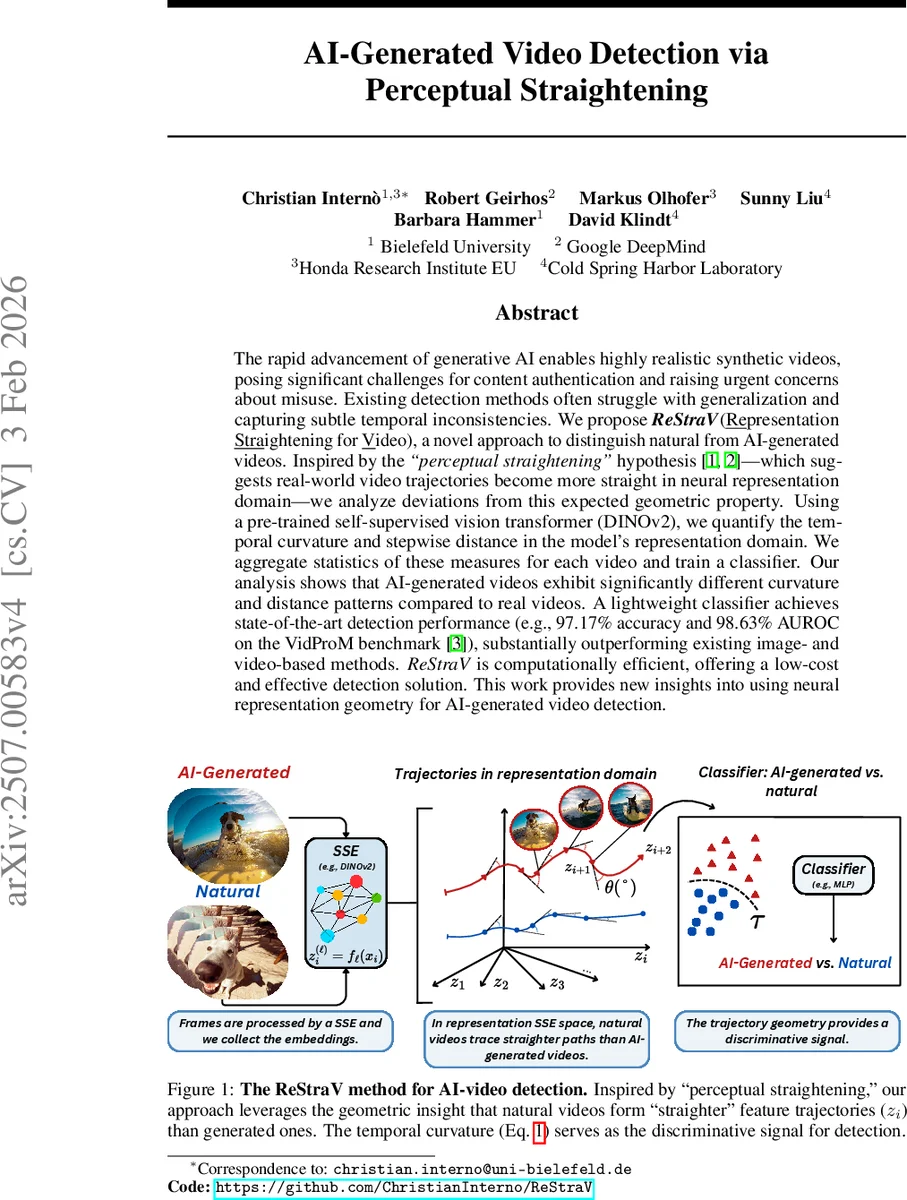

The rapid advancement of generative AI enables highly realistic synthetic videos, posing significant challenges for content authentication and raising urgent concerns about misuse. Existing detection methods often struggle with generalization and capturing subtle temporal inconsistencies. We propose ReStraV(Representation Straightening Video), a novel approach to distinguish natural from AI-generated videos. Inspired by the “perceptual straightening” hypothesis – which suggests real-world video trajectories become more straight in neural representation domain – we analyze deviations from this expected geometric property. Using a pre-trained self-supervised vision transformer (DINOv2), we quantify the temporal curvature and stepwise distance in the model’s representation domain. We aggregate statistics of these measures for each video and train a classifier. Our analysis shows that AI-generated videos exhibit significantly different curvature and distance patterns compared to real videos. A lightweight classifier achieves state-of-the-art detection performance (e.g., 97.17% accuracy and 98.63% AUROC on the VidProM benchmark), substantially outperforming existing image- and video-based methods. ReStraV is computationally efficient, it is offering a low-cost and effective detection solution. This work provides new insights into using neural representation geometry for AI-generated video detection.

💡 Research Summary

The paper introduces ReStraV (Representation Straightening for Video), a novel method for detecting AI‑generated videos that leverages the perceptual‑straightening hypothesis from neuroscience. The hypothesis states that biological visual systems transform temporally curved trajectories in pixel space into nearly straight paths in internal neural representations. The authors hypothesize that a similar effect can be observed in artificial neural networks: natural videos will follow smoother, less curved trajectories in a deep feature space, whereas synthetic videos will exhibit higher curvature and irregular stepwise distances.

To test this, the authors use a pre‑trained self‑supervised vision transformer, DINOv2 (ViT‑S/14). For each video they sample 24 frames over a 2‑second window, resize them to 224×224, and feed them through DINOv2. From the final transformer block they extract the CLS token and all 196 patch embeddings, concatenate them into a 75,648‑dimensional vector per frame, and form a temporal sequence Z = (z₁,…,z_T). They compute the displacement vectors Δz_i = z_{i+1} – z_i, the L2 norm d_i = ‖Δz_i‖ (stepwise distance), and the angle θ_i between successive displacement vectors (curvature) via the arccosine of their cosine similarity.

For each video they summarize the distance and curvature series with four statistical moments: mean, minimum, maximum, and variance, yielding an 8‑dimensional feature vector (μ_d, min_d, max_d, σ²_d, μ_θ, min_θ, max_θ, σ²_θ). A lightweight classifier (e.g., a multilayer perceptron or logistic regression) is trained on these features to discriminate real from synthetic content.

The authors evaluate ReStraV on several large‑scale benchmarks, including VidProM, GenVidBench, and Physics‑IQ, covering a variety of generative models (Pika, VideoCrafter2, Text2Video‑Zero, ModelScope, Sora). They compare 14 different visual encoders (supervised CNNs, other self‑supervised models, HVS‑inspired filters, spatio‑temporal video networks) and find that DINOv2 produces the largest curvature gap (Δθ ≈ 45°) between natural and AI‑generated videos. Importantly, absolute straightening (overall curvature reduction) does not correlate with detection performance; the key is the relative straightening—natural videos become significantly straighter while synthetic ones remain curved.

ReStraV achieves state‑of‑the‑art results: on VidProM it reaches 97.17 % accuracy and 98.63 % AUROC, surpassing prior image‑based detectors (CNNSpot, Gram‑Net, etc.) and video‑based models (TimeSformer, DeMamba, SlowFast). The entire pipeline processes a video in roughly 48 ms on a single GPU, making it suitable for real‑time or large‑scale monitoring.

The paper discusses limitations: the method is tuned to short 2‑second clips with 24 frames, and performance on longer, more complex videos remains to be explored. Moreover, DINOv2 is an image‑focused model; integrating video‑specific self‑supervised architectures could further improve robustness. Nonetheless, ReStraV demonstrates that geometric analysis of neural representation trajectories provides an interpretable, efficient, and highly effective signal for AI‑generated video detection, opening new avenues for media authentication and deep‑fake mitigation.

Comments & Academic Discussion

Loading comments...

Leave a Comment