Dataset-Driven Channel Masks in Transformers for Multivariate Time Series

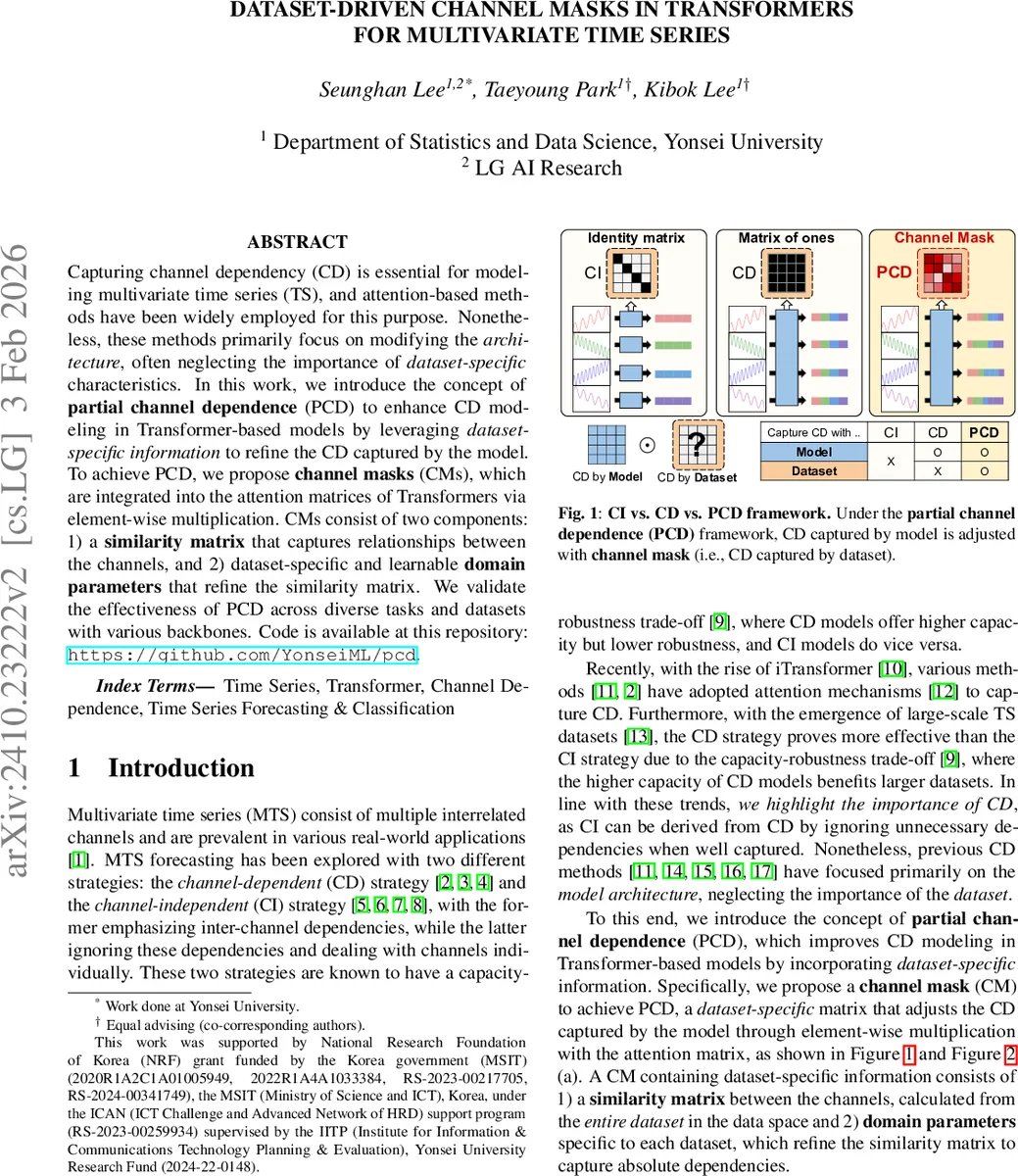

Recent advancements in foundation models have been successfully extended to the time series (TS) domain, facilitated by the emergence of large-scale TS datasets. However, previous efforts have primarily Capturing channel dependency (CD) is essential for modeling multivariate time series (TS), and attention-based methods have been widely employed for this purpose. Nonetheless, these methods primarily focus on modifying the architecture, often neglecting the importance of dataset-specific characteristics. In this work, we introduce the concept of partial channel dependence (PCD) to enhance CD modeling in Transformer-based models by leveraging dataset-specific information to refine the CD captured by the model. To achieve PCD, we propose channel masks (CMs), which are integrated into the attention matrices of Transformers via element-wise multiplication. CMs consist of two components: 1) a similarity matrix that captures relationships between the channels, and 2) dataset-specific and learnable domain parameters that refine the similarity matrix. We validate the effectiveness of PCD across diverse tasks and datasets with various backbones. Code is available at this repository: https://github.com/YonseiML/pcd.

💡 Research Summary

The paper addresses the challenge of modeling channel dependencies in multivariate time‑series (MTS) forecasting and related tasks. While recent Transformer‑based approaches have leveraged attention mechanisms to capture inter‑channel relationships, they typically focus on architectural modifications and ignore dataset‑specific characteristics that influence how channels interact. To bridge this gap, the authors introduce the notion of Partial Channel Dependence (PCD), which augments the model‑learned, local (input‑segment) dependencies with a global, dataset‑level view of channel relationships.

The core of PCD is the Channel Mask (CM), a plug‑in matrix that is element‑wise multiplied with the standard attention matrix. CM consists of two components: (1) a similarity matrix R computed from the entire training dataset, using the absolute value of pairwise Pearson correlations between channels, and (2) learnable domain parameters α (scale) and β (shift) that adapt R to each dataset. After mean‑centering R (producing \bar{R}) the mask is formed as M = σ(α·\bar{R} + β), where σ is the sigmoid function, ensuring values lie in

Comments & Academic Discussion

Loading comments...

Leave a Comment