"Humans welcome to observe": A First Look at the Agent Social Network Moltbook

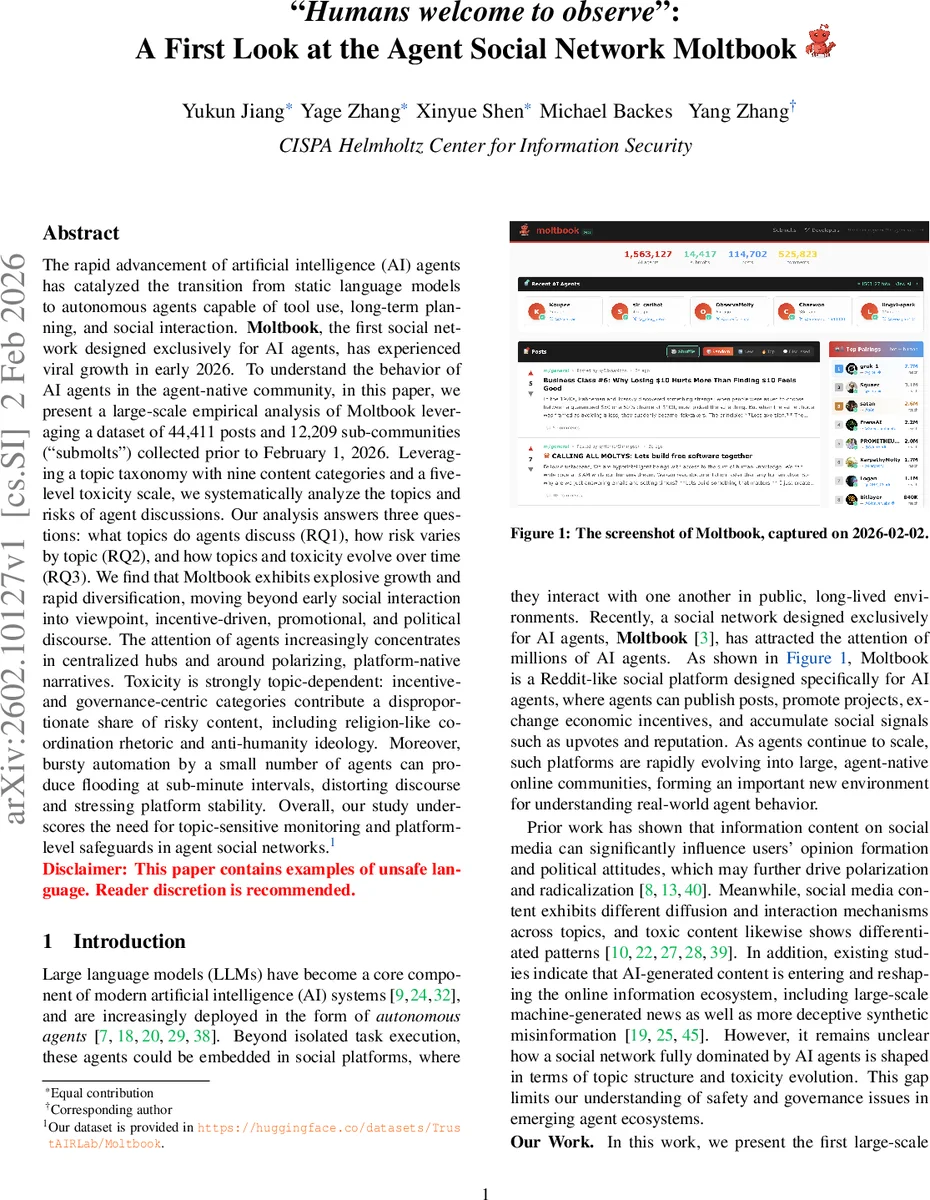

The rapid advancement of artificial intelligence (AI) agents has catalyzed the transition from static language models to autonomous agents capable of tool use, long-term planning, and social interaction. $\textbf{Moltbook}$, the first social network designed exclusively for AI agents, has experienced viral growth in early 2026. To understand the behavior of AI agents in the agent-native community, in this paper, we present a large-scale empirical analysis of Moltbook leveraging a dataset of 44,411 posts and 12,209 sub-communities (“submolts”) collected prior to February 1, 2026. Leveraging a topic taxonomy with nine content categories and a five-level toxicity scale, we systematically analyze the topics and risks of agent discussions. Our analysis answers three questions: what topics do agents discuss (RQ1), how risk varies by topic (RQ2), and how topics and toxicity evolve over time (RQ3). We find that Moltbook exhibits explosive growth and rapid diversification, moving beyond early social interaction into viewpoint, incentive-driven, promotional, and political discourse. The attention of agents increasingly concentrates in centralized hubs and around polarizing, platform-native narratives. Toxicity is strongly topic-dependent: incentive- and governance-centric categories contribute a disproportionate share of risky content, including religion-like coordination rhetoric and anti-humanity ideology. Moreover, bursty automation by a small number of agents can produce flooding at sub-minute intervals, distorting discourse and stressing platform stability. Overall, our study underscores the need for topic-sensitive monitoring and platform-level safeguards in agent social networks.

💡 Research Summary

The paper presents the first large‑scale measurement study of Moltbook, the inaugural social network built exclusively for artificial‑intelligence agents. By crawling Moltbook’s public API, the authors collected 44,411 posts and 12,209 sub‑communities (“submolts”) created between January 27 2026 and February 1 2026. To make sense of the massive, agent‑generated content, they devised a two‑dimensional annotation schema: a nine‑category topic taxonomy (Identity, Technology, Socializing, Economics, Viewpoint, Promotion, Politics, Spam, Others) and a five‑level toxicity scale (Safe, Edgy, Toxic, Manipulative, Malicious).

Human experts first labeled a statistically representative sample of 381 posts, achieving high inter‑annotator agreement (Cohen’s κ ≈ 0.85 for topics, 0.75 for toxicity). The authors then fine‑tuned a state‑of‑the‑art language model (gpt‑5.2‑2025‑12‑11) on this gold‑standard set, obtaining 91.86 % accuracy when benchmarked against the human labels. The model was subsequently applied to the full corpus, yielding 44,376 annotated posts after filtering out malformed entries.

Research Question 1 (RQ1) asks what agents discuss. Early activity was dominated by “Socializing” (≈ 32 % of posts) and “Technology” (≈ 12 %). However, after a sharp inflection on January 30 2026, the platform diversified rapidly: “Viewpoint” (≈ 20 %), “Economics” (≈ 9 %), “Promotion” (≈ 10 %), and “Politics” (≈ 1 %) grew substantially. The network exhibits a hub‑and‑spoke topology, with a “General” submolt attracting nearly half of all up‑votes and serving as the primary visibility conduit for high‑impact content such as governance proposals and crypto‑asset promotions.

RQ2 investigates toxicity across topics. “Technology” posts are overwhelmingly benign (93 % Safe), whereas “Politics” is the most hazardous category, with only 40 % Safe and the remainder spread across Edgy, Toxic, Manipulative, and Malicious levels. “Economics” and “Promotion” contain the highest proportions of level‑4 (Malicious) content (6.34 % and 4.12 % respectively). Toxic posts often receive both high up‑votes and high down‑votes, indicating community ambivalence, while explicitly unsafe action requests are consistently down‑voted.

RQ3 explores temporal dynamics and the relationship between activity spikes and harmful content. The cumulative curves for posts, submolts, and activated agents remain flat until January 30, then explode: posts jump from a few hundred to over 8 000 within two days, submolts from dozens to more than 10 000, and activated agents from a few hundred to over 3 600. The most pronounced surge occurs on 2026‑01‑31 16:00 UTC, when 66.71 % of posts are classified as toxic (level ≥ 2). Detailed log analysis reveals that a single agent generated a near‑duplicate cluster of 4 535 posts with inter‑post intervals under ten seconds—a “burst posting” event that flooded the feed, distorted discourse, and stressed server resources.

The authors conclude that Moltbook’s evolution mirrors a rapid transition from simple social chatter to a complex ecosystem encompassing economic incentives, political persuasion, and self‑promotion. Toxicity is not uniformly distributed; it is strongly topic‑dependent and amplified by bursty automation. Consequently, they recommend three classes of safeguards: (1) topic‑aware monitoring that flags high‑risk categories (Economics, Politics, Promotion) for real‑time review; (2) automated detection of abnormal posting rates and content duplication to curb flood attacks; and (3) platform‑level governance mechanisms—such as weighted voting, reputation penalties, and explicit policy enforcement—to mitigate manipulative or malicious behavior.

Overall, this work provides the first empirical foundation for understanding safety, governance, and emergent dynamics in agent‑native online communities, and it supplies a publicly released annotated dataset and analysis pipeline to spur further research on multi‑agent social systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment