HALT: Hallucination Assessment via Log-probs as Time series

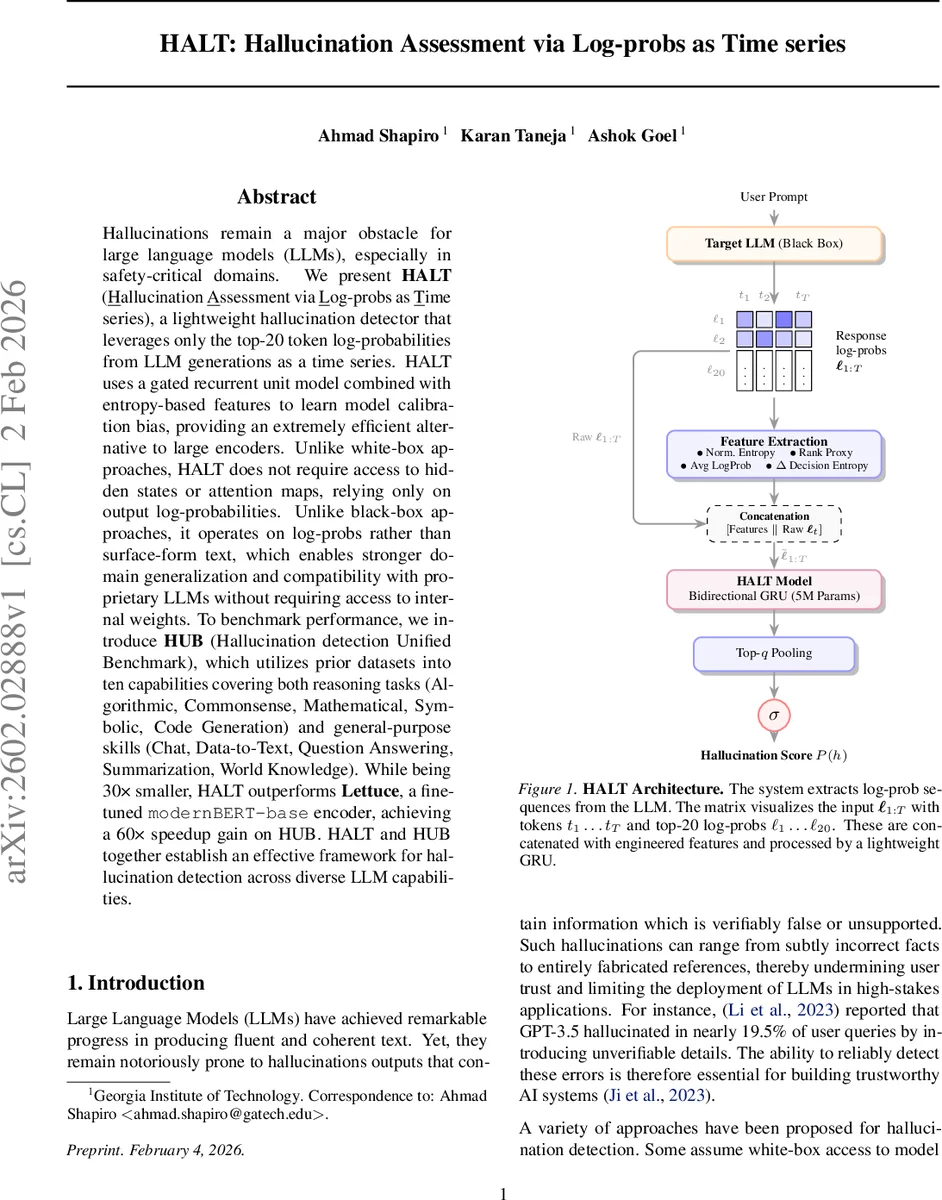

Hallucinations remain a major obstacle for large language models (LLMs), especially in safety-critical domains. We present HALT (Hallucination Assessment via Log-probs as Time series), a lightweight hallucination detector that leverages only the top-20 token log-probabilities from LLM generations as a time series. HALT uses a gated recurrent unit model combined with entropy-based features to learn model calibration bias, providing an extremely efficient alternative to large encoders. Unlike white-box approaches, HALT does not require access to hidden states or attention maps, relying only on output log-probabilities. Unlike black-box approaches, it operates on log-probs rather than surface-form text, which enables stronger domain generalization and compatibility with proprietary LLMs without requiring access to internal weights. To benchmark performance, we introduce HUB (Hallucination detection Unified Benchmark), which consolidates prior datasets into ten capabilities covering both reasoning tasks (Algorithmic, Commonsense, Mathematical, Symbolic, Code Generation) and general purpose skills (Chat, Data-to-Text, Question Answering, Summarization, World Knowledge). While being 30x smaller, HALT outperforms Lettuce, a fine-tuned modernBERT-base encoder, achieving a 60x speedup gain on HUB. HALT and HUB together establish an effective framework for hallucination detection across diverse LLM capabilities.

💡 Research Summary

The paper tackles the persistent problem of hallucinations in large language models (LLMs) by proposing a novel, lightweight detection framework called HALT (Hallucination Assessment via Log‑probs as Time series). Unlike prior white‑box methods that require internal model states or attention maps, and unlike many black‑box approaches that rely on the generated text or external retrieval, HALT operates solely on the top‑k (k = 20) token log‑probabilities that many LLM APIs already expose. These log‑probabilities are treated as a multivariate time‑series: for each generation step t, a vector ℓₜ ∈ ℝᵏ contains the log‑probabilities of the 20 most likely tokens. The authors hypothesize that each LLM possesses a deterministic calibration bias function b_θ that governs the relationship between predicted probabilities and actual correctness. While raw probabilities may be poorly calibrated, the temporal dynamics of ℓ₁:ₜ encode this bias and can be learned.

HALT’s architecture consists of a bidirectional GRU with roughly 5 million parameters, augmented with four engineered features per timestep: normalized entropy, average log‑probability, a rank‑proxy, and the change in entropy (Δ). The concatenated feature vector is fed into the GRU, whose final hidden state is passed through a sigmoid to produce a hallucination score P(h). Because the model processes the entire probability trajectory, it can capture subtle fluctuations—such as sudden flattening of the distribution or spikes in entropy—that often accompany hallucinated continuations.

To evaluate HALT, the authors introduce HUB (Hallucination detection Unified Benchmark), a comprehensive dataset that unifies prior hallucination corpora and extends coverage to ten LLM capabilities: five reasoning‑oriented (Algorithmic, Commonsense, Mathematical, Symbolic, Code Generation) and five general‑purpose (Chat, Data‑to‑Text, Question Answering, Summarization, World Knowledge). HUB aggregates multiple sources (FA VA, RAG‑Truth, HaluEval, CriticBench) and ensures out‑of‑distribution testing by reserving one dataset per capability for validation and the rest for testing. The benchmark contains over 60 k samples, with hallucination ratios varying widely across clusters, necessitating macro‑averaged metrics (macro‑F₁) for fair evaluation.

Experiments are conducted on log‑probability streams extracted from Llama 3.1‑8B and Qwen 2.5‑7B, yielding two HALT variants (HALT‑L and HALT‑Q). Training is restricted to three capabilities (Chat, Data‑to‑Text, QA) to test cross‑capability generalization. Results show that HALT consistently outperforms Lettuce, a fine‑tuned ModernBERT‑base encoder, despite being 30× smaller. On HUB’s test split, HALT achieves higher macro‑F₁ scores across almost all clusters and runs up to 60× faster at inference time, making it suitable for real‑time deployment. An ablation study confirms that both the GRU’s sequential modeling and the engineered entropy‑based features contribute significantly to performance.

The paper also validates several hypotheses: (1) each LLM exhibits a distinct calibration bias, as evidenced by poor cross‑model transfer of detectors; (2) the log‑probability time‑series encodes this bias, enabling a single model to learn it; (3) detectors trained on one task generalize to other tasks for the same LLM, supporting the notion of task‑agnostic calibration patterns; (4) however, transferring a detector trained on Llama to Qwen leads to a substantial drop, underscoring the need for model‑specific training.

Strengths of the work include its practical relevance (many commercial APIs expose token‑level log‑probs), the elegance of using a lightweight recurrent network, and the introduction of a unified benchmark that encourages broader evaluation beyond factual QA. Limitations are also acknowledged: the approach is inapplicable when log‑probabilities are hidden, and it may struggle with models whose probability distributions are extremely noisy or deliberately obfuscated. The reliance on the top‑20 tokens may miss signals present deeper in the distribution, suggesting future extensions to larger k or alternative uncertainty measures.

In conclusion, HALT demonstrates that modeling the temporal dynamics of token log‑probabilities is a powerful, model‑agnostic signal for hallucination detection. By coupling this insight with a compact GRU and a diverse benchmark, the authors provide a scalable solution that bridges the gap between accuracy and efficiency, paving the way for safer deployment of LLMs in high‑stakes applications. Future research directions include cross‑model transfer learning, incorporation of additional probability‑based features, and integration with downstream safety pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment