"I May Not Have Articulated Myself Clearly": Diagnosing Dynamic Instability in LLM Reasoning at Inference Time

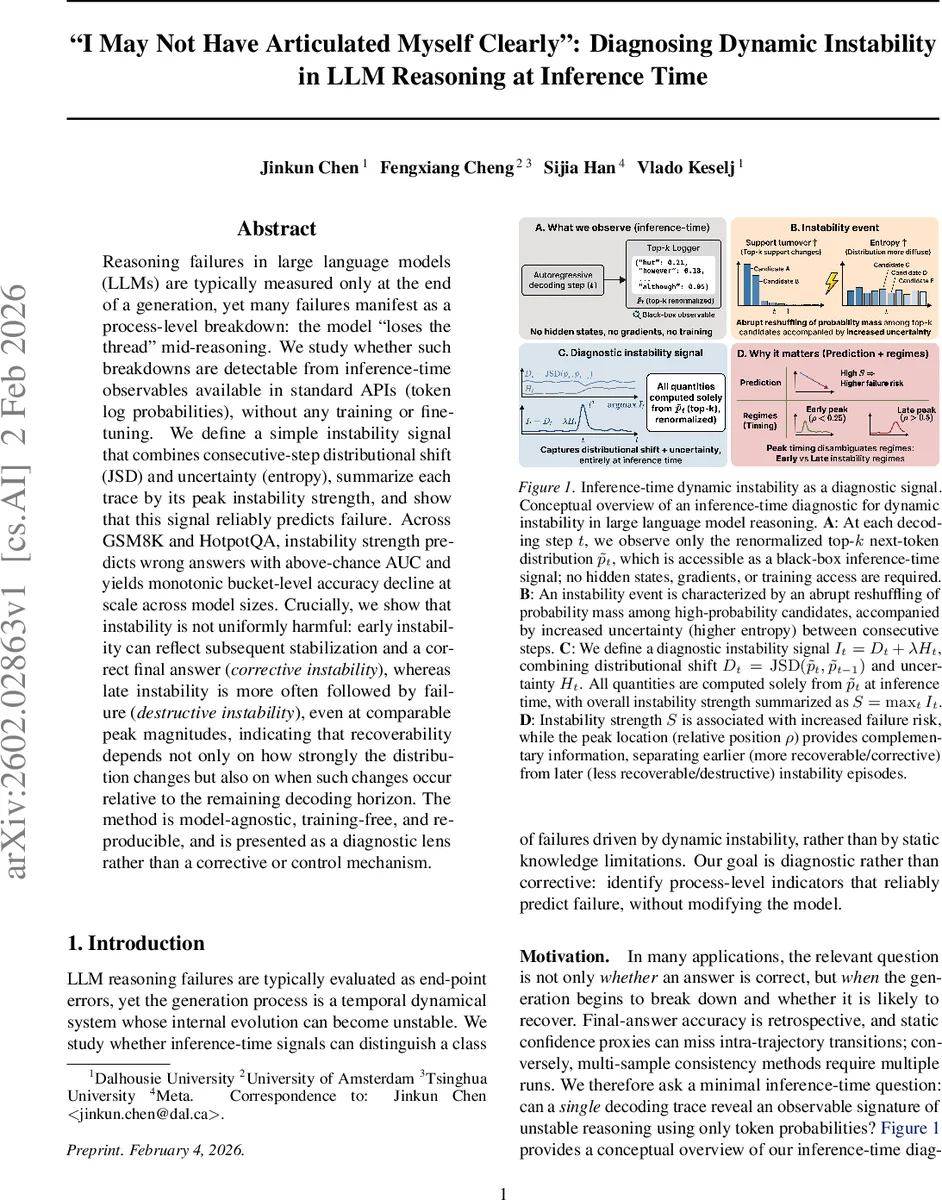

Reasoning failures in large language models (LLMs) are typically measured only at the end of a generation, yet many failures manifest as a process-level breakdown: the model “loses the thread” mid-reasoning. We study whether such breakdowns are detectable from inference-time observables available in standard APIs (token log probabilities), without any training or fine-tuning. We define a simple instability signal that combines consecutive-step distributional shift (JSD) and uncertainty (entropy), summarize each trace by its peak instability strength, and show that this signal reliably predicts failure. Across GSM8K and HotpotQA, instability strength predicts wrong answers with above-chance AUC and yields monotonic bucket-level accuracy decline at scale across model sizes. Crucially, we show that instability is not uniformly harmful: early instability can reflect subsequent stabilization and a correct final answer (\emph{corrective instability}), whereas late instability is more often followed by failure (\emph{destructive instability}), even at comparable peak magnitudes, indicating that recoverability depends not only on how strongly the distribution changes but also on when such changes occur relative to the remaining decoding horizon. The method is model-agnostic, training-free, and reproducible, and is presented as a diagnostic lens rather than a corrective or control mechanism.

💡 Research Summary

The paper tackles a largely overlooked aspect of large language model (LLM) failures: the moment during a generation when the model’s internal reasoning becomes unstable, causing it to “lose the thread.” While most prior work evaluates LLMs only by their final answer, the authors ask whether a single inference‑time trace—available through standard APIs that expose token log‑probabilities—contains a detectable signature of such dynamic instability, without any model fine‑tuning or additional supervision.

Core Idea

At each decoding step t the model emits a probability distribution over the vocabulary. The authors restrict themselves to the top‑k logits that are typically returned by black‑box APIs, renormalize them to a distribution (\tilde p_t), and compute two quantities:

- Distributional shift (D_t) = Jensen‑Shannon Divergence between (\tilde p_t) and (\tilde p_{t-1}). This captures an abrupt reshuffling of probability mass between consecutive steps.

- Uncertainty (H_t) = entropy of (\tilde p_t). Higher entropy indicates the model is less confident about the next token.

These are combined linearly as an “instability signal” (I_t = D_t + \lambda H_t) with a fixed weight (\lambda = 1). For a full generation trace the authors define the instability strength (S = \max_t I_t) (the peak of the signal) and also record the relative position (\rho = t^*/T) where the peak occurs (with T the total number of generated tokens). A windowed version (S_{50} = \max_{t\le 50} I_t) is used to control for trace‑length bias.

Experimental Setup

The method is evaluated on two reasoning benchmarks: GSM8K (grade‑school math) and HotpotQA (multi‑hop question answering). Models from the Llama and Qwen families are tested across six scales (0.5 B to 8 B parameters) and under both deterministic (temperature 0) and stochastic (temperature 0.7) decoding. No hidden states, gradients, or multiple samples are required—only the top‑k log‑probabilities per step.

Key Findings

-

Predictive Power of Instability Strength – Across all settings, higher (S) correlates with a higher probability of a wrong answer. The area under the ROC curve (AUC) for predicting failure from a single trace ranges from 0.66 to 0.74, demonstrating that a simple, training‑free signal can reliably flag problematic generations.

-

Timing Matters More Than Magnitude – Traces with comparable peak values of (I_t) can have opposite outcomes depending on when the peak occurs. Early peaks (small (\rho)) often precede a period of re‑stabilization and lead to correct final answers; the authors term this corrective instability. Late peaks (large (\rho)) leave little decoding horizon for recovery and are usually followed by incorrect answers, dubbed destructive instability. This dichotomy holds even when the peak magnitudes are matched, indicating that the temporal context of the shift is crucial.

-

Robustness Across Models and Decoding – The relationship between instability and failure persists across model families, sizes, and both deterministic and sampling decoding. Larger models tend to exhibit slightly lower failure rates for a given instability strength, but the trend remains monotonic.

-

Ablation on λ – Setting (\lambda = 0) (using only JSD) reduces predictive performance, confirming that entropy adds complementary information. The fixed λ = 1 works well without any hyper‑parameter tuning, reinforcing the method’s simplicity.

-

Computational Efficiency – Computing (I_t) requires O(T · k) operations per trace (k is the top‑k size) and only O(k) memory, making it streamable and practical for real‑time monitoring of deployed LLM services.

Position Relative to Prior Work

The authors distinguish their approach from confidence calibration, self‑consistency, and verifier‑style methods, which either need multiple sampled traces, hidden‑state access, or additional training. Their instability signal is purely intra‑trajectory, focusing on how the token distribution evolves rather than how certain the model is about the final answer. It also differs from static uncertainty measures that average entropy over a whole trace; the signal captures localized regime shifts that static metrics would miss.

Limitations and Future Directions

The paper is diagnostic only; it does not propose mechanisms to intervene once instability is detected. Potential extensions include:

- Using the signal to trigger dynamic decoding strategies (e.g., switching to higher‑temperature sampling, re‑prompting, or beam search) when a destructive peak is imminent.

- Combining the token‑level instability with higher‑level semantic entropy or hidden‑state probes for richer diagnostics.

- Training lightweight controllers that, given the instability trajectory, decide whether to continue generation or request external verification.

Conclusion

By defining a lightweight, inference‑time instability signal based on consecutive‑step Jensen‑Shannon divergence and entropy, the authors provide a practical tool for monitoring LLM reasoning dynamics. The signal’s peak magnitude predicts failure, while its timing separates corrective from destructive instability, offering nuanced insight into when a model is likely to self‑correct versus when it is about to diverge irreversibly. This work opens a new avenue for real‑time reliability monitoring of LLMs and suggests promising paths toward dynamic, self‑aware generation control.

Comments & Academic Discussion

Loading comments...

Leave a Comment