Smell with Genji: Rediscovering Human Perception through an Olfactory Game with AI

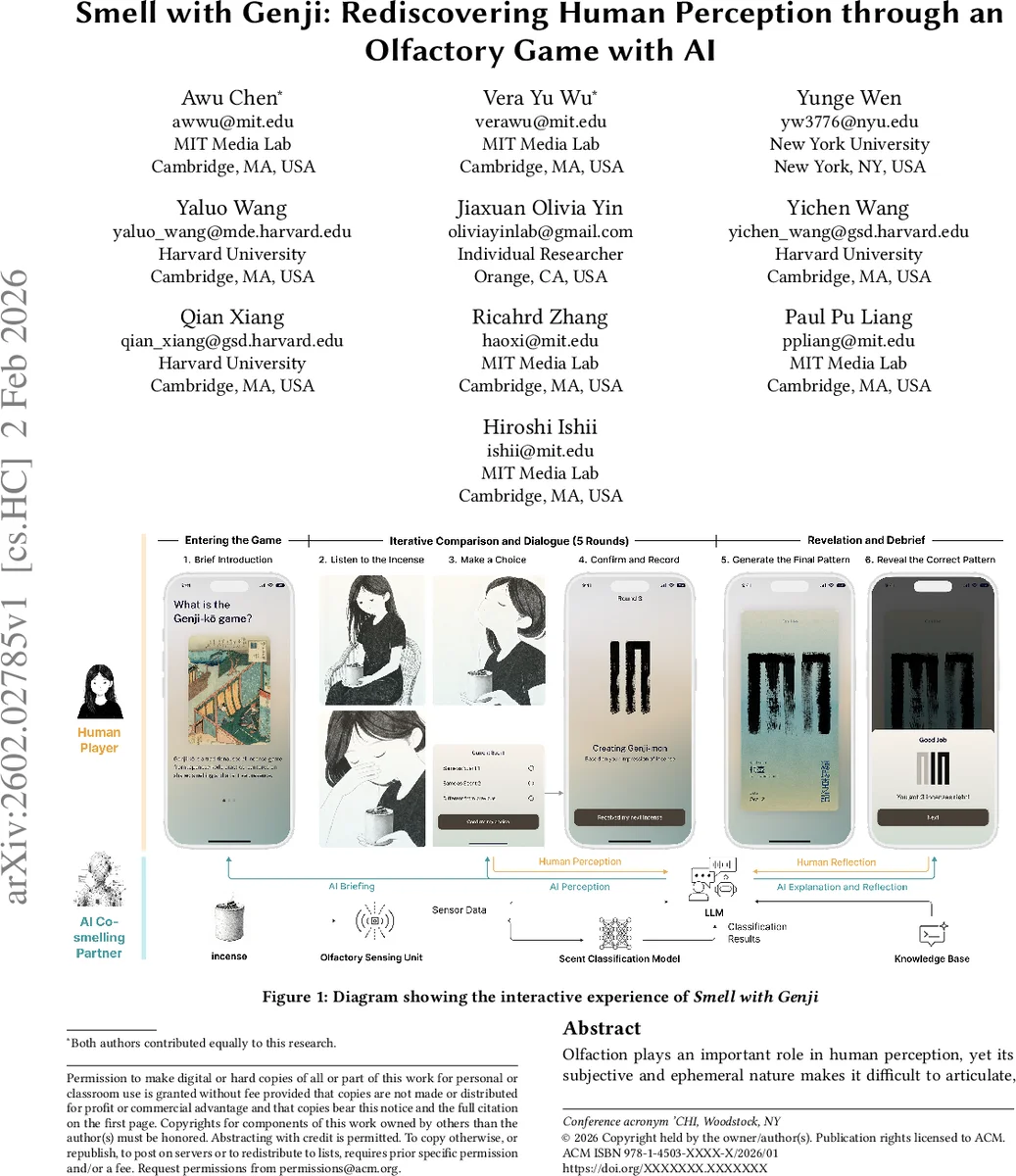

Olfaction plays an important role in human perception, yet its subjective and ephemeral nature makes it difficult to articulate, compare, and share across individuals. Traditional practices like the Japanese incense game Genji-ko offer one way to structure olfactory experience through shared interpretation. In this work, we present Smell with Genji, an AI-mediated olfactory interaction system that reinterprets Genji-ko as a collaborative human-AI sensory experience. By integrating a game setup, a mobile application, and an AI co-smelling partner equipped with olfactory sensing and LLM-based conversation, the system invites participants to compare scents and construct Genji-mon patterns, fostering reflection through a dialogue that highlights the alignments and discrepancies between human and machine perception. This work illustrates how sensing-enabled AI can participate in olfactory experience alongside users, pointing toward new possibilities for AI-supported sensory interaction and reflection in HCI.

💡 Research Summary

The paper introduces “Smell with Genji,” an AI‑mediated olfactory interaction that reimagines the traditional Japanese incense game Genji‑ko as a collaborative human‑AI sensory experience. Recognizing that smell is notoriously difficult to verbalize, the authors leverage the comparative structure of Genji‑ko—where participants compare sequences of five incense scents and encode similarities in a visual “Genji‑mon” diagram—to scaffold users’ attention to subtle olfactory differences.
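To make the comparative structure concrete, here is a minimal sketch of one way a Genji‑mon pattern could be encoded: as a list of group labels, one per scent, numbered in order of first appearance. The function name `genji_patterns` and the label encoding are illustrative assumptions, not taken from the paper. Enumerating every grouping of five scents gives the 52 patterns of traditional Genji‑ko.

```python
from typing import Iterator, List


def genji_patterns(n: int = 5) -> Iterator[List[int]]:
    """Yield every way to group n scents, as a list of group labels.

    labels[i] is the group of the (i+1)-th scent. Groups are numbered in
    order of first appearance, so [0, 1, 0, 2, 1] means scents 1 and 3
    match, scents 2 and 5 match, and scent 4 stands alone.
    """
    def extend(prefix: List[int]) -> Iterator[List[int]]:
        if len(prefix) == n:
            yield list(prefix)
            return
        # A new scent may join any existing group or open exactly one new group.
        for label in range(max(prefix, default=-1) + 2):
            yield from extend(prefix + [label])

    yield from extend([])


patterns = list(genji_patterns())
print(len(patterns))  # 52 groupings of five scents
```

Numbering groups by first appearance gives each grouping exactly one encoding, which makes two patterns directly comparable with `==`.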

The system comprises three tightly integrated components.

First, a mobile application (built with React and WebSockets) orchestrates the game flow, records participants’ comparative judgments, and incrementally renders the Genji‑mon pattern. Participants scan a QR code that links the physical incense set to a predefined correct pattern, ensuring that the digital interface stays synchronized with the physical ritual.

Second, a 3‑D‑printed enclosure houses the AI co‑smelling partner. Inside, three metal‑oxide semiconductor sensors (BME680 for temperature/humidity/pressure, SGP30 for TVOC/eCO₂, and a multichannel gas sensor for VOCs, NO₂, ethanol, CO) capture nine channels of environmental data at 10 Hz while a sealed bag containing 10 g of incense sits 20 mm away. Data were collected over five‑minute intervals across varied indoor/outdoor and diurnal conditions, yielding 405 000 data points.

Third, a processing pipeline combines a temporal Transformer encoder with an MLP head to classify the five incense types. Because the VOC signatures of chemically similar incenses overlap, the model achieves only ~40 % accuracy under controlled conditions, compared with the 20 % expected from uniform random guessing among five classes. Rather than treating the AI as a competitor, the design deliberately positions it as a “smell partner,” allowing the inherent uncertainty to become a learning opportunity for participants.
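The sensing figures above can be sanity-checked with a little arithmetic, and the same numbers show how a recording might be cut into fixed-length windows before reaching a temporal encoder. This is a sketch under stated assumptions: the summary does not say whether a "data point" is a single channel value or a full nine-channel timestep, so both readings are computed, and the window length and stride are hypothetical values chosen only for illustration.

```python
from typing import List

SAMPLE_RATE_HZ = 10      # from the summary
INTERVAL_S = 5 * 60      # one five-minute recording interval
N_CHANNELS = 9           # nine sensor channels
TOTAL_POINTS = 405_000   # reported dataset size

timesteps_per_interval = SAMPLE_RATE_HZ * INTERVAL_S        # 3000 timesteps
values_per_interval = timesteps_per_interval * N_CHANNELS   # 27000 channel values

# Reading 1 (assumption): a "data point" is a single channel value.
intervals_if_values = TOTAL_POINTS / values_per_interval
# Reading 2 (assumption): a "data point" is one nine-channel timestep.
intervals_if_timesteps = TOTAL_POINTS / timesteps_per_interval


def sliding_windows(stream: List[List[float]], length: int, stride: int) -> List[List[List[float]]]:
    """Cut a (timesteps x channels) stream into overlapping windows."""
    last_start = len(stream) - length
    return [stream[s:s + length] for s in range(0, last_start + 1, stride)]


# Placeholder recording: 3000 timesteps of 9 zero-valued channels.
stream = [[0.0] * N_CHANNELS for _ in range(timesteps_per_interval)]
# Hypothetical window: 10 s of data (100 timesteps) with 50 % overlap.
windows = sliding_windows(stream, length=100, stride=50)

print(intervals_if_values, intervals_if_timesteps, len(windows))
```

Under the first reading the dataset corresponds to 15 five-minute recordings, under the second to 135; the summary does not settle which is intended.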

On top of the sensor‑driven classification, a large language model (LLM) provides conversational feedback. Two Retrieval‑Augmented Generation (RAG) databases support context‑aware dialogue: a static repository holds incense knowledge, olfactory AI principles, and dialogue templates; a dynamic repository logs session‑specific sensor traces, classification outputs, and interaction history. Guided by a system prompt and a custom persona, the LLM translates sensor trends into natural‑language descriptions, asks reflective questions, and compares its own judgments with the user’s. Text‑to‑speech synthesis delivers these prompts in a consistent voice, creating a seamless turn‑based dialogue.
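The split between a static and a dynamic repository can be illustrated with a toy prompt builder. Everything here is a hypothetical stand-in: `KnowledgeStore`, `build_prompt`, the word-overlap ranking, and the example entries are placeholders, and a real system would presumably use an embedding-based retriever and the authors' own persona text.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class KnowledgeStore:
    """Toy stand-in for a retrieval index: ranks entries by shared words."""
    entries: List[str] = field(default_factory=list)

    def add(self, text: str) -> None:
        self.entries.append(text)

    def retrieve(self, query: str, k: int = 2) -> List[str]:
        query_words = set(query.lower().split())
        scored = [(len(query_words & set(e.lower().split())), e) for e in self.entries]
        # sorted() is stable, so ties keep insertion order.
        ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
        return [text for score, text in ranked[:k] if score > 0]


def build_prompt(persona: str, static: KnowledgeStore, dynamic: KnowledgeStore, user_turn: str) -> str:
    """Combine persona, retrieved context from both stores, and the user turn."""
    context = static.retrieve(user_turn) + dynamic.retrieve(user_turn)
    lines = [persona, "Context:"] + [f"- {c}" for c in context] + [f"User: {user_turn}"]
    return "\n".join(lines)


# Static store: background knowledge fixed before the session (placeholder text).
static = KnowledgeStore()
static.add("incense A note: warm and woody")
static.add("dialogue template: ask what the scent reminds the user of")

# Dynamic store: appended to as the session progresses (placeholder text).
dynamic = KnowledgeStore()
dynamic.add("round 1 classifier guess: incense A")
dynamic.add("round 2 classifier guess: incense B")

persona = "You are a curious co-smelling partner."
prompt = build_prompt(persona, static, dynamic, "is round 2 the same incense as round 1")
print(prompt)
```

Keeping the two stores separate means session logs can be cleared between games without touching the background knowledge.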

Gameplay unfolds over five rounds. The first round serves as a baseline “listening” phase with no judgment required. From round two onward, participants decide whether the current scent matches any previously encountered scent. After each decision, the AI co‑smeller engages the user in a brief dialogue, offering observations such as “this scent feels similar to the second one but has a faint citrus note.” Users may revise their judgment after hearing the AI’s perspective. Each confirmed decision updates the Genji‑mon diagram in real time: matching scents are linked at the top, while distinct scents remain separate, so the traditional pattern takes shape as the game proceeds. The iterative loop of smelling → judging → dialogue → visualization encourages participants to compare the current scent against multiple prior samples, exercising memory, attention, and perceptual adjustment.
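The round-by-round update described above can be sketched as a small state transition. The names `apply_judgment` and `groups` are hypothetical, and the sketch assumes that only the confirmed judgment (after any revision prompted by the dialogue) is applied to the pattern.

```python
from typing import Dict, List, Optional


def apply_judgment(labels: List[int], match_round: Optional[int]) -> List[int]:
    """Return the pattern extended by one round.

    match_round is the 1-based earlier round the new scent is judged to
    match, or None if the participant judges it to be a new scent.
    """
    if match_round is None:
        new_label = max(labels, default=-1) + 1
    else:
        if not 1 <= match_round <= len(labels):
            raise ValueError("match_round must refer to an earlier round")
        new_label = labels[match_round - 1]
    return labels + [new_label]


def groups(labels: List[int]) -> List[List[int]]:
    """Rounds (1-based) that share a label, i.e. the bars linked at the top."""
    by_label: Dict[int, List[int]] = {}
    for round_no, label in enumerate(labels, start=1):
        by_label.setdefault(label, []).append(round_no)
    return list(by_label.values())


# Round 1 is the baseline: the first scent simply opens group 0.
labels = [0]
# Confirmed judgments for rounds 2-5 (illustrative values):
# new scent, matches round 1, matches round 2, new scent.
for confirmed in [None, 1, 2, None]:
    labels = apply_judgment(labels, confirmed)

print(labels)          # [0, 1, 0, 1, 2]
print(groups(labels))  # [[1, 3], [2, 4], [5]]
```

Because `apply_judgment` returns a new list instead of mutating its input, an interface could recompute the diagram from any earlier state if a participant revises a decision.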

At the conclusion, the system reveals the participant’s final Genji‑mon pattern alongside the correct pattern and provides a physical bookmark as a tangible artifact. A debriefing phase lets the AI explain how temporal sensor dynamics informed its judgments, highlighting moments of alignment and divergence between human intuition and machine inference. This reflective comparison is intended to deepen participants’ awareness of their own sensory heuristics and to illustrate how AI can augment, rather than replace, human perception.
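One way to surface the "alignment and divergence" mentioned above is to compare two patterns pair by pair. The sketch below is an assumption about how such a comparison could be computed, not the authors' method. It uses the Rand index, the fraction of scent pairs on which two groupings agree, and the example patterns are invented for illustration.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple

Pair = Tuple[int, int]


def same_pairs(labels: List[int]) -> Set[Pair]:
    """Pairs of 1-based rounds that a pattern places in the same group."""
    return {
        (i + 1, j + 1)
        for i, j in combinations(range(len(labels)), 2)
        if labels[i] == labels[j]
    }


def compare(first: List[int], second: List[int]) -> Dict[str, List[Pair]]:
    """List the matches both patterns share and those unique to each."""
    a, b = same_pairs(first), same_pairs(second)
    return {
        "both": sorted(a & b),
        "only_first": sorted(a - b),
        "only_second": sorted(b - a),
    }


def rand_index(first: List[int], second: List[int]) -> float:
    """Fraction of round pairs on which the two patterns agree."""
    pairs = list(combinations(range(len(first)), 2))
    agree = sum((first[i] == first[j]) == (second[i] == second[j]) for i, j in pairs)
    return agree / len(pairs)


human = [0, 1, 0, 1, 2]  # illustrative participant pattern
ai = [0, 1, 0, 2, 2]     # illustrative AI pattern

report = compare(human, ai)
print(report)
print(rand_index(human, ai))  # 0.8
```

In this invented example, both parties link rounds 1 and 3, only the human links rounds 2 and 4, and only the AI links rounds 4 and 5, which is the kind of divergence a debriefing dialogue could pick up on.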

The authors claim three primary contributions: (1) an AI‑mediated extension of a culturally grounded olfactory game that preserves its social dynamics; (2) a multimodal pipeline that fuses real‑time olfactory sensing, a temporal classification model, and LLM‑driven dialogue to enable a co‑smelling partner; and (3) an empirical platform that surfaces human‑machine perceptual discrepancies as a catalyst for reflective sensory learning.

The future work the authors outline includes improving sensor placement and incorporating temperature modeling to boost classification fidelity, experimenting with varied LLM personas and dialogue pacing to assess effects on sensory awareness, and conducting longitudinal user studies to measure impacts on olfactory vocabulary, mood, and well‑being. The authors also envision expanding the AI’s role beyond a conversational partner to functions such as scent synthesis, creative storytelling, or therapeutic interventions.

Overall, “Smell with Genji” demonstrates a novel approach to integrating multimodal AI into embodied, culturally rich HCI experiences, offering a concrete example of how sensing‑enabled AI can participate in and enrich human sensory perception.
