BiTimeCrossNet: Time-Aware Self-Supervised Learning for Pediatric Sleep

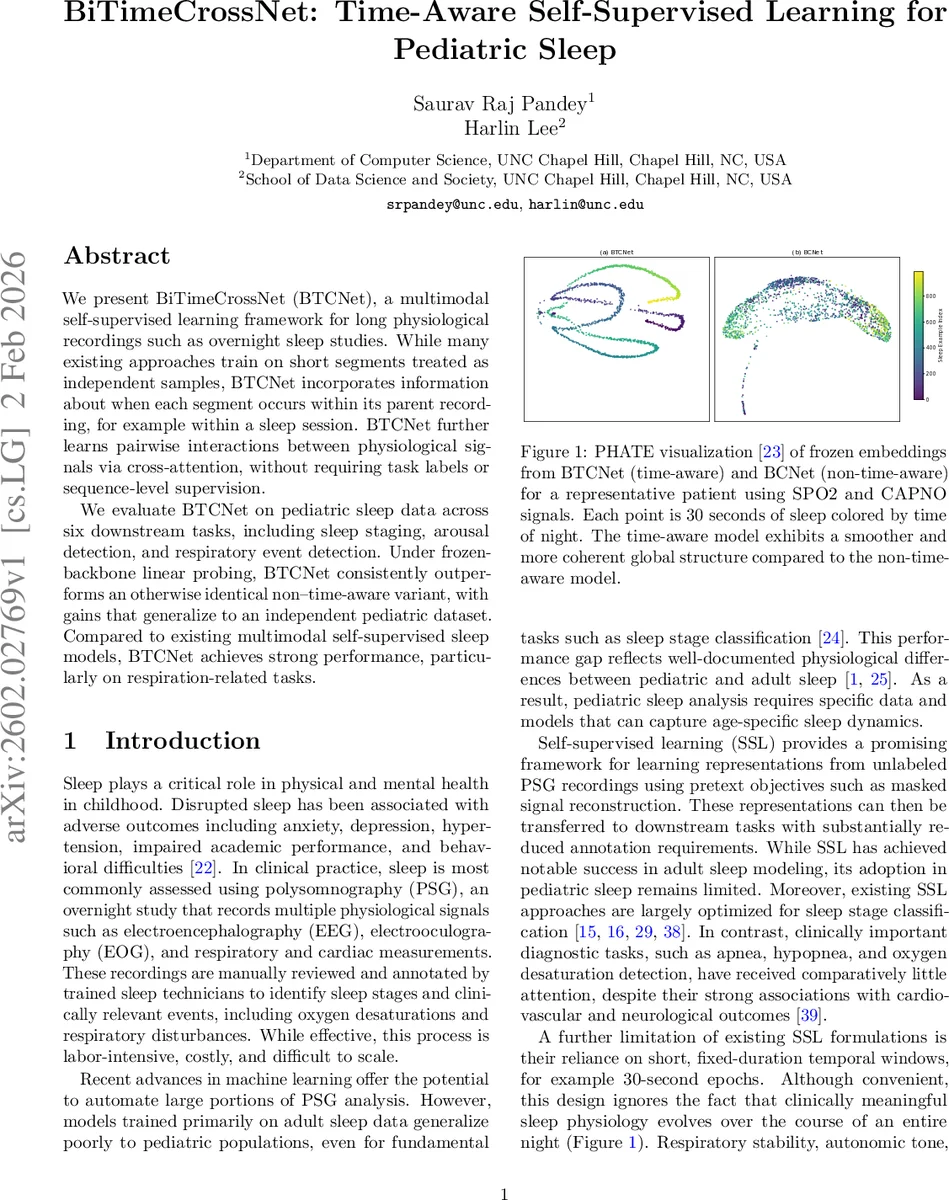

We present BiTimeCrossNet (BTCNet), a multimodal self-supervised learning framework for long physiological recordings such as overnight sleep studies. While many existing approaches train on short segments treated as independent samples, BTCNet incorporates information about when each segment occurs within its parent recording, for example within a sleep session. BTCNet further learns pairwise interactions between physiological signals via cross-attention, without requiring task labels or sequence-level supervision. We evaluate BTCNet on pediatric sleep data across six downstream tasks, including sleep staging, arousal detection, and respiratory event detection. Under frozen-backbone linear probing, BTCNet consistently outperforms an otherwise identical non-time-aware variant, with gains that generalize to an independent pediatric dataset. Compared to existing multimodal self-supervised sleep models, BTCNet achieves strong performance, particularly on respiration-related tasks.

💡 Research Summary

BiTimeCrossNet (BTCNet) introduces a novel self‑supervised learning (SSL) framework tailored for pediatric polysomnography (PSG) recordings, addressing two critical shortcomings of prior SSL approaches: the neglect of long‑range temporal context across an entire night and the limited modeling of multimodal interactions. BTCNet operates in two stages. In Stage 1, each of the 16 physiological channels (EEG, EOG, EMG, ECG, respiratory, SpO₂, etc.) is equipped with its own Vision‑Transformer encoder. These encoders are trained independently using a hybrid objective that combines masked auto‑encoding (MAE) reconstruction of randomly masked 30‑second patches (50 % mask ratio) with a contrastive NT‑Xent loss that aligns an original view and a lightly perturbed view of the same signal. This yields robust, modality‑specific embeddings.
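The Stage 1 objective can be sketched in a few lines of plain Python. This is an illustrative sketch, not the authors' implementation: the function names, the loss weight `weight`, and the temperature of 0.1 are assumptions, and only the 50 % mask ratio, the masked reconstruction term, and the NT‑Xent term come from the summary above.

```python
import math
import random

def random_mask(num_patches, ratio=0.5, rng=random):
    """Pick which patches to hide; True marks a masked patch."""
    k = int(round(num_patches * ratio))
    hidden = set(rng.sample(range(num_patches), k))
    return [i in hidden for i in range(num_patches)]

def masked_mse(target, recon, mask):
    """Reconstruction error averaged over masked patches only."""
    errs = [
        sum((t - r) ** 2 for t, r in zip(tp, rp)) / len(tp)
        for tp, rp, m in zip(target, recon, mask) if m
    ]
    return sum(errs) / len(errs) if errs else 0.0

def _cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv + 1e-12)

def nt_xent(z_a, z_b, temperature=0.1):
    """NT-Xent loss: z_a[i] and z_b[i] are embeddings of two views of sample i."""
    z = z_a + z_b
    n = len(z_a)
    total = 0.0
    for i in range(2 * n):
        pos = (i + n) % (2 * n)  # index of the other view of the same sample
        logits = [_cosine(z[i], z[k]) / temperature for k in range(2 * n) if k != i]
        pos_logit = _cosine(z[i], z[pos]) / temperature
        m = max(logits)  # log-sum-exp with max subtraction for stability
        log_denom = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_denom - pos_logit
    return total / (2 * n)

def hybrid_loss(target, recon, mask, z_a, z_b, weight=1.0, temperature=0.1):
    """Masked reconstruction plus weighted contrastive term (weight is assumed)."""
    return masked_mse(target, recon, mask) + weight * nt_xent(z_a, z_b, temperature)
```

In this sketch the contrastive term is small when the two views of each sample embed close together and large when they are mismatched, while the reconstruction term ignores visible patches entirely.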

Stage 2 introduces two innovations. First, a random modality‑pair selection mechanism samples two distinct channels at each training iteration, forming a bimodal input tensor that is processed by a cross‑attention encoder. The cross‑attention operates bidirectionally, allowing each modality to attend to the other’s visible patches, thereby learning explicit physiological relationships (e.g., SpO₂‑respiratory coupling) while remaining resilient to missing or noisy channels. Second, BTCNet incorporates a global time‑aware positional conditioning scheme. Beyond the usual spatial, temporal (within‑window), and token‑level positional embeddings, each 30‑second segment is assigned a standardized session index (z‑score of its position within the night). A lightweight two‑layer FiLM‑style network maps this index to feature‑wise scale (γ) and shift (β) vectors, which are applied to the token embeddings after the standard positional encodings. This affine modulation injects night‑scale temporal context that cannot be captured by local patch positions alone.
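The time‑aware conditioning can be illustrated with a small, dependency‑free sketch. It assumes a scalar z‑scored segment index, a tanh hidden layer, and a scale parameterized as 1 + Δ so that the modulation starts near the identity; the class name `FiLM`, the hidden width, and the initialization are hypothetical choices, not details taken from the paper.

```python
import math
import random

def session_z_scores(num_segments):
    """Standardize segment indices 0..N-1 to zero mean and unit variance."""
    idx = list(range(num_segments))
    mean = sum(idx) / num_segments
    var = sum((i - mean) ** 2 for i in idx) / num_segments
    std = math.sqrt(var) or 1.0  # guard against a single-segment recording
    return [(i - mean) / std for i in idx]

class FiLM:
    """Two-layer network mapping a scalar time index to per-feature (gamma, beta)."""

    def __init__(self, dim, hidden=8, seed=0):
        rng = random.Random(seed)
        self.dim = dim
        self.w1 = [rng.gauss(0, 0.1) for _ in range(hidden)]
        self.b1 = [0.0] * hidden
        self.w2 = [[rng.gauss(0, 0.1) for _ in range(hidden)] for _ in range(2 * dim)]
        self.b2 = [0.0] * (2 * dim)

    def gamma_beta(self, t):
        h = [math.tanh(w * t + b) for w, b in zip(self.w1, self.b1)]
        out = [sum(w * x for w, x in zip(row, h)) + b
               for row, b in zip(self.w2, self.b2)]
        gamma = [1.0 + o for o in out[:self.dim]]  # identity-centered scale (assumption)
        beta = out[self.dim:]
        return gamma, beta

    def __call__(self, tokens, t):
        """Apply gamma * x + beta to every token of the segment at time index t."""
        gamma, beta = self.gamma_beta(t)
        return [[g * x + b for g, x, b in zip(gamma, tok, beta)] for tok in tokens]
```

Every token in a segment shares the same γ and β, so the modulation encodes where the segment sits in the night rather than where a patch sits inside the window. Segments near the middle of the recording (index close to zero) pass through almost unchanged in this sketch.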

The model is pretrained on the Nationwide Children’s Hospital (NCH) Sleep Databank (2,379 overnight PSGs) and evaluated on six clinically relevant downstream tasks: five‑class sleep staging, arousal detection, apnea detection, hypopnea detection, oxygen desaturation detection, and a composite respiratory event detection. Linear probing (freezing the pretrained backbone and training a simple classifier) reveals that the time‑aware version consistently outperforms an otherwise identical non‑time‑aware baseline across all tasks, with AUROC and F1 gains ranging from 2 to 5 percentage points. Gains are most pronounced for respiration‑related tasks, underscoring the importance of night‑scale dynamics (e.g., REM density shifts, respiratory stability trends).
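Frozen‑backbone linear probing, as used in this evaluation, can be sketched as follows. The `frozen_backbone` function is a hypothetical stand‑in for the pretrained encoder, the data are synthetic, and the learning rate and epoch count are arbitrary; only the protocol (fixed features, a trainable linear classifier on top) reflects the summary.

```python
import math
import random

def frozen_backbone(x):
    """Hypothetical stand-in for a pretrained encoder; it has no trainable state."""
    return [x[0] + x[1], x[0] - x[1]]

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_linear_probe(features, labels, lr=0.5, epochs=200):
    """Fit logistic regression on fixed features with full-batch gradient descent."""
    dim, n = len(features[0]), len(features)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for f, y in zip(features, labels):
            err = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) - y
            grad_w = [g + err * fi for g, fi in zip(grad_w, f)]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def accuracy(w, b, features, labels):
    hits = sum(
        int((sum(wi * fi for wi, fi in zip(w, f)) + b > 0) == bool(y))
        for f, y in zip(features, labels)
    )
    return hits / len(labels)

# Synthetic binary task: the label depends on the sign of x0 + x1.
rng = random.Random(0)
raw = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(200)]
labels = [1 if x[0] + x[1] > 0 else 0 for x in raw]
feats = [frozen_backbone(x) for x in raw]  # computed once; the backbone is never updated
w, b = train_linear_probe(feats, labels)
```

Because only `w` and `b` are trained, any difference in probe accuracy between two backbones reflects the quality of their frozen representations, which is why the comparison against the non‑time‑aware variant is informative.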

External validation is performed on the Childhood Adenotonsillectomy Trial (CHAT) dataset (422 PSGs), which was not seen during pretraining. Here BTCNet’s frozen embeddings surpass recent multimodal SSL models such as PedSleepMAE and SleepFM on oxygen desaturation and apnea detection, demonstrating cross‑dataset generalization.

Key contributions of the work are: (1) a global time‑aware conditioning mechanism that encodes the position of each window within the full sleep session, (2) a random modality‑pair cross‑attention pretraining strategy that learns robust pairwise physiological interactions and tolerates missing modalities, (3) a hybrid MAE + contrastive objective that balances reconstruction fidelity with representation invariance, and (4) extensive empirical validation on two large pediatric PSG cohorts across multiple diagnostic tasks.

The authors plan to release the code and pretrained weights as open source, facilitating adoption in clinical research and enabling downstream applications such as automated screening for sleep‑disordered breathing, neurodevelopmental monitoring, and personalized sleep medicine in children. Future directions include exploring multi‑scale attention windows, real‑time inference optimizations, and transfer to other age groups or disease populations.
