When Noise Lowers The Loss: Rethinking Likelihood-Based Evaluation in Music Large Language Models

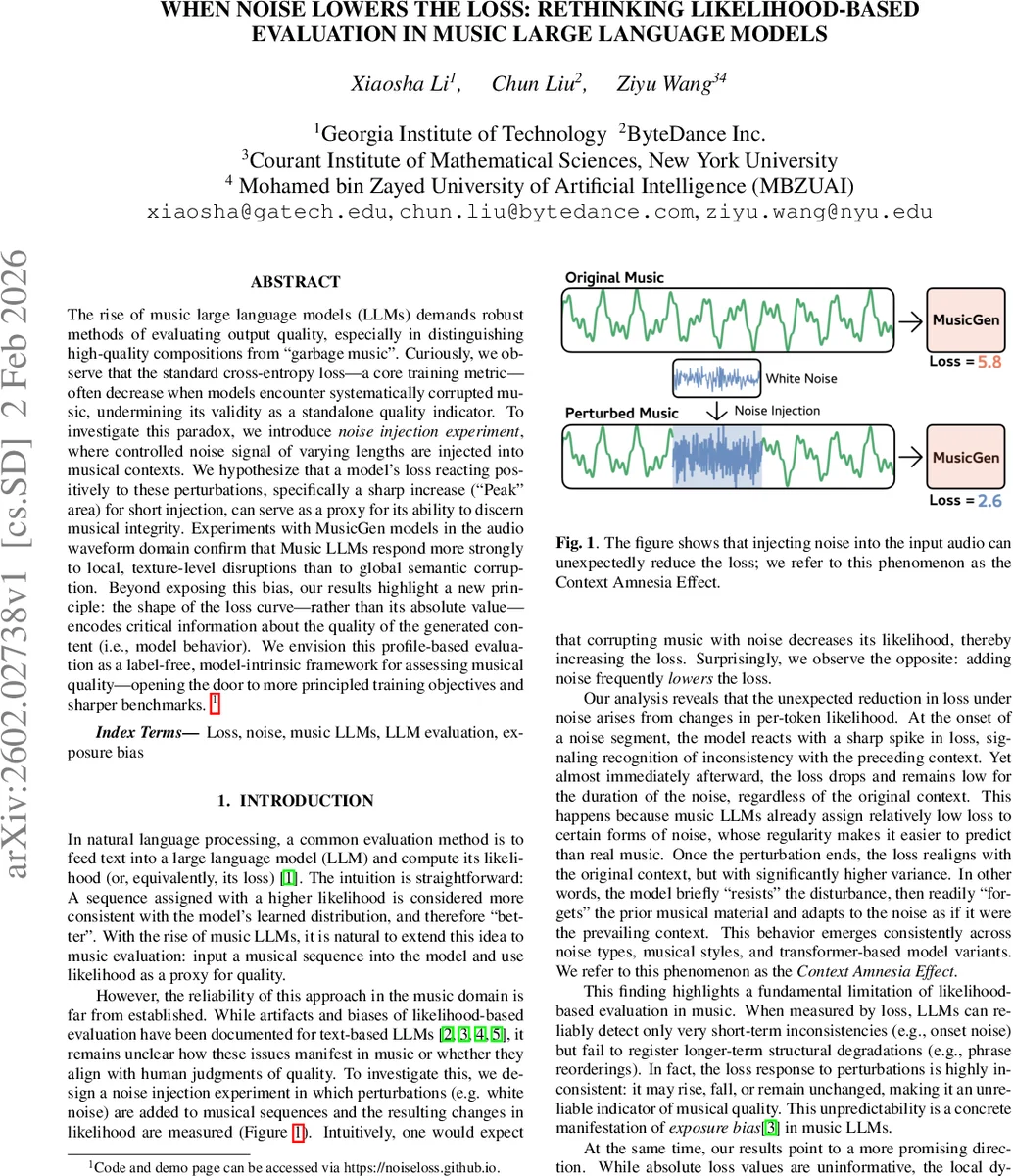

The rise of music large language models (LLMs) demands robust methods of evaluating output quality, especially in distinguishing high-quality compositions from “garbage music”. Curiously, we observe that the standard cross-entropy loss – a core training metric – often decrease when models encounter systematically corrupted music, undermining its validity as a standalone quality indicator. To investigate this paradox, we introduce noise injection experiment, where controlled noise signal of varying lengths are injected into musical contexts. We hypothesize that a model’s loss reacting positively to these perturbations, specifically a sharp increase (“Peak” area) for short injection, can serve as a proxy for its ability to discern musical integrity. Experiments with MusicGen models in the audio waveform domain confirm that Music LLMs respond more strongly to local, texture-level disruptions than to global semantic corruption. Beyond exposing this bias, our results highlight a new principle: the shape of the loss curve – rather than its absolute value – encodes critical information about the quality of the generated content (i.e., model behavior). We envision this profile-based evaluation as a label-free, model-intrinsic framework for assessing musical quality – opening the door to more principled training objectives and sharper benchmarks.

💡 Research Summary

The paper investigates a fundamental flaw in using cross‑entropy loss (or likelihood) as a quality metric for music large language models (LLMs). While likelihood‑based evaluation works reasonably well for text, the authors discover that, for music models such as MusicGen (various sizes) and YUE, the loss often decreases when systematic noise is injected into the input audio, contradicting the intuitive expectation that corrupting a sequence should increase loss.

Noise Injection Experiment

- Audio is tokenized with EnCodec (≈20 ms per token, 50 Hz).

- A white‑noise segment of controlled loudness (‑30 to ‑12 dB) is inserted at token 250 (5 s) of a 750‑token (15 s) clip.

- Noise lengths range from 5 to 200 tokens (0.1 s to 4 s), covering frame‑, note‑, beat‑, and measure‑scale disruptions.

- Loss is computed as the negative log‑likelihood summed over tokens; the per‑token loss difference Δℓₜ = −log p(x′ₜ|x′<ₜ) + log p(xₜ|x<ₜ) quantifies the effect of the perturbation.

Across all model sizes, datasets (training set, generated samples, out‑of‑distribution classical pieces), and noise lengths, the authors observe a consistent pattern: short noise (≤0.2 s) yields Δℓ near zero, while longer noise leads to negative average Δℓ, meaning the model assigns higher likelihood to the noisy segment than to the clean music. Pearson and Spearman correlations between noise length and Δℓ are strongly negative (r < ‑0.85, p < 0.001), confirming a robust trend. The same effect appears in the YUE 1 B model, reinforcing that it is not architecture‑specific.

Three‑Stage Loss Dynamics

Token‑wise visualizations reveal three distinct regions after noise insertion:

-

Peak – a sharp loss spike lasting ≈5 tokens (≈0.1 s) at the onset of the perturbation, reflecting immediate detection of inconsistency with the preceding context.

-

Assimilation – within the noisy window the loss drops dramatically and stabilizes at a low value, largely independent of the original musical context. The model treats the regular statistical pattern of white noise as easy to predict, effectively “forgetting” earlier music.

-

Recovery – after the noise ends, loss oscillates around the baseline with high variance, indicating difficulty in re‑establishing the original context. This mirrors exposure bias: after an error the autoregressive model relies on its own (now corrupted) predictions, shortening the effective context window.

The authors term this phenomenon the Context Amnesia Effect.

Order‑Shuffling Perturbation

To test whether the effect is specific to acoustic noise, the authors also shuffle contiguous segments of varying lengths (1–200 tokens) while preserving the original audio content. Results mirror the noise experiment: short shuffles cause only a peak, while longer shuffles produce a prolonged assimilation phase where loss remains near baseline despite substantial structural disruption. Hence, loss is insensitive to long‑range order violations.

Implications for Evaluation

Because absolute loss values can decrease under severe perturbations, they cannot reliably indicate whether a piece is high‑quality or heavily corrupted. Instead, the shape of the loss curve—the magnitude of the initial peak, its duration, and the variance during recovery—encodes richer information about a model’s ability to detect and react to inconsistencies. This profile‑based evaluation is label‑free and intrinsic to the model, offering a more principled alternative to raw likelihood for music generation assessment.

The paper situates these findings within the broader literature on exposure bias, shortcut learning, and likelihood bias in language models, noting that music generation adds unique challenges: long‑range structure, tonal tension, and novelty are essential, yet current autoregressive music LLMs treat unfamiliar but meaningful passages as noise, undervaluing them.

Conclusion

The study demonstrates that likelihood‑based metrics are fundamentally flawed for music LLM evaluation. The Context Amnesia Effect explains why models quickly adapt to local perturbations but ignore global structural damage. By focusing on loss dynamics rather than absolute values, researchers can develop more reliable, automatic, and scalable evaluation tools, and potentially redesign training objectives to mitigate exposure bias in music generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment