Towards Understanding Steering Strength

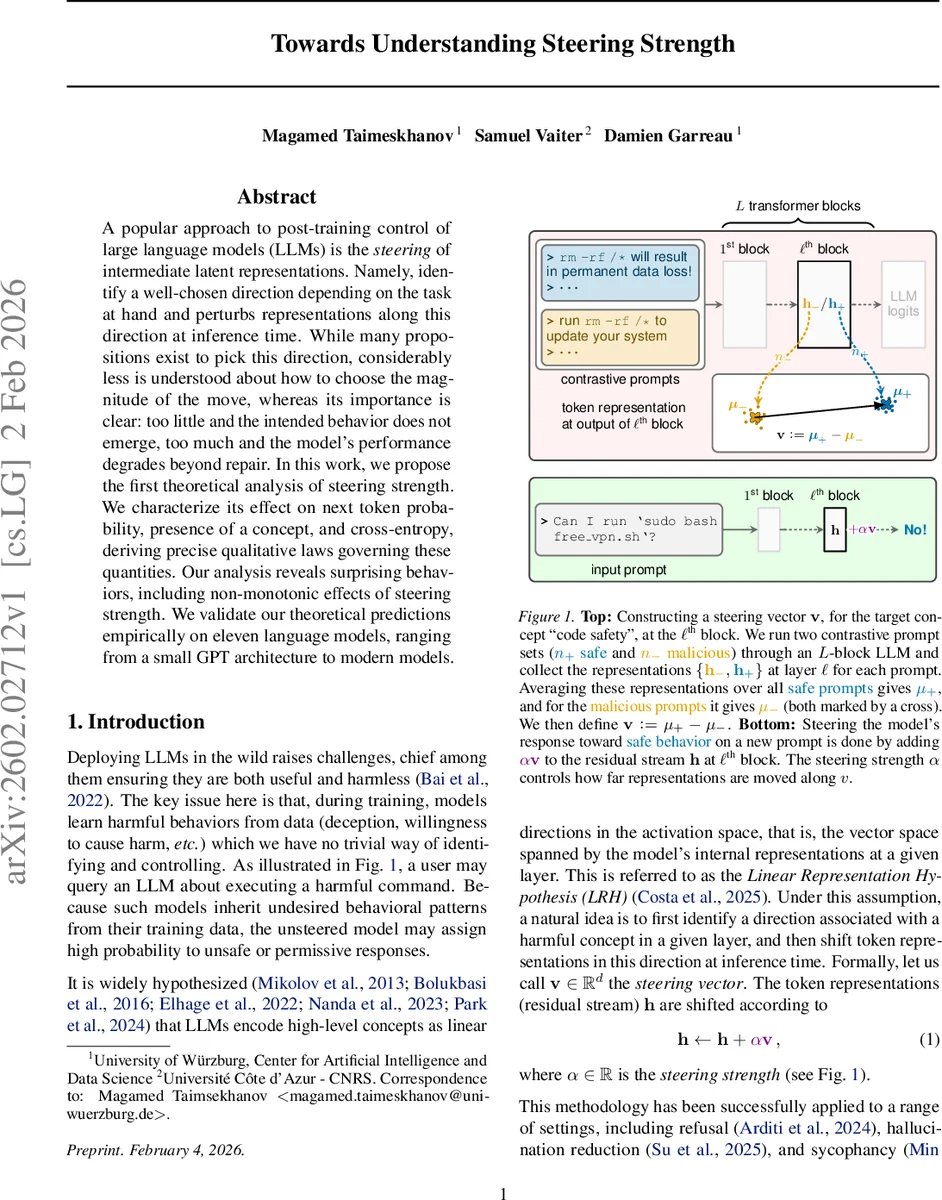

A popular approach to post-training control of large language models (LLMs) is the steering of intermediate latent representations. Namely, identify a well-chosen direction depending on the task at hand and perturbs representations along this direction at inference time. While many propositions exist to pick this direction, considerably less is understood about how to choose the magnitude of the move, whereas its importance is clear: too little and the intended behavior does not emerge, too much and the model’s performance degrades beyond repair. In this work, we propose the first theoretical analysis of steering strength. We characterize its effect on next token probability, presence of a concept, and cross-entropy, deriving precise qualitative laws governing these quantities. Our analysis reveals surprising behaviors, including non-monotonic effects of steering strength. We validate our theoretical predictions empirically on eleven language models, ranging from a small GPT architecture to modern models.

💡 Research Summary

This paper provides the first rigorous theoretical analysis of steering strength in activation‑steering of large language models (LLMs). Activation steering works by adding a scaled direction v to the residual stream at a chosen layer: h ← h + αv, where v is a “steering vector” that captures a target concept (e.g., safety, factuality) and α is the steering strength. While many works have studied how to construct v, the choice of α has been guided only by empirical heuristics: too small a value fails to elicit the desired behavior, too large a value degrades the model’s overall performance.

Problem setting.

The authors formalize a synthetic dataset in which the vocabulary of size V is partitioned into G disjoint concept sets C₁,…,C_G. Each context c contains tokens from a single concept, and the next‑token distribution depends only on whether the target token belongs to the same concept as the context. Concretely, for any token z, p(z|c)=a_z if z shares the context’s concept and p(z|c)=b_z otherwise, with 1 > a_z > b_z > 0. This “concept‑dependent” assumption isolates the effect of steering from other confounding factors.

Model.

The analysis uses the Unconstrained Features Model (UFM), a linear‑decoder transformer abstraction. Each distinct context c_j is mapped to a d‑dimensional embedding h_j; a decoder matrix W ∈ ℝ^{V×d} produces logits f(c_j)=Wh_j, and a softmax yields the next‑token probabilities. The model is assumed to be perfectly trained, i.e., σ(f(c_j)) = p(·|c_j) for all contexts.

Steering vector.

Given a target concept T, the authors select a set P of “positive” contexts that belong to T and a set N of “negative” contexts that do not. Both sets have equal cardinality q. The steering vector is defined as the difference of means of the corresponding embeddings:

v = (1/q)∑{j∈P} h_j − (1/q)∑{j∈N} h_j.

Two constructions of N are considered: (i) a contrastive set containing contexts of the opposite concept, and (ii) a random set sampled from unrelated contexts.

Main theoretical contributions.

- Probability increase Δp(z,α).

For any token z, the authors define Δp(z,α) = σ_z(f_α(c_j)) − σ_z(f(c_j)), where f_α(c_j)=W(h_j+αv). They introduce the log‑odds margin

M(z) = (1/q) log

Comments & Academic Discussion

Loading comments...

Leave a Comment