WideSeek: Advancing Wide Research via Multi-Agent Scaling

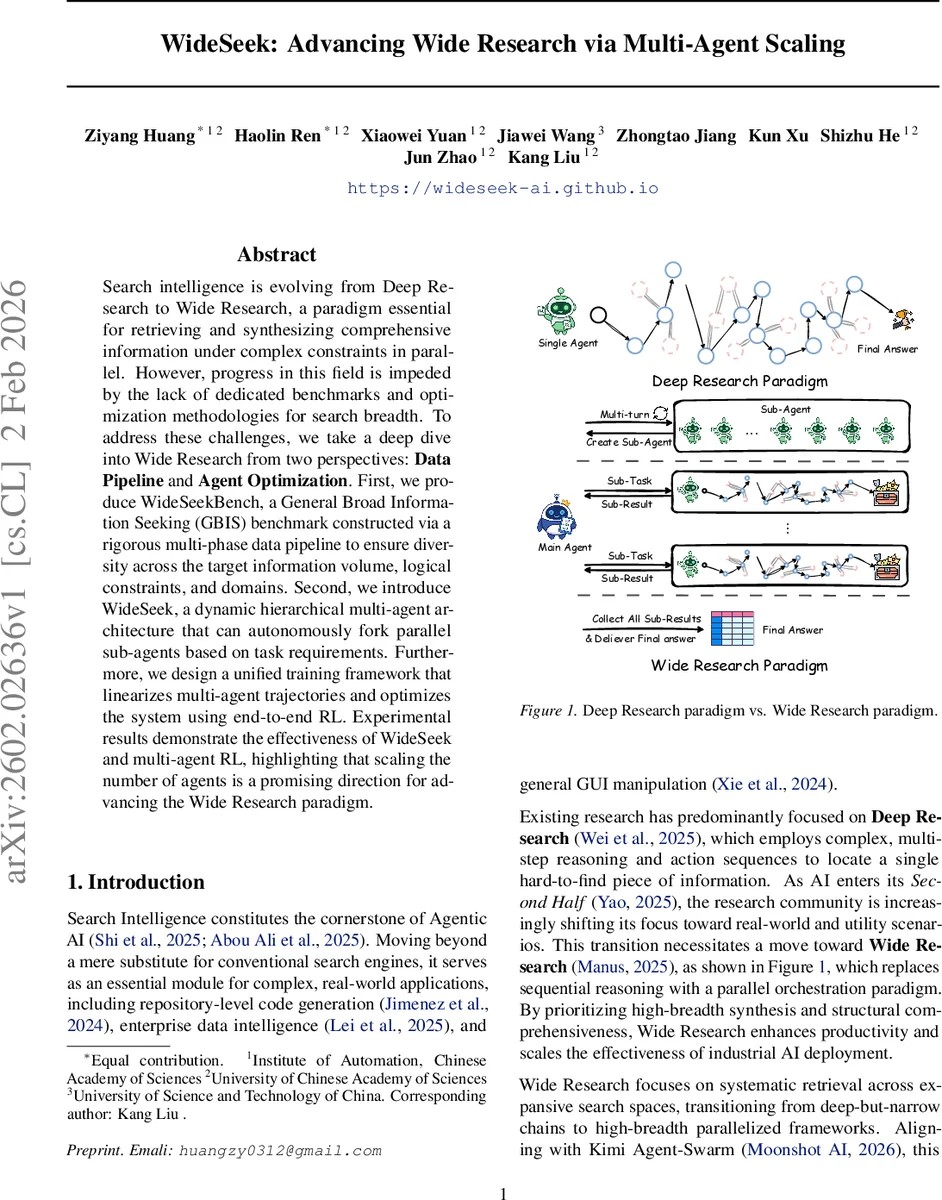

Search intelligence is evolving from Deep Research to Wide Research, a paradigm essential for retrieving and synthesizing comprehensive information under complex constraints in parallel. However, progress in this field is impeded by the lack of dedicated benchmarks and optimization methodologies for search breadth. To address these challenges, we take a deep dive into Wide Research from two perspectives: Data Pipeline and Agent Optimization. First, we produce WideSeekBench, a General Broad Information Seeking (GBIS) benchmark constructed via a rigorous multi-phase data pipeline to ensure diversity across the target information volume, logical constraints, and domains. Second, we introduce WideSeek, a dynamic hierarchical multi-agent architecture that can autonomously fork parallel sub-agents based on task requirements. Furthermore, we design a unified training framework that linearizes multi-agent trajectories and optimizes the system using end-to-end RL. Experimental results demonstrate the effectiveness of WideSeek and multi-agent RL, highlighting that scaling the number of agents is a promising direction for advancing the Wide Research paradigm.

💡 Research Summary

WideSeek addresses the emerging “Wide Research” paradigm, which shifts the focus of search intelligence from deep, single‑answer reasoning to broad, parallelized information synthesis under complex constraints. The authors first construct a novel benchmark, WideSeekBench, for General Broad Information Seeking (GBIS). Using a large‑scale knowledge graph, they sample seed entities and seed constraints, then recursively compose logical expressions (AND, OR, NOT) to define complex filters Φ. Applying Φ to the graph yields target entity sets, from which diverse attribute schemas are derived. An LLM‑based self‑refining loop converts these logical specifications into natural‑language queries while verifying consistency, and column‑wise evaluation rubrics are automatically generated. After three stages of filtering—rule‑based, LLM‑based, and human verification—the final dataset contains 5,156 high‑quality tasks, split into 4,436 training and 720 test instances. The test set is carefully balanced across information volume, constraint complexity, and domain diversity, and a simulated local document corpus ensures reproducible evaluation using Success Rate, Row F1, and Item F1 metrics.

The second contribution is WideSeek, a dynamic hierarchical multi‑agent system. A centralized Main Agent (Planner) operates on a global state that includes the user query, high‑level thoughts, and accumulated sub‑results. At each planning step, the Main Agent decides—via a unified policy πθ—whether to terminate or to create a variable number k of Sub‑Agents (Executors). Each Sub‑Agent runs its own local MDP, using the same policy to invoke atomic search tools (search, open_page, etc.), collect observations, and produce a textual sub‑result. Upon completion, sub‑results are merged into the global state, and the Main Agent may either spawn additional agents or synthesize the final answer table. This architecture departs from prior static multi‑agent designs by allowing the policy to determine the number and timing of sub‑agents, thereby adapting to task difficulty and constraint complexity.

Training proceeds by first distilling high‑quality trajectories from multiple teacher models and fine‑tuning the policy via supervised fine‑tuning (SFT). The hierarchical rollouts are then linearized into a single sequence, enabling end‑to‑end reinforcement learning. The RL objective combines Item F1 reward, tool‑error penalties, and group‑normalization regularization, optimized with PPO. Experiments demonstrate that scaling the number of sub‑agents markedly improves performance: success rates rise from 62 % with a single agent to 85 % with eight agents, while Row F1 and Item F1 also increase. WideSeek outperforms existing deep‑research baselines by 12–18 percentage points across all metrics, especially on tasks with high logical complexity or multi‑domain constraints. Analysis reveals that the policy learns to allocate more sub‑agents when constraints are intricate and to conserve resources when the target information volume is low.

In summary, the paper makes three key contributions: (1) a rigorously constructed, diverse GBIS benchmark that supports both training and evaluation; (2) a flexible, dynamically scaling multi‑agent framework that can autonomously decide how many parallel workers to spawn; and (3) an end‑to‑end RL training pipeline that jointly optimizes planning and execution across all agents. By empirically validating the benefits of agent scaling, the work establishes “Wide Research” as a distinct and promising direction for future search‑intelligence systems. Future work may explore richer inter‑agent communication, real‑time data streams, and human‑in‑the‑loop feedback to further enhance scalability and robustness.

Comments & Academic Discussion

Loading comments...

Leave a Comment