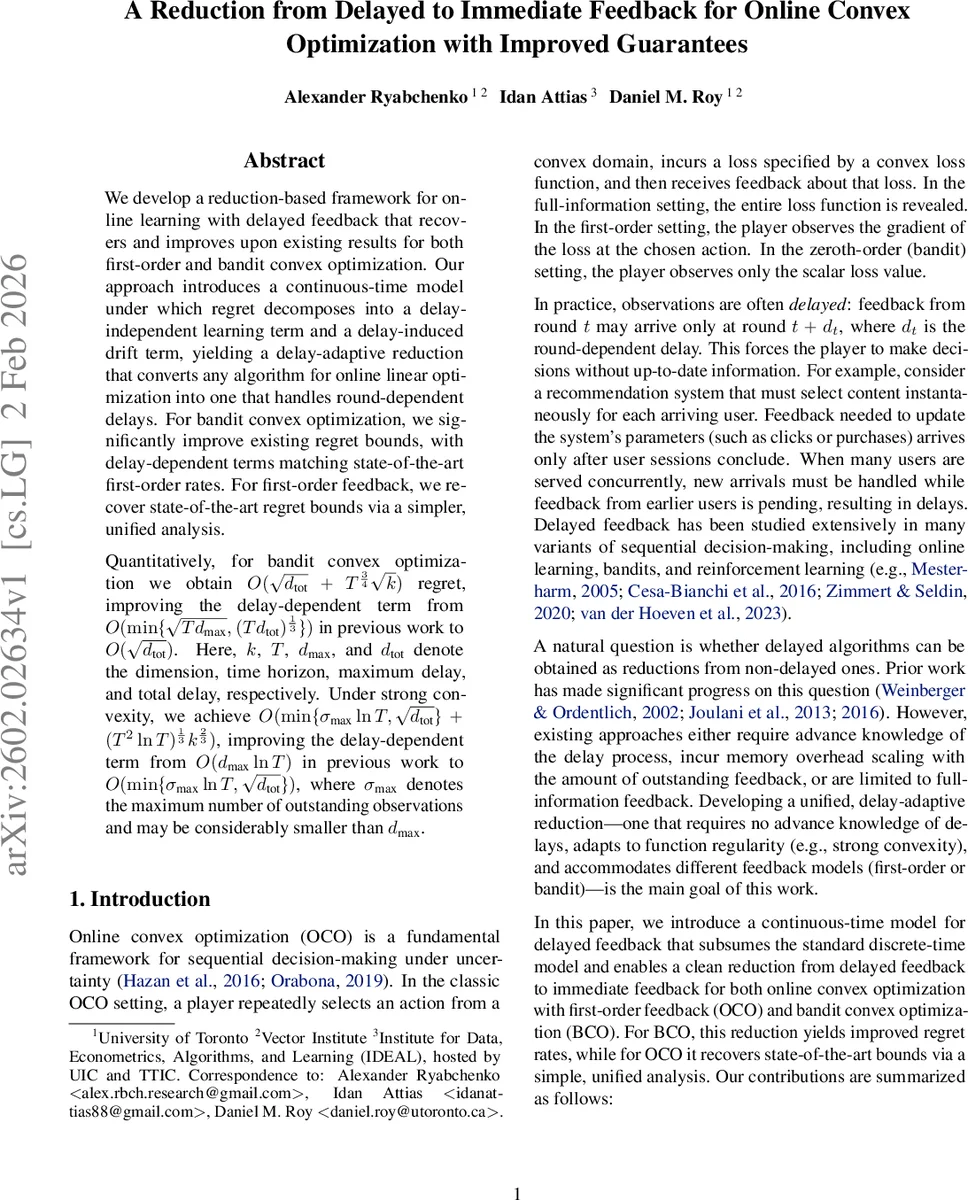

A Reduction from Delayed to Immediate Feedback for Online Convex Optimization with Improved Guarantees

We develop a reduction-based framework for online learning with delayed feedback that recovers and improves upon existing results for both first-order and bandit convex optimization. Our approach introduces a continuous-time model under which regret decomposes into a delay-independent learning term and a delay-induced drift term, yielding a delay-adaptive reduction that converts any algorithm for online linear optimization into one that handles round-dependent delays. For bandit convex optimization, we significantly improve existing regret bounds, with delay-dependent terms matching state-of-the-art first-order rates. For first-order feedback, we recover state-of-the-art regret bounds via a simpler, unified analysis. Quantitatively, for bandit convex optimization we obtain $O(\sqrt{d_{\text{tot}}} + T^{\frac{3}{4}}\sqrt{k})$ regret, improving the delay-dependent term from $O(\min\{\sqrt{T d_{\text{max}}}, (T d_{\text{tot}})^{\frac{1}{3}}\})$ in previous work to $O(\sqrt{d_{\text{tot}}})$. Here, $k$, $T$, $d_{\text{max}}$, and $d_{\text{tot}}$ denote the dimension, time horizon, maximum delay, and total delay, respectively. Under strong convexity, we achieve $O(\min\{\sigma_{\text{max}} \ln T, \sqrt{d_{\text{tot}}}\} + (T^2 \ln T)^{\frac{1}{3}} k^{\frac{2}{3}})$, improving the delay-dependent term from $O(d_{\text{max}} \ln T)$ in previous work to $O(\min\{\sigma_{\text{max}} \ln T, \sqrt{d_{\text{tot}}}\})$, where $\sigma_{\text{max}}$ denotes the maximum number of outstanding observations and may be considerably smaller than $d_{\text{max}}$.
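To make the three delay quantities concrete, the following is a minimal sketch that computes $d_{\text{max}}$, $d_{\text{tot}}$, and $\sigma_{\text{max}}$ from a list of per-round delays. The function name `delay_statistics` and the arrival convention (feedback for round $s$ arrives at the end of round $s + d_s$) are assumptions made for illustration; the paper's own definitions may differ in indexing details.

```python
from typing import Dict, Sequence


def delay_statistics(delays: Sequence[int]) -> Dict[str, int]:
    """Compute d_max, d_tot and sigma_max for per-round delays.

    Assumed convention (illustrative, not taken from the paper):
    rounds are 0-indexed, and feedback for round s arrives at the end
    of round s + delays[s]. It is therefore still outstanding when the
    learner predicts in any round t with s < t <= s + delays[s].
    """
    d_max = max(delays, default=0)  # largest single delay
    d_tot = sum(delays)             # sum of all delays

    sigma_max = 0
    for t in range(len(delays)):
        # Count earlier rounds whose feedback has not yet arrived at round t.
        outstanding = sum(1 for s in range(t) if s + delays[s] >= t)
        sigma_max = max(sigma_max, outstanding)

    return {"d_max": d_max, "d_tot": d_tot, "sigma_max": sigma_max}


# One very late observation: d_max is large, but at most one
# observation is ever outstanding.
single_late = delay_statistics([9] + [0] * 9)

# Constant delay of 2 over 5 rounds.
constant = delay_statistics([2] * 5)
```

Under this convention, the first example has $d_{\text{max}} = 9$ but $\sigma_{\text{max}} = 1$, which illustrates how $\sigma_{\text{max}}$ can be much smaller than $d_{\text{max}}$ when only a few observations are delayed.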

💡 Research Summary

The paper introduces a unified reduction framework that transforms online convex optimization (OCO) and bandit convex optimization (BCO) problems with delayed feedback into equivalent problems with immediate feedback. The authors first propose a continuous-time model (CTM) in which predictions and observations are placed as events on a real-valued timeline. By defining latency intervals (
