AgentRx: Diagnosing AI Agent Failures from Execution Trajectories

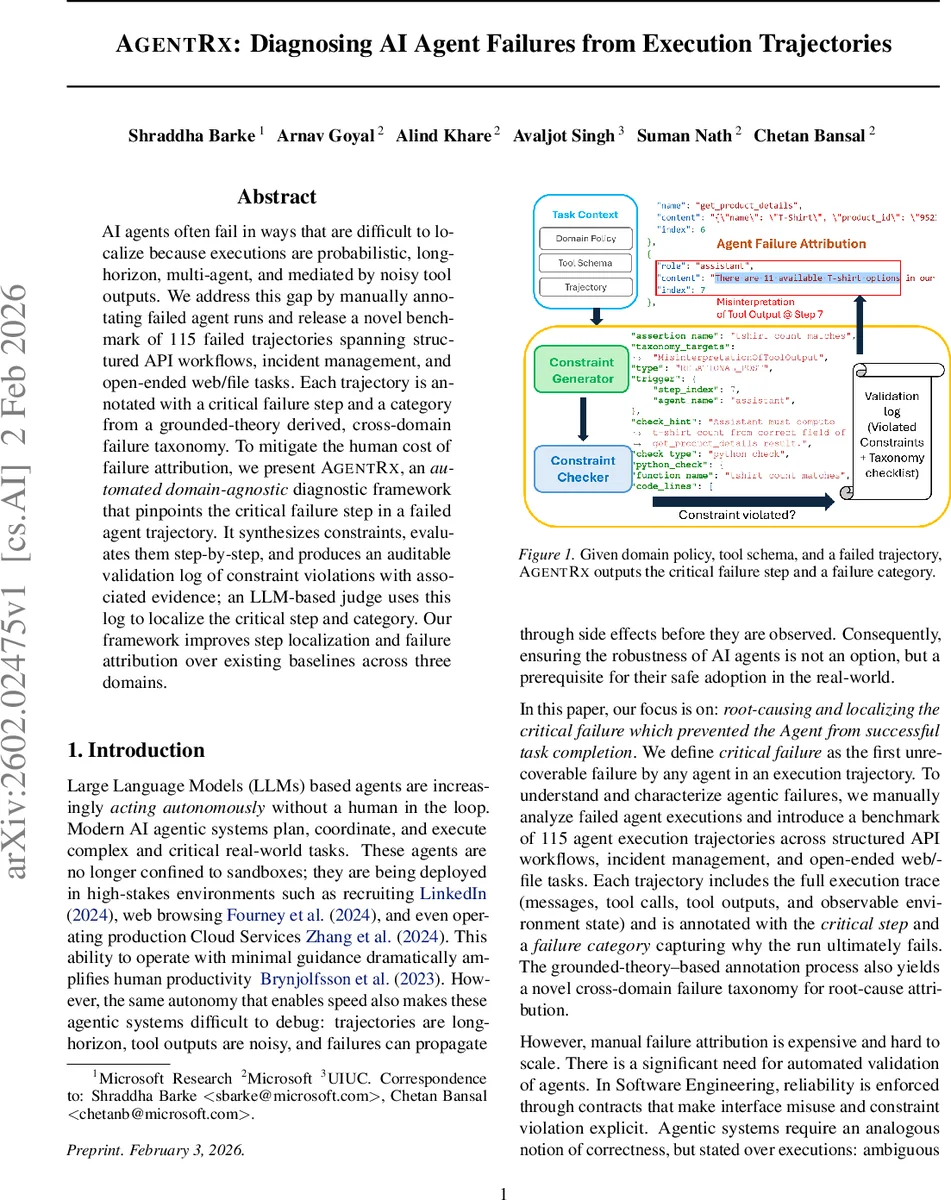

AI agents often fail in ways that are difficult to localize because executions are probabilistic, long-horizon, multi-agent, and mediated by noisy tool outputs. We address this gap by manually annotating failed agent runs and release a novel benchmark of 115 failed trajectories spanning structured API workflows, incident management, and open-ended web/file tasks. Each trajectory is annotated with a critical failure step and a category from a grounded-theory derived, cross-domain failure taxonomy. To mitigate the human cost of failure attribution, we present AGENTRX, an automated domain-agnostic diagnostic framework that pinpoints the critical failure step in a failed agent trajectory. It synthesizes constraints, evaluates them step-by-step, and produces an auditable validation log of constraint violations with associated evidence; an LLM-based judge uses this log to localize the critical step and category. Our framework improves step localization and failure attribution over existing baselines across three domains.

💡 Research Summary

AgentRx addresses the challenging problem of pinpointing the first unrecoverable failure—termed the “critical failure”—in long‑horizon, probabilistic, and often multi‑agent executions of large‑language‑model (LLM) based agents. The authors first construct a benchmark consisting of 115 failed execution trajectories drawn from three distinct domains: structured API workflows (τ‑bench), incident‑management troubleshooting (Flash), and open‑ended web/file tasks (Magentic‑One). Each trajectory is fully logged (messages, tool calls, tool outputs, and environment state) and manually annotated by three annotators using a grounded‑theory coding process. Annotators mark every observable failure, then work backwards from the terminal outcome to identify the earliest failure that the agents never recover from, designating it as the critical failure. This process yields a cross‑domain taxonomy of nine root‑cause categories: Plan Adherence, Invention of Information, Invalid Invocation, Misinterpretation of Tool Output, Intent‑Plan Misalignment, Under‑specified Intent, Intent Not Supported, Guardrails Triggered, and System Failure.

AgentRx itself is a domain‑agnostic diagnostic pipeline. It takes as input (i) a toolset with input‑output schemas, (ii) optional natural‑language domain policies, and (iii) a failed trajectory. First, the system normalizes heterogeneous logs into a common intermediate representation. It then synthesizes two kinds of constraints: (a) global constraints derived once from tool schemas and policies, and (b) dynamic constraints generated at each step from the user instruction and the prefix of the trajectory observed so far. Each constraint consists of a guard (determining when the constraint applies) and an assertion (the condition to be satisfied). Guards are typically structural (e.g., “step contains a call to tool X”), while assertions can be checked programmatically (schema validation, equality, membership) or semantically via an LLM‑based checker for natural‑language predicates.

During execution, AgentRx evaluates all applicable constraints step‑by‑step, recording any violations together with supporting evidence in a step‑indexed validation log. This log is auditable and directly links each violation to the concrete part of the trajectory that triggered it.

The validation log is then fed to an LLM‑based judge together with a checklist that operationalizes the nine taxonomy categories as sets of yes/no questions. The judge selects the critical failure step as the earliest step whose violation(s) plausibly explain the overall failure, and assigns the most appropriate failure category based on the mapping between violations and taxonomy labels. The judge can override pure constraint violations when contextual cues suggest a non‑critical error, allowing it to filter out spurious signals.

Experimental evaluation compares AgentRx against several baselines, including simple rule‑based detectors and prior trajectory‑analysis methods. Across all three domains, AgentRx improves critical‑step localization by an absolute 23.6 % and root‑cause categorization by 22.9 % over the strongest baseline. The gains are especially pronounced in the multi‑agent Magentic‑One setting, where complex inter‑tool interactions often mask the true point of failure.

The paper’s contributions are fourfold: (1) the release of a publicly available benchmark of 115 annotated failed trajectories, (2) a novel, domain‑agnostic diagnostic framework that combines constraint synthesis with LLM adjudication, (3) a grounded‑theory derived, cross‑domain failure taxonomy, and (4) extensive experiments demonstrating substantial performance improvements. Limitations include reliance on the quality of natural‑language policies (which may be misinterpreted by the LLM), dependence on a single LLM judge (potential bias), and computational overhead for very long trajectories. The authors suggest future work on formalizing policies, employing ensemble judges, and optimizing constraint indexing.

In summary, AgentRx offers a practical, scalable solution for automatically locating and categorizing the first unrecoverable failure in AI agent executions, substantially reducing the human effort required for debugging and paving the way for more reliable deployment of autonomous LLM‑driven systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment