TIC-VLA: A Think-in-Control Vision-Language-Action Model for Robot Navigation in Dynamic Environments

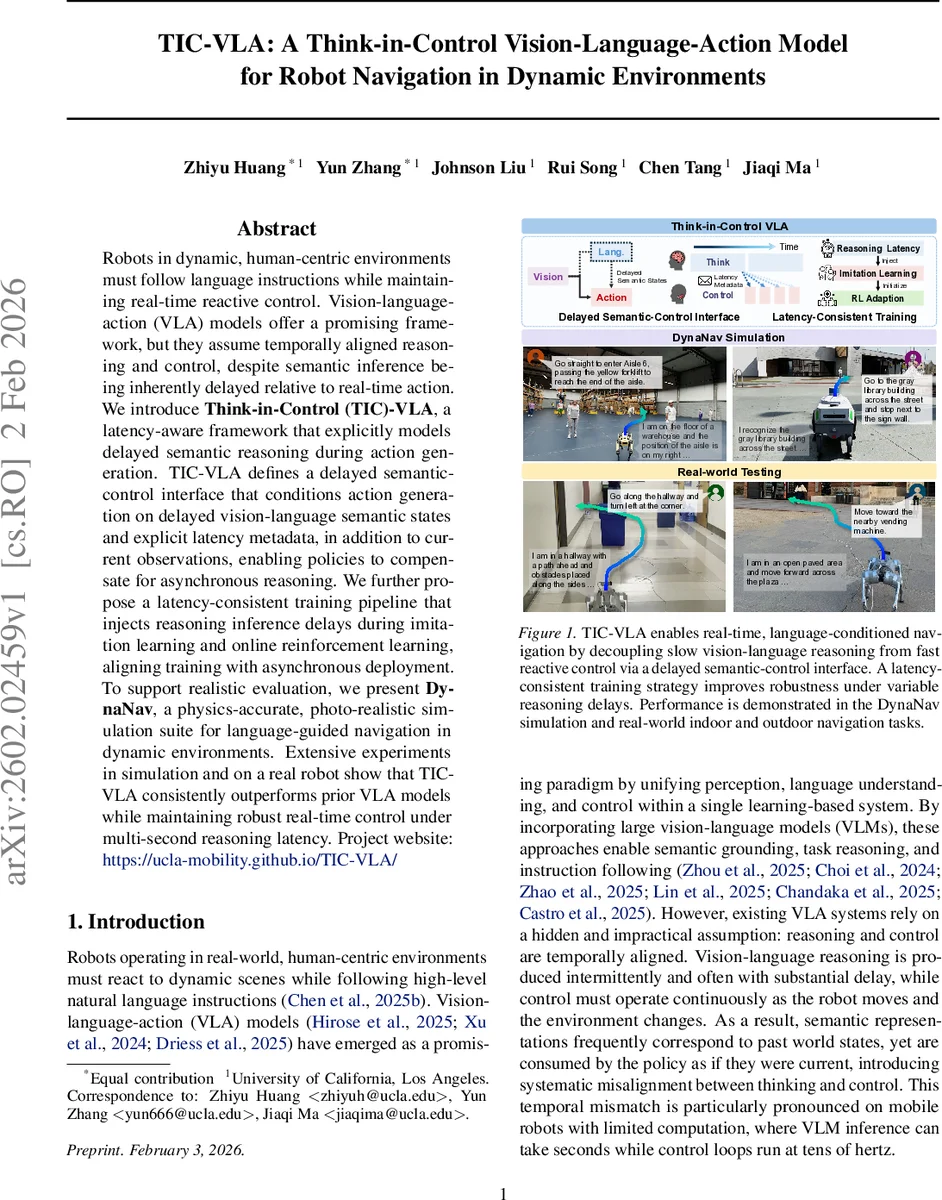

Robots in dynamic, human-centric environments must follow language instructions while maintaining real-time reactive control. Vision-language-action (VLA) models offer a promising framework, but they assume temporally aligned reasoning and control, despite semantic inference being inherently delayed relative to real-time action. We introduce Think-in-Control (TIC)-VLA, a latency-aware framework that explicitly models delayed semantic reasoning during action generation. TIC-VLA defines a delayed semantic-control interface that conditions action generation on delayed vision-language semantic states and explicit latency metadata, in addition to current observations, enabling policies to compensate for asynchronous reasoning. We further propose a latency-consistent training pipeline that injects reasoning inference delays during imitation learning and online reinforcement learning, aligning training with asynchronous deployment. To support realistic evaluation, we present DynaNav, a physics-accurate, photo-realistic simulation suite for language-guided navigation in dynamic environments. Extensive experiments in simulation and on a real robot show that TIC-VLA consistently outperforms prior VLA models while maintaining robust real-time control under multi-second reasoning latency. Project website: https://ucla-mobility.github.io/TIC-VLA/

💡 Research Summary

Robots operating in human‑centric, dynamic environments must follow natural‑language instructions while maintaining high‑frequency reactive control. Existing vision‑language‑action (VLA) models assume that semantic reasoning from large vision‑language models (VLMs) is temporally aligned with control, an assumption that breaks down when VLM inference incurs seconds‑long latency on embedded hardware. This paper introduces TIC‑VLA, a “Think‑in‑Control” framework that explicitly models and exploits inference delay. The system defines a delayed semantic‑control interface: the VLM processes visual frames anchored at time t‑Δt and outputs a key‑value cache together with explicit latency Δt and accumulated ego‑motion Δp since inference started. The fast action policy, implemented as a Transformer with cross‑attention, receives the current RGB observation, robot state, the delayed semantic representation, and the latency metadata, and predicts a short horizon of continuous actions. By exposing the delay to the policy, TIC‑VLA learns to reinterpret past semantic information in the current context.

Training is made latency‑consistent: during imitation learning, VLM inference is artificially delayed; during reinforcement learning (PPO), random latency values are sampled each step, encouraging robustness to variable delays. The authors also present DynaNav, a physics‑accurate, photorealistic simulator that supports dynamic humans, moving obstacles, and realistic lighting, enabling systematic evaluation of language‑conditioned navigation under controllable latency.

Experiments across eight indoor and outdoor scenarios show that TIC‑VLA outperforms prior VLA baselines (e.g., NavILA, OmniVLA) by 12 % higher success rate, 15 % shorter path length, and maintains performance even with 2–3 seconds of reasoning delay. Real‑world tests on wheeled and legged robots confirm that the method can handle multi‑second latencies while safely navigating around moving people and obstacles. Ablation studies reveal that removing latency metadata degrades success rates by over 20 %, highlighting the importance of the delayed semantic‑control interface.

The work demonstrates that inference latency is not merely an engineering bottleneck but a fundamental modeling challenge; by treating latency as a first‑class variable, TIC‑VLA bridges the gap between high‑capacity semantic reasoning and low‑latency control, paving the way for robust, on‑device embodied agents that can reason and act concurrently in real time. Limitations include reliance on a specific VLM (InternVL‑3‑1B) and the need for accurate latency measurement; future directions involve integrating latency prediction, scaling to larger multimodal models, and extending to additional sensor modalities.

Comments & Academic Discussion

Loading comments...

Leave a Comment