Superman: Unifying Skeleton and Vision for Human Motion Perception and Generation

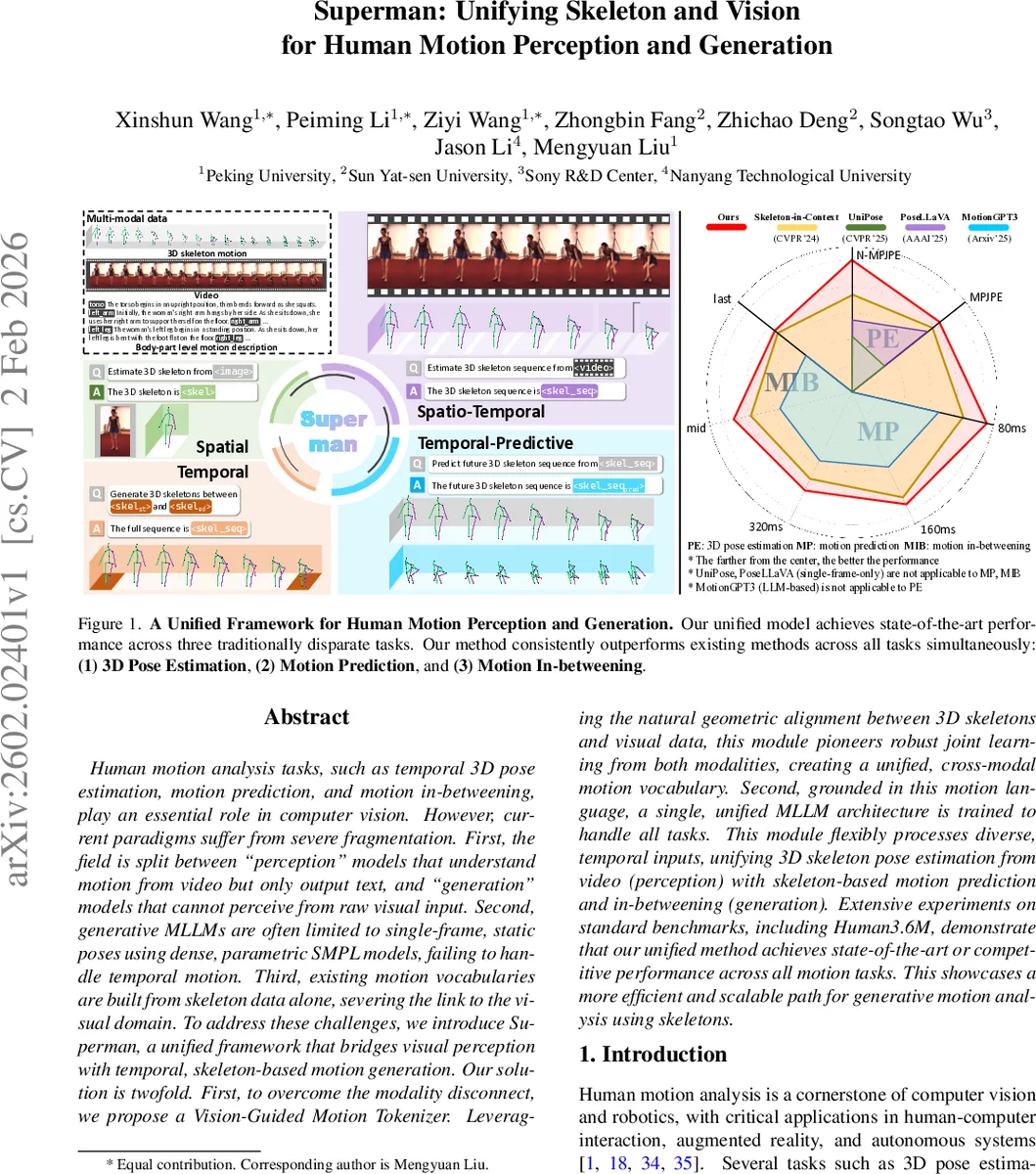

Human motion analysis tasks, such as temporal 3D pose estimation, motion prediction, and motion in-betweening, play an essential role in computer vision. However, current paradigms suffer from severe fragmentation. First, the field is split between perception'' models that understand motion from video but only output text, and generation’’ models that cannot perceive from raw visual input. Second, generative MLLMs are often limited to single-frame, static poses using dense, parametric SMPL models, failing to handle temporal motion. Third, existing motion vocabularies are built from skeleton data alone, severing the link to the visual domain. To address these challenges, we introduce Superman, a unified framework that bridges visual perception with temporal, skeleton-based motion generation. Our solution is twofold. First, to overcome the modality disconnect, we propose a Vision-Guided Motion Tokenizer. Leveraging the natural geometric alignment between 3D skeletons and visual data, this module pioneers robust joint learning from both modalities, creating a unified, cross-modal motion vocabulary. Second, grounded in this motion language, a single, unified MLLM architecture is trained to handle all tasks. This module flexibly processes diverse, temporal inputs, unifying 3D skeleton pose estimation from video (perception) with skeleton-based motion prediction and in-betweening (generation). Extensive experiments on standard benchmarks, including Human3.6M, demonstrate that our unified method achieves state-of-the-art or competitive performance across all motion tasks. This showcases a more efficient and scalable path for generative motion analysis using skeletons.

💡 Research Summary

The paper “Superman: Unifying Skeleton and Vision for Human Motion Perception and Generation” tackles a fundamental fragmentation in human motion analysis: perception models that understand video but cannot generate motion, and generation models that can synthesize motion but cannot ingest raw visual data. Moreover, existing motion tokenizers are built solely on skeletal data, ignoring visual cues, and most generative large language models (LLMs) are limited to static, single‑frame poses. To bridge these gaps, the authors propose a unified framework named Superman that jointly learns a cross‑modal motion vocabulary and a single multimodal LLM capable of both perception and generation tasks.

The core technical contribution is the Vision‑Guided Motion Tokenizer (VGMT). VGMT is a VQ‑VAE‑style architecture that processes a video clip and its corresponding 3D pose sequence through two parallel streams. The visual stream extracts pose‑centric features using a backbone (e.g., HRNet) and refines them with a Visual‑Skeleton Attention (VSA) module that samples and aggregates features around each joint’s 2D projection, making the visual representation robust to occlusions. The skeletal stream encodes the raw joint coordinates with lightweight 2‑D convolutions to capture spatio‑temporal geometry. After temporal down‑sampling, the two streams produce window‑level embeddings that are quantized against a hybrid codebook where each entry consists of a paired visual prototype and a geometric prototype. Token assignment minimizes a joint Euclidean distance, ensuring that each discrete token simultaneously encodes visual appearance and 3D structure. The decoder reconstructs the 3D pose from the skeletal part of the token, and the whole tokenizer is trained with a reconstruction loss plus modality‑specific commitment terms.

With this visually grounded motion language, the authors fine‑tune a decoder‑only large language model (LLM) to treat motion tasks as conditional sequence generation. The same model can (1) estimate 3D pose from video by converting the video into a token sequence and decoding it back to 3D joints, (2) predict future motion by autoregressively extending an observed token sequence, and (3) perform motion in‑betweening by inserting tokens between two key‑frame token sequences. No task‑specific heads or separate networks are required; the model simply receives different conditioning signals (video frames, skeleton tokens, or text prompts) and generates the appropriate token output.

Extensive experiments on Human3.6M demonstrate that Superman achieves state‑of‑the‑art or competitive results across all three tasks. For 3D pose estimation, it improves mean per‑joint position error (MPJPE) by 11.97 % over the best multi‑task perception baseline and by 10.91 % over the strongest LLM‑based baseline. For motion prediction and in‑betweening, it matches or surpasses specialized models such as MotionGPT and MotionLLM across multiple temporal horizons (80 ms, 160 ms, 320 ms). Importantly, an ablation where the visual component of the tokenizer is removed leads to a sharp performance drop, confirming that the cross‑modal tokenization is the key driver of the gains. Moreover, when trained only on Human3.6M, Superman generalizes well to the out‑of‑domain 3DPW dataset, indicating that the hybrid codebook captures visual diversity robustly.

The paper also discusses limitations. The VSA module relies on accurate 2D joint detections; errors in detection can propagate to token quality. The size of the hybrid codebook and the temporal window length affect both accuracy and computational cost, which may hinder real‑time deployment. Finally, the current evaluation focuses on single‑person actions; extending the approach to multi‑person interactions or human‑object manipulation remains an open challenge. Future work could explore detection‑free visual encoders, dynamic codebook updates, and tighter integration of textual prompts for richer multimodal reasoning.

In summary, Superman presents a compelling unified architecture that dissolves the traditional “read‑only vs. write‑only” divide in human motion analysis. By grounding motion tokens in both visual and skeletal modalities and leveraging a single multimodal LLM, it achieves strong performance across perception and generation tasks while maintaining a compact, scalable design. The work opens promising avenues for more integrated, multimodal human‑centric AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment