Uncertainty-Aware Image Classification In Biomedical Imaging Using Spectral-normalized Neural Gaussian Processes

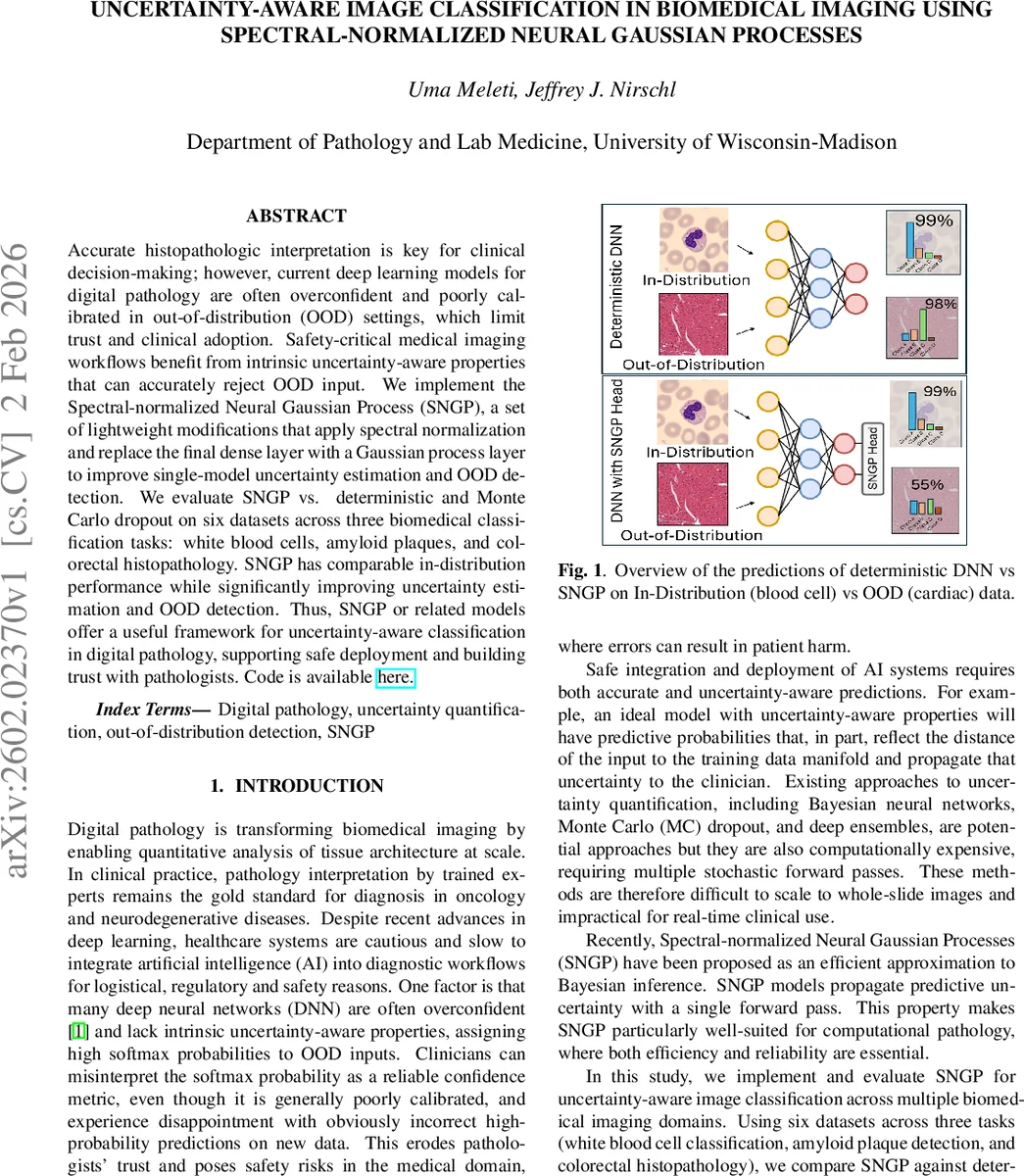

Accurate histopathologic interpretation is key for clinical decision-making; however, current deep learning models for digital pathology are often overconfident and poorly calibrated in out-of-distribution (OOD) settings, which limits trust and clinical adoption. Safety-critical medical imaging workflows benefit from intrinsic uncertainty-aware properties that can accurately reject OOD inputs. We implement the Spectral-normalized Neural Gaussian Process (SNGP), a set of lightweight modifications that apply spectral normalization and replace the final dense layer with a Gaussian process layer to improve single-model uncertainty estimation and OOD detection. We compare SNGP against deterministic and Monte Carlo dropout baselines on six datasets across three biomedical classification tasks: white blood cells, amyloid plaques, and colorectal histopathology. SNGP has comparable in-distribution performance while significantly improving uncertainty estimation and OOD detection. Thus, SNGP or related models offer a useful framework for uncertainty-aware classification in digital pathology, supporting safe deployment and building trust with pathologists.

💡 Research Summary

This paper addresses a critical barrier to the clinical deployment of deep learning in digital pathology: the lack of reliable uncertainty estimates and poor out‑of‑distribution (OOD) detection. The authors implement Spectral‑Normalized Neural Gaussian Processes (SNGP), a lightweight modification that adds spectral normalization to all hidden layers and replaces the final dense classifier with a Gaussian Process (GP) layer approximated via Random Fourier Features (RFF). Spectral normalization constrains the Lipschitz constant of the network, stabilizing feature representations and making the model “distance‑aware.” The GP layer provides a closed‑form posterior mean and variance, allowing uncertainty to increase naturally as inputs drift away from the training manifold, all in a single forward pass.
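The two ingredients described above can be illustrated with a minimal, dependency-free sketch. It is not the authors' PyTorch implementation: the function names, the norm bound of 1.0, the feature count, and the length scale are all assumptions chosen for illustration. The first part estimates a weight matrix's largest singular value by power iteration and rescales the matrix only when that value exceeds the bound; the second builds a Random Fourier Feature map whose inner products approximate an RBF kernel, so similarity decays as two inputs move apart.

```python
import math
import random


def spectral_norm(W, n_iter=50):
    """Estimate the largest singular value of W (a list of rows) by power iteration."""
    rows, cols = len(W), len(W[0])
    v = [1.0 / math.sqrt(cols)] * cols
    sigma = 0.0
    for _ in range(n_iter):
        u = [sum(W[i][j] * v[j] for j in range(cols)) for i in range(rows)]  # u = W v
        sigma = math.sqrt(sum(x * x for x in u))
        if sigma == 0.0:
            return 0.0
        u = [x / sigma for x in u]
        v = [sum(W[i][j] * u[i] for i in range(rows)) for j in range(cols)]  # v = W^T u
        norm_v = math.sqrt(sum(x * x for x in v))
        if norm_v == 0.0:
            return 0.0
        v = [x / norm_v for x in v]
    return sigma


def spectral_normalize(W, bound=1.0):
    """Rescale W so its spectral norm is at most `bound` (illustrative value)."""
    sigma = spectral_norm(W)
    scale = min(1.0, bound / sigma) if sigma > 0.0 else 1.0
    return [[w * scale for w in row] for row in W]


def make_rff(in_dim, n_features, length_scale, seed=0):
    """Return phi(x) such that phi(x) . phi(y) approximates an RBF kernel."""
    rng = random.Random(seed)
    omega = [[rng.gauss(0.0, 1.0 / length_scale) for _ in range(in_dim)]
             for _ in range(n_features)]
    phase = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(n_features)]
    amp = math.sqrt(2.0 / n_features)

    def phi(x):
        return [amp * math.cos(sum(w_k * x_k for w_k, x_k in zip(w, x)) + b)
                for w, b in zip(omega, phase)]

    return phi


def approx_kernel(phi, x, y):
    """Inner product of the random features of x and y."""
    return sum(a * b for a, b in zip(phi(x), phi(y)))


W_hat = spectral_normalize([[3.0, 0.0], [0.0, 1.0]], bound=1.0)
phi = make_rff(in_dim=2, n_features=2000, length_scale=1.0)
k_near = approx_kernel(phi, [0.0, 0.0], [0.1, 0.0])  # nearby pair, similarity near 1
k_far = approx_kernel(phi, [0.0, 0.0], [5.0, 0.0])   # distant pair, similarity near 0
```

In this toy setting, a test point far from every training point has little kernel similarity to any of them, which is the mechanism that lets a GP output layer report larger predictive variance away from the training data.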

Experiments span three biomedical imaging tasks—white blood cell classification, amyloid plaque detection, and colorectal cancer histopathology—each represented by two distinct datasets collected under different staining, scanner, and laboratory conditions. In total, six publicly available datasets from the MicroBench benchmark are used. All models share a ResNet‑18 backbone, are trained from scratch with AdamW (initial LR = 1e‑3) and a multi‑step scheduler, and undergo identical data preprocessing (RGB zero‑mean/unit‑variance, 224 × 224 resizing). Baseline deterministic models, Monte‑Carlo (MC) dropout (10 stochastic forward passes at test time), and SNGP are compared under the same training regime.
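The MC dropout baseline mentioned above averages class probabilities over several stochastic forward passes. The sketch below shows that averaging step only; the toy linear "model" with input dropout, its weights, and the dropout rate are hypothetical stand-ins, not the ResNet-18 used in the study.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def mc_dropout_predict(stochastic_forward, x, n_passes=10):
    """Average class probabilities over n_passes stochastic forward passes."""
    probs = [softmax(stochastic_forward(x)) for _ in range(n_passes)]
    n_classes = len(probs[0])
    return [sum(p[c] for p in probs) / n_passes for c in range(n_classes)]


def make_toy_dropout_model(weights, drop_rate=0.5, seed=0):
    """Hypothetical stand-in for a network whose dropout stays active at test time."""
    rng = random.Random(seed)
    keep = 1.0 - drop_rate

    def forward(x):
        # Inverted dropout: zero each feature with probability drop_rate,
        # and rescale the survivors by 1 / keep.
        masked = [xi / keep if rng.random() < keep else 0.0 for xi in x]
        return [sum(w * m for w, m in zip(row, masked)) for row in weights]

    return forward


model = make_toy_dropout_model([[1.0, -0.5, 0.2], [-0.3, 0.8, 0.1]])
mean_probs = mc_dropout_predict(model, [0.5, 1.0, -0.2], n_passes=10)
```

Because every prediction requires `n_passes` forward passes, the cost of this baseline grows roughly linearly with the number of passes, whereas a single-pass method evaluates the network once.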

Evaluation metrics include in‑distribution (ID) accuracy, F1 score, Expected Calibration Error (ECE), Brier score, and OOD detection AUROC based on maximum softmax probability (MSP) or entropy. Results show that SNGP matches the ID accuracy of the baselines (≈0.98) while achieving the lowest ECE (≈0.003), indicating superior calibration. More strikingly, SNGP attains near‑perfect OOD AUROC values (0.97–1.00) across all external datasets, whereas deterministic and MC‑dropout models linger around 0.6–0.7 AUROC, reflecting over‑confidence on OOD inputs. Inference latency for SNGP (≈0.21–0.25 ms) is comparable to the deterministic baseline and dramatically lower than MC‑dropout (≈1.4–1.5 ms), confirming its suitability for real‑time clinical workflows.
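As a rough illustration of how these metrics are computed, the sketch below implements ECE with equal-width confidence bins, the multi-class Brier score, predictive entropy, and a pairwise AUROC. The bin count of 10 and the toy probabilities are assumptions for illustration; the paper's exact evaluation settings are not given in this summary.

```python
import math


def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted mean |confidence - accuracy| over equal-width confidence bins."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(ok for _, ok in members) / len(members)
        ece += len(members) / n * abs(avg_conf - accuracy)
    return ece


def brier_score(probs, labels):
    """Mean squared error between predicted probabilities and one-hot labels."""
    total = 0.0
    for p, y in zip(probs, labels):
        total += sum((p_c - (1.0 if c == y else 0.0)) ** 2 for c, p_c in enumerate(p))
    return total / len(probs)


def predictive_entropy(p):
    """Shannon entropy (in nats) of a probability vector."""
    return -sum(q * math.log(q) for q in p if q > 0.0)


def auroc(ood_scores, id_scores):
    """Probability that a random OOD sample scores above a random ID sample.

    Ties count as half. Higher score means "more likely OOD".
    """
    wins = 0.0
    for s_ood in ood_scores:
        for s_id in id_scores:
            if s_ood > s_id:
                wins += 1.0
            elif s_ood == s_id:
                wins += 0.5
    return wins / (len(ood_scores) * len(id_scores))


# Toy probabilities (made up): confident on ID inputs, uncertain on OOD inputs.
id_probs = [[0.95, 0.05], [0.90, 0.10], [0.85, 0.15]]
ood_probs = [[0.60, 0.40], [0.55, 0.45], [0.50, 0.50]]

# Two OOD scores: one minus the maximum softmax probability, and entropy.
auroc_msp = auroc([1.0 - max(p) for p in ood_probs], [1.0 - max(p) for p in id_probs])
auroc_entropy = auroc([predictive_entropy(p) for p in ood_probs],
                      [predictive_entropy(p) for p in id_probs])
```

An overconfident model would assign OOD inputs probabilities as peaked as those of ID inputs, which pushes both AUROC values toward 0.5.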

A notable exception occurs when the OOD dataset closely resembles the training set (e.g., two amyloid plaque collections with a low Fréchet Inception Distance): there, AUROC drops to chance level (about 0.5), highlighting the intrinsic difficulty of separating semantically identical classes across domains. The authors discuss this limitation and suggest future work on domain adaptation and more expressive kernel designs.
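For reference, the standard definition of the Fréchet Inception Distance between two image sets is given below; whether the authors use exactly this form and feature extractor is an assumption, since the summary does not say. Here $\mu_1, \Sigma_1$ and $\mu_2, \Sigma_2$ are the mean and covariance of the Inception feature vectors of each set.

$$
\mathrm{FID} = \lVert \mu_1 - \mu_2 \rVert_2^2 + \operatorname{Tr}\!\left(\Sigma_1 + \Sigma_2 - 2\,(\Sigma_1 \Sigma_2)^{1/2}\right)
$$

A low value means the two feature distributions nearly coincide, so a distance-based uncertainty estimate has little signal with which to separate the two datasets.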

The paper contributes an open‑source PyTorch implementation of SNGP for pathology, a systematic benchmark across diverse tasks, and a clear demonstration that single‑pass uncertainty‑aware models can provide calibrated predictions and robust OOD detection without sacrificing computational efficiency. Ethical compliance is addressed by using only de‑identified public data, and the authors declare no conflicts of interest. Overall, the study positions SNGP as a practical, trustworthy alternative to Monte‑Carlo or ensemble methods for safe AI integration in digital pathology.
