Prediction-Powered Risk Monitoring of Deployed Models for Detecting Harmful Distribution Shifts

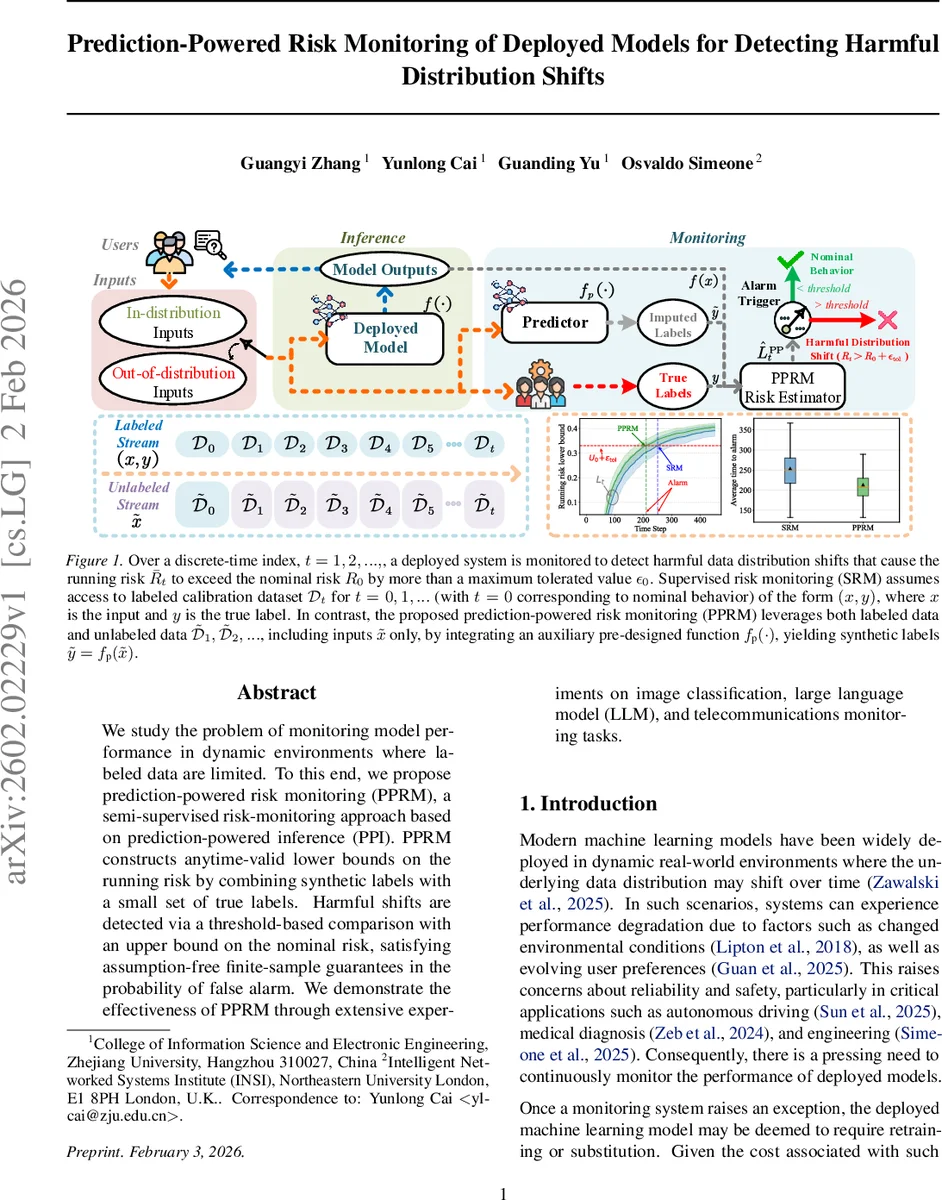

We study the problem of monitoring model performance in dynamic environments where labeled data are limited. To this end, we propose prediction-powered risk monitoring (PPRM), a semi-supervised risk-monitoring approach based on prediction-powered inference (PPI). PPRM constructs anytime-valid lower bounds on the running risk by combining synthetic labels with a small set of true labels. Harmful shifts are detected via a threshold-based comparison with an upper bound on the nominal risk, satisfying assumption-free finite-sample guarantees in the probability of false alarm. We demonstrate the effectiveness of PPRM through extensive experiments on image classification, large language model (LLM), and telecommunications monitoring tasks.

💡 Research Summary

The paper addresses the critical problem of continuously monitoring the performance of deployed machine learning models in environments where labeled data are scarce. Traditional supervised risk monitoring (SRM) relies on abundant labeled calibration data to construct confidence bounds on the source risk and on the running test risk; when the lower bound on the running risk exceeds an upper bound on the source risk plus a tolerance, an alarm is raised. However, SRM’s reliance on many true labels makes it impractical for many real‑world deployments, while purely unsupervised approaches either require strong calibration assumptions on the deployed model or need a large volume of unlabeled data before they become reliable.

To bridge this gap, the authors propose Prediction‑Powered Risk Monitoring (PPRM), a semi‑supervised framework that leverages both a small set of true labels and a large stream of unlabeled inputs. The key idea is to use an auxiliary predictor (f_p(\cdot)) (which can be a large language model, a domain expert, or even the deployed model itself) to generate synthetic labels (\tilde y) for the unlabeled inputs. Because synthetic labels are biased, PPRM corrects this bias by incorporating a small batch of true labeled samples at each time step. The risk estimator is a convex combination of the synthetic‑label risk and a bias‑correction term, controlled by a hyper‑parameter (\eta_t) that determines how much weight is given to the unlabeled data. Importantly, Lemma 3.1 proves that, as long as the sequence ({\eta_t}) is predictable (i.e., depends only on past data), the estimator remains unbiased: (\mathbb{E}

Comments & Academic Discussion

Loading comments...

Leave a Comment