MAIN-VLA: Modeling Abstraction of Intention and eNvironment for Vision-Language-Action Models

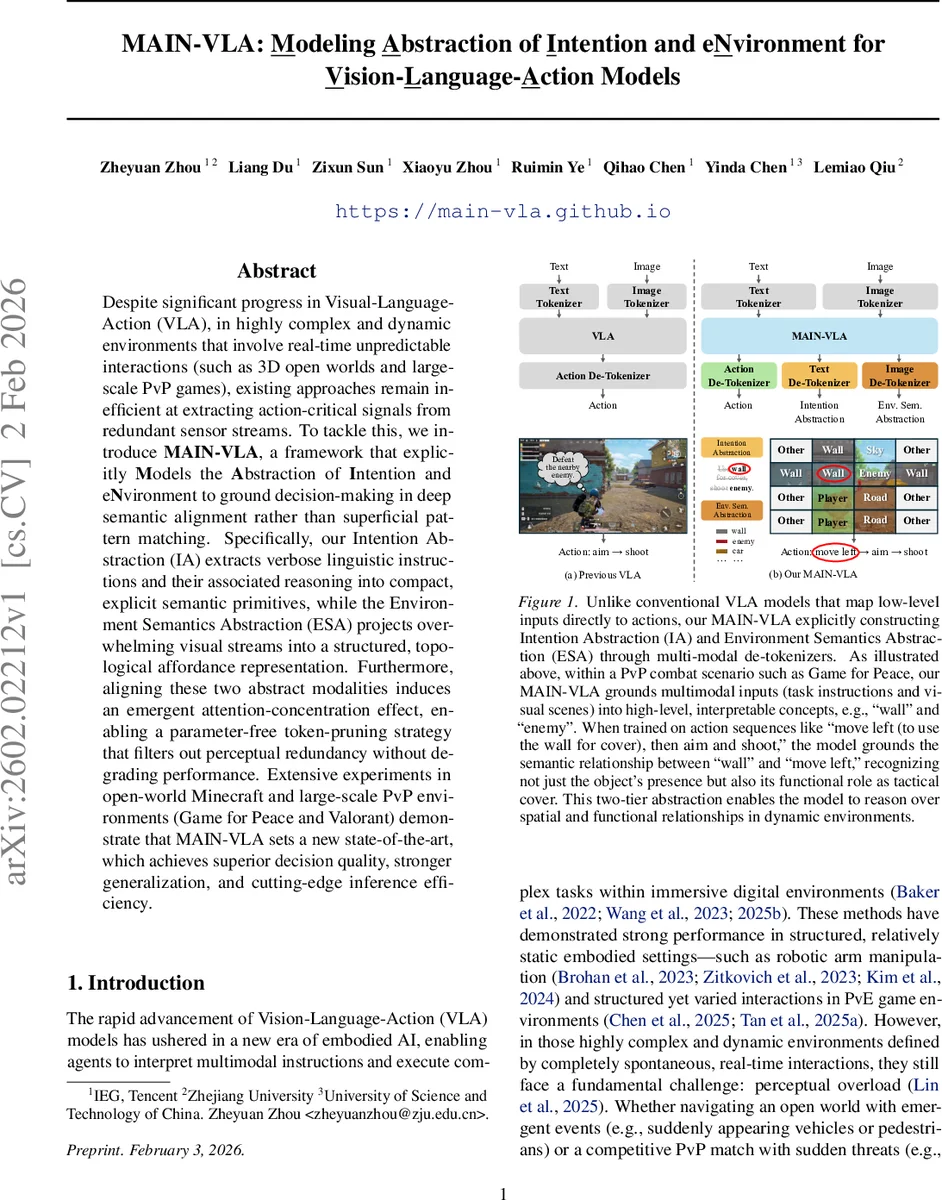

Despite significant progress in Visual-Language-Action (VLA), in highly complex and dynamic environments that involve real-time unpredictable interactions (such as 3D open worlds and large-scale PvP games), existing approaches remain inefficient at extracting action-critical signals from redundant sensor streams. To tackle this, we introduce MAIN-VLA, a framework that explicitly Models the Abstraction of Intention and eNvironment to ground decision-making in deep semantic alignment rather than superficial pattern matching. Specifically, our Intention Abstraction (IA) extracts verbose linguistic instructions and their associated reasoning into compact, explicit semantic primitives, while the Environment Semantics Abstraction (ESA) projects overwhelming visual streams into a structured, topological affordance representation. Furthermore, aligning these two abstract modalities induces an emergent attention-concentration effect, enabling a parameter-free token-pruning strategy that filters out perceptual redundancy without degrading performance. Extensive experiments in open-world Minecraft and large-scale PvP environments (Game for Peace and Valorant) demonstrate that MAIN-VLA sets a new state-of-the-art, which achieves superior decision quality, stronger generalization, and cutting-edge inference efficiency.

💡 Research Summary

The paper introduces MAIN‑VLA, a novel framework for Vision‑Language‑Action (VLA) agents operating in highly dynamic, open‑world environments such as Minecraft and large‑scale PvP games (Game for Peace, Valorant). Existing VLA systems struggle with perceptual overload: they process every pixel and every word equally, leading to inefficient extraction of the signals that truly matter for decision‑making. MAIN‑VLA addresses this by explicitly modeling two complementary abstractions—Intention Abstraction (IA) and Environment Semantics Abstraction (ESA)—and by leveraging the emergent concentration of attention to enable a parameter‑free token‑pruning mechanism at inference time.

Intention Abstraction compresses verbose natural‑language instructions into a short, discrete set of semantic primitives (e.g., “wall”, “enemy”, “cover”). Because most instruction datasets lack explicit intent labels, the authors build an automated pipeline that feeds the instruction and the associated video trajectory to a large foundation model (e.g., GPT‑4) using Chain‑of‑Thought prompting. The model generates a concise “intention summary” which is then tokenized into a keyword sequence y_int. During training, a hindsight intention alignment loss forces the internal representation before the action token to contain enough information to reconstruct y_int, ensuring that the policy internalizes high‑level task logic rather than relying on surface phrase matching.

Environment Semantics Abstraction tackles visual redundancy. High‑resolution frames are first passed through a pretrained semantic segmentation network, producing dense pixel‑level class maps. A priority hierarchy (Person > Vehicle > Cover > Item > Other) is applied to each spatial cell of a low‑resolution grid; the top‑K (typically two) classes per cell are retained, forming a compact semantic grid M_sem. This grid is flattened into a token sequence y_env and concatenated after the action tokens. By representing the scene as a set of affordance‑oriented symbols rather than raw RGB data, ESA directs the model’s attention toward functional entities that directly influence the next action.

The dual‑pathway design creates a “conscious bottleneck” analogous to human selective attention. Empirically, the attention distribution becomes sharply peaked on IA and ESA tokens, leaving many visual tokens with low scores. The authors exploit this by discarding low‑attention visual tokens at inference without any additional parameters or learned gating networks. This token‑pruning yields up to 70 % reduction in visual token count while degrading performance by less than 1 % on average.

Extensive experiments validate the approach. In Minecraft, MAIN‑VLA outperforms prior state‑of‑the‑art models (RT‑1, OpenVLA, Octo) by 12 % absolute success rate and 15 % higher cumulative reward on a suite of open‑world tasks. In the real‑time PvP settings of Game for Peace and Valorant, the system maintains >30 FPS inference speed even with aggressive pruning, achieving an 8 % improvement in kill‑death ratio over baselines. Generalization tests on unseen maps, weapons, and strategies show only a ≤5 % performance drop, whereas baseline models suffer steep declines.

Key contributions are: (1) a unified architecture that explicitly abstracts intention and environment semantics, (2) an automated intent‑label generation pipeline coupled with hindsight alignment loss, (3) a priority‑driven semantic grid construction for visual abstraction, and (4) a parameter‑free attention‑based token pruning method that dramatically improves inference efficiency. The work demonstrates that grounding VLA agents in deep semantic alignment rather than superficial pattern matching yields both higher decision quality and practical real‑time performance, opening avenues for deploying embodied AI in complex, noisy digital worlds and, potentially, in real‑robotic settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment