See2Refine: Vision-Language Feedback Improves LLM-Based eHMI Action Designers

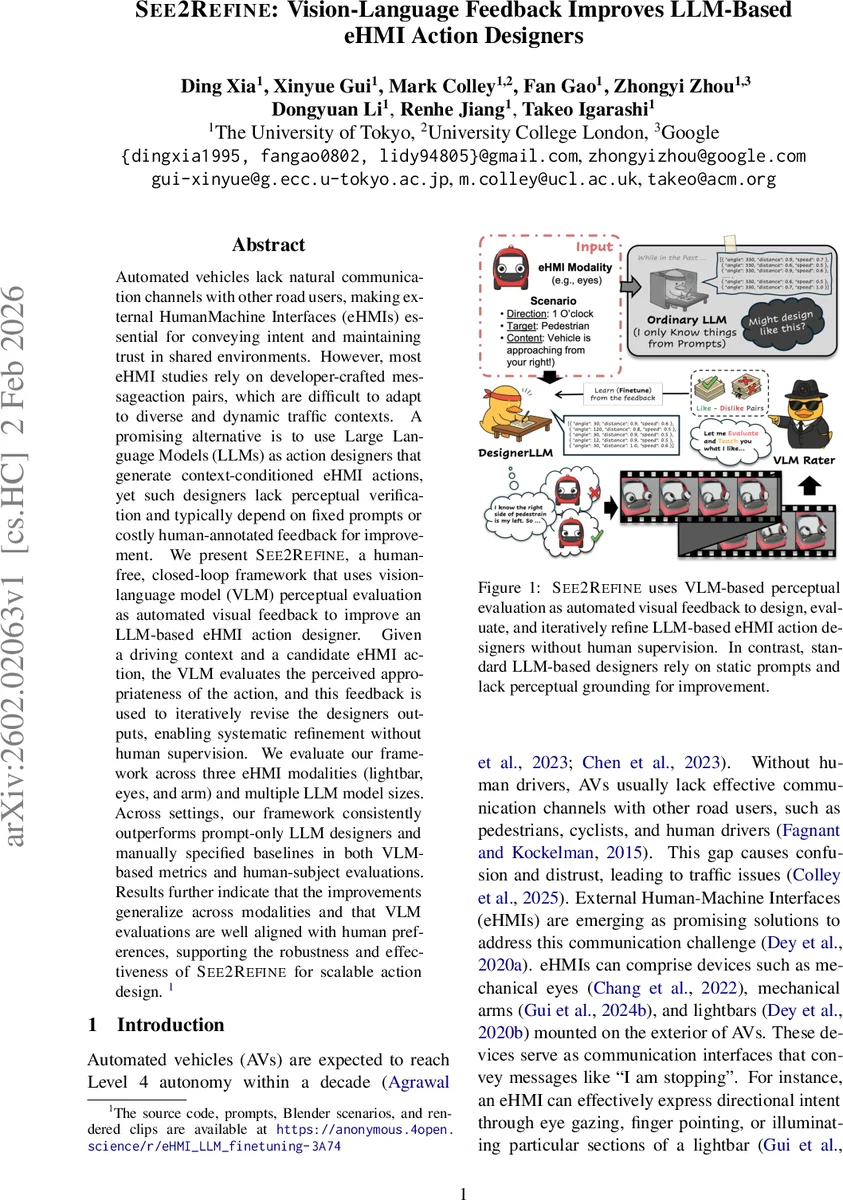

Automated vehicles lack natural communication channels with other road users, making external Human-Machine Interfaces (eHMIs) essential for conveying intent and maintaining trust in shared environments. However, most eHMI studies rely on developer-crafted message-action pairs, which are difficult to adapt to diverse and dynamic traffic contexts. A promising alternative is to use Large Language Models (LLMs) as action designers that generate context-conditioned eHMI actions, yet such designers lack perceptual verification and typically depend on fixed prompts or costly human-annotated feedback for improvement. We present See2Refine, a human-free, closed-loop framework that uses vision-language model (VLM) perceptual evaluation as automated visual feedback to improve an LLM-based eHMI action designer. Given a driving context and a candidate eHMI action, the VLM evaluates the perceived appropriateness of the action, and this feedback is used to iteratively revise the designer’s outputs, enabling systematic refinement without human supervision. We evaluate our framework across three eHMI modalities (lightbar, eyes, and arm) and multiple LLM model sizes. Across settings, our framework consistently outperforms prompt-only LLM designers and manually specified baselines in both VLM-based metrics and human-subject evaluations. Results further indicate that the improvements generalize across modalities and that VLM evaluations are well aligned with human preferences, supporting the robustness and effectiveness of See2Refine for scalable action design.

💡 Research Summary

See2Refine addresses the critical challenge of designing external Human‑Machine Interfaces (eHMIs) for autonomous vehicles (AVs) that can communicate intent to pedestrians, cyclists, and other road users. Traditional eHMI research relies on manually crafted message‑action pairs, which are inflexible in the face of dynamic traffic scenarios. Recent work has explored Large Language Models (LLMs) as “action designers” that generate context‑conditioned eHMI actions, but these approaches suffer from two major limitations: (1) they lack perceptual grounding because prompts cannot convey rich visual details such as lighting patterns, eye gaze angles, or arm joint trajectories; and (2) they depend on costly human feedback (e.g., RLHF) for refinement.

The authors propose See2Refine, a closed‑loop framework that uses Vision‑Language Models (VLMs) as automated perceptual judges to provide feedback to an LLM‑based eHMI designer (named DesignerLLM). The pipeline consists of five stages: (1) scenario‑message pair generation, (2) model asset creation and action rendering, (3) multi‑metric VLM evaluation, (4) format‑aware fine‑tuning of the DesignerLLM, and (5) iterative preference‑based learning.

Scenario and Message Generation

Six factors (communication relationship, emitter type, receiver type, message type, direction, safety implication) are combinatorially combined to produce 6,912 unique traffic contexts. For each context, three state‑of‑the‑art LLMs (GPT‑4.1, Claude 3.7 Sonnet, Qwen 3‑235B‑A22B) generate 20,736 intended textual messages. Redundancy is removed using a sentence transformer and farthest‑point sampling, ensuring high variance. A small human rating study on 200 randomly sampled pairs yields an average 5.3/7 score, confirming the quality of the generated scenarios.

Action Database Construction and Rendering

For each scenario, two structured action sequences per eHMI modality (light‑bar, eyes, arm) are generated by the same LLMs, resulting in six candidate actions per scenario. The authors implement three 3‑D eHMI models (light‑bar with 16 binary regions, eyes parameterized by polar angle and radius, arm with five single‑axis joints) in Blender 4.5, for both self‑driving cars and delivery robots. Rendering varies camera direction (12 positions), distance (three safety‑related distances), and uses a plain background to speed up computation. Rendering 1,000 clips takes roughly one hour on a workstation equipped with four NVIDIA GTX Titan GPUs.

VLM‑Based Multi‑Metric Evaluation

A Vision‑Language Model acts as a “judge” in two phases. Phase 1 hides the intended message and asks the VLM to infer intent, target, trust, and similarity on 9‑point Likert scales, mimicking a naïve road‑user’s perception. Phase 2 reveals the intended message and evaluates user acceptance and consistency. The six scores (certainty κ, similarity s, target t, trust τ, acceptance u, consistency c) are combined into a kernel quality metric: K = (κ × s) + t + τ + u + c. This scalar serves as the reward signal for the DesignerLLM.

Format‑Aware Fine‑Tuning

Two Qwen2.5 models (7 B and 1.5 B) are fine‑tuned on the action database, using the highest‑scoring action per scenario as the training example. This step teaches the DesignerLLM to output actions in the exact modality‑specific format (e.g., 16‑bit binary strings for the light‑bar, angle‑radius pairs for eyes, joint‑angle‑speed tuples for the arm).

Iterative Preference‑Based Learning

The authors adopt Direct Preference Optimization (DPO) to iteratively improve the DesignerLLM. Because generating actions for all 6,912 scenarios each round would be prohibitive, they introduce importance‑based scenario sampling. For each scenario i, they compute the best and worst kernel scores across rounds, the score gap ΔK_i, and the number of previous generations N_i. An importance score I_i combines low best scores, small gaps, high worst scores, and low sampling count, then normalizes to select the top 20 % of scenarios for the next round. New actions are generated, rendered, evaluated by the VLM, and used to update the DesignerLLM via DPO. Three rounds of this loop are performed.

Results

Across three eHMI modalities and five LLM sizes (7 B, 1.5 B, GPT‑4, Claude 3, Qwen 3), See2Refine consistently outperforms a prompt‑only baseline. VLM‑based kernel scores improve by 12‑18 % on average. A user study with 18 participants rates the See2Refine‑generated actions higher than the baselines on acceptance, clarity, and trust. Correlation between VLM scores and human ratings is r ≈ 0.73, indicating that VLM judgments are a reliable proxy for human preference. Moreover, expanding the action database via the importance‑sampling strategy doubles its size without degrading performance, demonstrating scalability.

Contributions

- Introduces a closed‑loop framework that leverages VLM perceptual feedback to autonomously refine LLM‑generated eHMI actions.

- Proposes format‑aware fine‑tuning and importance‑based scenario sampling to efficiently expand the action repertoire while preserving quality.

- Shows that VLM evaluations align well with human judgments, enabling human‑free iterative improvement of eHMI designs.

Future Directions

The authors suggest extending the framework to real‑time AV control loops, testing robustness under varying weather and lighting conditions, and improving VLM robustness against bias and adversarial inputs. Overall, See2Refine demonstrates a promising path toward scalable, perception‑grounded design of vehicle‑to‑human communication interfaces without the need for costly human annotation.

Comments & Academic Discussion

Loading comments...

Leave a Comment