FiLoRA: Focus-and-Ignore LoRA for Controllable Feature Reliance

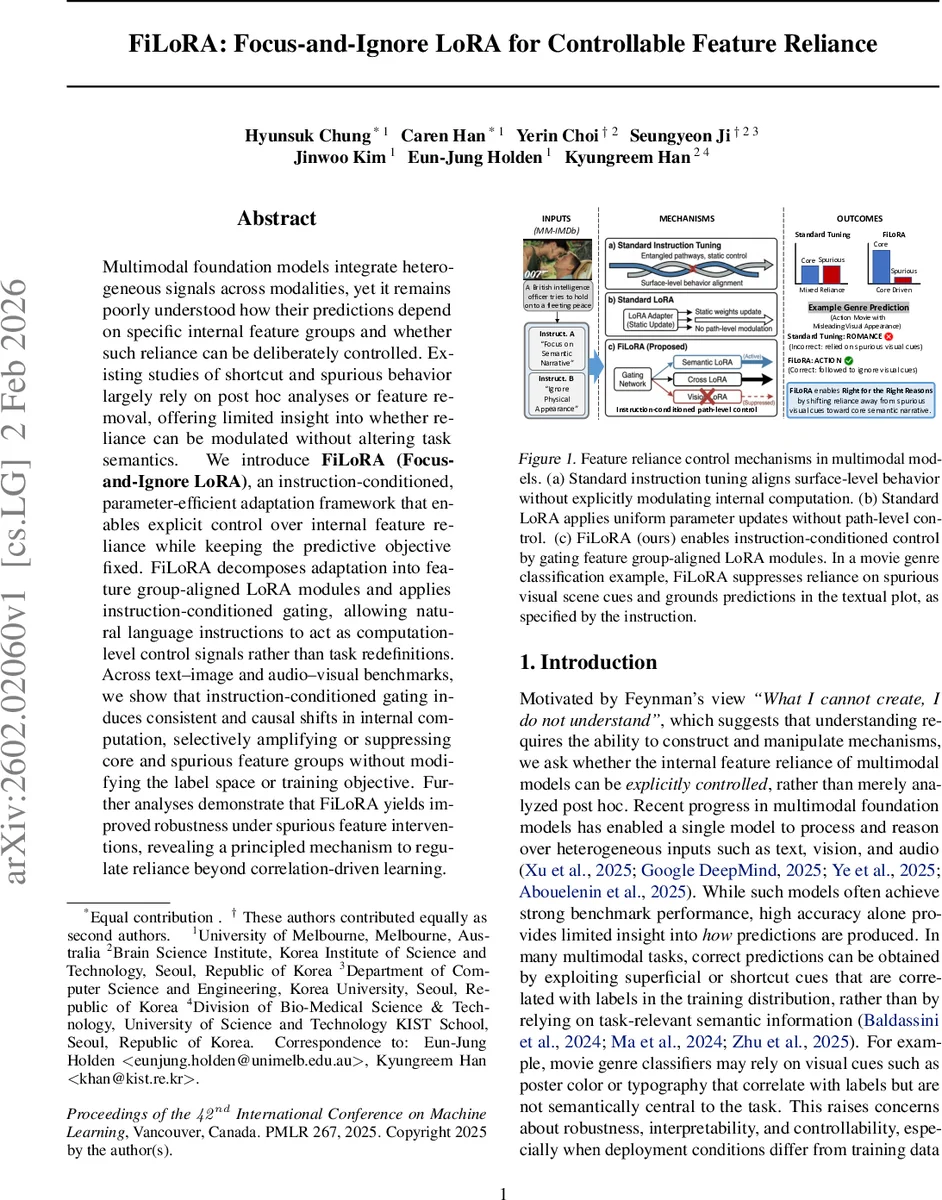

Multimodal foundation models integrate heterogeneous signals across modalities, yet it remains poorly understood how their predictions depend on specific internal feature groups and whether such reliance can be deliberately controlled. Existing studies of shortcut and spurious behavior largely rely on post hoc analyses or feature removal, offering limited insight into whether reliance can be modulated without altering task semantics. We introduce FiLoRA (Focus-and-Ignore LoRA), an instruction-conditioned, parameter-efficient adaptation framework that enables explicit control over internal feature reliance while keeping the predictive objective fixed. FiLoRA decomposes adaptation into feature group-aligned LoRA modules and applies instruction-conditioned gating, allowing natural language instructions to act as computation-level control signals rather than task redefinitions. Across text–image and audio–visual benchmarks, we show that instruction-conditioned gating induces consistent and causal shifts in internal computation, selectively amplifying or suppressing core and spurious feature groups without modifying the label space or training objective. Further analyses demonstrate that FiLoRA yields improved robustness under spurious feature interventions, revealing a principled mechanism to regulate reliance beyond correlation-driven learning.

💡 Research Summary

FiLoRA (Focus‑and‑Ignore LoRA) introduces a novel, parameter‑efficient method for actively controlling the internal feature reliance of multimodal foundation models using natural‑language instructions, without altering the underlying task or label space. The authors begin by highlighting a gap in existing research: while many studies identify shortcut or spurious feature usage through post‑hoc analyses, they provide no mechanism to intervene and reshape these dependencies during training or inference.

To address this, FiLoRA builds on the LoRA (Low‑Rank Adaptation) paradigm but extends it in two critical ways. First, LoRA updates are decomposed into semantically meaningful groups aligned with functional computation pathways—such as text semantics, visual appearance encoding, cross‑modal fusion, and acoustic feature extraction. Each group g receives its own low‑rank matrices (ΔW_g = B_g A_g^T), allowing the model to adapt distinct pathways independently while keeping the base model frozen.
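The group-wise decomposition above can be illustrated with a small NumPy sketch. It is not the authors' implementation: the group names, the matrix sizes, the zero initialisation of B_g, and the choice of one frozen weight per pathway are all assumptions made for illustration. Only the form ΔW_g = B_g A_g^T and the frozen base model come from the summary.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes and hypothetical group names (not taken from the paper).
d_out, d_in, rank = 8, 6, 2
groups = ["text", "visual", "fusion"]

# Assumption: each group owns one frozen base weight on its own pathway.
W0 = {g: rng.normal(size=(d_out, d_in)) for g in groups}

# One pair of low-rank factors per group. B_g starts at zero (a common LoRA
# initialisation, assumed here), so every update is zero before training.
A = {g: rng.normal(size=(d_in, rank)) for g in groups}
B = {g: np.zeros((d_out, rank)) for g in groups}

def delta_w(g):
    """Low-rank update for group g: Delta W_g = B_g A_g^T."""
    return B[g] @ A[g].T

def adapted_weight(g):
    """Frozen base weight plus the update of group g; W0 itself is never modified."""
    return W0[g] + delta_w(g)

# Simulate a training step that changes only the "visual" group's factors.
B["visual"] = rng.normal(size=(d_out, rank))
```

After the simulated step, `adapted_weight("text")` still equals `W0["text"]`, while `delta_w("visual")` is a `d_out × d_in` matrix of rank at most `rank`. That independence between groups is what lets each pathway be adapted separately.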

Second, the framework introduces instruction‑conditioned gating. An instruction (I_i) is encoded by a lightweight language encoder (h_φ) and projected through a sigmoid to produce a gate vector (g(I_i) ∈
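The gating step described in this paragraph can be sketched as follows. Because the summary is cut off before the gate's dimensionality is stated, the sketch assumes one scalar gate per feature group. The projection `P`, the bias `b`, the group names, and the random vector standing in for the encoder output h_φ(I_i) are likewise hypothetical.

```python
import numpy as np

groups = ["text", "visual", "fusion"]   # hypothetical group names
embed_dim = 4                            # illustrative embedding size

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gate_vector(h, P, b):
    """Map an instruction embedding h to one gate in (0, 1) per group (assumed shape)."""
    return sigmoid(P @ h + b)

def gated_updates(deltas, gate):
    """Scale each group's low-rank update by that group's gate value."""
    return {g: gate[k] * deltas[g] for k, g in enumerate(groups)}

rng = np.random.default_rng(0)
h = rng.normal(size=embed_dim)                  # stand-in for h_phi(I_i)
P = rng.normal(size=(len(groups), embed_dim))   # hypothetical projection matrix
b = np.zeros(len(groups))                       # hypothetical bias
deltas = {g: rng.normal(size=(3, 3)) for g in groups}  # stand-ins for Delta W_g

gate = gate_vector(h, P, b)
scaled = gated_updates(deltas, gate)
```

Under these assumptions, a gate near 0 suppresses a group's update ("ignore") and a gate near 1 passes it almost unchanged ("focus"), while the base weights and the label space stay untouched.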
