Reformulating AI-based Multi-Object Relative State Estimation for Aleatoric Uncertainty-based Outlier Rejection of Partial Measurements

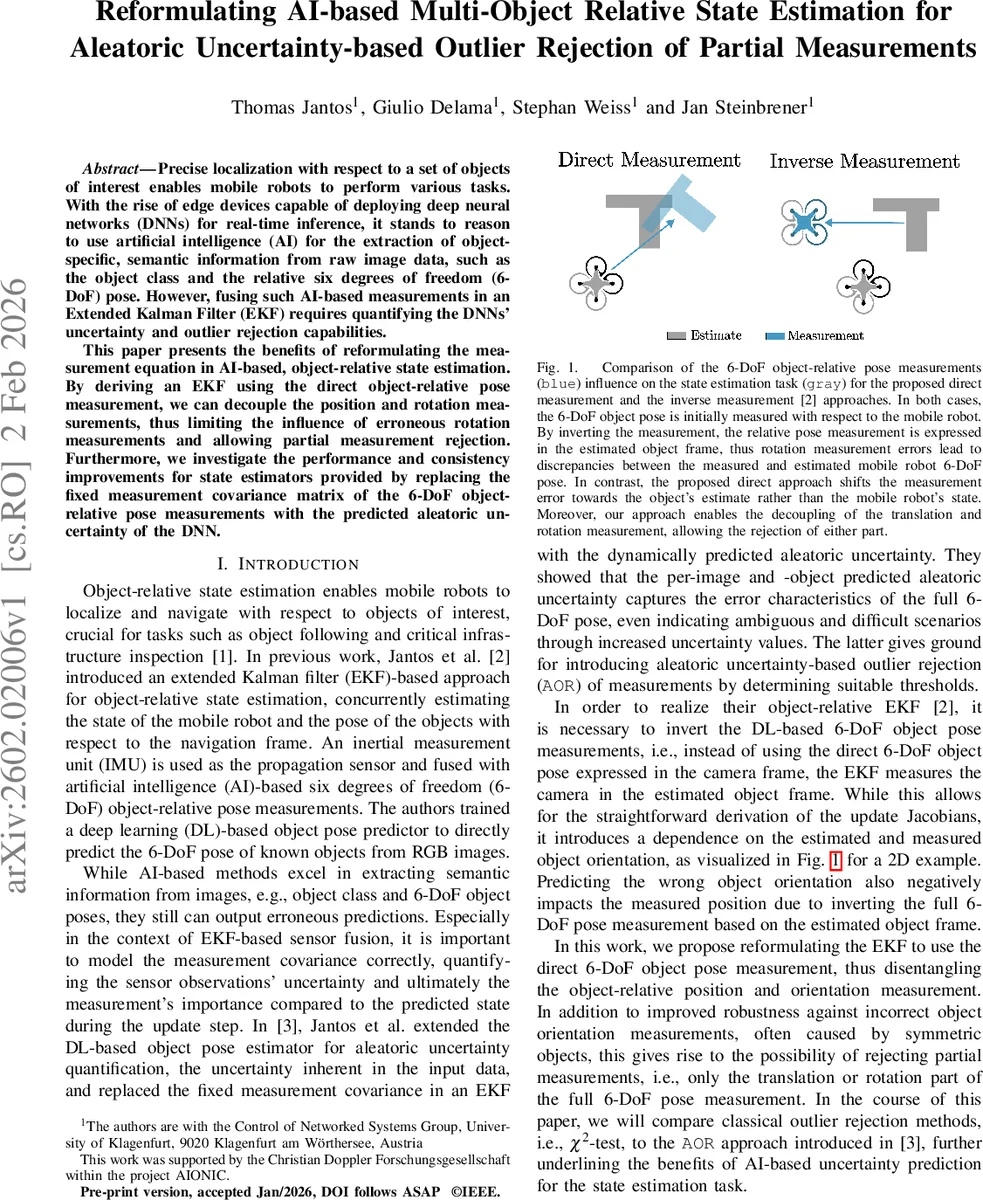

Precise localization with respect to a set of objects of interest enables mobile robots to perform various tasks. With the rise of edge devices capable of deploying deep neural networks (DNNs) for real-time inference, it stands to reason to use artificial intelligence (AI) for the extraction of object-specific, semantic information from raw image data, such as the object class and the relative six degrees of freedom (6-DoF) pose. However, fusing such AI-based measurements in an Extended Kalman Filter (EKF) requires quantifying the DNNs’ uncertainty and outlier rejection capabilities. This paper presents the benefits of reformulating the measurement equation in AI-based, object-relative state estimation. By deriving an EKF using the direct object-relative pose measurement, we can decouple the position and rotation measurements, thus limiting the influence of erroneous rotation measurements and allowing partial measurement rejection. Furthermore, we investigate the performance and consistency improvements for state estimators provided by replacing the fixed measurement covariance matrix of the 6-DoF object-relative pose measurements with the predicted aleatoric uncertainty of the DNN.

💡 Research Summary

This paper addresses the problem of fusing deep‑neural‑network (DNN) based 6‑DoF object‑relative pose measurements with inertial data in an Extended Kalman Filter (EKF) for mobile robots. Prior work (Jantos et al., 2022) used an “inverse” measurement formulation: the object pose predicted by the DNN in the camera frame was inverted so that the EKF could process it as the camera pose in the object frame. While mathematically convenient, this inversion couples the rotation measurement to the position update: any error in the measured object orientation contaminates the position residual. This is especially problematic for symmetric objects or whenever the DNN predicts a poor rotation.
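The coupling can be made explicit with the standard inverse of a rigid transform. The notation below ($R_{CO}$ and $p_{CO}$ for the measured rotation and translation of the object in the camera frame) is assumed for illustration and may differ from the paper's symbols:

$$
T_{CO} = \begin{bmatrix} R_{CO} & p_{CO} \\ 0 & 1 \end{bmatrix}, \qquad
T_{CO}^{-1} = \begin{bmatrix} R_{CO}^{\top} & -R_{CO}^{\top}\, p_{CO} \\ 0 & 1 \end{bmatrix}
$$

The translation of the inverted measurement, $-R_{CO}^{\top} p_{CO}$, is a product of the measured rotation and the measured translation, so an error in $R_{CO}$ shifts the position the filter sees even when $p_{CO}$ itself is accurate.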

The authors propose a reformulated EKF that directly consumes the pose measurement as output by the DNN (camera‑to‑object transform). By keeping the measurement in its native camera frame, the residual can be split into a pure translational part $\tilde{z}_p$ and a pure rotational part $\tilde{z}_R$. Consequently, rotation errors no longer propagate into the translational update, and the filter can optionally reject one component while keeping the other.
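A minimal sketch of such a split residual, assuming the measured and predicted poses are given as a position vector plus a rotation matrix. The function names and the choice of the rotation-vector (log map) error are illustrative assumptions, not the paper's exact definitions:

```python
import numpy as np

def rotation_log(R):
    """Rotation matrix -> rotation vector (axis * angle).
    Sketch only: not numerically robust for angles close to pi."""
    cos_angle = np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0)
    angle = np.arccos(cos_angle)
    if angle < 1e-9:
        return np.zeros(3)
    axis = np.array([R[2, 1] - R[1, 2],
                     R[0, 2] - R[2, 0],
                     R[1, 0] - R[0, 1]]) / (2.0 * np.sin(angle))
    return angle * axis

def split_residual(p_meas, R_meas, p_pred, R_pred):
    """Return the translational and rotational residuals separately.
    The translational part never touches the measured rotation."""
    res_p = np.asarray(p_meas) - np.asarray(p_pred)
    res_R = rotation_log(R_pred.T @ R_meas)
    return res_p, res_R

# Example: prediction is the identity rotation, measurement is rotated
# by 0.1 rad about z and offset by 0.5 m along z.
theta = 0.1
R_meas = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0,            0.0,           1.0]])
res_p, res_R = split_residual([1.0, 2.0, 3.0], R_meas,
                              [1.0, 2.0, 2.5], np.eye(3))
```

Because `res_p` is computed from positions alone, a bad `R_meas` changes only `res_R`.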

A second major contribution is the integration of aleatoric uncertainty predicted by the DNN into the EKF as a dynamic measurement noise covariance Σ. The DNN is trained to output per‑image variances for each translation axis ($\hat{\sigma}^2_x$, $\hat{\sigma}^2_y$, $\hat{\sigma}^2_z$) and for each rotation axis ($\hat{\sigma}^2_{\theta_1}$, $\hat{\sigma}^2_{\theta_2}$, $\hat{\sigma}^2_{\theta_3}$). These variances are assembled into block‑diagonal covariance matrices (Eqs. 34‑36) and rotated into the world frame (Eq. 37). The learned covariances replace the hand‑tuned fixed Σ, which is often either too small (overconfident, so that good measurements fail the outlier gate) or too large (so that outliers pass it and every measurement is under‑weighted).
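The assembly step might look like the sketch below. It assumes the network returns three translational and three rotational variances per image, and that rotating a covariance into another frame takes the usual form R Σ Rᵀ; the function names are hypothetical and the paper's Eqs. 34‑37 may differ in detail:

```python
import numpy as np

def measurement_covariance(var_p, var_theta):
    """Build a 6x6 block-diagonal covariance from per-axis variances:
    translation block top-left, rotation block bottom-right."""
    Sigma = np.zeros((6, 6))
    Sigma[:3, :3] = np.diag(var_p)
    Sigma[3:, 3:] = np.diag(var_theta)
    return Sigma

def rotate_covariance(Sigma_block, R):
    """Express a 3x3 covariance block in a frame rotated by R."""
    return R @ Sigma_block @ R.T

# Example with made-up variances (m^2 and rad^2).
Sigma = measurement_covariance([0.01, 0.04, 0.09], [0.001, 0.002, 0.003])

# A 90 degree rotation about z swaps the x and y variances.
R_z90 = np.array([[0.0, -1.0, 0.0],
                  [1.0,  0.0, 0.0],
                  [0.0,  0.0, 1.0]])
Sigma_p_rot = rotate_covariance(Sigma[:3, :3], R_z90)
```

Keeping the translation and rotation blocks separate is what later allows each block to be gated on its own.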

The paper further extends the classic χ²‑test outlier rejection to a partial‑measurement version. Because translation and rotation are now independent, the Mahalanobis distance d² can be computed separately for each sub‑vector. If the translational distance exceeds a threshold while the rotational distance does not, the filter updates only the position; the opposite case updates only the orientation. This “partial measurement rejection” (PMR) enables the filter to retain useful information even when one modality is unreliable, such as when an object’s symmetry makes rotation ambiguous but its centroid is still well observed.
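The gating logic described above can be sketched as follows. The 95 % threshold for three degrees of freedom (7.815) is a standard χ² quantile chosen here for illustration; the paper's actual threshold, and the names `partial_gate` and `select_rows`, are assumptions:

```python
import numpy as np

CHI2_95_3DOF = 7.815  # 95 % quantile of the chi-square distribution, 3 DoF

def mahalanobis_sq(res, S):
    """Squared Mahalanobis distance of residual res under covariance S."""
    res = np.asarray(res, dtype=float)
    return float(res @ np.linalg.solve(S, res))

def partial_gate(res_p, S_p, res_R, S_R, threshold=CHI2_95_3DOF):
    """Test the translational and rotational residuals independently."""
    accept_p = mahalanobis_sq(res_p, S_p) <= threshold
    accept_R = mahalanobis_sq(res_R, S_R) <= threshold
    return accept_p, accept_R

def select_rows(accept_p, accept_R):
    """Indices of the 6-D measurement that survive the gate
    (0-2: translation, 3-5: rotation)."""
    rows = []
    if accept_p:
        rows += [0, 1, 2]
    if accept_R:
        rows += [3, 4, 5]
    return rows

# Example: a plausible position residual and an implausible rotation residual.
S = 0.01 * np.eye(3)  # innovation covariance of each 3-D block (made up)
accept_p, accept_R = partial_gate([0.1, 0.0, 0.0], S, [0.5, 0.0, 0.0], S)
rows = select_rows(accept_p, accept_R)
```

In an EKF update, the surviving `rows` would select the matching rows of the residual and Jacobian and the matching sub-block of the measurement covariance, so the rejected component simply drops out of the update.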

Mathematically, the Jacobians for the direct measurement are derived in closed form (Eqs. 16‑31). The key advantage is that the Jacobian of the position residual with respect to rotation does not contain the measured rotation, eliminating the term that caused coupling in the inverse formulation. The authors also discuss observability: one object’s pose must be fixed (its Jacobian set to zero) to avoid pure‑relative unobservability, as in prior work.

Experimental validation comprises both simulation and real‑world robot trials. Four configurations are compared: (i) inverse measurement + fixed Σ, (ii) inverse measurement + aleatoric Σ, (iii) direct measurement + fixed Σ, and (iv) direct measurement + aleatoric Σ with PMR. Metrics include root‑mean‑square error (RMSE) of robot pose, normalized estimation error squared (NEES) for consistency, and the number of rejected measurements. Results show that the direct formulation reduces sensitivity to rotation errors, yielding up to 20 % lower translational RMSE in symmetric‑object scenarios. When aleatoric Σ is used, the filter automatically down‑weights uncertain measurements, leading to a 12‑18 % improvement in overall RMSE compared to the fixed‑covariance baseline. The PMR mechanism further improves performance: in scenes with high rotational ambiguity, the filter retains translational updates, achieving an additional 5‑10 % RMSE reduction and maintaining NEES within the statistically consistent band (≈0.9–1.1).
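For reference, the two headline metrics can be computed roughly as below. Dividing the NEES by the state dimension, so that a consistent filter averages about 1, is an assumption inferred from the band quoted above; the function names are hypothetical:

```python
import numpy as np

def rmse(errors):
    """Root-mean-square of the per-step error norms (errors: N x n)."""
    errors = np.asarray(errors, dtype=float)
    return float(np.sqrt(np.mean(np.sum(errors ** 2, axis=1))))

def average_normalized_nees(errors, covariances):
    """Mean of e^T P^{-1} e over all steps, divided by the state dimension.
    Values near 1 indicate that the filter's covariance matches its error."""
    errors = np.asarray(errors, dtype=float)
    n = errors.shape[1]
    nees = [e @ np.linalg.solve(P, e) for e, P in zip(errors, covariances)]
    return float(np.mean(nees)) / n

# Toy example: two 2-D errors with identity covariance.
errs = [[1.0, 0.0], [0.0, 1.0]]
covs = [np.eye(2), np.eye(2)]
toy_rmse = rmse(errs)
toy_nees = average_normalized_nees(errs, covs)
```

A value well above 1 would indicate an overconfident filter, and a value well below 1 an overly cautious one.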

In summary, the paper makes three concrete contributions:

- Measurement‑Equation Reformulation: By using the direct camera‑to‑object pose, the EKF decouples translation and rotation, eliminating error propagation caused by inverse transformations.
- Dynamic Aleatoric Uncertainty Integration: The DNN’s per‑image variance predictions are employed as the measurement noise covariance, providing a principled, data‑driven weighting of each observation.
- Partial Measurement Rejection: Independent χ² tests on translation and rotation enable selective acceptance of reliable components, improving robustness to ambiguous or partially occluded objects.

These advances collectively enable more reliable, real‑time object‑relative localization on edge devices, paving the way for robust mobile‑robot navigation in cluttered, unstructured environments where traditional marker‑based or purely geometric pose estimation is infeasible.
