Beyond Open Vocabulary: Multimodal Prompting for Object Detection in Remote Sensing Images

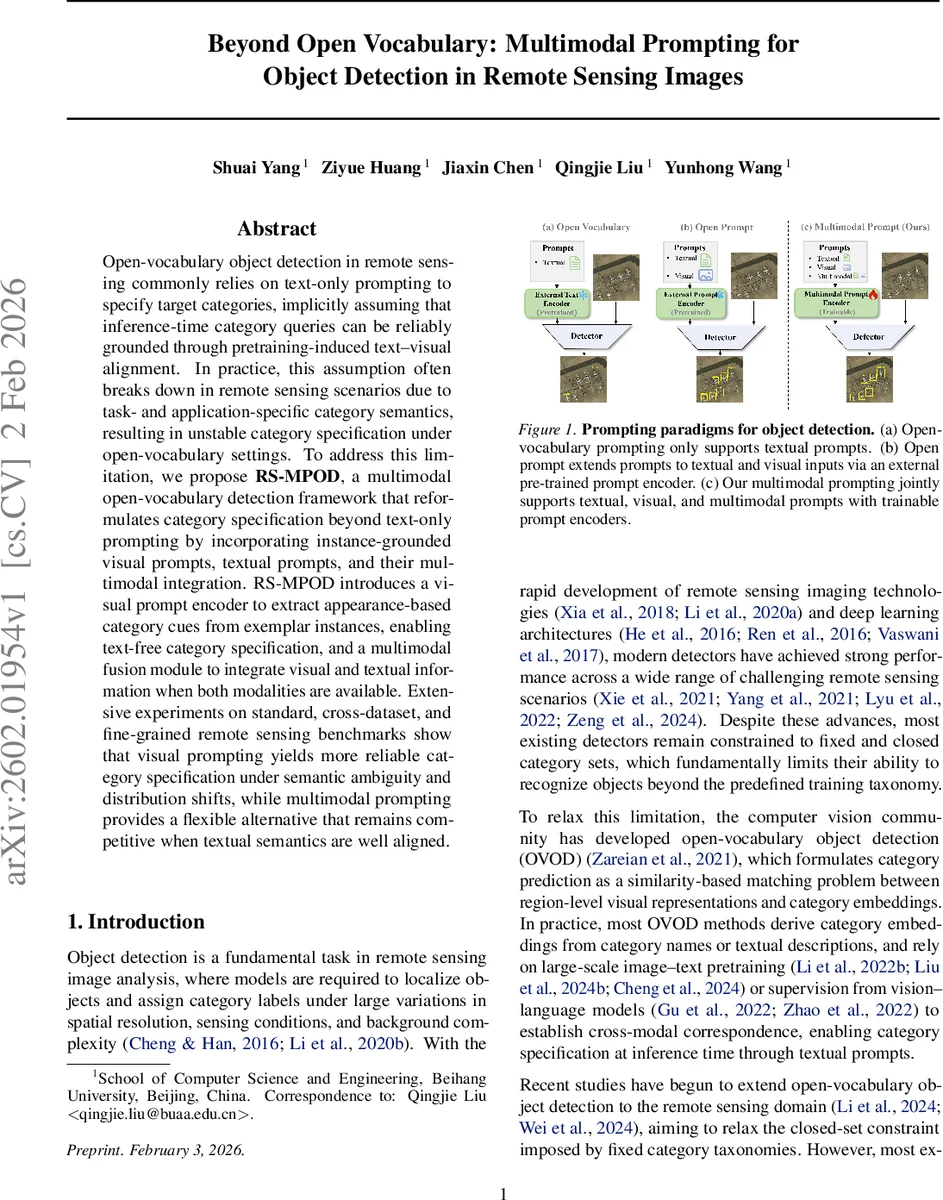

Open-vocabulary object detection in remote sensing commonly relies on text-only prompting to specify target categories, implicitly assuming that inference-time category queries can be reliably grounded through pretraining-induced text-visual alignment. In practice, this assumption often breaks down in remote sensing scenarios due to task- and application-specific category semantics, resulting in unstable category specification under open-vocabulary settings. To address this limitation, we propose RS-MPOD, a multimodal open-vocabulary detection framework that reformulates category specification beyond text-only prompting by incorporating instance-grounded visual prompts, textual prompts, and their multimodal integration. RS-MPOD introduces a visual prompt encoder to extract appearance-based category cues from exemplar instances, enabling text-free category specification, and a multimodal fusion module to integrate visual and textual information when both modalities are available. Extensive experiments on standard, cross-dataset, and fine-grained remote sensing benchmarks show that visual prompting yields more reliable category specification under semantic ambiguity and distribution shifts, while multimodal prompting provides a flexible alternative that remains competitive when textual semantics are well aligned.

💡 Research Summary

The paper addresses a critical limitation of current open‑vocabulary object detection (OVOD) methods in remote sensing (RS) that rely exclusively on textual prompts to specify target categories. Because RS datasets often use task‑specific vocabularies that differ from the large‑scale image‑text corpora used for pre‑training vision‑language models, the assumed alignment between text and visual concepts frequently breaks down, leading to unstable or ambiguous detections. To overcome this, the authors propose RS‑MPOD, a multimodal prompting framework that extends category specification beyond text‑only prompts by incorporating instance‑grounded visual prompts and a multimodal fusion module.

RS‑MPOD builds on GroundingDINO, a prompt‑based detection architecture. The system supports three prompting configurations within a unified pipeline: (1) textual prompting, where category names are tokenized and encoded by a text backbone to produce a sequence of prompt embeddings; (2) visual prompting, where a newly introduced visual prompt encoder extracts appearance‑based embeddings from exemplar objects using deformable attention. For each category present in an image, a ground‑truth bounding box is sampled, its coordinates are position‑encoded, projected through an MLP, concatenated with a learnable content vector, and used as a query that attends to multi‑scale visual features. The attended representation is passed through a feed‑forward network to yield a single visual prompt vector per category. (3) multimodal prompting, which fuses the textual token sequence and the visual vector via a lightweight cross‑attention module. A learnable query aggregates information from both modalities, producing a unified category prompt.

During training, the authors adopt a stage‑wise strategy: first train the detector with textual prompts only, then freeze the image encoder and train the visual prompt encoder, and finally train the multimodal fusion module while keeping earlier components fixed. The loss combines a cosine‑based classification loss (softmax over prompt similarities) with L1 and GIoU bounding‑box regression losses, following the DETR paradigm.

Extensive experiments are conducted on standard RS benchmarks (e.g., DOTA, HRSC), cross‑dataset transfer scenarios (different sensors, resolutions), and fine‑grained classification tasks (vehicle sub‑types, ship categories). Results show that visual prompting significantly improves robustness when textual semantics are ambiguous or misaligned, achieving 4–6 percentage‑point gains in mean average precision (mAP) on categories such as “building” vs. “house”. In domain‑shift experiments, visual prompts mitigate performance drops that plague text‑only baselines. The multimodal configuration consistently matches or exceeds the best of either modality, delivering an additional 3–5 percentage‑point boost when both reliable text and visual exemplars are available.

Key contributions include: (1) a systematic analysis of why text‑only prompting fails in RS OVOD; (2) the design of a visual prompt encoder that provides a text‑free, appearance‑driven way to specify categories; (3) a multimodal fusion module that seamlessly integrates textual semantics and visual appearance; and (4) comprehensive empirical evidence that multimodal prompting enhances detection stability under semantic ambiguity and distribution shifts while remaining competitive in well‑aligned settings. RS‑MPOD thus offers a practical solution for deploying open‑vocabulary detectors in the diverse and often noisy domain of remote sensing imagery.

Comments & Academic Discussion

Loading comments...

Leave a Comment