GuideWeb: A Benchmark for Automatic In-App Guide Generation on Real-World Web UIs

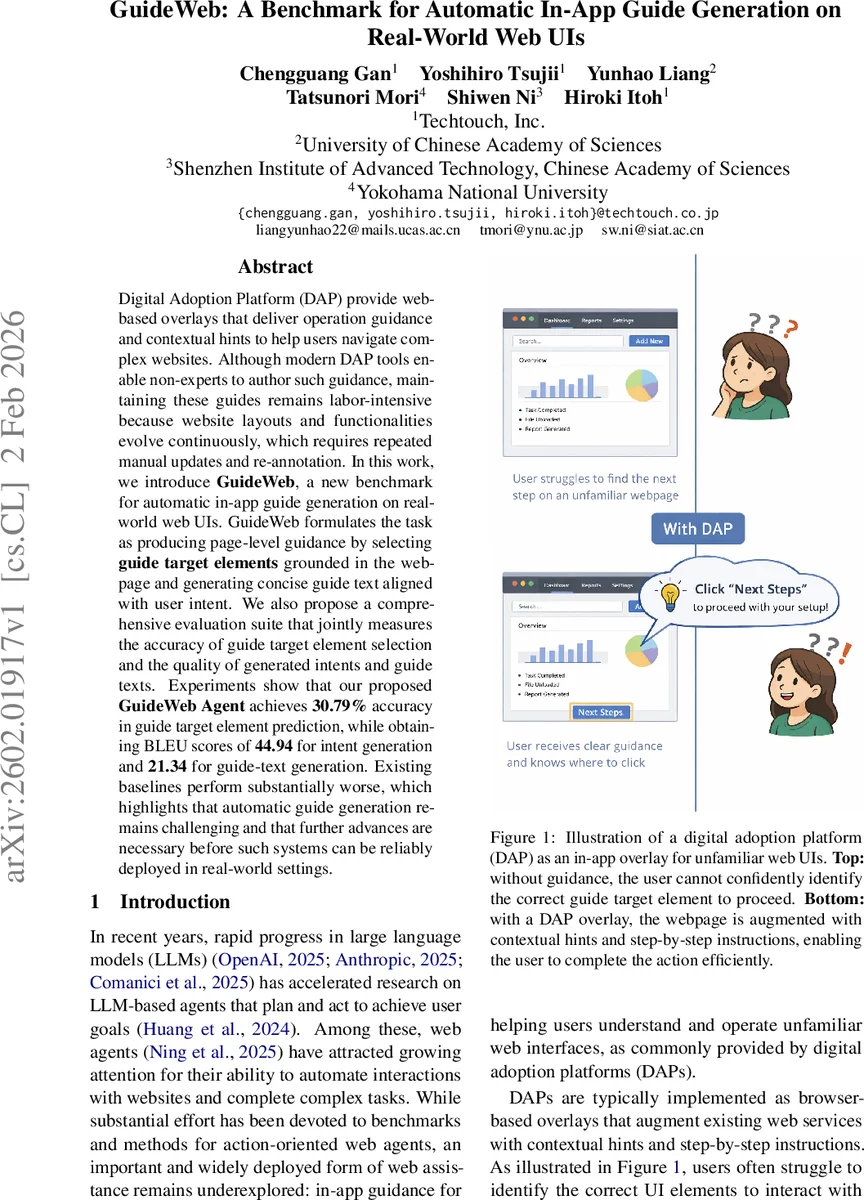

Digital Adoption Platform (DAP) provide web-based overlays that deliver operation guidance and contextual hints to help users navigate complex websites. Although modern DAP tools enable non-experts to author such guidance, maintaining these guides remains labor-intensive because website layouts and functionalities evolve continuously, which requires repeated manual updates and re-annotation. In this work, we introduce \textbf{GuideWeb}, a new benchmark for automatic in-app guide generation on real-world web UIs. GuideWeb formulates the task as producing page-level guidance by selecting \textbf{guide target elements} grounded in the webpage and generating concise guide text aligned with user intent. We also propose a comprehensive evaluation suite that jointly measures the accuracy of guide target element selection and the quality of generated intents and guide texts. Experiments show that our proposed \textbf{GuideWeb Agent} achieves \textbf{30.79%} accuracy in guide target element prediction, while obtaining BLEU scores of \textbf{44.94} for intent generation and \textbf{21.34} for guide-text generation. Existing baselines perform substantially worse, which highlights that automatic guide generation remains challenging and that further advances are necessary before such systems can be reliably deployed in real-world settings.

💡 Research Summary

The paper introduces GuideWeb, a novel benchmark designed to evaluate automatic in‑app guide generation for real‑world web user interfaces. Digital Adoption Platforms (DAPs) currently rely on manual authoring of overlays that highlight UI elements and provide step‑by‑step textual hints. Maintaining these overlays is costly because web layouts and functionalities evolve continuously, forcing frequent re‑annotation. GuideWeb formalizes the problem as a two‑stage task: (1) Guide Target Identification – given the raw HTML of a page, the system must decide whether any guidance is needed (binary flag g) and, if so, select a subset E⁺ of interactive DOM elements that merit a guide; (2) Element‑Grounded Guide Generation – for each selected element, the system must produce a structured annotation consisting of a natural‑language intent, a high‑level action_type (e.g., search, navigation, login), and a concise guide_text that explains how to use the element. All annotations are grounded in the original page via tag, visible text, and XPath, and are stored in a JSON schema.

Dataset Construction

The authors built the dataset by sampling 1 000 domains from the Cisco Umbrella Popularity List, crawling each site’s main landing page, and filtering for pages that contain a sufficient number of interactive controls. After human verification, 996 pages remained, of which 980 (98.4 %) required guidance. On average each page received 3.09 guide annotations, with a hard cap of five guides per page (over half of the pages hit this cap).

Annotation was performed through a human‑in‑the‑loop LLM‑assisted pipeline: a large language model first performed page‑level analysis (needs_guides, coarse category) and then identified candidate guide targets and generated intent/action_type/guide_text for each. The model’s output was forced into the predefined JSON format; any malformed output triggered an automatic regeneration loop. Human annotators then verified and corrected each entry, ensuring structural correctness and practical usefulness. The final dataset includes a balanced distribution of action types (search, navigate, login, contact support, etc.) and provides full DOM grounding for each guide.

Task Definition and Model

Formally, the system is a mapping f : X → {0,1} × Y, where X is the raw HTML, g ∈ {0,1} indicates whether the page needs any guides, and Y is the set of element‑grounded annotations. The first stage can be seen as a multi‑label classification over the set of interactive elements, while the second stage is a conditional text‑generation problem conditioned on the selected element’s attributes.

The authors propose GuideWeb Agent, a lightweight architecture built on a pre‑trained LLM (e.g., GPT‑4). The agent first encodes the entire page and predicts g and a binary mask over E(x) to obtain E⁺. For each element in E⁺, a shared decoder generates the three textual fields. Training uses a combined loss: binary cross‑entropy for the mask and cross‑entropy for the generated strings.

Evaluation Protocol

Performance is measured on three fronts: (1) Guide Target Selection Accuracy (precision, recall, F1) comparing E⁺ to the gold set; (2) Intent Generation Quality using BLEU‑4; (3) Guide‑Text Generation Quality also using BLEU‑4. A composite score aggregates these metrics to rank systems.

Results

GuideWeb Agent achieves 30.79 % accuracy in guide‑target selection, substantially higher than baseline methods (which hover around 10 %). For the textual components, the agent reaches BLEU‑4 = 44.94 for intents and BLEU‑4 = 21.34 for guide texts. The relatively high intent score suggests that LLMs are adept at inferring the underlying user goal, while the lower guide‑text score indicates difficulty in producing concise, context‑aware instructions that align with UI semantics.

Analysis and Limitations

The benchmark reveals a clear gap between the ability to generate fluent language and the ability to correctly locate the UI elements that truly need assistance. Current models rely solely on DOM‑derived textual cues; visual cues such as icons, layout geometry, color, and hover states are ignored, likely limiting selection performance. Moreover, BLEU, while convenient, does not capture user‑centric notions of usefulness, clarity, or task‑completion speed. Human‑in‑the‑loop evaluations (e.g., A/B testing in a live DAP) are needed to validate real‑world impact. The fixed cap of five guides per page, though pragmatic, may not reflect optimal guidance density for all domains.

Future Directions

- Multimodal Integration – Incorporate screenshots and visual feature extractors (e.g., Vision Transformers) to enrich element representations.

- Reinforcement Learning – Use user interaction signals (clicks, dwell time, error rates) as rewards to fine‑tune guide‑target policies.

- Human‑Centric Metrics – Deploy crowd‑sourced or field studies to assess comprehension, error reduction, and satisfaction, moving beyond BLEU.

- Dynamic Guide Budgeting – Learn to allocate a variable number of guides per page based on complexity, user expertise, or task urgency.

Conclusion

GuideWeb constitutes the first large‑scale, real‑world benchmark for automatic in‑app guide generation on web UIs. By providing a well‑structured dataset, a clear two‑stage task formulation, and a comprehensive evaluation suite, the authors lay the groundwork for systematic research in this under‑explored area. The initial GuideWeb Agent demonstrates that large language models can generate meaningful intents and reasonable guide texts, yet substantial challenges remain in accurately identifying which UI elements truly need assistance. Addressing these challenges through multimodal modeling, reinforcement learning, and rigorous human evaluation will be essential for deploying reliable, automated DAP solutions in production environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment