Towards Long-Horizon Interpretability: Efficient and Faithful Multi-Token Attribution for Reasoning LLMs

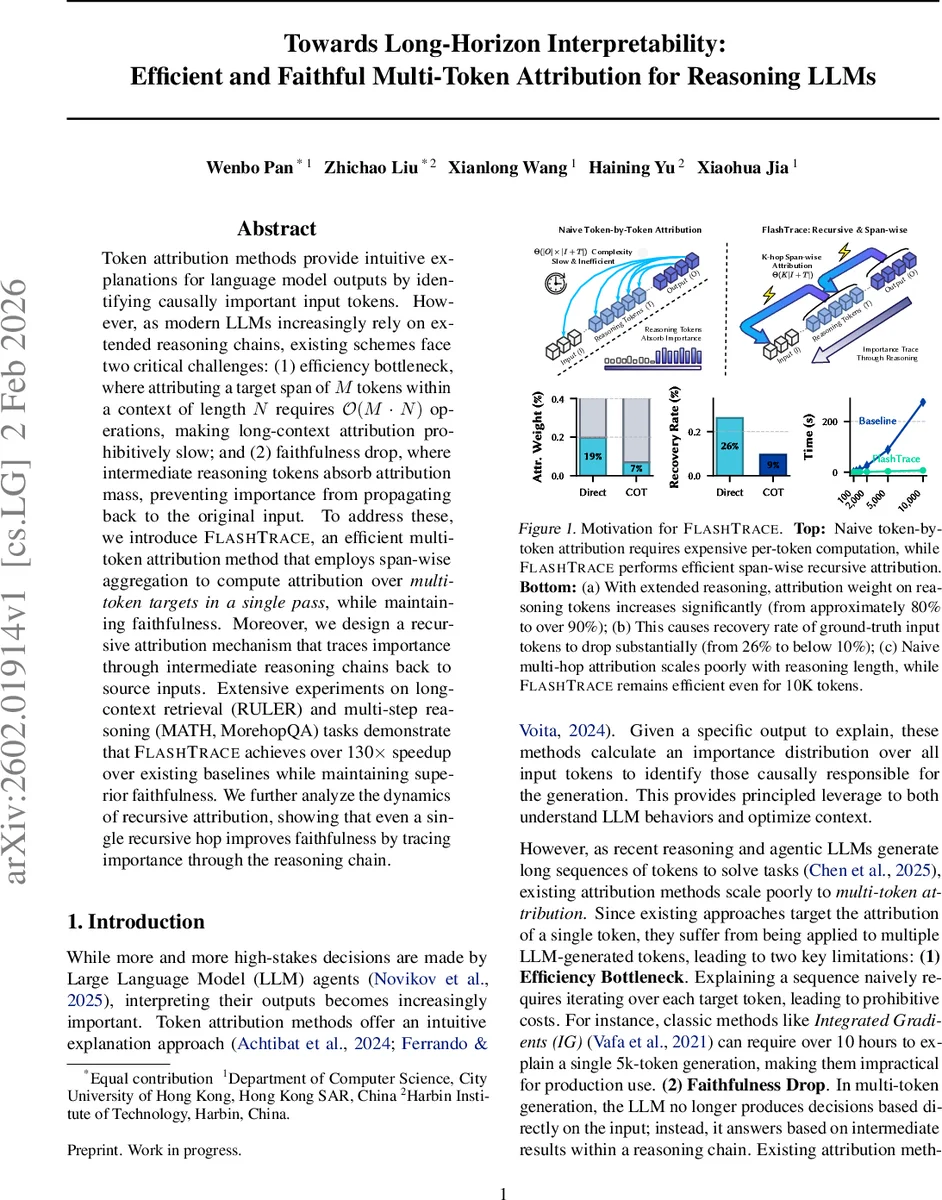

Token attribution methods provide intuitive explanations for language model outputs by identifying causally important input tokens. However, as modern LLMs increasingly rely on extended reasoning chains, existing schemes face two critical challenges: (1) efficiency bottleneck, where attributing a target span of M tokens within a context of length N requires O(M*N) operations, making long-context attribution prohibitively slow; and (2) faithfulness drop, where intermediate reasoning tokens absorb attribution mass, preventing importance from propagating back to the original input. To address these, we introduce FlashTrace, an efficient multi-token attribution method that employs span-wise aggregation to compute attribution over multi-token targets in a single pass, while maintaining faithfulness. Moreover, we design a recursive attribution mechanism that traces importance through intermediate reasoning chains back to source inputs. Extensive experiments on long-context retrieval (RULER) and multi-step reasoning (MATH, MorehopQA) tasks demonstrate that FlashTrace achieves over 130x speedup over existing baselines while maintaining superior faithfulness. We further analyze the dynamics of recursive attribution, showing that even a single recursive hop improves faithfulness by tracing importance through the reasoning chain.

💡 Research Summary

The paper “Towards Long‑Horizon Interpretability: Efficient and Faithful Multi‑Token Attribution for Reasoning LLMs” introduces FlashTrace, a novel attribution framework designed to explain the outputs of large language models (LLMs) that generate long chains of reasoning or tool‑use tokens. Traditional token‑level attribution methods such as Integrated Gradients, AttnLRP, or IFR treat each target token independently, leading to two major problems when applied to modern LLMs: (1) an efficiency bottleneck, because attributing a span of M tokens in a context of length N requires O(M·N) operations, making explanations for 5‑10k token generations impractically slow; and (2) a faithfulness drop, where intermediate reasoning tokens (the “T” segment) absorb most of the attribution mass, preventing importance from propagating back to the original input (the “I” segment).

FlashTrace tackles both issues with two key innovations. First, Span‑wise Aggregation replaces per‑token attribution with a single pass that aggregates contributions across an entire target span. By reformulating the transformer’s attention, feed‑forward, and residual pathways as token‑to‑span interactions, the method computes a proximity score (based on an L1‑norm metric) between the aggregated span representation and each input token. This reduces the computational complexity to O(N) per hop, independent of the span length M.

Second, Recursive Attribution addresses the faithfulness problem. After an initial attribution from the final output span O to the context, FlashTrace identifies the most influential reasoning tokens T̂ and treats them as a new target span. The span‑wise aggregation is then reapplied, tracing importance further back toward the original input. Repeating this process for K hops (typically 1–2) yields a multi‑hop attribution that distributes relevance from the final answer through the entire reasoning chain. The authors show that even a single recursive hop substantially improves the recovery rate of ground‑truth input tokens.

The theoretical foundation builds on the ALTI (Aggregation of Layer‑wise Token‑to‑Token Interactions) and IFR (Information Flow Route) frameworks. Proximity is defined as max(0, –‖y – z‖₁ + ‖y‖₁), measuring how much the target vector would shrink if a contribution were removed. By applying this metric layer‑wise and aggregating over spans, FlashTrace obtains faithful importance estimates while keeping memory usage linear in the number of layers and heads.

Experiments span three benchmark suites: RULER (long‑document retrieval), MATH (multi‑step mathematical proofs), and MorehopQA (multi‑hop question answering). The authors evaluate multiple LLMs, including Qwen‑3‑8B Instruct and GPT‑4o. Compared to baselines, FlashTrace achieves an average 130× speedup, processing a 10 k‑token context in roughly 2 seconds on a single GPU. In terms of faithfulness, the recovery rate for chain‑of‑thought (CoT) settings improves from ~9 % (baseline) to ~23 % after one recursive hop, and up to ~27 % after two hops, effectively restoring the ability to pinpoint original input tokens.

Ablation studies confirm that the span‑wise aggregation alone yields the speed gains, while recursive hops are responsible for the faithfulness improvements. Visualizations of importance diffusion across hops illustrate how relevance gradually shifts from reasoning tokens back to the input, providing a quantitative view of the model’s internal causal flow.

Limitations noted by the authors include the current focus on contiguous spans—handling non‑contiguous token sets would require additional bookkeeping—and the potential accumulation of approximation error with deeper recursion. Future work is suggested on extending the method to arbitrary token subsets, developing error‑correction mechanisms for deep recursion, and integrating FlashTrace into real‑time LLM agents with user‑friendly visual explanations.

In summary, FlashTrace delivers a practical, scalable, and more faithful solution for multi‑token attribution in long‑horizon LLM reasoning, bridging the gap between interpretability research and the operational demands of production‑grade AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment