ES-MemEval: Benchmarking Conversational Agents on Personalized Long-Term Emotional Support

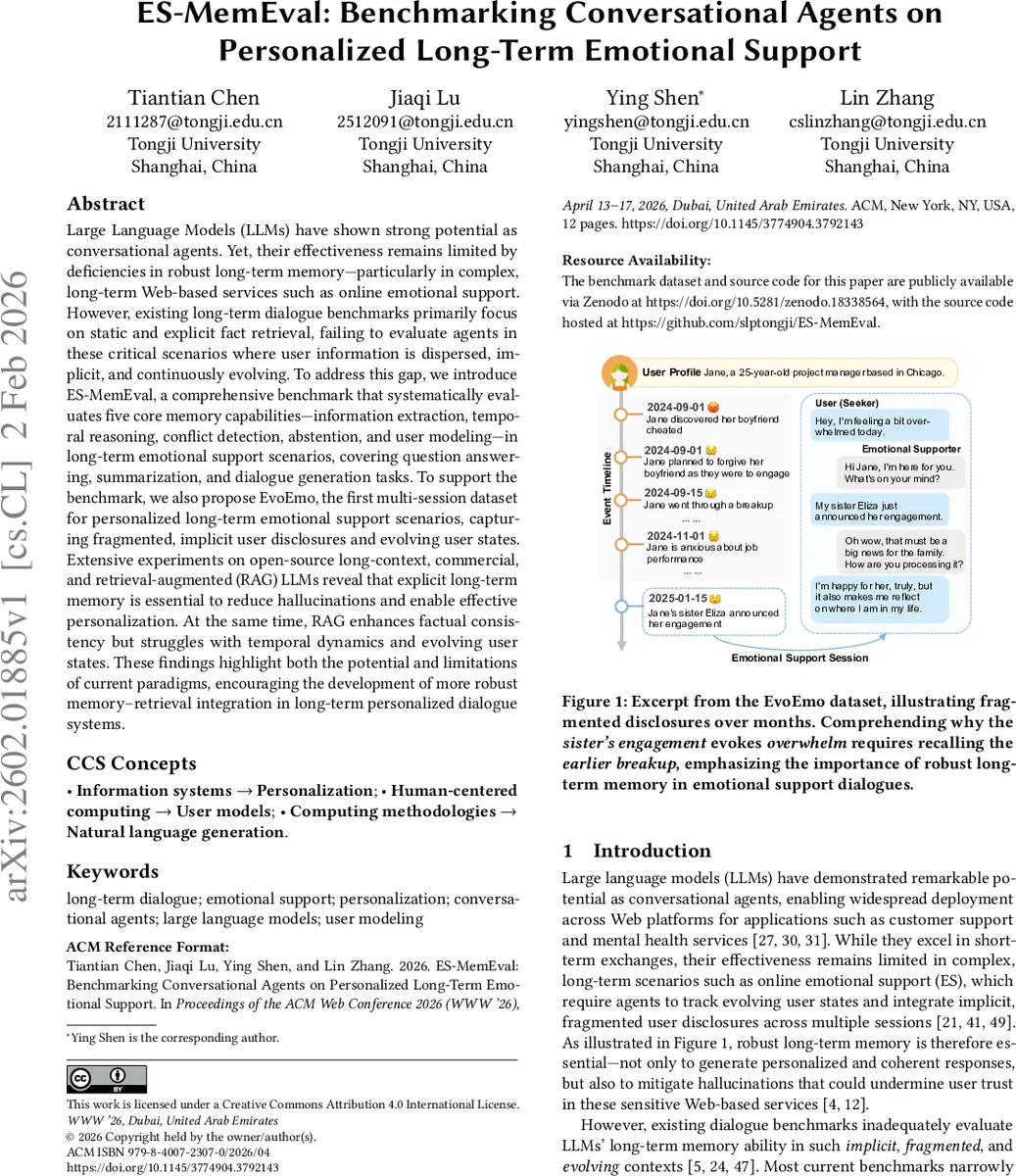

Large Language Models (LLMs) have shown strong potential as conversational agents. Yet, their effectiveness remains limited by deficiencies in robust long-term memory, particularly in complex, long-term web-based services such as online emotional support. However, existing long-term dialogue benchmarks primarily focus on static and explicit fact retrieval, failing to evaluate agents in critical scenarios where user information is dispersed, implicit, and continuously evolving. To address this gap, we introduce ES-MemEval, a comprehensive benchmark that systematically evaluates five core memory capabilities: information extraction, temporal reasoning, conflict detection, abstention, and user modeling, in long-term emotional support settings, covering question answering, summarization, and dialogue generation tasks. To support the benchmark, we also propose EvoEmo, a multi-session dataset for personalized long-term emotional support that captures fragmented, implicit user disclosures and evolving user states. Extensive experiments on open-source long-context, commercial, and retrieval-augmented (RAG) LLMs show that explicit long-term memory is essential for reducing hallucinations and enabling effective personalization. At the same time, RAG improves factual consistency but struggles with temporal dynamics and evolving user states. These findings highlight both the potential and limitations of current paradigms and motivate more robust integration of memory and retrieval for long-term personalized dialogue systems.

💡 Research Summary

The paper addresses a critical gap in the evaluation of conversational agents that provide long‑term emotional support. While large language models (LLMs) have demonstrated impressive capabilities in short‑term interactions, they struggle when required to retain and reason over fragmented, implicit, and evolving user information across multiple sessions. Existing long‑term dialogue benchmarks focus mainly on static fact retrieval and therefore do not capture the complexities of real emotional‑support scenarios.

To fill this void, the authors introduce ES‑MemEval, a comprehensive benchmark that systematically tests five core memory abilities:

- Information Extraction (IE) – retrieving entities, events, and relationships dispersed over many turns.

- Temporal Reasoning (TR) – understanding the chronological order and causal links among events, and tracking how a user’s emotional state changes over time.

- Conflict Detection (CD) – spotting contradictory statements within the dialogue history.

- Abstention (Abs) – correctly refusing to answer when the evidence is insufficient, thereby reducing hallucinations.

- User Modeling (UM) – building a coherent, longitudinal representation of the user’s mental state, goals, and relationships.

These abilities are evaluated through three complementary tasks: (a) Question Answering (QA) that requires retrieving and synthesizing information from the full conversation history; (b) Summarization that asks the model to abstract a user’s emotional trajectory across sessions; and (c) Dialogue Generation where the model must produce a personalized, supportive response grounded in the accumulated memory.

To support the benchmark, the authors construct EvoEmo, the first multi‑session dataset specifically designed for long‑term emotional support. EvoEmo contains 18 virtual users, each with a richly annotated profile (demographics, social ties, core beliefs) and an event timeline that evolves over up to 33 sessions. The dataset averages 510 turns per user (≈13.3 k tokens), combining real short‑term ES conversations from ESConv with automatically generated continuations that reflect realistic life events (break‑ups, job stress, family changes, etc.). All data and code are publicly released on Zenodo and GitHub, ensuring reproducibility.

The experimental study compares three families of models:

- Open‑source long‑context LLMs (e.g., LLaMA‑2‑70B‑Chat, LongChat‑13B) that rely solely on an extended context window.

- Commercial closed‑source models (e.g., GPT‑4, Claude‑2) representing the state‑of‑the‑art in industry.

- Retrieval‑Augmented Generation (RAG) pipelines that first retrieve relevant passages from a vector store and then feed them to an LLM.

Each model is evaluated on the three ES‑MemEval tasks using automatic metrics (F1, ROUGE‑L, BLEU, BERTScore) and human judgments (factual accuracy, consistency, emotional appropriateness).

Key findings include:

- Memory‑less baselines (single‑turn inputs) suffer from severe hallucinations—over 30 % of answers contain fabricated user details—demonstrating that explicit long‑term memory is indispensable for reliable personalization.

- RAG systems improve factual consistency by 12–15 % relative to pure LLMs, confirming the benefit of external retrieval for static facts. However, they lag in temporal reasoning (≈8 % lower accuracy) because retrieved snippets are often out‑of‑date or lack chronological ordering, and the LLM struggles to integrate them into a coherent timeline.

- User Modeling scores are highest for long‑context LLMs (normalized 0.68) versus RAG (0.61), indicating that maintaining an internal representation across turns is more effective for tracking evolving emotional states than naïve retrieval.

- Abstention is uniformly weak; commercial models especially tend to guess rather than say “I don’t know,” raising safety concerns in sensitive support contexts.

- Session‑level retrieval (retrieving whole prior sessions) captures more context but introduces redundancy, while turn‑level retrieval can miss subtle cross‑session cues.

- Scalability: Smaller long‑context models degrade sharply when input length exceeds ~8 k tokens, underscoring the need for hybrid memory‑retrieval architectures.

The authors discuss limitations and future directions: EvoEmo’s virtual users, while realistic, differ from real clinical data; RAG pipelines need temporally aware indexing; integration of external key‑value memory with LLMs could provide persistent, updatable user representations; and ethical considerations around long‑term profiling and privacy must be addressed.

In summary, ES‑MemEval offers the first systematic benchmark for evaluating memory‑driven personalization in long‑term emotional‑support dialogues. The extensive experiments reveal that current LLMs and retrieval‑augmented systems each have strengths and weaknesses: explicit long‑term memory reduces hallucination and boosts personalization, while retrieval improves factual grounding but falls short on temporal dynamics. These insights chart a clear research agenda toward more robust, safe, and empathetic conversational agents capable of sustaining supportive relationships over weeks or months.

Comments & Academic Discussion

Loading comments...

Leave a Comment