AXE: Low-Cost Cross-Domain Web Structured Information Extraction

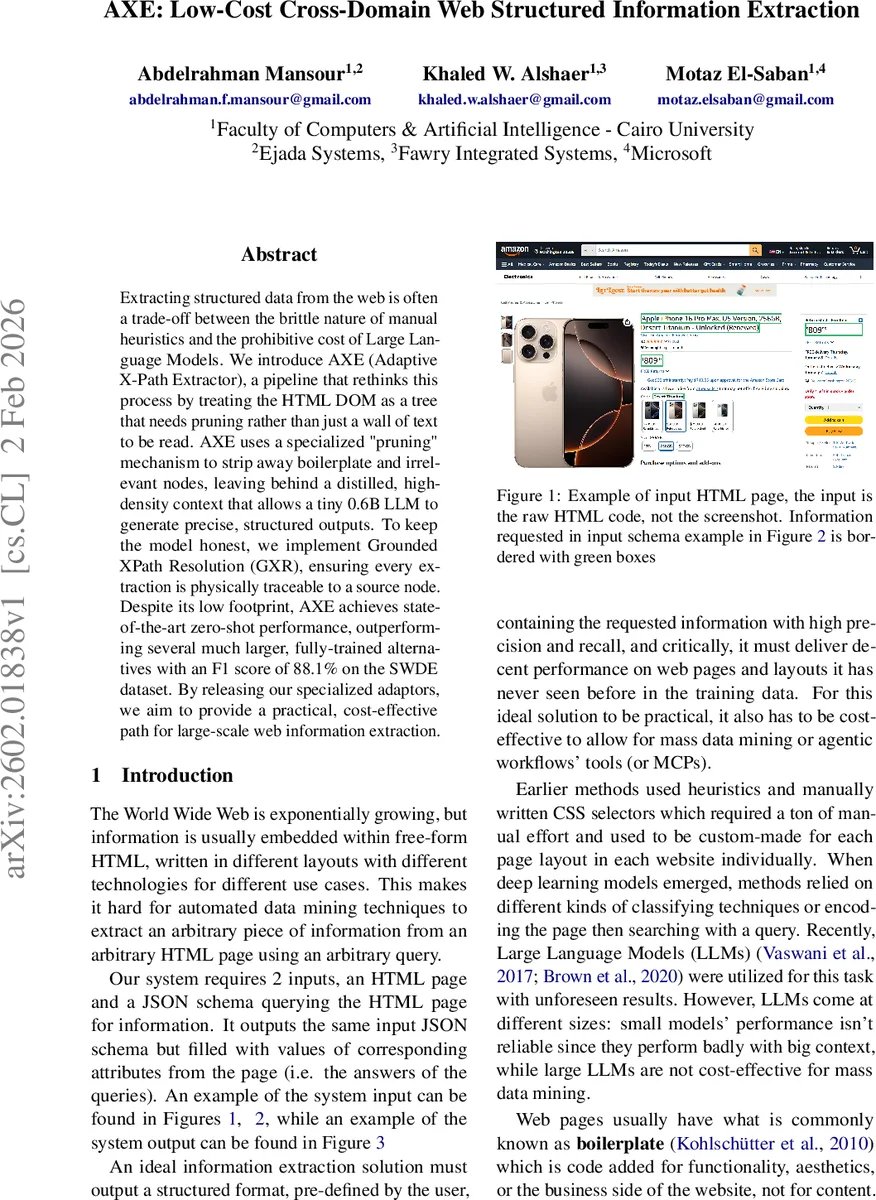

Extracting structured data from the web is often a trade-off between the brittle nature of manual heuristics and the prohibitive cost of Large Language Models. We introduce AXE (Adaptive X-Path Extractor), a pipeline that rethinks this process by treating the HTML DOM as a tree that needs pruning rather than just a wall of text to be read. AXE uses a specialized “pruning” mechanism to strip away boilerplate and irrelevant nodes, leaving behind a distilled, high-density context that allows a tiny 0.6B LLM to generate precise, structured outputs. To keep the model honest, we implement Grounded XPath Resolution (GXR), ensuring every extraction is physically traceable to a source node. Despite its low footprint, AXE achieves state-of-the-art zero-shot performance, outperforming several much larger, fully-trained alternatives with an F1 score of 88.1% on the SWDE dataset. By releasing our specialized adaptors, we aim to provide a practical, cost-effective path for large-scale web information extraction.

💡 Research Summary

The paper introduces AXE (Adaptive X‑Path Extractor), a low‑cost, cross‑domain web structured information extraction system that leverages aggressive DOM pruning to enable a tiny 0.6 B language model to produce accurate, schema‑conforming JSON outputs. The authors argue that most HTML pages contain a large amount of boilerplate (navigation bars, ads, footers, etc.) that is irrelevant to a specific extraction query. By treating the HTML DOM as a tree and systematically removing non‑essential nodes, AXE creates a dense, query‑focused context that dramatically reduces the token budget required for downstream language‑model processing.

The AXE pipeline consists of three stages. First, a pre‑processor cleans the raw HTML (removing scripts, CSS, and using HTMLRAG lossless cleaning) and chunks the page into structural blocks via a specialized “Au‑toChunker”. Second, an AI extractor performs two sub‑steps: (a) a Pruning Adapter, built with low‑rank LoRA (rsLoRA) on top of the Qwen‑3 0.6 B backbone, evaluates each mini‑chunk (single‑XPath units) against the user‑provided JSON schema or natural‑language question and selects only the relevant XPaths; (b) the selected, re‑merged HTML and the query are fed to the same backbone, now fine‑tuned as either a QA Adapter or a Schema Adapter, which generates the textual answer or JSON structure. Third, a post‑processor called Grounded XPath Resolution (GXR) maps the generated text back to the original DOM node. GXR scores candidate nodes using a dual metric—lexical overlap and Gestalt‑pattern fuzzy similarity—and returns the absolute XPath of the best match, thereby eliminating hallucinations and guaranteeing traceability.

Training data are synthetically generated via knowledge distillation from a large teacher model (Qwen‑3‑Coder‑480B). The authors scrape 10 k random pages from Common Crawl, cluster them to 1 k, filter down to 914 high‑quality pages, and create three task‑specific datasets: pruning, QA, and schema extraction. The Pruning Adapter is trained for two epochs with a 3 072‑token context window, NEFTune noise (α = 12) and 0.2 dropout. The extraction adapters use a 4 096‑token window, lighter regularization (α = 5, dropout = 0.1), and the QA adapter receives an additional 15 k WebSRC examples. Training employs FlashAttention‑2, Liger kernels, and BF16 precision for efficiency.

Evaluation is performed on two benchmarks. SWDE (124 k pages, 80 sites, 8 verticals) measures cross‑site attribute extraction with page‑level F1. AXE, in a zero‑shot setting (k = 0), achieves 88.10 % F1, surpassing the strongest one‑shot baseline WebLM‑LARGE (87.57 %). Performance is consistent across domains, ranging from 80.90 % (Auto) to 93.13 % (Restaurant). Token analysis shows the Pruning Adapter reduces average context length from 16 581 tokens to 351 tokens—a 97.9 % reduction—making the small model feasible and cutting inference cost dramatically. Ablation studies confirm that removing the pruner drops F1 by only 0.66 % (showing robustness to noise) while disabling GXR leads to a substantial accuracy decline, highlighting the importance of grounding.

The authors conclude that AXE’s three‑part strategy—DOM pruning, lightweight LLM inference, and structural grounding—delivers state‑of‑the‑art zero‑shot extraction at a fraction of the computational budget of prior large‑model approaches. The released adapters enable practical, large‑scale web mining and can be reused for other LLM‑driven web tasks, offering both academic insight and immediate industry applicability.

Comments & Academic Discussion

Loading comments...

Leave a Comment