Efficient Cross-Country Data Acquisition Strategy for ADAS via Street-View Imagery

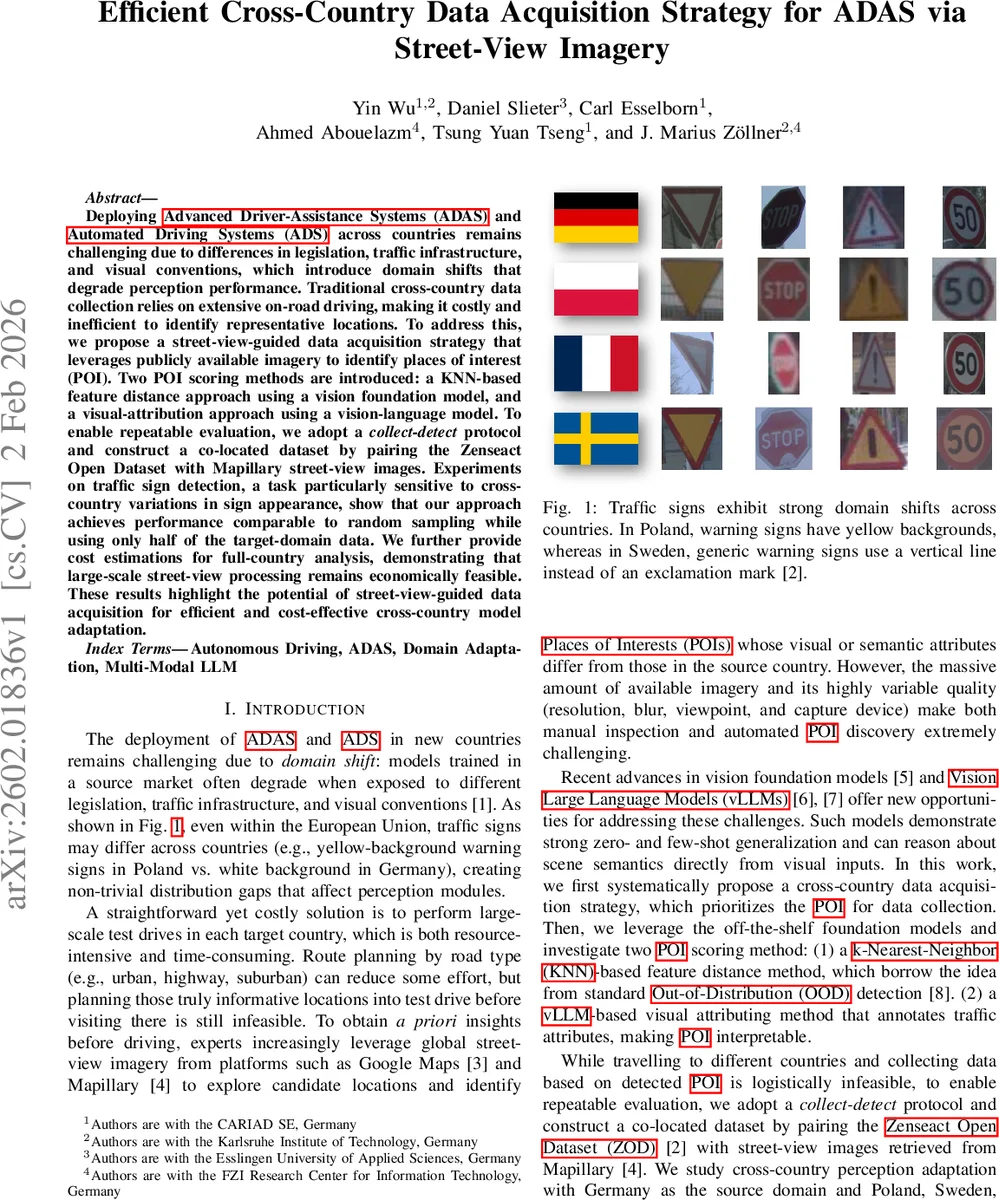

Deploying ADAS and ADS across countries remains challenging due to differences in legislation, traffic infrastructure, and visual conventions, which introduce domain shifts that degrade perception performance. Traditional cross-country data collection relies on extensive on-road driving, making it costly and inefficient to identify representative locations. To address this, we propose a street-view-guided data acquisition strategy that leverages publicly available imagery to identify places of interest (POI). Two POI scoring methods are introduced: a KNN-based feature distance approach using a vision foundation model, and a visual-attribution approach using a vision-language model. To enable repeatable evaluation, we adopt a collect-detect protocol and construct a co-located dataset by pairing the Zenseact Open Dataset with Mapillary street-view images. Experiments on traffic sign detection, a task particularly sensitive to cross-country variations in sign appearance, show that our approach achieves performance comparable to random sampling while using only half of the target-domain data. We further provide cost estimations for full-country analysis, demonstrating that large-scale street-view processing remains economically feasible. These results highlight the potential of street-view-guided data acquisition for efficient and cost-effective cross-country model adaptation.

💡 Research Summary

The paper tackles the problem of deploying Advanced Driver‑Assistance Systems (ADAS) and Automated Driving Systems (ADS) in new countries, where domain shifts caused by differing traffic regulations, sign designs, and road infrastructure can severely degrade perception performance, especially for traffic‑sign detection. Traditional solutions rely on extensive on‑road data collection in each target country, which is costly, time‑consuming, and inefficient because it does not indicate which locations are most informative for model adaptation.

To address this, the authors propose a pre‑drive data‑acquisition strategy that leverages publicly available street‑view imagery (e.g., Mapillary, Google Street View) to identify Places of Interest (POIs) that are likely to exhibit visual or semantic discrepancies relative to the source domain. Two POI scoring methods are introduced:

-

KNN‑based Feature Distance – A pretrained vision foundation model (such as CLIP) extracts embeddings from source‑domain driving images (Germany) and target‑domain street‑view images (Poland, Sweden, France). For each street‑view image, the k‑th nearest‑neighbor distance to the source feature set is computed; larger distances indicate higher discrepancy. Scores for all images at a GPS location are aggregated by taking the minimum distance, yielding a location‑level POI score.

-

vLLM‑based Visual Attribution – A vision‑language large model processes each street‑view image with a structured prompt that asks the model to detect all traffic signs and return a JSON description containing attributes such as shape, border color, background color, symbol, extracted text, and language. The resulting attribute vectors are compared against those derived from source‑domain data; locations with larger attribute mismatches receive higher POI scores. This method provides interpretable, fine‑grained semantic differences.

Because real‑world “detect‑then‑collect” (explore street‑view, then drive to the identified locations) is logistically infeasible for systematic research, the authors adopt a collect‑detect protocol. They start from an existing multi‑country driving dataset (Zenseact Open Dataset, ZOD), retrieve street‑view images at the exact GPS coordinates of each driving frame, run the POI scoring methods on the street‑view set, and select the top‑k locations. Only the driving frames corresponding to these locations are used for fine‑tuning the ADAS perception model. This creates a repeatable, controlled evaluation environment that isolates the effect of POI selection under a fixed data budget.

Experiments focus on binary traffic‑sign detection (sign vs. background) as a representative ADAS perception task. Germany serves as the source domain; Poland, Sweden, and France are target domains. Results show that both POI selection methods outperform random sampling. Notably, the vLLM‑based approach achieves comparable mean Average Precision (mAP) while using only about 50 % of the target‑domain data required by random selection. The KNN method also yields gains but is less stable across countries.

The paper further provides a cost analysis for scaling the approach to whole‑country coverage. Using current cloud‑based image‑processing rates and modest human annotation effort, the total expense for processing millions of street‑view images is estimated in the low‑hundreds‑of‑thousands of dollars, far cheaper than conducting extensive on‑road test drives.

Limitations include variability in street‑view image quality (resolution, blur, viewpoint, weather) and incomplete coverage in some regions, which can affect POI detection reliability. The study also concentrates on traffic‑sign detection; extending the framework to other perception tasks (pedestrian detection, lane detection, dynamic object tracking) would require additional attribute ontologies and prompt engineering.

In summary, the paper presents a data‑centric, foundation‑model‑driven pipeline for efficient cross‑country ADAS data acquisition. By exploiting street‑view imagery and modern vision‑language models, it identifies the most informative locations for targeted data collection, dramatically reducing the amount of target‑domain data needed for effective model adaptation while keeping costs manageable. This work offers a practical blueprint for manufacturers and researchers aiming to scale ADAS/ADS deployments across diverse regulatory environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment