Hyperbolic Graph Neural Networks Under the Microscope: The Role of Geometry-Task Alignment

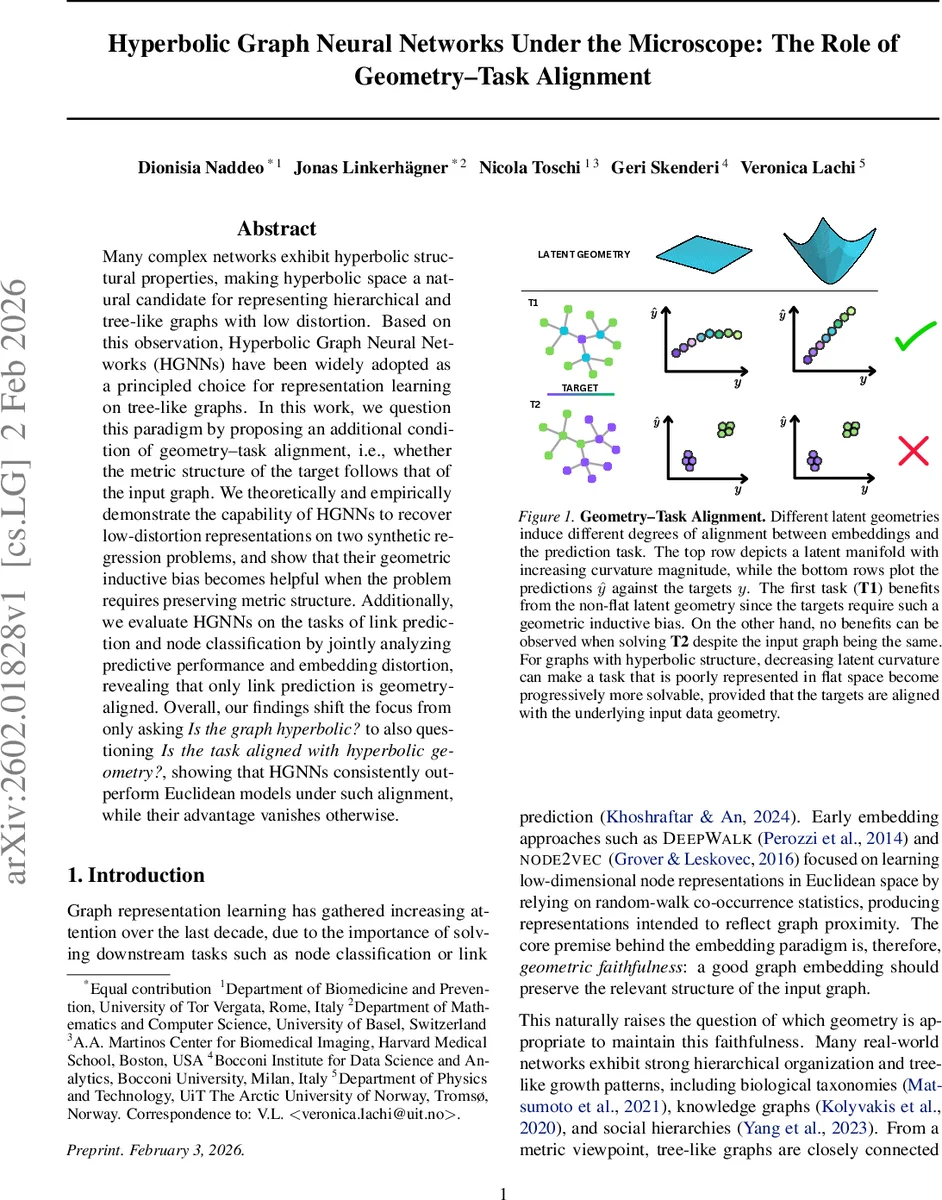

Many complex networks exhibit hyperbolic structural properties, making hyperbolic space a natural candidate for representing hierarchical and tree-like graphs with low distortion. Based on this observation, Hyperbolic Graph Neural Networks (HGNNs) have been widely adopted as a principled choice for representation learning on tree-like graphs. In this work, we question this paradigm by proposing an additional condition of geometry-task alignment, i.e., whether the metric structure of the target follows that of the input graph. We theoretically and empirically demonstrate the capability of HGNNs to recover low-distortion representations on two synthetic regression problems, and show that their geometric inductive bias becomes helpful when the problem requires preserving metric structure. Additionally, we evaluate HGNNs on the tasks of link prediction and node classification by jointly analyzing predictive performance and embedding distortion, revealing that only link prediction is geometry-aligned. Overall, our findings shift the focus from only asking “Is the graph hyperbolic?” to also questioning “Is the task aligned with hyperbolic geometry?”, showing that HGNNs consistently outperform Euclidean models under such alignment, while their advantage vanishes otherwise.

💡 Research Summary

This paper revisits the widely held belief that hyperbolic graph neural networks (HGNNs) are automatically the best choice for any graph exhibiting hyperbolic structure. The authors argue that the mere “tree‑likeness” of a graph is insufficient; instead, a second condition—geometry‑task alignment—must be satisfied. Geometry‑task alignment asks whether the metric structure of the downstream target (e.g., link existence, node labels) aligns with the intrinsic metric of the input graph.

The theoretical foundation begins with a formal definition of embedding distortion (δ): the product of the contraction and expansion factors, which quantify how faithfully a mapping h from the graph metric space (V, d_G) to a representation manifold (M, d_M) preserves distances. A distortion of 1 means the embedding preserves all distances up to a single global scale factor, while larger values indicate metric warping. Prior work (Sarkar, 2011) shows that trees can be embedded in hyperbolic space with arbitrarily low distortion, motivating the question: can a supervised HGNN actually learn such low‑distortion embeddings?
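To make the distortion definition concrete, here is a minimal Python sketch. It assumes the common worst-case convention (maximum expansion times maximum contraction over all node pairs); the paper may aggregate differently, and the function name `distortion` and the toy 4-cycle example are illustrative choices, not taken from the source.

```python
import math
from itertools import combinations

def distortion(graph_dist, embed_dist):
    """Product of worst-case expansion and worst-case contraction over all pairs.

    Assumption: worst-case (max over pairs) convention; both arguments are
    dicts keyed by node pair with strictly positive distances.
    """
    expansion = max(embed_dist[p] / graph_dist[p] for p in graph_dist)
    contraction = max(graph_dist[p] / embed_dist[p] for p in graph_dist)
    return expansion * contraction

# Toy example: a 4-cycle embedded as the corners of a unit square in the plane.
points = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
pairs = list(combinations(range(4), 2))
# Shortest-path distance between nodes i < j on a cycle of length 4.
graph_dist = {(i, j): min(j - i, 4 - (j - i)) for i, j in pairs}
embed_dist = {(i, j): math.dist(points[i], points[j]) for i, j in pairs}

square_distortion = distortion(graph_dist, embed_dist)

# Rescaling every embedded distance by the same factor leaves distortion unchanged.
scaled_dist = {p: 3.0 * d for p, d in embed_dist.items()}
scaled_distortion = distortion(graph_dist, scaled_dist)
```

In this toy case the adjacent corners are preserved exactly while the diagonals are contracted from 2 to √2, so the distortion is √2, and the uniformly rescaled embedding gives the same value, which is the scale invariance noted above.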

To answer this, the authors introduce a synthetic Pairwise Distance Prediction (PDP) task. For a given graph, every unordered node pair (i, j) is assigned its shortest‑path distance D_ij as the regression target. The encoder h_ϕ maps node features to latent points z_i in Euclidean space ℝ^d, the Poincaré ball B^d_c, or the Lorentz hyperboloid L^d_c. A simple linear decoder predicts D̂_ij = a·d_M(z_i, z_j) + b. Training minimizes a stress loss L_stress = (1/|P|) Σ_{(i,j)∈P} ((D̂_ij – D_ij)^2 / D_ij^2), where P is the set of node pairs; because each squared error is normalized by the true distance, the loss directly penalizes relative metric distortion.
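The decoder and loss above can be sketched in a few lines of plain Python. This is an illustrative sketch, not the authors' implementation: the function names are hypothetical, the Poincaré distance uses the standard closed form for a ball of curvature −c, and the decoder parameters `a` and `b` are passed in by hand rather than learned.

```python
import math

def poincare_distance(u, v, c=1.0):
    """Geodesic distance in the Poincaré ball of curvature -c.

    Standard closed form; requires c * ||u||^2 < 1 and c * ||v||^2 < 1.
    """
    sq_diff = sum((x - y) ** 2 for x, y in zip(u, v))
    sq_u = sum(x * x for x in u)
    sq_v = sum(y * y for y in v)
    arg = 1.0 + 2.0 * c * sq_diff / ((1.0 - c * sq_u) * (1.0 - c * sq_v))
    return math.acosh(arg) / math.sqrt(c)

def stress_loss(latent_dists, target_dists, a=1.0, b=0.0):
    """Mean of ((a * d_M + b - D)^2 / D^2) over the given pairs."""
    total = 0.0
    for d_m, d_g in zip(latent_dists, target_dists):
        pred = a * d_m + b          # linear decoder from the text
        total += (pred - d_g) ** 2 / d_g ** 2
    return total / len(target_dists)

# Hypothetical latent points inside the unit ball and made-up target distances.
z = [(0.0, 0.0), (0.5, 0.0), (0.0, 0.5)]
pairs = [(0, 1), (0, 2), (1, 2)]
latent = [poincare_distance(z[i], z[j]) for i, j in pairs]
targets = [1.0, 1.0, 2.0]
loss = stress_loss(latent, targets)
```

Dividing by D_ij^2 makes the loss a relative error, so a one-hop mistake on a distant pair costs less than the same mistake on adjacent nodes.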

Two synthetic graphs are used: a balanced 5‑ary tree (|V|=781) and a 28×28 grid (|V|=784), both with sparse Gaussian node features. Experiments sweep embedding dimensions d ∈ {3,8,16,32,64,128} and compare four models: Euclidean GCN, Euclidean GAT, hyperbolic HGCN (tangent‑space) and HyboNet (fully hyperbolic). Results on the tree show that hyperbolic models achieve dramatically lower stress at low dimensions (especially HyboNet at d=3), confirming that negative curvature provides a strong inductive bias for compactly representing hierarchical structures. As d grows, Euclidean models close the gap, and at d=128 all methods reach comparable stress. On the grid, the opposite pattern emerges: Euclidean models consistently attain lower stress, while HyboNet struggles due to its fixed curvature. HGCN performs best among hyperbolic variants because its learnable curvature can adapt toward an effectively Euclidean regime.
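As a sanity check on the graph sizes quoted above, the following sketch builds both graphs with the standard library and computes shortest-path targets by breadth-first search. The tree depth of 4 is inferred from |V| = 781 (1 + 5 + 25 + 125 + 625) rather than stated in the summary, and all function names are illustrative.

```python
from collections import deque

def balanced_tree(branching, depth):
    """Adjacency list of a balanced tree; node 0 is the root."""
    adj = {0: []}
    frontier = [0]
    for _level in range(depth):
        next_frontier = []
        for parent in frontier:
            for _child_idx in range(branching):
                child = len(adj)            # next unused node id
                adj[child] = [parent]
                adj[parent].append(child)
                next_frontier.append(child)
        frontier = next_frontier
    return adj

def grid_graph(rows, cols):
    """Adjacency list of a rows x cols lattice with 4-neighbour connectivity."""
    adj = {(r, c): [] for r in range(rows) for c in range(cols)}
    for r, c in adj:
        for dr, dc in ((1, 0), (0, 1)):     # link to the node below and to the right
            nb = (r + dr, c + dc)
            if nb in adj:
                adj[(r, c)].append(nb)
                adj[nb].append((r, c))
    return adj

def bfs_distances(adj, source):
    """Shortest-path (hop) distances from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nb in adj[node]:
            if nb not in dist:
                dist[nb] = dist[node] + 1
                queue.append(nb)
    return dist

tree = balanced_tree(5, 4)    # depth 4 is an inference from |V| = 781
grid = grid_graph(28, 28)
tree_from_root = bfs_distances(tree, 0)
grid_from_corner = bfs_distances(grid, (0, 0))
```

Running `bfs_distances` from every node yields the full matrix of PDP targets D_ij for either graph.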

Beyond the synthetic experiments, the paper evaluates two real‑world downstream tasks: link prediction and node classification on several benchmark datasets. For each task, the authors report both predictive performance (AUC or accuracy) and embedding distortion measured on the learned latent space. The findings reveal a clear alignment effect: link prediction benefits from hyperbolic geometry because the existence of an edge is directly tied to graph distance, leading to higher AUC and lower distortion for HGNNs. In contrast, node classification relies more on feature separability than on preserving graph distances; Euclidean GCN/GAT match or surpass HGNNs, and the distortion advantage of hyperbolic embeddings does not translate into better accuracy.

Theoretical contributions include: (i) proof that HGNNs can recover low‑distortion embeddings of trees under supervision, (ii) analysis showing that distortion reduction alone does not guarantee downstream gains, and (iii) formalization of geometry‑task alignment as a necessary condition for HGNN superiority.

Practical guidance distilled from the study:

- Use HGNNs for tasks where the target is intrinsically metric‑aligned with the graph (e.g., link prediction, hierarchical relationship inference).

- Prefer Euclidean GNNs for node classification or any task where label information is weakly correlated with graph distances.

- When employing hyperbolic models, consider tangent‑space approaches (HGCN) with learnable curvature for flexibility across datasets.

- Benchmarking hyperbolic advantages requires tasks that explicitly penalize metric distortion; otherwise, reported gains may be illusory.

In summary, the paper shifts the evaluation of hyperbolic graph neural networks from a sole focus on graph hyperbolicity to a dual focus that also includes the alignment between the task’s objective and the graph’s metric structure. This nuanced perspective explains why HGNNs sometimes outperform Euclidean baselines and sometimes do not, providing a clearer roadmap for researchers and practitioners choosing the appropriate geometry for graph representation learning.
