CritiqueCrew: Orchestrating Multi-Perspective Conversational Design Critique

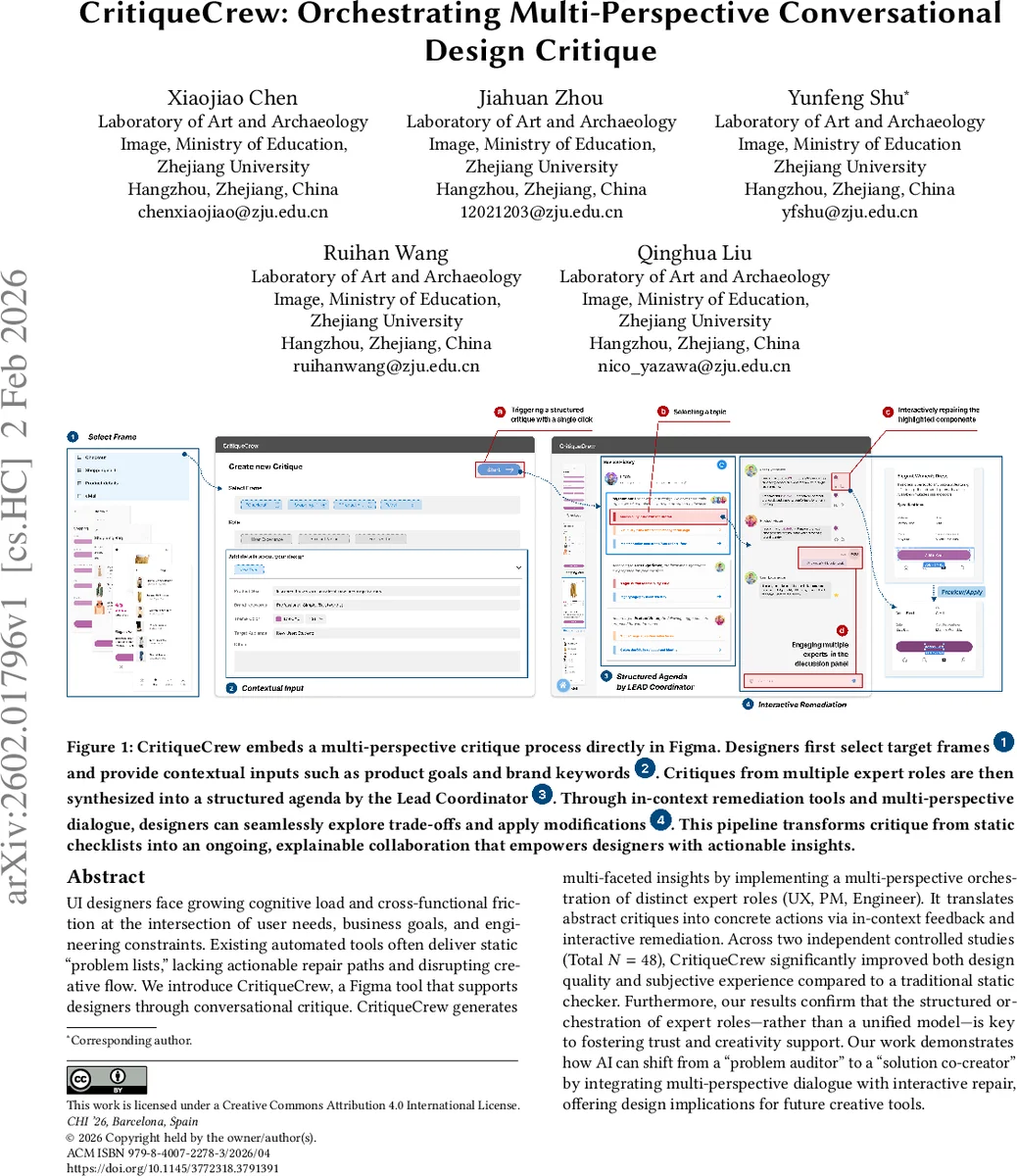

UI designers face growing cognitive load and cross-functional friction at the intersection of user needs, business goals, and engineering constraints. Existing automated tools often deliver static “problem lists” that lack actionable repair paths and disrupt creative flow. We introduce CritiqueCrew, a Figma tool that supports designers through conversational critique. CritiqueCrew generates multi-faceted insights through a multi-perspective orchestration of distinct expert roles (UX, PM, Engineer). It translates abstract critiques into concrete actions via in-context feedback and interactive remediation. Across two independent controlled studies (total N = 48), CritiqueCrew significantly improved both design quality and subjective experience compared to a traditional static checker. Furthermore, our results confirm that the structured orchestration of expert roles, rather than a unified model, is key to fostering trust and creativity support. Our work demonstrates how AI can shift from a “problem auditor” to a “solution co-creator” by integrating multi-perspective dialogue with interactive repair, offering design implications for future creative tools.

💡 Research Summary

CritiqueCrew is a novel AI‑augmented design critique tool that integrates directly into the Figma design environment. The system addresses a well‑documented problem in existing automated UI evaluation tools: they typically present designers with static “problem lists” that lack actionable remediation and disrupt creative flow. To overcome this, CritiqueCrew adopts a multi‑perspective orchestration architecture, modeling three distinct expert roles—User Experience (UX) designer, Product Manager (PM), and Engineer—each powered by a large language model (LLM) with role‑specific prompts and evaluation criteria.

When a designer selects a frame and supplies contextual information (product goals, brand keywords, etc.), each role independently generates a set of issues and concrete suggestions. A central Lead Coordinator module then synthesizes these outputs into a structured agenda, prioritizing and reconciling potentially conflicting feedback. Crucially, the system does not stop at textual advice; it maps each suggestion onto the Figma canvas using real‑time highlights, in‑context annotations, and live preview of design changes. Designers can accept, reject, or modify the AI‑proposed edits directly within the canvas, creating a closed loop of critique‑to‑action that preserves designer agency while providing concrete repair paths.
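The critique-to-action loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the `Suggestion` fields, the 1 to 3 severity scale, the `node_id` values, and the sort order used by `build_agenda` are all hypothetical, and the role outputs are hard-coded where the real system would call an LLM.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PENDING = "pending"
    ACCEPTED = "accepted"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    role: str            # "UX", "PM", or "Engineer"
    node_id: str         # hypothetical id of the canvas element being critiqued
    issue: str
    proposed_edit: str
    severity: int        # hypothetical scale: 1 (minor) to 3 (critical)
    decision: Decision = Decision.PENDING


def build_agenda(per_role: dict[str, list[Suggestion]]) -> list[Suggestion]:
    """Merge the independently generated role outputs into one prioritized agenda."""
    merged = [s for suggestions in per_role.values() for s in suggestions]
    # Assumed ordering: most severe first, ties grouped by canvas element.
    return sorted(merged, key=lambda s: (-s.severity, s.node_id))


def review(agenda: list[Suggestion], choices: dict[int, Decision]) -> list[Suggestion]:
    """Record the designer's decision per agenda index and return the accepted items."""
    for index, decision in choices.items():
        agenda[index].decision = decision
    return [s for s in agenda if s.decision is Decision.ACCEPTED]


# Stand-in role outputs; a real system would generate these with role-specific prompts.
per_role = {
    "UX": [Suggestion("UX", "button-1", "Low-contrast label", "Darken label text", 3)],
    "PM": [Suggestion("PM", "hero-1", "Headline omits the product goal", "Rewrite headline", 2)],
    "Engineer": [Suggestion("Engineer", "button-1", "Custom shadow is costly to build",
                            "Use the standard shadow style", 1)],
}

agenda = build_agenda(per_role)
accepted = review(agenda, {0: Decision.ACCEPTED, 2: Decision.REJECTED})
```

Keeping the decision state on each agenda item means nothing is applied until the designer explicitly accepts it, which is one simple way to preserve the designer agency the summary emphasizes.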

Two controlled user studies (total N = 48) evaluate the efficacy of this approach. Study 1 compares CritiqueCrew against a state‑of‑the‑art static checker built on the UICrit framework. Design quality is operationalized along two dimensions: issue coverage (the breadth of problems identified) and solution effectiveness (expert ratings of visual aesthetics, usability, compliance, and overall design integrity). Results show that CritiqueCrew improves issue coverage by roughly 27 % and raises solution effectiveness scores by 0.42 points on a standardized scale, both statistically significant. Subjective measures also reveal reduced cognitive load, higher trust, and better perceived usability.

Study 2 isolates the contribution of the multi‑perspective architecture by pitting CritiqueCrew’s role‑based system against a unified‑expert baseline that uses the same underlying LLM but without role separation. The multi‑perspective condition again outperforms the unified model, with higher trust (average +1.3 points), higher creativity support (+1.5 points), and lower cognitive load (−0.9 points), indicating that role orchestration broadens problem discovery and yields more coherent, actionable solutions.

Technical implementation hinges on carefully engineered prompts: each role receives an “expert profile” that encodes its priorities (e.g., UX focuses on heuristic violations, PM on business alignment, Engineer on feasibility). The Lead Coordinator employs rule‑based synthesis to resolve conflicts and generate a unified agenda. Integration with Figma is achieved via the plugin API and a WebSocket channel that streams AI responses to the canvas in real time. This design balances AI automation with human control, aligning with mixed‑initiative interaction principles from the HCI literature.
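As a rough illustration of the two ideas in this paragraph, the sketch below builds a role-specific prompt from an expert profile and resolves conflicting proposals with a fixed rule. The summary does not state the actual prompt wording or the coordinator's rules, so the prompt template, the `ROLE_PRECEDENCE` order, and the proposal tuples are assumptions made only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExpertProfile:
    role: str
    focus: str  # the priority this role evaluates against


# Priorities follow the summary; the exact wording is illustrative.
PROFILES = {
    "UX": ExpertProfile("UX designer", "heuristic violations"),
    "PM": ExpertProfile("Product Manager", "business alignment"),
    "Engineer": ExpertProfile("Engineer", "implementation feasibility"),
}

# Hypothetical rule: when roles disagree about one element, earlier entries win.
ROLE_PRECEDENCE = ["UX", "Engineer", "PM"]


def build_prompt(profile: ExpertProfile, context: str) -> str:
    """Assemble a role-specific critique prompt (template is an assumption)."""
    return (
        f"Role: {profile.role}\n"
        f"Focus: {profile.focus}\n"
        f"Product context: {context}\n"
        "List each issue you find and one concrete fix for it."
    )


def resolve_conflicts(proposals: list[tuple[str, str, str]]) -> dict[str, tuple[str, str]]:
    """Keep one (role, edit) per canvas element, chosen by role precedence.

    Each proposal is a (role_key, node_id, edit) tuple.
    """
    winners: dict[str, tuple[str, str]] = {}
    for role, node_id, edit in proposals:
        current = winners.get(node_id)
        if current is None or ROLE_PRECEDENCE.index(role) < ROLE_PRECEDENCE.index(current[0]):
            winners[node_id] = (role, edit)
    return winners


prompt = build_prompt(PROFILES["UX"], "Checkout page for a grocery delivery app")

proposals = [
    ("PM", "cta", "Make the button larger"),
    ("Engineer", "cta", "Keep the standard button size"),
    ("UX", "nav", "Add text labels to icons"),
]
resolved = resolve_conflicts(proposals)
```

A fixed precedence list is about the simplest rule-based policy possible; it is deterministic and easy to audit, but it cannot weigh trade-offs, which matches the limitation about simple conflict-resolution logic noted later in the summary.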

The authors acknowledge limitations. LLM outputs can still be inconsistent, and the conflict‑resolution logic is relatively simple. Participants were experienced designers, so generalizability to novices remains uncertain. Future work is proposed to enrich negotiation protocols among roles, incorporate multimodal inputs such as sketches or user flow diagrams, and expand the expert panel to include additional stakeholders (e.g., accessibility specialists, data analysts).

In sum, CritiqueCrew demonstrates that embedding multi‑perspective conversational critique with interactive, in‑context remediation can substantially improve design outcomes, lower mental effort, and foster a more collaborative AI‑human partnership. The work shifts AI’s role from a passive “problem auditor” to an active “solution co‑creator,” offering a compelling blueprint for next‑generation creative support tools.
