Data Distribution Matters: A Data-Centric Perspective on Context Compression for Large Language Model

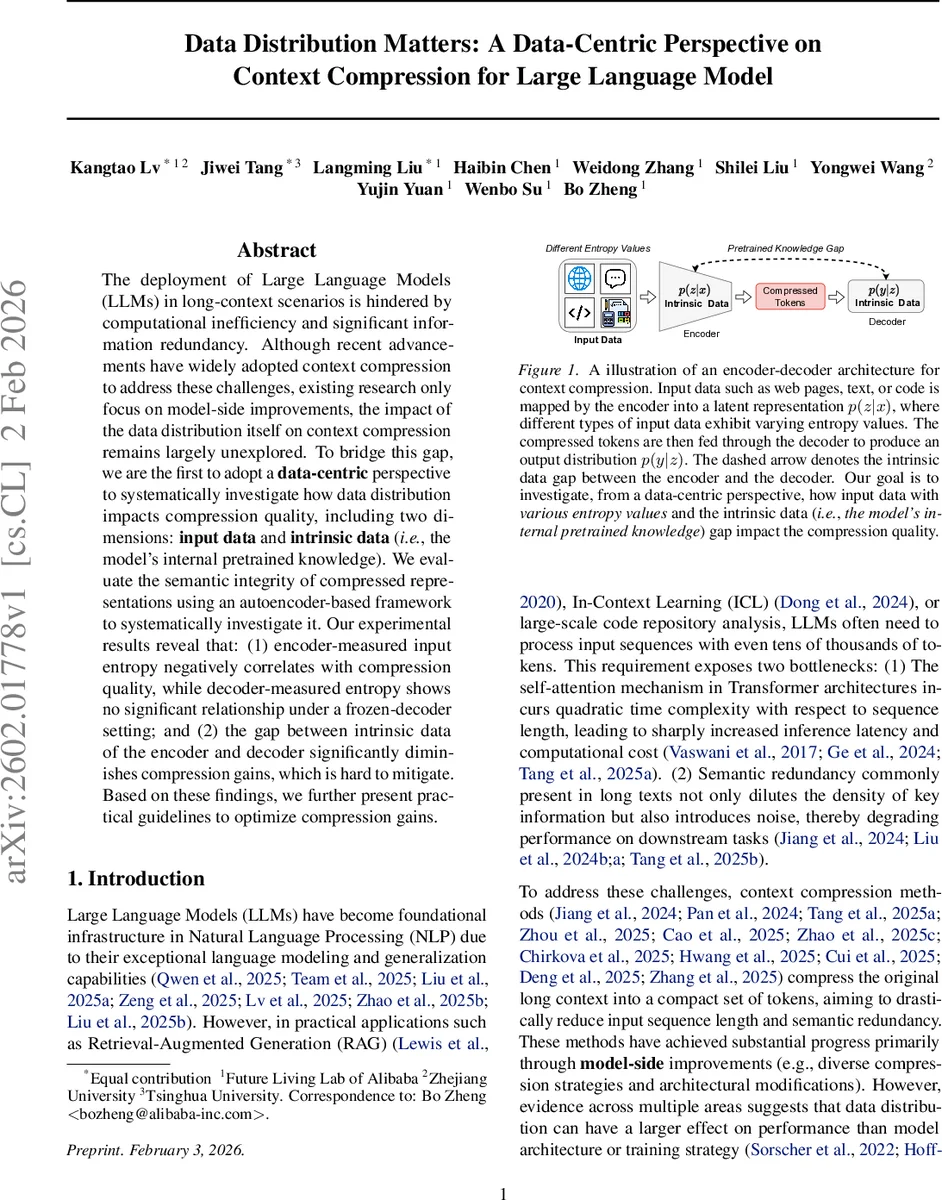

The deployment of Large Language Models (LLMs) in long-context scenarios is hindered by computational inefficiency and significant information redundancy. Although recent advancements have widely adopted context compression to address these challenges, existing research only focus on model-side improvements, the impact of the data distribution itself on context compression remains largely unexplored. To bridge this gap, we are the first to adopt a data-centric perspective to systematically investigate how data distribution impacts compression quality, including two dimensions: input data and intrinsic data (i.e., the model’s internal pretrained knowledge). We evaluate the semantic integrity of compressed representations using an autoencoder-based framework to systematically investigate it. Our experimental results reveal that: (1) encoder-measured input entropy negatively correlates with compression quality, while decoder-measured entropy shows no significant relationship under a frozen-decoder setting; and (2) the gap between intrinsic data of the encoder and decoder significantly diminishes compression gains, which is hard to mitigate. Based on these findings, we further present practical guidelines to optimize compression gains.

💡 Research Summary

The paper addresses a critical bottleneck in deploying large language models (LLMs) for long‑context tasks: the quadratic computational cost of self‑attention and the prevalence of redundant information in lengthy inputs. While many recent works have introduced context‑compression techniques—ranging from hard token selection to soft latent‑vector summarization—most of these efforts focus on model‑side innovations such as novel architectures or training tricks. The authors argue that the distribution of the data itself, both the external input data and the internal “intrinsic data” (i.e., the pretrained knowledge embedded in the encoder and decoder), can have a comparable or even larger impact on compression quality, a factor that has been largely ignored.

To investigate this hypothesis, the authors adopt a data‑centric perspective and construct an auto‑encoder (AE) framework in which a separately pretrained encoder compresses a long text into a short sequence of latent tokens, and a frozen decoder attempts to reconstruct the original text from these tokens. Compression quality is measured by the fidelity of reconstruction using three complementary metrics: F1 (token‑level precision/recall), ROUGE‑L (longest‑common‑subsequence similarity), and BLEU (4‑gram overlap). By keeping the decoder fixed during fine‑tuning, the experimental design isolates the effect of the encoder’s adaptation to a given decoder, allowing a clean analysis of how data characteristics influence performance.

The study uses six massive datasets derived from The Pile, each containing 50 billion tokens tokenized with the Qwen3 tokenizer. Dataset D₁ consists solely of general web text (Common Crawl). Datasets D₂–D₆ progressively replace portions of D₁ with a mixture of ArXiv papers, GitHub code, and mathematics problems in a fixed 2:2:1 ratio, increasing the proportion α from 1⁄6 to 5⁄6. This design creates a controlled gradient of input entropy and domain specificity, ranging from natural language to highly logical, code‑heavy content. The encoder and decoder are pretrained from scratch on disjoint subsets (e.g., encoder on D₁, decoder on a different Dᵢ) to deliberately introduce an “intrinsic data gap” between their internal priors.

Key findings emerge from systematic experiments:

-

Input Entropy vs. Compression Quality – The entropy measured by the encoder (i.e., the Shannon entropy of the input distribution as seen by the encoder) exhibits a strong negative correlation with reconstruction scores. Higher‑entropy inputs (e.g., code or math) lead to lower BLEU, ROUGE‑L, and F1 after compression, indicating that more information‑dense texts are harder to compress without loss. In contrast, the decoder’s entropy (computed from its own pretrained distribution) shows no statistically significant relationship with reconstruction quality, suggesting that the decoder’s internal prior dominates its perception of complexity and can mask input variability when the decoder is frozen.

-

Intrinsic Data Gap as a Bottleneck – When the encoder and decoder are trained on mismatched domains (e.g., a general‑text encoder paired with a code‑specialized decoder), compression performance deteriorates sharply. BLEU drops by over 20 % and F1/ROUGE‑L decline similarly, even though the encoder is fine‑tuned on the target data. This demonstrates that the decoder’s ability to interpret the latent tokens is constrained by its own pretrained knowledge; a large intrinsic data gap creates a “decoder‑centric bottleneck” that limits overall compression gains.

-

Scalability Observations – Scaling model size (from 0.5 B to 2 B parameters) improves absolute reconstruction scores, but the relative impact of data distribution remains dominant. Larger models still suffer when the intrinsic data gap is wide, confirming that simply increasing capacity does not solve the data‑distribution problem.

Based on these insights, the authors propose practical guidelines:

- Entropy‑aware Compression – Estimate the input entropy before compression; for high‑entropy segments, either reduce compression ratio or apply additional summarization steps to preserve critical information.

- Domain‑Aligned Pretraining – Align encoder and decoder pretraining corpora as closely as possible, or employ multi‑domain pretraining to reduce the intrinsic data gap.

- Decoder Adaptation – Instead of keeping the decoder completely frozen, consider lightweight fine‑tuning (e.g., adapters) on target domains to broaden its internal prior without sacrificing modularity.

- Dynamic Ratio Adjustment – Use entropy estimates to dynamically adjust the number of latent slots per input segment, allocating more capacity to complex regions.

In summary, the paper convincingly demonstrates that data distribution—both external input entropy and internal pretrained knowledge—plays a decisive role in the effectiveness of context compression for LLMs. By shifting focus from purely model‑centric improvements to a balanced data‑centric strategy, the work provides a roadmap for building more robust, efficient, and domain‑generalizable compression pipelines, ultimately enabling LLMs to handle longer contexts with reduced computational overhead while maintaining semantic fidelity.

Comments & Academic Discussion

Loading comments...

Leave a Comment