MagicFuse: Single Image Fusion for Visual and Semantic Reinforcement

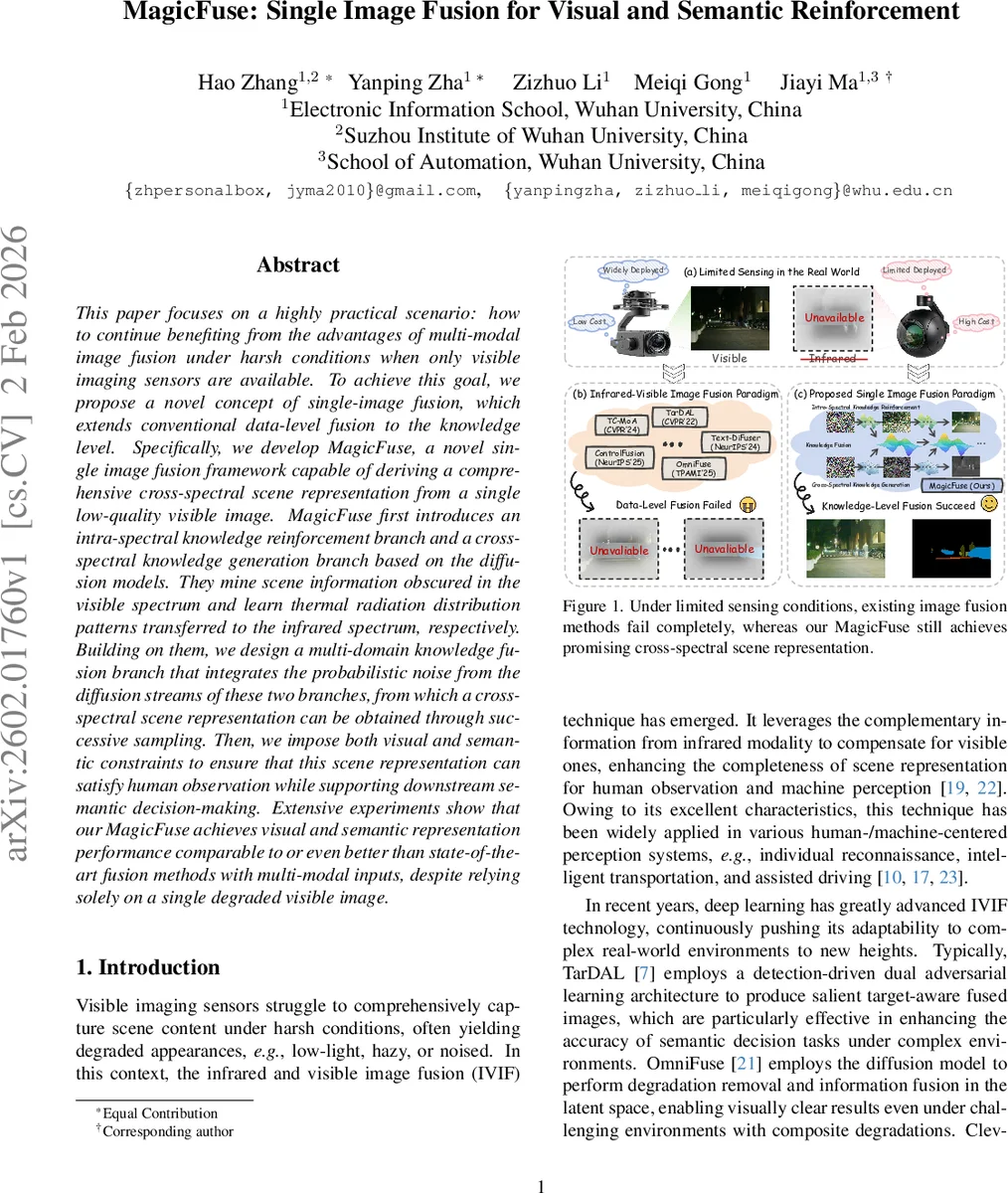

This paper focuses on a highly practical scenario: how to continue benefiting from the advantages of multi-modal image fusion under harsh conditions when only visible imaging sensors are available. To achieve this goal, we propose a novel concept of single-image fusion, which extends conventional data-level fusion to the knowledge level. Specifically, we develop MagicFuse, a novel single image fusion framework capable of deriving a comprehensive cross-spectral scene representation from a single low-quality visible image. MagicFuse first introduces an intra-spectral knowledge reinforcement branch and a cross-spectral knowledge generation branch based on the diffusion models. They mine scene information obscured in the visible spectrum and learn thermal radiation distribution patterns transferred to the infrared spectrum, respectively. Building on them, we design a multi-domain knowledge fusion branch that integrates the probabilistic noise from the diffusion streams of these two branches, from which a cross-spectral scene representation can be obtained through successive sampling. Then, we impose both visual and semantic constraints to ensure that this scene representation can satisfy human observation while supporting downstream semantic decision-making. Extensive experiments show that our MagicFuse achieves visual and semantic representation performance comparable to or even better than state-of-the-art fusion methods with multi-modal inputs, despite relying solely on a single degraded visible image.

💡 Research Summary

MagicFuse tackles a practical yet under‑explored problem: how to retain the benefits of infrared‑visible image fusion when only a single degraded visible‑light image is available. Traditional infrared‑visible image fusion (IVIF) methods assume the simultaneous presence of both modalities, which is often unrealistic because infrared sensors are expensive or unavailable in many real‑world scenarios. The authors therefore introduce the concept of Single‑Image Fusion (SIF), shifting the fusion paradigm from the data level to the knowledge level.

The core of MagicFuse consists of three diffusion‑based components. First, an intra‑spectral knowledge reinforcement (IKR) branch uses a latent diffusion model (LDM) to restore missing visual details (color, texture, fine structures) from a low‑quality visible image. Second, a cross‑spectral knowledge generation (CKG) branch, also an LDM but trained on paired visible‑infrared data, learns to translate visible features into infrared‑domain representations, effectively synthesizing thermal radiation patterns that are absent in the input. Both branches operate in latent space, employing a forward Markovian noise‑injection process and a reverse denoising process that predicts the noise term ϵ̂ at each timestep.

The knowledge extracted from IKR (ϵψₜ) and CKG (ϵφₜ) is not a final image but a probabilistic noise representation of the respective knowledge. MagicFuse fuses these two streams in a Multi‑Domain Knowledge Fusion (MKF) module. At each diffusion timestep t, a learned weighting network F computes a scalar weight w based on (i) the estimated “clean” latent from each branch (e_zψₜ→0, e_zφₜ→0), (ii) the current fused latent z_fₜ, (iii) the raw noises ϵψₜ and ϵφₜ, and (iv) the timestep index. The fused noise is then ϵ_fₜ = w·ϵψₜ + (1‑w)·ϵφₜ, which is fed back into the reverse diffusion process to gradually generate a “Magic Image” that carries both visual fidelity and inferred infrared characteristics.

To guarantee that the generated representation is useful for downstream machine perception, the authors embed a segmentation head S within the MKF branch. The head consumes attention maps from the fusion network and predicts semantic segmentation maps. The segmentation loss L_s is jointly optimized with the diffusion‑based fusion loss L_f, encouraging the fused latent to be semantically meaningful. Moreover, the predicted segmentation is used to derive a radiation‑category mask M (e.g., pedestrian, vehicle), which further refines the weighting w via w_r = M·min(w, τ) + (1‑M)·w, ensuring that thermally salient objects are preserved during fusion.

Training proceeds by minimizing a combined objective:

min_{ω_f, ω_s} L_f(F(kψ, kφ; ω_f)) + L_s(S(ζ; ω_s)),

where ω_f and ω_s are the parameters of the fusion network and segmentation head, respectively. The diffusion models are pre‑trained on large paired datasets (e.g., KITTI‑IR, FLIR‑ADAS) and then fine‑tuned within the MagicFuse pipeline.

Experimental evaluation covers a range of degradation conditions (low light, haze, noise) and compares MagicFuse against state‑of‑the‑art multimodal fusion methods (OmniFuse, Text‑DiFuse, ControlFusion) as well as single‑modality restoration baselines. Metrics include PSNR/SSIM for visual quality and mIoU/mAP for semantic performance. MagicFuse consistently matches or exceeds the multimodal baselines despite using only a single visible input. Notably, it produces clear thermal‑like highlights of objects that would normally require an infrared sensor, demonstrating successful cross‑spectral inference. An analysis of the learned weight w shows a natural progression: early diffusion steps favor visual reinforcement (w≈0.7‑0.8), while later steps shift toward infrared generation (w≈0.3‑0.4).

The paper’s strengths lie in its practical relevance, the elegant use of diffusion noise as a unifying knowledge carrier, and the explicit visual‑semantic coupling that makes the output useful for both human observers and AI systems. Limitations include the dependence on large paired visible‑infrared datasets for pre‑training, the computational cost of diffusion sampling (which may hinder real‑time deployment), and the relatively simple design of the weighting network, which might not capture more complex inter‑modal relationships in highly corrupted scenes.

Future work suggested by the authors includes lightweight diffusion variants for faster inference, multi‑scale or self‑attention based weight estimation, and exploring unsupervised or weakly‑supervised strategies to reduce the need for paired infrared data.

In summary, MagicFuse pioneers the single‑image fusion paradigm, demonstrating that a well‑designed diffusion‑based knowledge extraction and fusion pipeline can recover both visual detail and inferred infrared information from a lone degraded visible image, thereby extending the applicability of image‑fusion technology to cost‑constrained or sensor‑limited environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment