Mind-Brush: Integrating Agentic Cognitive Search and Reasoning into Image Generation

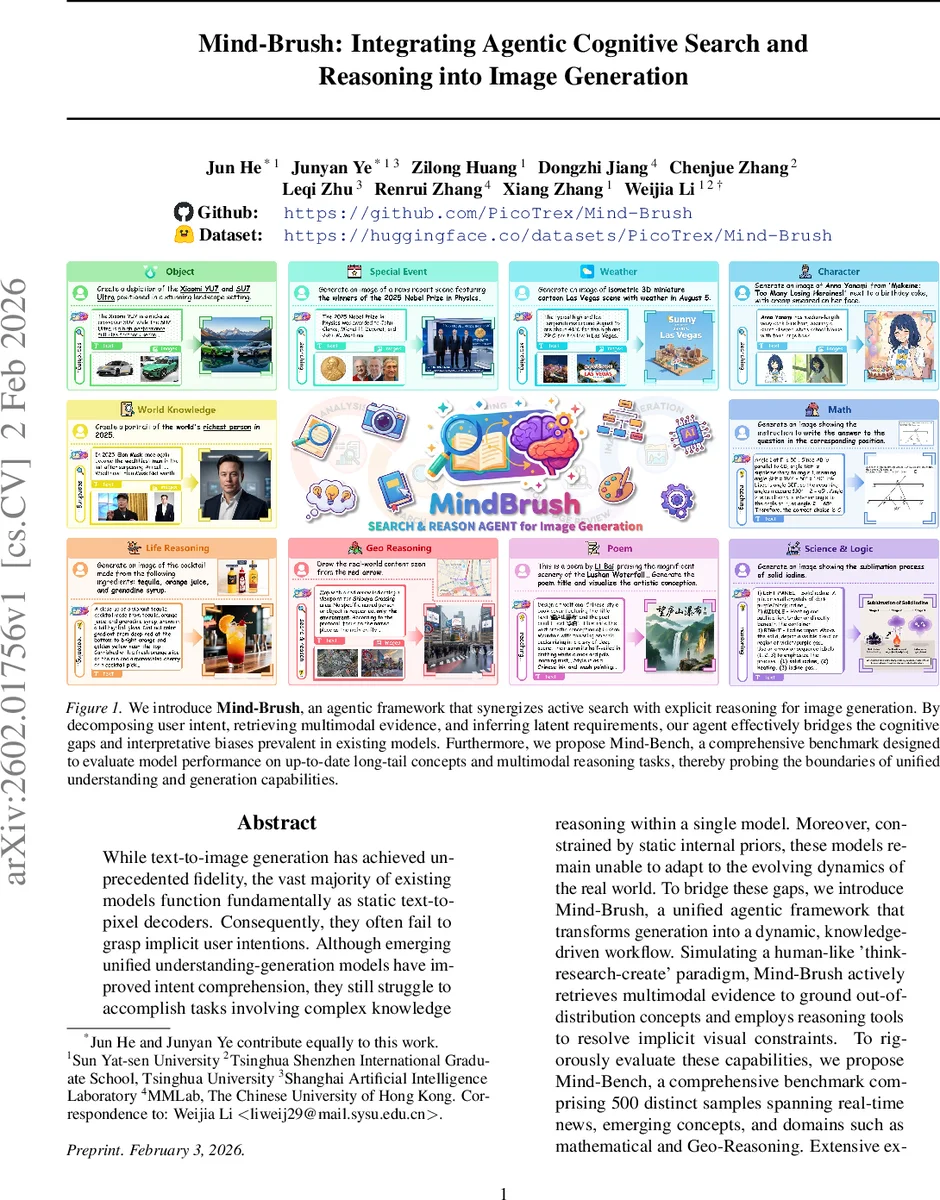

While text-to-image generation has achieved unprecedented fidelity, the vast majority of existing models function fundamentally as static text-to-pixel decoders. Consequently, they often fail to grasp implicit user intentions. Although emerging unified understanding-generation models have improved intent comprehension, they still struggle to accomplish tasks involving complex knowledge reasoning within a single model. Moreover, constrained by static internal priors, these models remain unable to adapt to the evolving dynamics of the real world. To bridge these gaps, we introduce Mind-Brush, a unified agentic framework that transforms generation into a dynamic, knowledge-driven workflow. Simulating a human-like ’think-research-create’ paradigm, Mind-Brush actively retrieves multimodal evidence to ground out-of-distribution concepts and employs reasoning tools to resolve implicit visual constraints. To rigorously evaluate these capabilities, we propose Mind-Bench, a comprehensive benchmark comprising 500 distinct samples spanning real-time news, emerging concepts, and domains such as mathematical and Geo-Reasoning. Extensive experiments demonstrate that Mind-Brush significantly enhances the capabilities of unified models, realizing a zero-to-one capability leap for the Qwen-Image baseline on Mind-Bench, while achieving superior results on established benchmarks like WISE and RISE.

💡 Research Summary

Mind‑Brush introduces an agentic framework that transforms text‑to‑image generation from a static decoding process into a dynamic “think‑research‑create” workflow. The system first performs Cognitive Gap Detection by converting the user’s prompt (and optional reference image) into a structured 5W1H representation and identifying missing entities, relations, or logical dependencies that are not covered by the model’s internal knowledge. These deficiencies are formalized as a set of atomic questions (Q_gap).

Based on the nature of Q_gap, a dynamic execution policy selects between two specialized agents:

-

Search Agent (A_search) – Generates precise textual and visual queries, retrieves relevant documents (T_ref) and reference images (I_ref) from open‑world sources (web, Wikipedia, domain‑specific databases). Retrieved information is injected back into the original prompt and calibrated for visual queries, thereby grounding out‑of‑distribution concepts and real‑time events.

-

Reasoning Agent (A_reasoning) – Employs a chain‑of‑thought (CoT) approach to solve logical, mathematical, or spatial problems that require multi‑step deduction. It consumes the accumulated search evidence together with the original instruction and produces explicit conclusions (R_cot).

All evidence (E = T_ref ∪ I_ref ∪ R_cot) is passed to a Concept Review Agent, which filters noise, ensures logical consistency, and rewrites the information into a Master Prompt (P_master) that explicitly enumerates visual attributes previously implicit. Finally, a Unified Image Generation Agent synthesizes the image conditioned on both P_master and adaptive visual cues (either retrieved references or the original reference image).

The entire process is formalized as a hierarchical sequential decision‑making problem M = ⟨S, A, π, E⟩, where the state S contains the user input, evidence buffer, and intermediate images; the action set A includes the meta‑action (gap detection) and execution actions (search, reason); and the policy π dynamically composes a trajectory based on the detected gaps. This architecture allows the system to iteratively refine its understanding, acquire up‑to‑date knowledge, and perform complex reasoning before generation, overcoming the static knowledge ceiling of conventional unified multimodal models (UMMs).

To evaluate these capabilities, the authors present Mind‑Bench, a new benchmark comprising 500 samples across ten categories: real‑time news, emerging IP concepts, novel terminology, cutting‑edge scientific developments, mathematical problems, geographic reasoning, physics principles, socio‑political issues, artistic trends, and composite multimodal scenarios. Each sample requires external knowledge retrieval and/or multi‑step logical inference, thereby testing the agentic loop rather than mere prompt‑following.

Experimental results show that integrating Mind‑Brush with the Qwen‑Image backbone raises the accuracy on Mind‑Bench from 0.02 to 0.31—a roughly fifteen‑fold improvement. On established knowledge‑driven benchmarks, Mind‑Brush achieves +25.8 % WiScore on WISE and +27.3 % accuracy on RISEBench, outperforming state‑of‑the‑art baselines. Ablation studies confirm that both the search and reasoning components are essential: disabling search collapses performance on OOD and real‑time items, while removing reasoning harms mathematical and spatial tasks.

The paper acknowledges limitations: dependence on the quality of external retrieval engines, added latency from the search‑reason‑generate loop, and current focus on text‑image modalities (extension to video, 3D, or audio remains future work). Proposed future directions include efficient caching of retrieved facts, meta‑learning for better action routing, integration of domain‑specific toolchains (e.g., symbolic math solvers, GIS APIs), and scaling the agentic paradigm to richer multimodal generation.

In summary, Mind‑Brush demonstrates that coupling active multimodal retrieval and explicit logical reasoning with image synthesis yields a substantial “zero‑to‑one” capability leap, moving generative AI closer to human‑like cognition and real‑world adaptability.

Comments & Academic Discussion

Loading comments...

Leave a Comment