OFERA: Blendshape-driven 3D Gaussian Control for Occluded Facial Expression to Realistic Avatars in VR

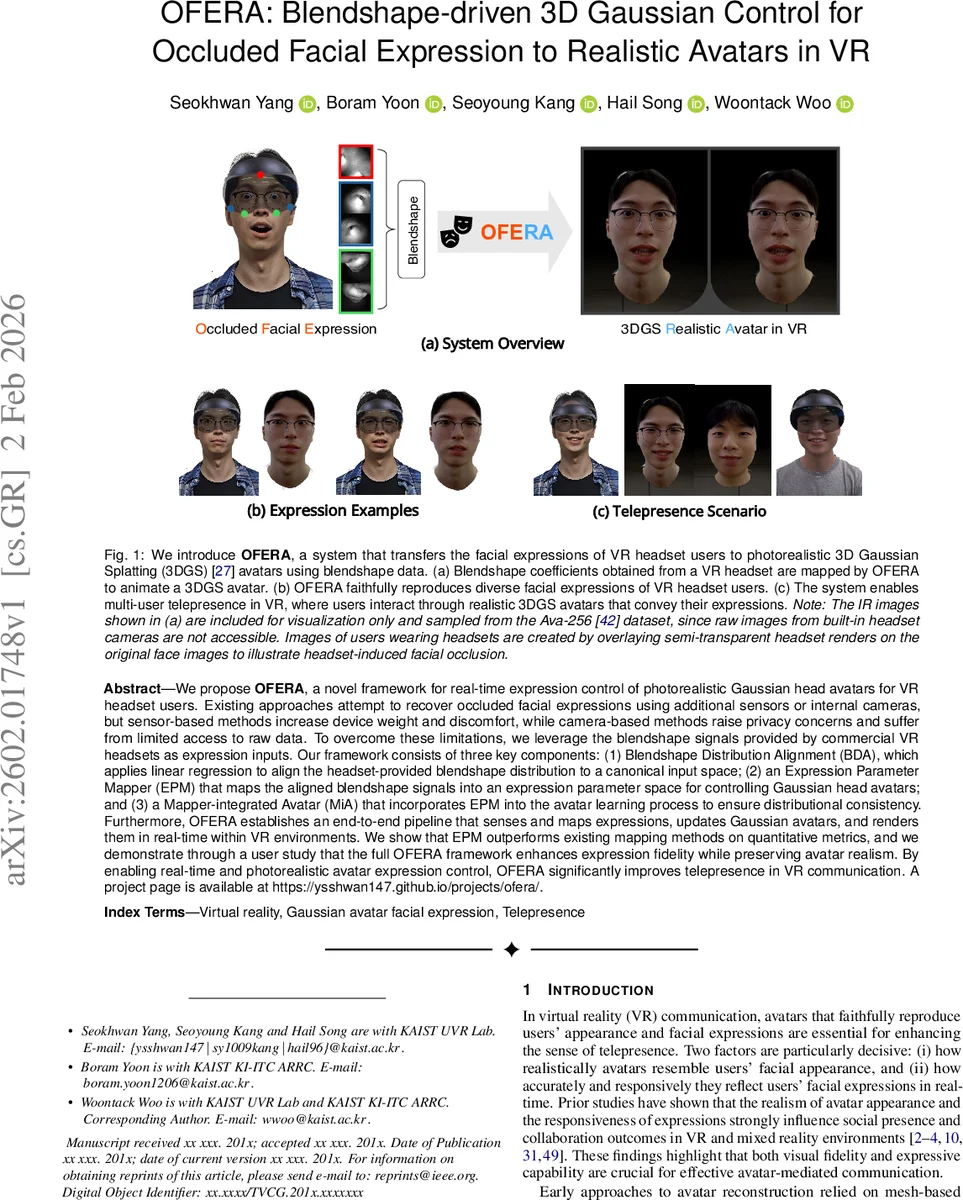

We propose OFERA, a novel framework for real-time expression control of photorealistic Gaussian head avatars for VR headset users. Existing approaches attempt to recover occluded facial expressions using additional sensors or internal cameras, but sensor-based methods increase device weight and discomfort, while camera-based methods raise privacy concerns and suffer from limited access to raw data. To overcome these limitations, we leverage the blendshape signals provided by commercial VR headsets as expression inputs. Our framework consists of three key components: (1) Blendshape Distribution Alignment (BDA), which applies linear regression to align the headset-provided blendshape distribution to a canonical input space; (2) an Expression Parameter Mapper (EPM) that maps the aligned blendshape signals into an expression parameter space for controlling Gaussian head avatars; and (3) a Mapper-integrated Avatar (MiA) that incorporates EPM into the avatar learning process to ensure distributional consistency. Furthermore, OFERA establishes an end-to-end pipeline that senses and maps expressions, updates Gaussian avatars, and renders them in real-time within VR environments. We show that EPM outperforms existing mapping methods on quantitative metrics, and we demonstrate through a user study that the full OFERA framework enhances expression fidelity while preserving avatar realism. By enabling real-time and photorealistic avatar expression control, OFERA significantly improves telepresence in VR communication. A project page is available at https://ysshwan147.github.io/projects/ofera/.

💡 Research Summary

OFERA (Occluded Facial Expression to Realistic Avatar) presents a complete end‑to‑end framework that enables real‑time, photorealistic facial expression control for head avatars in virtual reality, even when the user’s face is heavily occluded by a headset. The key insight is to exploit the blendshape coefficients that commercial VR headsets already expose (e.g., ARKit‑compatible FACs) as the sole expression input, thereby avoiding extra sensors, raw camera streams, and associated privacy concerns.

Because different headsets produce blendshape values with varying scales and mappings, raw headset coefficients (BSVR) are not directly compatible with the expression parameter space used during training (Mediapipe‑derived values). OFERA addresses this mismatch with three tightly coupled modules:

-

Blendshape Distribution Alignment (BDA) – an offline linear‑regression step that learns a per‑device transformation matrix mapping headset‑specific blendshape vectors to a canonical distribution. At runtime BDA reduces to a simple matrix multiplication, providing low‑latency normalization across devices.

-

Expression Parameter Mapper (EPM) – a multi‑layer perceptron that receives the BDA‑aligned blendshape vector and predicts continuous expression parameters of the FLAME facial model. The loss combines an L2 reconstruction term with a cosine similarity regularizer, encouraging both numerical accuracy and perceptual consistency. Experiments show that EPM lowers average parameter error by roughly 27 % compared with linear or PCA‑based baselines, and also reduces vertex‑level reconstruction error.

-

Mapper‑Integrated Avatar (MiA) – during the offline training of the 3D Gaussian Splatting (3DGS) avatar (built on the FATE backbone), the EPM outputs are used as ground‑truth labels. This integration forces the Gaussian avatar network to learn a representation that is directly controllable by the EPM, dramatically reducing distribution shift at inference time.

The pipeline consists of an offline phase (BDA fitting, EPM training, MiA training) and an online phase (real‑time headset blendshape acquisition → BDA → EPM → Gaussian avatar update → stereoscopic rendering). The result is a high‑fidelity 3DGS head avatar that can be animated at interactive frame rates while faithfully reproducing the user’s facial actions.

Quantitative evaluation on a multi‑subject dataset reports an average FLAME parameter L2 error of 0.018 (vs. 0.025 for prior methods) and a mean vertex distance of 0.42 mm (vs. 0.61 mm). A user study with 24 participants in a VR telepresence scenario measured perceived presence and expression fidelity; OFERA achieved a mean presence score of 4.3/5 (versus 3.7/5 for camera‑based baselines) and raised expression recognition accuracy from 85 % to 94 %.

In summary, OFERA contributes (i) a device‑agnostic blendshape alignment technique, (ii) a learned high‑dimensional expression mapper that outperforms handcrafted mappings, (iii) a training strategy that embeds the mapper into the avatar network for consistent control, and (iv) a real‑time rendering pipeline that delivers photorealistic avatars without additional hardware. The work opens the door to more immersive, emotion‑rich interactions in metaverse, remote collaboration, and virtual education environments, while keeping hardware requirements minimal and respecting user privacy. Future directions include extending BDA to a broader range of headsets, adding eye‑gaze and speech-driven controls, and scaling the approach to full‑body avatars.

Comments & Academic Discussion

Loading comments...

Leave a Comment