Streamlined Facial Data Collection based on Utterance and Emotional Data for Human-to-Avatar Reconstruction

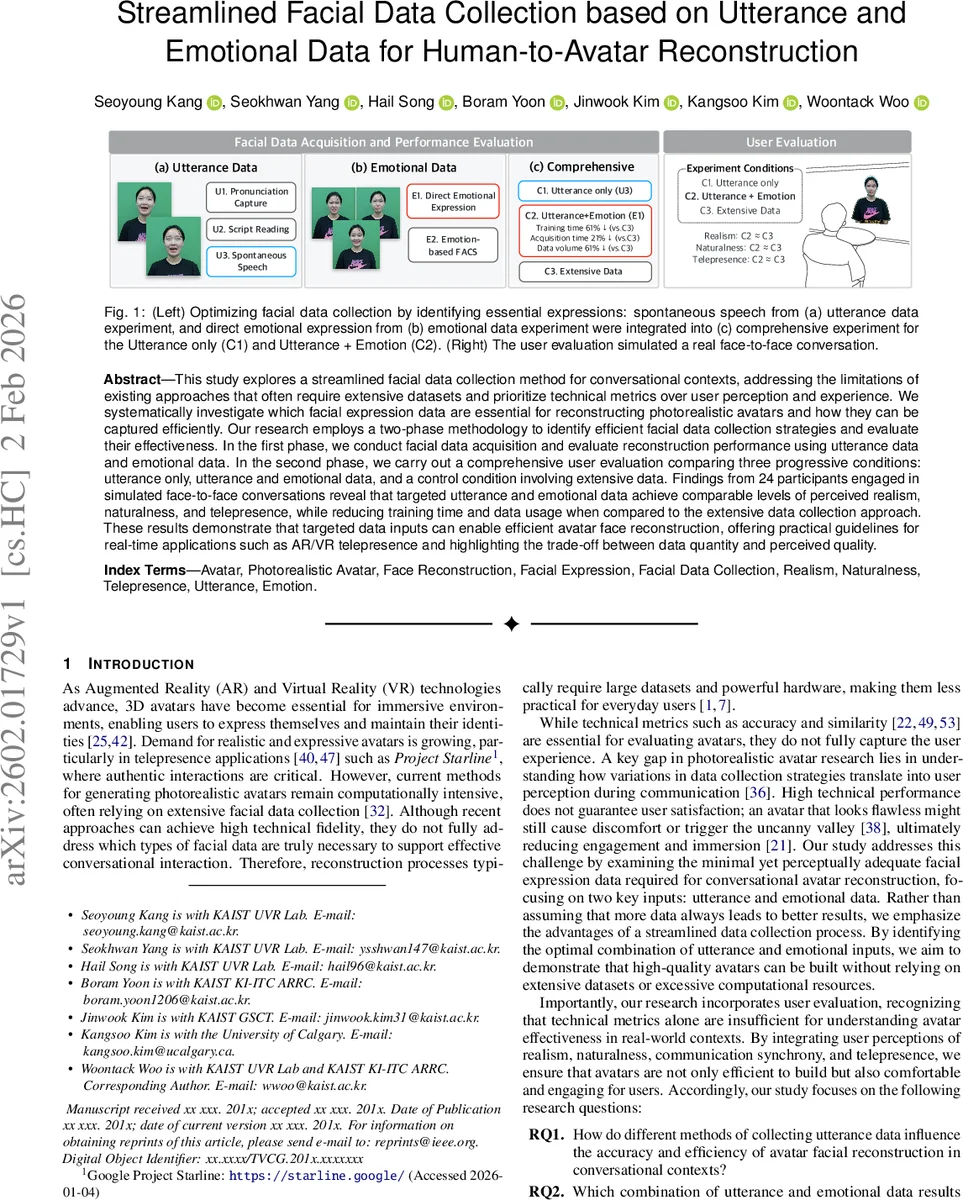

This study explores a streamlined facial data collection method for conversational contexts, addressing the limitations of existing approaches that often require extensive datasets and prioritize technical metrics over user perception and experience. We systematically investigate which facial expression data are essential for reconstructing photorealistic avatars and how they can be captured efficiently. Our research employs a two-phase methodology to identify efficient facial data collection strategies and evaluate their effectiveness. In the first phase, we conduct facial data acquisition and evaluate reconstruction performance using utterance data and emotional data. In the second phase, we carry out a comprehensive user evaluation comparing three progressive conditions: utterance only, utterance and emotional data, and a control condition involving extensive data. Findings from 24 participants engaged in simulated face-to-face conversations reveal that targeted utterance and emotional data achieve comparable levels of perceived realism, naturalness, and telepresence, while reducing training time and data usage when compared to the extensive data collection approach. These results demonstrate that targeted data inputs can enable efficient avatar face reconstruction, offering practical guidelines for real-time applications such as AR/VR telepresence and highlighting the trade-off between data quantity and perceived quality.

💡 Research Summary

The paper addresses a practical bottleneck in photorealistic avatar creation for conversational AR/VR telepresence: the need for large, time‑consuming facial data collections that are often unnecessary for achieving high perceived quality. The authors propose a two‑phase experimental framework to identify the minimal set of facial inputs that still deliver avatars perceived as realistic, natural, and present.

Phase 1 – Data Acquisition Exploration

Three utterance‑driven capture methods were evaluated: (U1) pronunciation of Korean consonants and vowels, (U2) reading a scripted news article, and (U3) delivering an unscripted, spontaneous speech on a self‑chosen topic. The hypothesis was that spontaneous speech would provide the richest variety of lip and facial dynamics relevant to everyday conversation. In parallel, two emotion‑capture strategies were tested: (E1) direct expression of the seven basic Ekman emotions using reference images, and (E2) a Facial Action Coding System (FACS) approach where participants reproduced fine‑grained muscle actions guided by GIF animations. This yielded six combinations (U1E1, U1E2, …, U3E2). Each combination was used to train a state‑of‑the‑art Neural Radiance Field (NeRF) based face reconstruction pipeline. Metrics such as training time, data volume, PSNR, and SSIM were recorded. The results showed that the spontaneous speech (U3) paired with direct emotion expression (E1) achieved the best trade‑off: comparable reconstruction fidelity to the full‑dataset baseline while reducing training time by 21 % and data volume by 6 %.

Phase 2 – User‑Centric Evaluation

Using the optimal acquisition from Phase 1, three experimental conditions were built:

- C1 – Utterance only (U3).

- C2 – Utterance + Emotion (U3 + E1).

- C3 – Extensive data (the traditional large‑scale dataset used in prior work).

Twenty‑four participants engaged in a simulated face‑to‑face conversation with avatars generated under each condition. After each interaction, they rated Realism, Naturalness, and Telepresence on a 7‑point Likert scale. Statistical analysis revealed no significant difference between C2 and C3 (C2 ≈ C3) across all three dimensions, while both outperformed C1 (p < 0.05). Thus, adding a small set of basic emotional expressions to utterance data closed the perceptual gap to the exhaustive dataset, despite the latter’s considerably higher resource demands.

Technical Setup

Facial videos were captured with a Microsoft Azure Kinect (3840 × 2160 px, 30 fps) and later down‑sampled to 512 × 512 px for the reconstruction network. Training ran on an RTX 3090 (24 GB) for data collection and an NVIDIA A40 (40 GB) for model inference, utilizing up to ~98 % of GPU memory. The streamlined data pipeline demonstrated that a modest reduction in input size does not saturate GPU memory, suggesting feasibility for near‑real‑time deployment on consumer‑grade hardware.

Contributions and Implications

- Empirical evidence that “more data ≠ better perception” – The study quantifies how a focused combination of spontaneous speech and basic emotional expressions can achieve perceptual parity with a much larger dataset.

- Practical data‑collection protocol – By recommending U3 + E1 as the minimal yet sufficient input, the authors provide a concrete workflow that reduces user burden, acquisition time, and storage requirements.

- User‑centric validation – The inclusion of realism, naturalness, and telepresence metrics bridges the gap between technical fidelity and actual user experience, a dimension often overlooked in avatar research.

- Scalability for consumer AR/VR – The reduced data and training demands make high‑quality avatar generation more accessible for everyday users, facilitating broader adoption of telepresence avatars in remote work, education, and social platforms.

Future Directions

The authors suggest extending the work to cross‑cultural emotion sets, multilingual utterance collections, and real‑time streaming scenarios where bandwidth constraints further stress data efficiency. Additionally, integrating adaptive learning that updates the avatar with incremental user data could maintain high fidelity while preserving the low‑cost acquisition paradigm introduced here.

In summary, the paper demonstrates that a carefully selected, small set of facial inputs—spontaneous speech plus basic emotional expressions—can produce photorealistic conversational avatars that are indistinguishable, from a user‑perception standpoint, from avatars built on extensive datasets, thereby offering a cost‑effective pathway for real‑time AR/VR telepresence applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment