Voting-based Pitch Estimation with Temporal and Frequential Alignment and Correlation Aware Selection

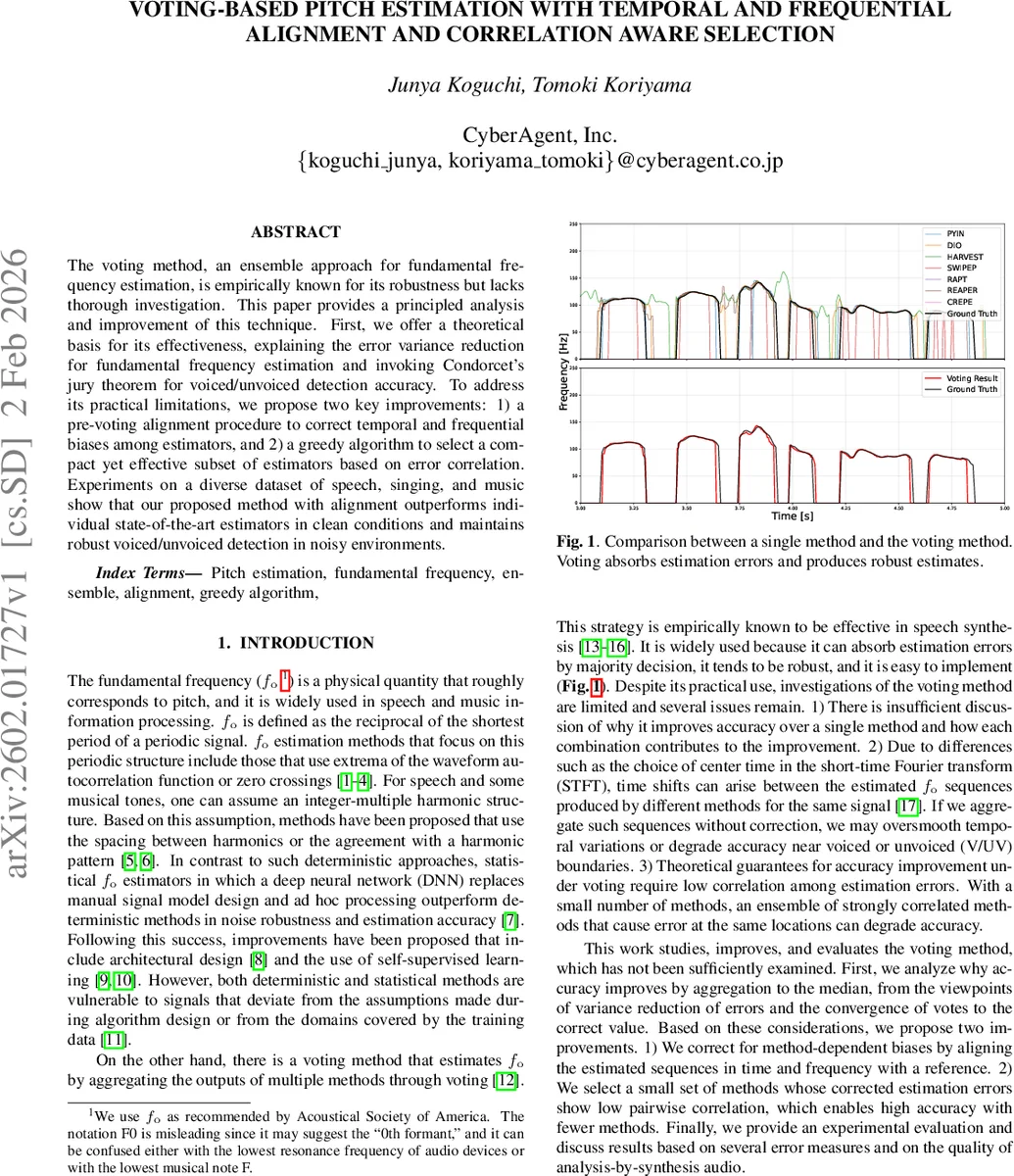

The voting method, an ensemble approach for fundamental frequency estimation, is empirically known for its robustness but lacks thorough investigation. This paper provides a principled analysis and improvement of this technique. First, we offer a theoretical basis for its effectiveness, explaining the error variance reduction for fundamental frequency estimation and invoking Condorcet’s jury theorem for voiced/unvoiced detection accuracy. To address its practical limitations, we propose two key improvements: 1) a pre-voting alignment procedure to correct temporal and frequential biases among estimators, and 2) a greedy algorithm to select a compact yet effective subset of estimators based on error correlation. Experiments on a diverse dataset of speech, singing, and music show that our proposed method with alignment outperforms individual state-of-the-art estimators in clean conditions and maintains robust voiced/unvoiced detection in noisy environments.

💡 Research Summary

This paper revisits the voting‑based ensemble approach for fundamental frequency (F0) estimation, a technique that has long been known for its robustness in speech synthesis and music processing but has received little theoretical scrutiny. The authors first provide a principled analysis of why voting improves accuracy. By modeling each estimator’s output as X_i = θ + ε_i, where θ is the true pitch and ε_i the error, they show that the median (or mean) of the estimates reduces the variance of the error according to

Var(θ̂) ≈ (1 + (n − 1) ρ̄) / (4 n) · h̄²,

where n is the number of estimators, ρ̄ the average correlation of error signs, and h̄ the average density of the error distribution at zero. This formula demonstrates that, provided the error signs are not perfectly correlated (ρ̄ < 1), the ensemble variance shrinks as more estimators are added, mirroring the classic “wisdom of crowds” effect. For voiced/unvoiced (V/UV) decision making, the paper invokes Condorcet’s jury theorem: if each estimator correctly classifies a frame with probability p > 0.5 and errors are independent, the majority‑vote accuracy P_n rapidly approaches 1 as n grows. These theoretical results justify the empirical success of voting observed in prior work.

Despite its appeal, the vanilla voting method suffers from two practical issues. First, different pitch trackers often use distinct STFT window placements, peak‑picking strategies, or post‑processing steps, leading to systematic temporal offsets (frame‑level shifts) and global frequency biases (e.g., consistent octave errors). Aggregating such misaligned sequences can oversmooth rapid pitch movements or introduce large errors near V/UV boundaries. Second, the ensemble’s performance depends on the diversity of its members; highly correlated estimators can produce simultaneous mistakes, negating the variance‑reduction benefit and even degrading accuracy when computational resources limit the number of methods that can be used.

To address these problems, the authors propose two complementary improvements.

- Pre‑voting alignment: A reference estimator is chosen (typically a strong, well‑behaved method such as REAPER). For each other estimator, the algorithm searches a bounded temporal offset k (within ±H frames) that maximizes raw pitch accuracy (RPA) against the reference, counting a frame as correct if the cent‑difference is below a tolerance ε (e.g., 25 cents). The optimal k_align is applied by shifting the sequence, with zero‑padding to preserve length. After temporal alignment, a global frequency bias f_align is computed as the median cent‑difference across voiced frames and subtracted in the cent domain. This two‑step correction removes both frame‑level mis‑synchronization and systematic octave/half‑octave shifts, restoring the assumptions required for the variance‑reduction analysis.

- Correlation‑aware method selection: When computational budget restricts the number of trackers, a greedy algorithm builds a compact subset G from a candidate pool S. Starting from an initial method (REAPER), each remaining candidate A_j is temporarily added to G, and the resulting ensemble is evaluated using two criteria: (a) RPA (direct accuracy) and (b) the average correlation of error signs among members. The candidate that yields the largest RPA improvement—or, under the correlation criterion, the smallest average correlation—is permanently added to G and removed from S. The process stops when adding any further method does not improve the score or when a predefined subset size is reached. Notably, the correlation metric can be estimated without ground‑truth labels, enabling unsupervised subset construction.

The experimental protocol is extensive. Audio is resampled to 48 kHz, 16‑bit, and drawn from diverse corpora: speech (multiple English and Japanese datasets), singing voice (MIR‑1K), and instrumental music (MDB‑stem‑synth). Nine state‑of‑the‑art pitch trackers serve as both baselines and ensemble members: RAPT, SWIPE’, pYIN, DIO, Harvest, Praat, CREPE, FCNF0++, and REAPER (the latter also provides ground‑truth for speech via EGG‑derived analysis). All methods share a 5 ms frame shift and operate within 25 Hz–4200 Hz. Evaluation metrics include: (i) pitch error in cents (mean ± std), (ii) raw pitch accuracy at 5, 25, 50 cents thresholds, (iii) V/UV recall and false‑alarm rate, (iv) analysis‑by‑synthesis quality using WORLD and automatic MOS estimation (UTMOSv2), and (v) robustness under additive noise (NOISEX92, QUT‑NOISE) at 30, 20, 10 dB SNR.

Results in clean conditions (Table 1) show that the full voting ensemble (all nine methods) with both temporal and frequency alignment achieves the lowest pitch error (≈ 3.35 cents) and the highest RPA (≈ 66.9 % at 5 cents), surpassing every individual tracker, including REAPER. V/UV recall reaches 94.2 %, higher than any single method. Ablation experiments reveal that removing either temporal or frequency alignment degrades performance noticeably, confirming the necessity of both corrections.

Under noisy conditions (Tables 2 and 3), deep‑learning based trackers (CREPE, FCNF0++) retain relatively high RPA even at 10 dB, reflecting their known noise robustness. The voting ensemble’s RPA declines with decreasing SNR but remains competitive down to 20 dB, and its V/UV recall stays above 90 % until 10 dB, demonstrating that majority voting preserves voicing decisions even when pitch trajectories become noisy.

The greedy selection study (Table 4) indicates that a compact subset of three to five carefully chosen trackers attains nearly the same accuracy as the full nine‑method ensemble. Subsets selected by the accuracy criterion and by the correlation criterion are remarkably similar, supporting the theoretical claim that low error‑sign correlation is a key driver of ensemble gains.

In conclusion, the paper delivers a thorough theoretical justification for voting‑based F0 estimation, introduces practical alignment and selection mechanisms that substantially improve accuracy and robustness, and validates these contributions across a wide range of speech, singing, and music data. The work bridges the gap between empirical success and analytical understanding, and it opens avenues for future research: (i) enhancing pitch‑trajectory fidelity in low‑SNR scenarios, (ii) extending the framework to real‑time or low‑resource settings, and (iii) exploring even broader method pools, including recent self‑supervised or transformer‑based pitch models, to further diversify error patterns and boost ensemble performance.

Comments & Academic Discussion

Loading comments...

Leave a Comment