Hyperspectral Image Fusion with Spectral-Band and Fusion-Scale Agnosticism

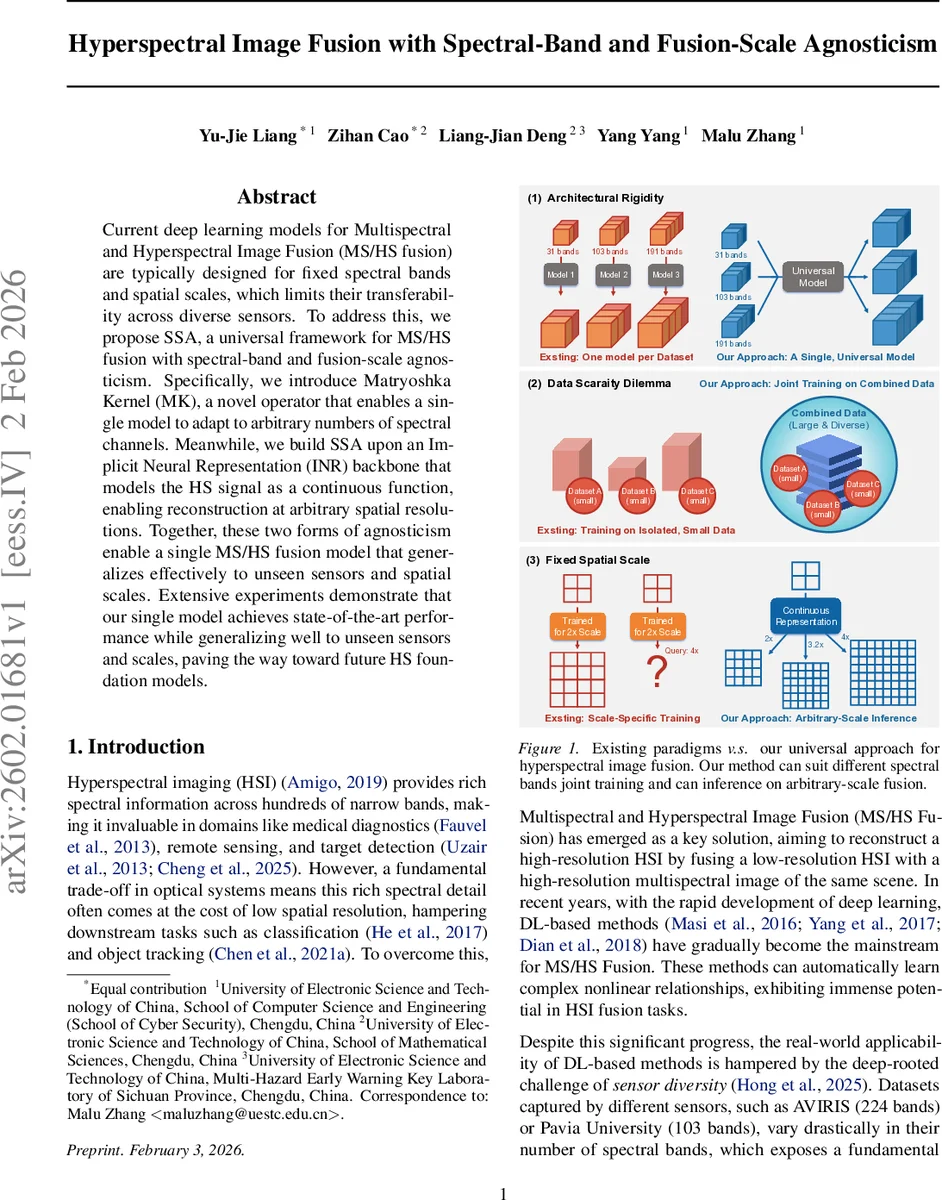

Current deep learning models for Multispectral and Hyperspectral Image Fusion (MS/HS fusion) are typically designed for fixed spectral bands and spatial scales, which limits their transferability across diverse sensors. To address this, we propose SSA, a universal framework for MS/HS fusion with spectral-band and fusion-scale agnosticism. Specifically, we introduce Matryoshka Kernel (MK), a novel operator that enables a single model to adapt to arbitrary numbers of spectral channels. Meanwhile, we build SSA upon an Implicit Neural Representation (INR) backbone that models the HS signal as a continuous function, enabling reconstruction at arbitrary spatial resolutions. Together, these two forms of agnosticism enable a single MS/HS fusion model that generalizes effectively to unseen sensors and spatial scales. Extensive experiments demonstrate that our single model achieves state-of-the-art performance while generalizing well to unseen sensors and scales, paving the way toward future HS foundation models.

💡 Research Summary

The paper addresses a fundamental limitation of current deep‑learning‑based multispectral–hyperspectral (MS/HS) image fusion methods: they are tightly coupled to a fixed number of spectral bands and a predetermined spatial up‑sampling factor, which hampers their applicability across the wide variety of sensors used in remote sensing, medical imaging, and other domains. To overcome both spectral and spatial rigidity, the authors propose a unified framework called SSA (Spectral‑Scale Agnostic fusion).

The first pillar of SSA is the Matryoshka Kernel (MK). Inspired by Matryoshka Representation Learning, MK stores a superset of convolutional weights with dimensions D × Cmax × k × k, where Cmax is the maximum number of input channels the model can ever encounter. During a forward pass, the layer slices this tensor along the channel dimension to obtain a valid kernel of size D × Cin × k × k that matches the actual number of input bands (Cin). The same slicing principle is applied at the output side, allowing the network to generate an output with exactly the original number of hyperspectral bands. This dynamic kernel mechanism eliminates the need for separate models or manual band selection for each sensor, enabling a single architecture to process arbitrary spectral configurations.

The second pillar is an Implicit Neural Representation (INR) backbone. After the MK layers convert the low‑resolution HSI and high‑resolution MSI into a fixed‑dimensional feature space (dimension D), two parallel encoders compress these features into latent codes. An MLP then learns a continuous mapping from normalized spatial coordinates p∈

Comments & Academic Discussion

Loading comments...

Leave a Comment