Towards Autonomous Instrument Tray Assembly for Sterile Processing Applications

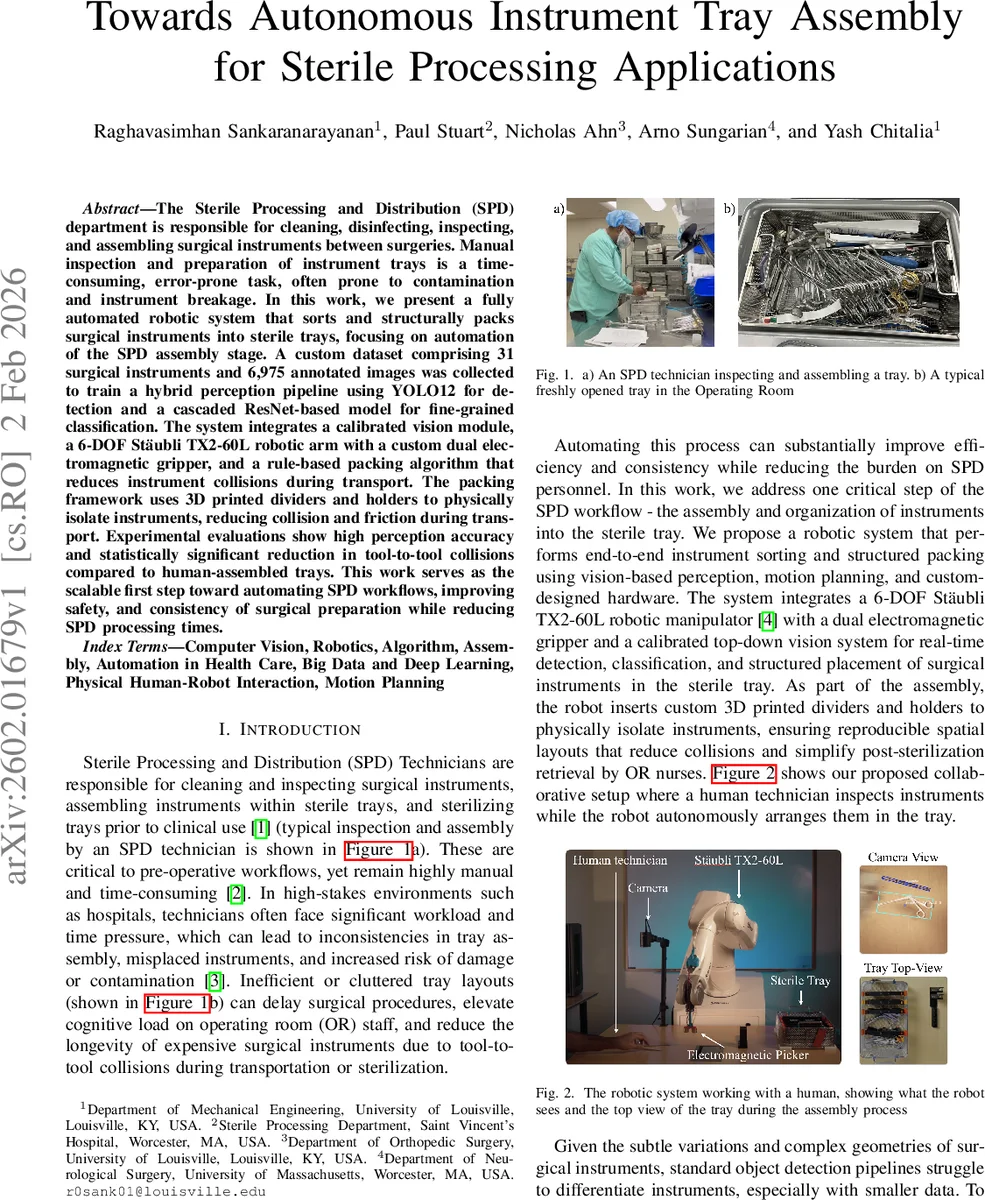

The Sterile Processing and Distribution (SPD) department is responsible for cleaning, disinfecting, inspecting, and assembling surgical instruments between surgeries. Manual inspection and preparation of instrument trays is time-consuming and error-prone, and it exposes instruments to contamination and breakage. In this work, we present a fully automated robotic system that sorts surgical instruments and packs them in a structured arrangement into sterile trays, focusing on automating the SPD assembly stage. A custom dataset of 31 surgical instrument types and 6,975 annotated images was collected to train a hybrid perception pipeline that uses YOLO12 for detection and a cascaded ResNet-based model for fine-grained classification. The system integrates a calibrated vision module, a 6-DOF Stäubli TX2-60L robotic arm with a custom dual electromagnetic gripper, and a rule-based packing algorithm. The packing framework uses 3D-printed dividers and holders to physically isolate instruments, reducing collisions and friction during transport. Experimental evaluations show high perception accuracy and a statistically significant reduction in tool-to-tool collisions compared with human-assembled trays. This work is a scalable first step toward automating SPD workflows, improving the safety and consistency of surgical preparation while reducing SPD processing times.
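The abstract mentions a calibrated vision module but does not say how image coordinates are mapped to the workspace. Because instruments lie flat on the staging area, one common approach is a planar homography between image pixels and table-plane coordinates. The Python sketch below estimates such a homography with the normalized direct linear transform (DLT) from four or more pixel/table correspondences. The function names, the planar-table assumption, and the use of a homography at all are assumptions for illustration, not the authors' documented method; converting table-plane coordinates into the robot base frame would need a further, separately calibrated transform.

```python
import numpy as np


def _normalizer(pts: np.ndarray) -> np.ndarray:
    """Hartley normalization: translate points to zero mean and scale them
    so the mean distance from the origin is sqrt(2)."""
    centroid = pts.mean(axis=0)
    mean_dist = np.linalg.norm(pts - centroid, axis=1).mean()
    s = np.sqrt(2.0) / mean_dist
    return np.array([[s, 0.0, -s * centroid[0]],
                     [0.0, s, -s * centroid[1]],
                     [0.0, 0.0, 1.0]])


def _apply(T: np.ndarray, pts: np.ndarray) -> np.ndarray:
    """Apply a 3x3 projective transform to an (N, 2) array of points."""
    homog = np.column_stack([pts, np.ones(len(pts))]) @ T.T
    return homog[:, :2] / homog[:, 2:3]


def estimate_homography(pixels, table) -> np.ndarray:
    """Estimate H with table ~ H @ pixel from N >= 4 correspondences
    (normalized DLT). Assumes a planar work surface (hypothetical setup)."""
    pixels = np.asarray(pixels, dtype=float)
    table = np.asarray(table, dtype=float)
    if pixels.shape != table.shape or pixels.shape[0] < 4:
        raise ValueError("need matching (N, 2) arrays with N >= 4")
    Tp, Tt = _normalizer(pixels), _normalizer(table)
    p, t = _apply(Tp, pixels), _apply(Tt, table)
    rows = []
    for (u, v), (x, y) in zip(p, t):
        # Each correspondence gives two linear equations in the 9 entries of H.
        rows.append([-u, -v, -1.0, 0.0, 0.0, 0.0, u * x, v * x, x])
        rows.append([0.0, 0.0, 0.0, -u, -v, -1.0, u * y, v * y, y])
    # Least-squares solution: right singular vector of the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows))
    Hn = vt[-1].reshape(3, 3)
    # Undo the normalization on both sides.
    H = np.linalg.inv(Tt) @ Hn @ Tp
    return H / H[2, 2]


def pixel_to_table(H: np.ndarray, u: float, v: float) -> tuple[float, float]:
    """Map one pixel (u, v) to table-plane coordinates (x, y)."""
    x, y, w = H @ np.array([u, v, 1.0])
    return float(x / w), float(y / w)
```

In use, `pixel_to_table(H, u, v)` would convert a detected instrument's pixel centroid into a table-plane target for the arm. Hartley normalization is included because raw pixel coordinates in the hundreds make the unnormalized DLT system poorly conditioned.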

💡 Research Summary

This paper presents a comprehensive robotic system designed to automate the assembly of surgical instrument trays within the Sterile Processing and Distribution (SPD) department, a critical but manually intensive, error-prone, and time-consuming pre-operative workflow. The system aims to improve consistency, reduce instrument damage from collisions, and alleviate the burden on SPD technicians.

The core of the system is a collaborative human-robot workflow. After inspecting a cleaned instrument, a human technician simply places it on a designated staging area. The system then autonomously sorts the instrument and places it precisely into a sterile tray. This relies on three tightly integrated components: a hybrid perception pipeline, a robotic manipulator with a custom end-effector, and a rule-based packing framework.

First, a hybrid vision pipeline addresses the challenge of recognizing and localizing a diverse set of surgical instruments, many of which differ only subtly in appearance. The authors created a custom dataset of 6,975 annotated images across 31 instrument types. YOLO12 performs detection in real time. For each detected instrument, the Segment Anything Model (SAM) generates a precise mask, from which the instrument's orientation is computed via Principal Component Analysis (PCA). A novel, part-aware cascaded ResNet-18 model then performs fine-grained classification. This model processes the head, tail, and full-body regions of an instrument separately and incorporates metadata (log-normalized instrument dimensions) to better discriminate between visually similar instruments (e.g., straight vs. curved forceps).
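The mask-to-orientation step can be illustrated with a short sketch. Given a binary instrument mask (such as one produced by SAM), PCA on the foreground pixel coordinates yields the centroid and the major axis, and the extents along the two principal axes give a length and width in pixels. The source says only that the classifier uses log-normalized instrument dimensions, so the `log_normalized_dims` helper, which takes the logarithm and then standardizes it with dataset statistics `mean_log` and `std_log`, is a hypothetical reading of that phrase. All function names are illustrative.

```python
import numpy as np


def mask_orientation(mask: np.ndarray):
    """PCA on the foreground pixels of a binary mask.

    Returns (centroid_xy, angle_deg, length_px, width_px). The angle is in
    image coordinates (x right, y down) and lies in [0, 180) because PCA
    cannot distinguish the two ends of the major axis.
    """
    rows, cols = np.nonzero(mask)
    if rows.size < 2:
        raise ValueError("mask must contain at least two foreground pixels")
    pts = np.column_stack([cols, rows]).astype(float)  # (x, y) per pixel
    centroid = pts.mean(axis=0)
    centered = pts - centroid
    cov = np.cov(centered, rowvar=False)
    _, eigvecs = np.linalg.eigh(cov)  # eigenvalues in ascending order
    major, minor = eigvecs[:, -1], eigvecs[:, 0]
    angle = float(np.degrees(np.arctan2(major[1], major[0])) % 180.0)
    # Extents of the pixel centers along each principal axis.
    along, across = centered @ major, centered @ minor
    length = float(along.max() - along.min())
    width = float(across.max() - across.min())
    return centroid, angle, length, width


def log_normalized_dims(length, width, mean_log, std_log):
    """Hypothetical metadata feature: log of the dimensions, standardized
    with dataset statistics mean_log and std_log (arrays of shape (2,))."""
    logs = np.log(np.array([length, width], dtype=float))
    return (logs - np.asarray(mean_log)) / np.asarray(std_log)
```

Because PCA fixes the instrument axis only up to 180°, it cannot tell the head from the tail on its own; a separate cue is needed to decide which end is which before planning a grasp or placement.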

Second, for physical manipulation, the system uses a 6-DOF Stäubli TX2-60L robotic arm. The arm is fitted with a custom dual electromagnetic gripper, controlled by an ESP32 microcontroller, that can pick up both metallic instruments and the system's custom 3D-printed tray organizers. A degaussing function minimizes residual magnetism on the instruments.
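The summary does not describe how degaussing is implemented. A standard way to demagnetize a ferromagnetic part is to apply a field of alternating polarity whose amplitude decays toward zero. The Python sketch below only generates such a pulse schedule as (signed duty cycle, duration) pairs; `degauss_schedule`, its parameters, and their defaults are hypothetical, and on the actual ESP32 the steps would presumably be played out through a coil driver (for example an H-bridge driven by PWM, which is also an assumption).

```python
def degauss_schedule(initial_duty=1.0, decay=0.6, min_duty=0.05, pulse_ms=20):
    """Hypothetical degaussing pulse train.

    Alternates coil polarity while shrinking the drive amplitude
    geometrically, stops once it drops below min_duty, then switches the
    coil off. Returns a list of (signed_duty, duration_ms) steps.
    """
    if not 0.0 < decay < 1.0:
        raise ValueError("decay must be in (0, 1) so the amplitude shrinks")
    if min_duty <= 0.0:
        raise ValueError("min_duty must be positive")
    steps = []
    duty = initial_duty
    polarity = -1  # first pulse opposes the (assumed positive) gripping field
    while duty >= min_duty:
        steps.append((polarity * duty, pulse_ms))
        duty *= decay
        polarity = -polarity
    steps.append((0.0, pulse_ms))  # end with the coil de-energized
    return steps
```

With the defaults, the schedule contains six pulses of alternating sign (amplitudes 1.0, 0.6, 0.36, ...) followed by an off step. A slower decay gives more, smaller steps and typically leaves less remanence, at the cost of a longer release cycle.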

Third, and crucially, the system goes beyond simple pick-and-place. It incorporates a rule-based packing algorithm informed by interviews with SPD technicians. This algorithm formalizes tray assembly as a constrained spatial-allocation problem, grouping instruments by type and sorting them by length within their groups to minimize movement and collision risk. To physically enforce this organization, the system uses modular, 3D-printed dividers and specialized holders. Dividers create separate compartments for instrument groups. Holders securely lock ring-handled instruments in place using a torsion-spring latch, preventing them from falling out during transport while still allowing operating room nurses to easily remove and lay them sideways per standard convention.
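The grouping-and-sorting rule can be sketched as a small allocation routine. The sketch assumes each instrument carries a type label and a length, and each divider compartment holds a fixed number of instruments. It places one instrument type per compartment, assigns larger groups to larger compartments, and orders each group longest first. The `Instrument` and `plan_tray` names, the compartment model, the largest-group-first heuristic, and the longest-first direction are all assumptions; the source specifies only grouping by type and sorting by length within groups.

```python
from dataclasses import dataclass
from itertools import groupby


@dataclass(frozen=True)
class Instrument:
    name: str
    kind: str         # instrument type used for grouping (hypothetical labels)
    length_mm: float


def plan_tray(instruments: list[Instrument],
              compartment_slots: list[int]) -> dict[int, list[str]]:
    """Assign one instrument type per divider compartment, longest first.

    compartment_slots[i] is the (assumed) number of instruments that
    compartment i can hold. Returns {compartment_index: [instrument names]}.
    """
    # Group instruments by type.
    by_kind = sorted(instruments, key=lambda i: i.kind)
    groups = [list(g) for _, g in groupby(by_kind, key=lambda i: i.kind)]
    if len(groups) > len(compartment_slots):
        raise ValueError("more instrument types than compartments")
    # Heuristic: the largest group gets the largest compartment, and so on.
    groups.sort(key=len, reverse=True)
    order = sorted(range(len(compartment_slots)),
                   key=lambda c: compartment_slots[c], reverse=True)
    plan = {}
    for group, comp in zip(groups, order):
        if len(group) > compartment_slots[comp]:
            raise ValueError(f"compartment {comp} cannot hold all {group[0].kind}")
        # Within a group, sort by length (longest first is an assumption).
        group.sort(key=lambda i: i.length_mm, reverse=True)
        plan[comp] = [i.name for i in group]
    return plan
```

For example, three forceps, two pairs of scissors, and one scalpel handle with compartments of 2, 4, and 3 slots put the forceps in the 4-slot compartment, the scissors in the 3-slot one, and the handle in the 2-slot one. A real planner would also need the tray geometry and holder constraints, which this sketch ignores.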

Experimental evaluations demonstrated high accuracy for the perception system. More importantly, trays assembled by the robotic system showed a statistically significant reduction in tool-to-tool collisions compared to those assembled manually by humans. This directly addresses a key cause of instrument damage.

In conclusion, this work presents a fully functional, end-to-end automation solution for a specific yet vital hospital logistics task. It integrates computer vision, robotic manipulation, and practical hardware design informed by the needs of SPD technicians. The system serves as a scalable proof of concept and a foundational step toward broader automation of the entire SPD workflow, promising greater safety, consistency, and efficiency in surgical preparation.
