TABX: A High-Throughput Sandbox Battle Simulator for Multi-Agent Reinforcement Learning

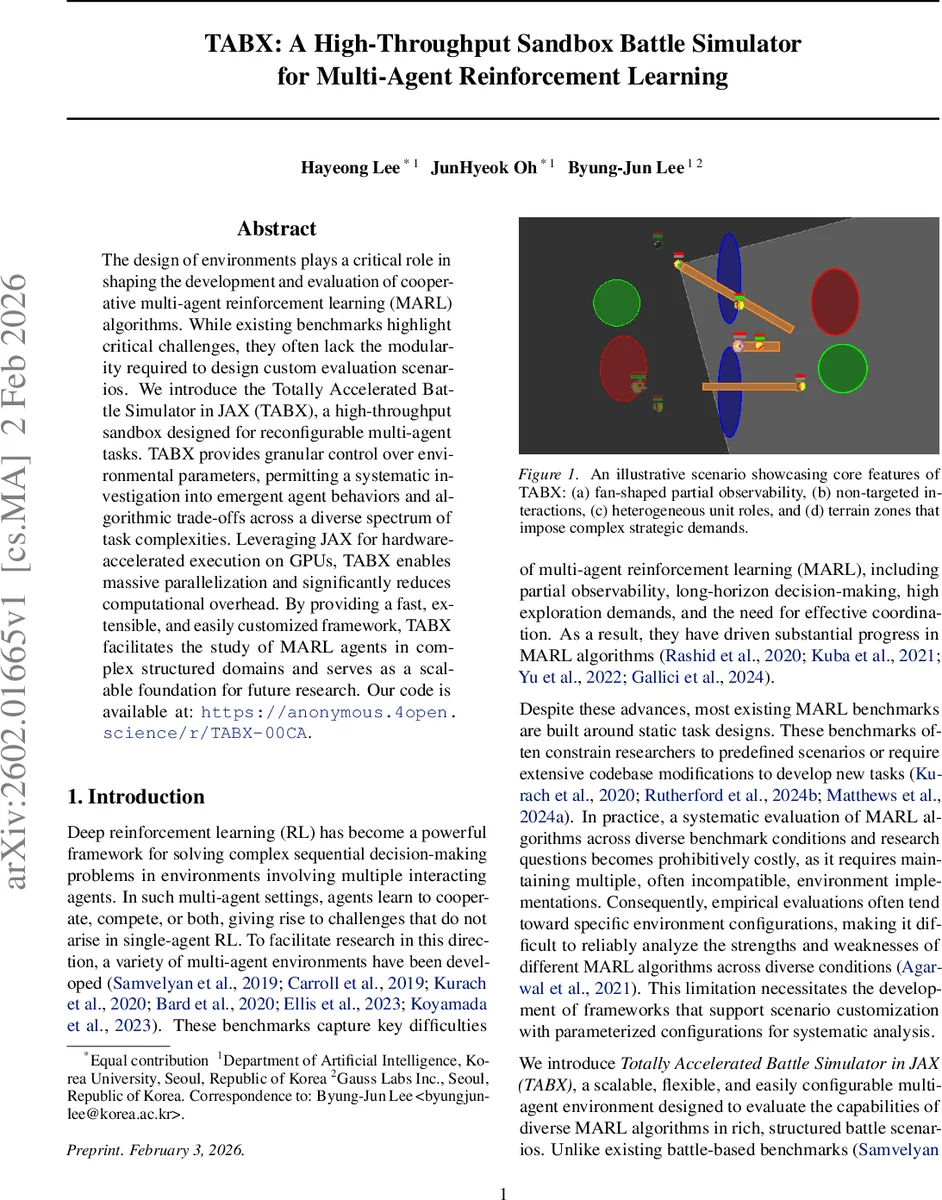

The design of environments plays a critical role in shaping the development and evaluation of cooperative multi-agent reinforcement learning (MARL) algorithms. While existing benchmarks highlight critical challenges, they often lack the modularity required to design custom evaluation scenarios. We introduce the Totally Accelerated Battle Simulator in JAX (TABX), a high-throughput sandbox designed for reconfigurable multi-agent tasks. TABX provides granular control over environmental parameters, permitting a systematic investigation into emergent agent behaviors and algorithmic trade-offs across a diverse spectrum of task complexities. Leveraging JAX for hardware-accelerated execution on GPUs, TABX enables massive parallelization and significantly reduces computational overhead. By providing a fast, extensible, and easily customized framework, TABX facilitates the study of MARL agents in complex structured domains and serves as a scalable foundation for future research. Our code is available at: https://anonymous.4open.science/r/TABX-00CA.

💡 Research Summary

The paper introduces TABX (Totally Accelerated Battle Simulator in JAX), a high‑throughput, highly configurable sandbox designed for multi‑agent reinforcement learning (MARL) research. Existing MARL benchmarks such as SMAC, MPE, and Craftax provide valuable challenges—partial observability, long‑horizon decision making, and sparse rewards—but they are largely static, require extensive code modifications to create new tasks, and lack systematic parameterization. TABX addresses these shortcomings by leveraging JAX for GPU‑accelerated, fully vectorized simulation, enabling millions of environment steps per second and dramatically reducing experimental cost.

TABX’s design revolves around four orthogonal parameter groups: (1) unit specifications (health, attack damage, movement speed, range, etc.), (2) terrain zones (lava, swamp, bush) that impose damage, speed penalties, or asymmetric concealment, (3) heuristic policy parameters that control non‑player agents, and (4) physics parameters (time‑step size, collision tolerance, restitution). All these can be altered at episode level via JSON configuration files or an intuitive graphical scenario editor, eliminating the need for recompilation or deep code changes.

Agents receive a fan‑shaped field‑of‑view aligned with their facing direction, mirroring a first‑person perspective. Within this view they observe health, unit type, and terrain information of all visible entities. Actions are limited to six discrete choices: four directional moves, a rotation, and an attack (or heal for supporter units). Crucially, TABX employs a non‑targeted interaction model: attacks and heals affect any unit that lies inside a “hurtbox” aligned with the attacker’s heading, rather than requiring explicit target selection. This design forces agents to coordinate positioning and orientation to succeed, thereby amplifying the need for joint strategic planning under partial observability.

To provide diverse adversarial behavior, TABX defines role‑appropriate heuristic policies. Each unit is assigned one of three functional roles—Assassin, Ranger, Healer—based on its kinematic and combat attributes. Role‑specific behavioral biases are combined to produce three difficulty levels (random, novice, medium). This yields richer, more realistic opponent dynamics than the simplistic random or fixed scripts used in many prior benchmarks.

The authors evaluate four representative MARL algorithms—MAPPO, IPPO, QMIX, and IQL—across eight pre‑defined scenarios (Crossfire, Rangers, Ambush, Superking, Clover, Bypass, Ribbon, Grid). Results show substantial variance in win rates depending on terrain complexity and observation constraints. For instance, MAPPO consistently outperforms the others in bush‑dense maps where asymmetric visibility creates high information asymmetry, while QMIX struggles in long‑horizon tasks with sparse rewards. The experiments demonstrate that TABX can expose algorithmic strengths and weaknesses that remain hidden in static environments.

A comparative table highlights that TABX uniquely satisfies four criteria: multi‑agent support, high‑throughput execution, full configurability, and an integrated scenario editor—features that are only partially present in existing benchmarks. The paper’s contributions are threefold: (1) a JAX‑based, GPU‑accelerated simulator with baseline implementations for reproducible benchmarking, (2) a modular framework allowing dynamic reconfiguration of units, terrain, heuristics, and physics without code changes, together with a visual scenario editor, and (3) empirical evidence that TABX enables systematic study of core MARL challenges such as information‑dependent value learning, sparse‑reward exploration, and zero‑shot generalization.

Future work includes extending the engine to 3‑D physics, integrating automated curriculum generation (e.g., unsupervised environment design), and developing meta‑learning techniques to automatically tune heuristic opponent policies. Overall, TABX represents a significant step toward scalable, flexible, and reproducible MARL experimentation.

Comments & Academic Discussion

Loading comments...

Leave a Comment