Multimodal UNcommonsense: From Odd to Ordinary and Ordinary to Odd

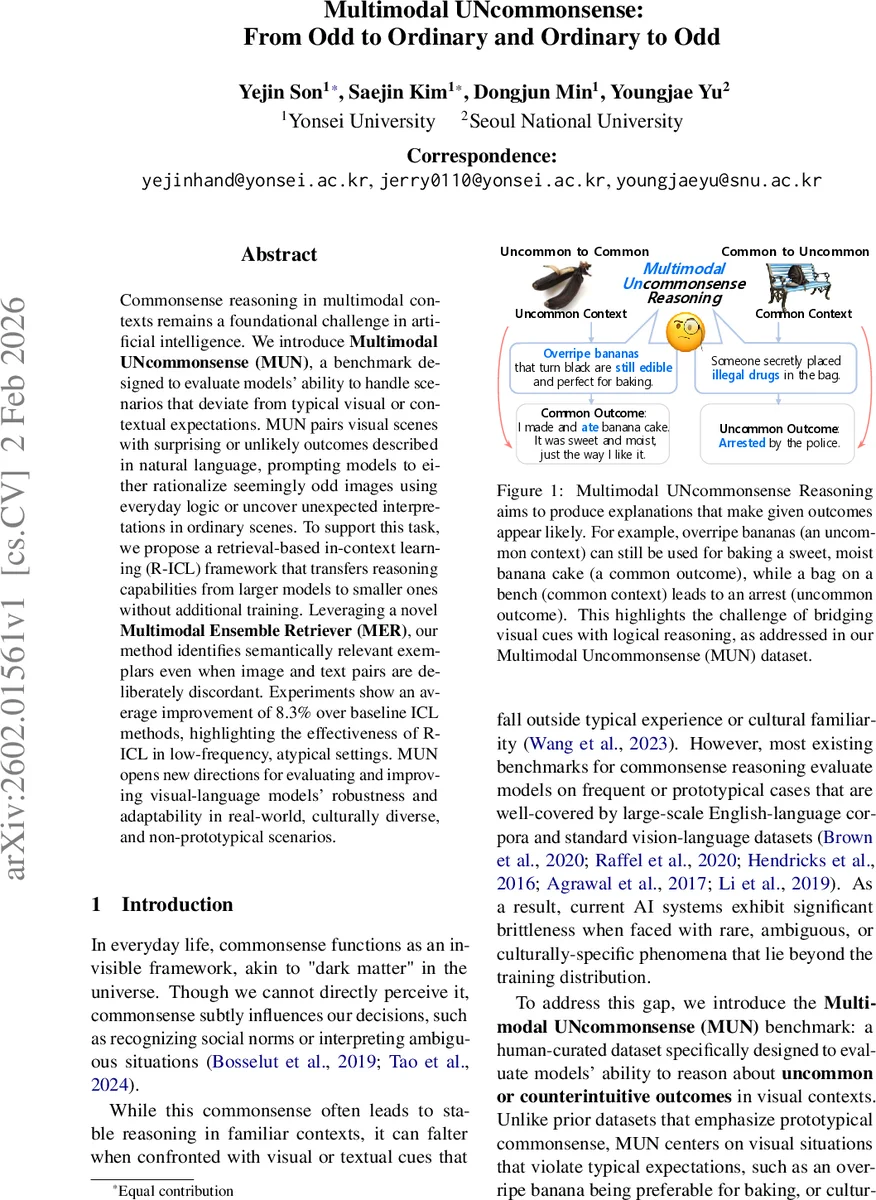

Commonsense reasoning in multimodal contexts remains a foundational challenge in artificial intelligence. We introduce Multimodal UNcommonsense (MUN), a benchmark designed to evaluate models’ ability to handle scenarios that deviate from typical visual or contextual expectations. MUN pairs visual scenes with surprising or unlikely outcomes described in natural language, prompting models either to rationalize seemingly odd images using everyday logic or to uncover unexpected interpretations in ordinary scenes. To support this task, we propose a retrieval-based in-context learning (R-ICL) framework that transfers reasoning capabilities from larger models to smaller ones without additional training. Leveraging a novel Multimodal Ensemble Retriever (MER), our method identifies semantically relevant exemplars even when paired images and texts are deliberately discordant. Experiments show an average improvement of 8.3% over baseline ICL methods, highlighting the effectiveness of R-ICL in low-frequency, atypical settings. MUN opens new directions for evaluating and improving visual-language models’ robustness and adaptability in real-world, culturally diverse, and non-prototypical scenarios.

💡 Research Summary

The paper addresses a gap in multimodal commonsense reasoning: existing benchmarks focus on frequent, prototypical situations, while real‑world applications often involve visual and textual cues that are mismatched, rare, or culturally specific. To evaluate and improve model performance under such conditions, the authors introduce the Multimodal UNcommonsense (MUN) benchmark. MUN is built around a bidirectional reasoning paradigm. In the “MUN‑vis” task, models receive an image that looks odd or low‑frequency (e.g., an over‑ripe, blackened banana) together with a common outcome description (e.g., “person enjoyed banana bread without health concerns”). The model must generate an explanation that normalizes the visual oddity. In the “MUN‑lang” task, the input is a mundane image (e.g., a backpack on a bench) paired with an uncommon outcome (e.g., “arrested by police”). Here the model must uncover hidden, surprising causes that make the outcome plausible. This bidirectional setup forces models to reason both from odd‑to‑ordinary and ordinary‑to‑odd, a capability missing from prior datasets.
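To make the two directions concrete, here is a minimal sketch of how a MUN instance and its query text might be represented. The names (`Task`, `MUNInstance`, `image_path`, `build_prompt`) and the instruction wording are hypothetical, not the benchmark's released format; only the two task types and the example outcomes come from the description above.

```python
from dataclasses import dataclass
from enum import Enum


class Task(Enum):
    """The two MUN reasoning directions."""
    VIS = "MUN-vis"    # odd image + common outcome -> normalize the oddity
    LANG = "MUN-lang"  # ordinary image + uncommon outcome -> find a surprising cause


@dataclass
class MUNInstance:
    task: Task
    image_path: str  # hypothetical field: path to the curated image
    outcome: str     # natural-language outcome description


# Hypothetical instruction templates; the paper's actual prompts may differ.
INSTRUCTIONS = {
    Task.VIS: ("The image shows something unusual. Explain how the following "
               "ordinary outcome could still happen: {outcome}"),
    Task.LANG: ("The image shows an everyday scene. Explain what hidden or "
                "surprising circumstances could lead to this outcome: {outcome}"),
}


def build_prompt(inst: MUNInstance) -> str:
    """Return the text part of the query; the image is passed to the VLM separately."""
    return INSTRUCTIONS[inst.task].format(outcome=inst.outcome)


banana = MUNInstance(Task.VIS, "banana.jpg",
                     "person enjoyed banana bread without health concerns")
backpack = MUNInstance(Task.LANG, "backpack.jpg", "arrested by police")
print(build_prompt(banana))
print(build_prompt(backpack))
```

Keeping the task type on the instance lets one prompt builder serve both directions: the image and outcome fields have the same shape in each, and only the instruction changes.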

Dataset construction proceeds in four stages. First, GPT‑4o is prompted to produce diverse textual scenarios for both task types, encouraging rich cultural and sensory detail. Second, for each scenario, five candidate images are retrieved via the Bing Web Search API; human annotators manually select the image that best matches the intended oddness or ordinariness. Third, 26 graduate‑student annotators write natural‑language explanations for a subset of the pairs (143 MUN‑vis and 156 MUN‑lang instances). Fourth, the same explanations are refined using GPT‑4o, yielding a hybrid “Human+LLM” set that preserves human diversity while gaining the precision of large language models. The final corpus contains 1,015 pairs (515 MUN‑vis, 500 MUN‑lang) with human, LLM, and hybrid explanations.
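The construction stages can be outlined as a small pipeline in which each external step is injected as a callable. This is an illustrative skeleton under stated assumptions: all names are hypothetical, the scenario text is assumed to arrive from the generation stage upstream, the lambdas stand in for the Bing Web Search API, human annotators, and GPT‑4o rather than calling them, and the assumption that every pair also gets an LLM-written explanation is ours.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

NUM_CANDIDATES = 5  # five candidate images retrieved per scenario


@dataclass
class Record:
    scenario: str
    image: str
    explanations: Dict[str, str] = field(default_factory=dict)  # "llm", "human", "human+llm"


def build_record(
    scenario: str,                                   # stage 1 output (LLM-generated scenario)
    search_images: Callable[[str, int], List[str]],  # stage 2: stand-in for web image search
    select_image: Callable[[str, List[str]], str],   # stage 2: stand-in for human selection
    llm_explain: Callable[[str, str], str],          # assumed: LLM explanation for every pair
    human_explain: Optional[Callable[[str, str], str]] = None,  # stage 3: annotated subset only
    llm_refine: Optional[Callable[[str], str]] = None,          # stage 4: LLM refines human text
) -> Record:
    candidates = search_images(scenario, NUM_CANDIDATES)
    image = select_image(scenario, candidates)
    rec = Record(scenario, image)
    rec.explanations["llm"] = llm_explain(scenario, image)
    if human_explain is not None:
        human = human_explain(scenario, image)
        rec.explanations["human"] = human
        if llm_refine is not None:
            # Hybrid "Human+LLM" explanation derived from the human-written one.
            rec.explanations["human+llm"] = llm_refine(human)
    return rec


# Usage with trivial stubs (no external services are called).
rec = build_record(
    "over-ripe, blackened banana / person enjoyed banana bread",
    search_images=lambda query, k: [f"candidate_{i}.jpg" for i in range(k)],
    select_image=lambda scenario, cands: cands[2],
    llm_explain=lambda s, img: "Very ripe bananas are ideal for baking.",
    human_explain=lambda s, img: "overripe bananas are sweeter so good for bread",
    llm_refine=lambda text: "Overripe bananas are sweeter, which suits banana bread.",
)
print(rec.image, sorted(rec.explanations))
```

Making the human steps optional mirrors the fact that only a subset of pairs (143 MUN‑vis and 156 MUN‑lang) received human explanations.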

To boost smaller vision‑language models (VLMs) without additional fine‑tuning, the authors adopt Retrieval‑based In‑Context Learning (R‑ICL). R‑ICL supplies a target model with a few high‑quality exemplars generated by a larger model, enabling few‑shot reasoning without any parameter updates. The key technical contribution is the Multimodal Ensemble Retriever (MER). MER computes similarity scores independently in the visual and textual modalities, then fuses them using a tunable weighting scheme. This design explicitly accommodates the intentional cross‑modal discordance of MUN: unlike conventional cross‑modal retrievers, which assume strong alignment, MER can retrieve relevant exemplars even when image and text are semantically divergent.
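A minimal sketch of the MER idea follows: image-to-image and text-to-text cosine similarities are computed separately against each pool exemplar and then fused. The weighted sum with a single weight `w_img` is an assumed fusion rule (only the tunability of the weighting is stated), the embeddings are assumed to come from some image and text encoders, and `mer_scores`, `retrieve`, and `build_ricl_prompt` are hypothetical names. The retrieved exemplars, whose explanations were produced by the larger model, are then placed in the smaller model's prompt.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def mer_scores(q_img: Vector, q_txt: Vector,
               pool_img: List[Vector], pool_txt: List[Vector],
               w_img: float = 0.5) -> List[float]:
    """Assumed fusion: w_img * sim_image + (1 - w_img) * sim_text.
    Images are compared only with images and text only with text, so the
    query's own image and text never need to agree with each other."""
    return [w_img * cosine(q_img, p_img) + (1.0 - w_img) * cosine(q_txt, p_txt)
            for p_img, p_txt in zip(pool_img, pool_txt)]


def retrieve(q_img: Vector, q_txt: Vector, pool_img: List[Vector],
             pool_txt: List[Vector], k: int = 2, w_img: float = 0.5) -> List[int]:
    """Indices of the top-k pool exemplars by fused score (ties keep pool order)."""
    scores = mer_scores(q_img, q_txt, pool_img, pool_txt, w_img)
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def build_ricl_prompt(exemplars: List[dict], query_outcome: str) -> str:
    """Prepend retrieved exemplars to the query; the wording is hypothetical."""
    shots = [f"Example {n}: {ex['outcome']}\nExplanation: {ex['explanation']}"
             for n, ex in enumerate(exemplars, 1)]
    shots.append(f"Query: {query_outcome}\nExplanation:")
    return "\n\n".join(shots)


# Toy 2-D embeddings standing in for real encoder outputs.
q_img, q_txt = [1.0, 0.0], [0.0, 1.0]
pool_img = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
pool_txt = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
pool = [{"outcome": f"outcome {i}", "explanation": f"explanation {i}"} for i in range(3)]

for w in (0.8, 0.2):
    print(w, retrieve(q_img, q_txt, pool_img, pool_txt, k=2, w_img=w))
print(build_ricl_prompt([pool[i] for i in retrieve(q_img, q_txt, pool_img, pool_txt)],
                        "arrested by police"))
```

In the toy run, exemplar 2 matches on both modalities and always ranks first, while the second slot flips between the image-matching and the text-matching exemplar as `w_img` changes. That sensitivity is also why the weight may need re-tuning for a new domain.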

Experiments compare MER‑R‑ICL against baseline ICL methods and a random exemplar selection baseline. Across multiple VLM backbones, MER‑R‑ICL achieves an average 8.3% absolute increase in win‑rate over random selection and consistently outperforms standard ICL. Detailed metrics (accuracy, BLEU, METEOR, and human plausibility ratings) show that explanations generated with MER‑R‑ICL are more coherent, context‑aware, and culturally sensible, especially in low‑frequency or culturally specific scenarios.
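An absolute increase in win rate is measured in percentage points, not as a relative change. As a purely hypothetical illustration, moving from a 40% to a 48.3% win rate is an 8.3-point absolute gain but a relative gain of about 20.75% (8.3 / 40). The sketch below computes both from pairwise judgments; counting a tie as half a win is an assumption rather than the paper's stated protocol, the function names are hypothetical, and the judgment lists are toy data, not results.

```python
from typing import Iterable


def win_rate(judgments: Iterable[str], tie_weight: float = 0.5) -> float:
    """Fraction of pairwise comparisons won; a tie counts as tie_weight of a win (assumption)."""
    js = list(judgments)
    if not js:
        raise ValueError("no judgments")
    wins = sum(j == "win" for j in js)
    ties = sum(j == "tie" for j in js)
    return (wins + tie_weight * ties) / len(js)


def absolute_gain_pp(new_rate: float, base_rate: float) -> float:
    """Absolute improvement in percentage points."""
    return 100.0 * (new_rate - base_rate)


def relative_gain_pct(new_rate: float, base_rate: float) -> float:
    """Relative improvement as a percentage of the baseline rate."""
    return 100.0 * (new_rate - base_rate) / base_rate


# Toy judgments for two exemplar-selection strategies against the same reference.
random_sel = win_rate(["win", "loss", "tie", "loss"])   # (1 + 0.5) / 4
retrieved = win_rate(["win", "win", "tie", "loss"])     # (2 + 0.5) / 4
print(absolute_gain_pp(retrieved, random_sel), relative_gain_pct(retrieved, random_sel))
```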

The paper acknowledges limitations: manual image curation restricts scalability; the notion of “uncommon” is inherently subjective, potentially affecting reproducibility; and MER’s weighting parameters may need re‑tuning for new domains. Future work is suggested in automating image‑text pairing, defining quantitative uncommonness measures, and expanding the benchmark to cover a broader range of cultures and modalities.

In summary, MUN introduces a novel, bidirectional multimodal commonsense benchmark that challenges models with deliberately discordant visual‑textual pairs. The proposed MER‑based R‑ICL framework demonstrates that small VLMs can inherit sophisticated reasoning abilities from larger models without extra training, achieving notable performance gains. This work opens a new research direction toward robust, culturally aware AI systems capable of abductive reasoning beyond everyday expectations.
