Making Avatars Interact: Towards Text-Driven Human-Object Interaction for Controllable Talking Avatars

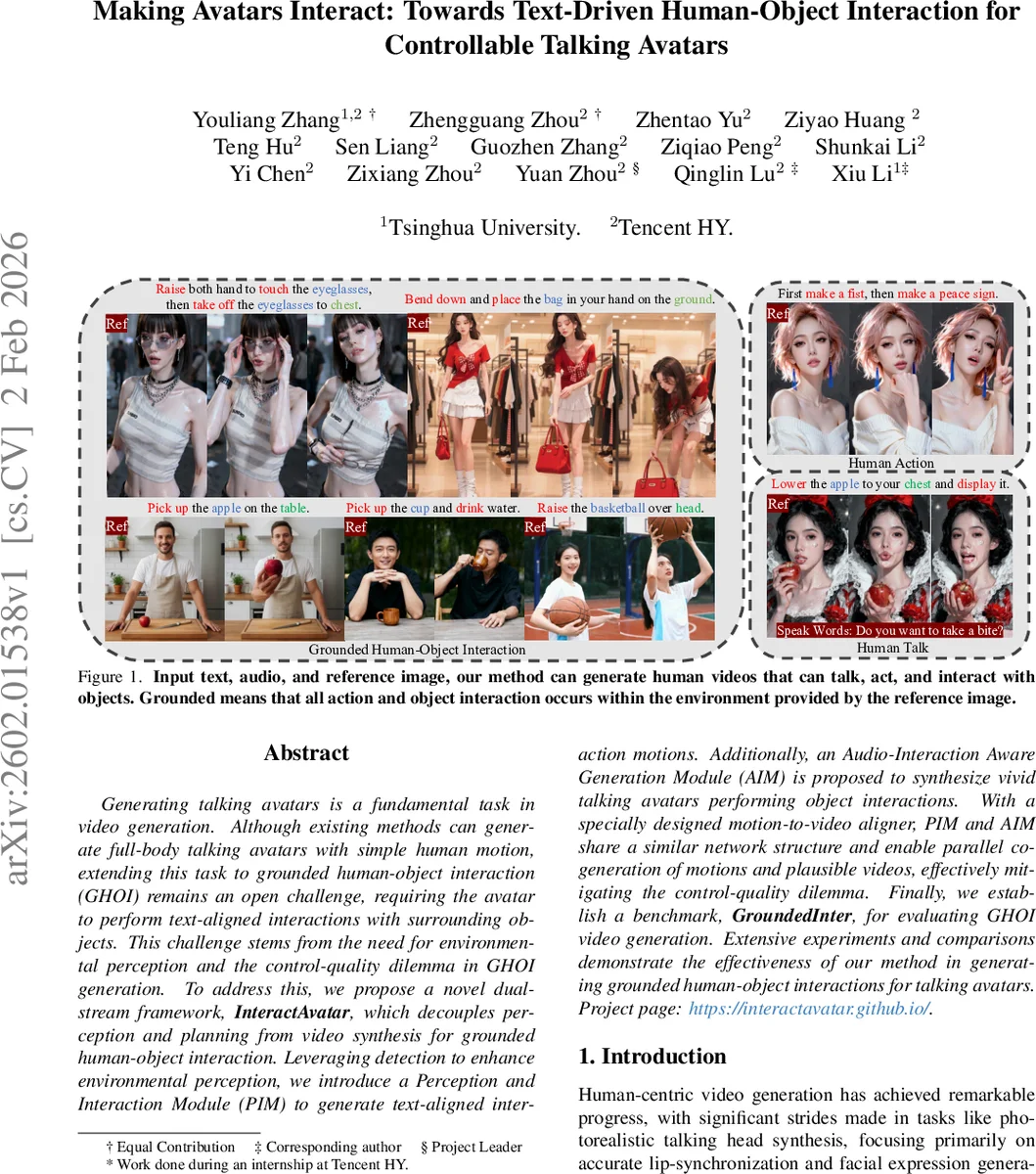

Generating talking avatars is a fundamental task in video generation. Although existing methods can generate full-body talking avatars with simple human motion, extending this task to grounded human-object interaction (GHOI) remains an open challenge, requiring the avatar to perform text-aligned interactions with surrounding objects. This challenge stems from the need for environmental perception and the control-quality dilemma in GHOI generation. To address this, we propose a novel dual-stream framework, InteractAvatar, which decouples perception and planning from video synthesis for grounded human-object interaction. Leveraging detection to enhance environmental perception, we introduce a Perception and Interaction Module (PIM) to generate text-aligned interaction motions. Additionally, an Audio-Interaction Aware Generation Module (AIM) is proposed to synthesize vivid talking avatars performing object interactions. With a specially designed motion-to-video aligner, PIM and AIM share a similar network structure and enable parallel co-generation of motions and plausible videos, effectively mitigating the control-quality dilemma. Finally, we establish a benchmark, GroundedInter, for evaluating GHOI video generation. Extensive experiments and comparisons demonstrate the effectiveness of our method in generating grounded human-object interactions for talking avatars. Project page: https://interactavatar.github.io

💡 Research Summary

InteractAvatar tackles the newly defined task of Grounded Human‑Object Interaction (GHOI) for talking avatars, where an avatar must act on objects within a specific scene while speaking. The authors propose a dual‑stream Diffusion Transformer (DiT) architecture that separates perception‑planning from video synthesis. The Perception and Interaction Module (PIM) receives a reference image, a textual command, and optional initial motion, and jointly learns object detection and motion generation using a unified flow‑matching loss. By alternating between “Conditional Continuation” (predict future motion given the first frame) and “Perception‑as‑Generation” (predict the whole sequence from image and text), PIM learns to parse scene layout and produce a spatio‑temporal motion consisting of human skeletal keypoints and object bounding‑box trajectories.

The Audio‑Interaction aware Generation Module (AIM) takes the motion latent, audio features extracted by a pretrained Wav2Vec model, and synthesizes high‑fidelity video frames. A Motion‑to‑Video (M2V) aligner injects intermediate PIM features into AIM layer‑wise via residual connections, ensuring that the generated video stays tightly coupled with the evolving motion. This parallel co‑generation mitigates the classic control‑quality dilemma: the system remains controllable through text/audio while preserving visual realism.

Key engineering tricks include: (1) embedding the reference image as a virtual timestep –1 with a custom Rotary Position Embedding mapping, allowing it to condition the entire sequence without disrupting temporal encodings; (2) concatenating a task‑type embedding (ACTION vs HOI) with the text embedding for unified cross‑attention conditioning; (3) limiting motion resolution to 256 px on the shorter side to reduce computation; and (4) applying a spatial face mask to focus audio‑injection on the facial region, stabilizing lip‑sync training.

To evaluate the approach, the authors construct GroundedInter, a benchmark of 600 test cases each comprising a reference image, a structured textual description of the interaction, and corresponding speech audio. Metrics cover scene‑action grounding, object interaction accuracy, lip‑sync quality, and overall video fidelity (FID, LPIPS). InteractAvatar outperforms prior audio‑driven full‑body animation models (e.g., OmniHuman‑1) and HOI‑conditioned generation methods (e.g., AnchorCrafter, ManiVideo) across all metrics, achieving notably lower FID, higher LPIPS, and superior alignment with textual commands. Human studies confirm higher perceived naturalness and command fidelity.

Limitations include reliance on 2‑D bounding‑box object representations, which restricts complex 3D manipulations and physical dynamics, and dependence on pretrained object detectors that may not generalize to unseen categories. Future work is suggested on 3‑D scene reconstruction, physics‑based interaction, multi‑object simultaneous actions, and efficient sampling for real‑time deployment.

In summary, InteractAvatar introduces a novel, decoupled architecture that successfully generates controllable, high‑quality talking avatars performing grounded human‑object interactions, and provides a dedicated benchmark to spur further research in this emerging domain.

Comments & Academic Discussion

Loading comments...

Leave a Comment