OpInf-LLM: Parametric PDE Solving with LLMs via Operator Inference

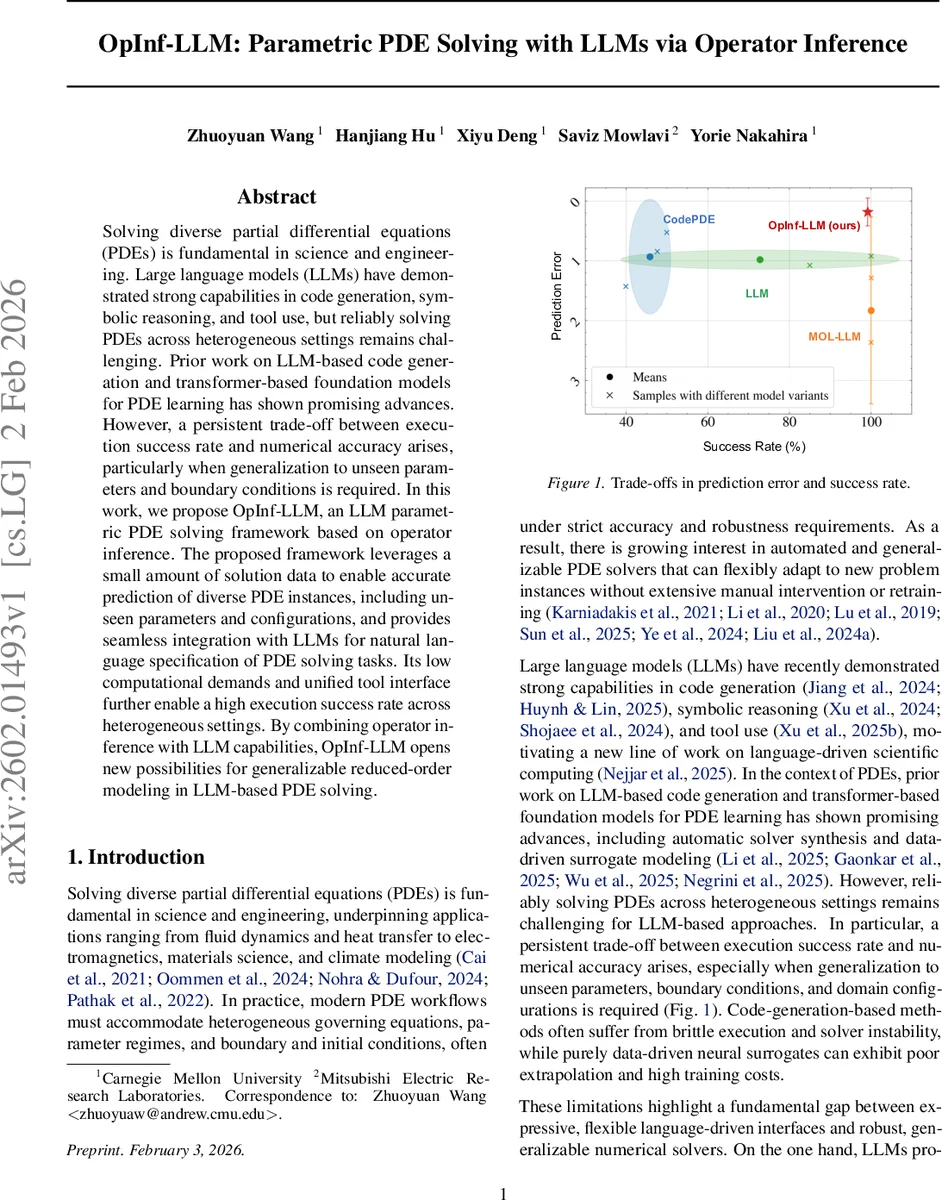

Solving diverse partial differential equations (PDEs) is fundamental in science and engineering. Large language models (LLMs) have demonstrated strong capabilities in code generation, symbolic reasoning, and tool use, but reliably solving PDEs across heterogeneous settings remains challenging. Prior work on LLM-based code generation and transformer-based foundation models for PDE learning has shown promising advances. However, these approaches face a persistent trade-off between execution success rate and numerical accuracy, particularly when they must generalize to unseen parameters and boundary conditions. In this work, we propose OpInf-LLM, an LLM-based framework for solving parametric PDEs that is built on operator inference. The framework uses a small amount of solution data to accurately predict diverse PDE instances, including unseen parameters and configurations, and integrates with LLMs so that PDE solving tasks can be specified in natural language. Its low computational demands and unified tool interface further enable a high execution success rate across heterogeneous settings. By combining operator inference with LLM capabilities, OpInf-LLM opens new possibilities for generalizable reduced-order modeling in LLM-based PDE solving.

💡 Research Summary

OpInf‑LLM introduces a novel framework that combines large language models (LLMs) with operator inference (OpInf) to solve parametric partial differential equations (PDEs) in a robust, data‑efficient, and user‑friendly manner. The approach is divided into an offline stage and an online stage. In the offline stage, a small set of high‑fidelity simulation snapshots covering a limited range of parameters is collected. Proper orthogonal decomposition (POD) is applied to all snapshots to obtain a shared reduced basis Φ of dimension r. For each training parameter ξi, the solution is projected onto Φ, yielding modal coefficients a(t, ξi) and their time derivatives. A least‑squares problem with Tikhonov regularization is then solved to infer reduced operators A(ξi), H(ξi), B(ξi), and c(ξi) that reproduce the projected dynamics via the quadratic reduced‑order model da/dt = A(ξ)a + H(ξ)(a⊗a) + B(ξ)u + c(ξ). To enable generalization, the operators are treated as functions of ξ and fitted with polynomial regression, allowing the prediction of operators for unseen parameters ξ∗ without additional high‑fidelity simulations.
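The offline stage described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`pod_basis`, `project`, `infer_operators`, `fit_operator_polynomials`), the finite-difference estimate of the time derivatives, the full (redundant) Kronecker product a⊗a, and the default regularization weight are all choices made here for illustration.

```python
import numpy as np

def pod_basis(snapshots, r):
    """Return the r leading left singular vectors of an (n, K) snapshot matrix."""
    U, _, _ = np.linalg.svd(snapshots, full_matrices=False)
    return U[:, :r]

def project(Phi, snapshots, dt):
    """Modal coefficients a = Phi^T x and finite-difference estimates of da/dt.

    Assumption: uniformly spaced snapshots with time step dt.
    """
    a = Phi.T @ snapshots
    return a, np.gradient(a, dt, axis=1)

def infer_operators(a, dadt, u, reg=1e-8):
    """Tikhonov-regularized least squares for da/dt = A a + H (a⊗a) + B u + c.

    a, dadt: (r, K) arrays; u: (m, K) array of inputs.
    Returns A (r, r), H (r, r*r), B (r, m), c (r,).
    """
    r, K = a.shape
    m = u.shape[0]
    # Column-wise Kronecker product, ordered like np.kron(a_k, a_k).
    a2 = np.einsum("ik,jk->ijk", a, a).reshape(r * r, K)
    D = np.vstack([a, a2, u, np.ones((1, K))]).T          # data matrix (K, d)
    d = D.shape[1]
    # Ridge regression written as an augmented ordinary least-squares problem.
    D_aug = np.vstack([D, np.sqrt(reg) * np.eye(d)])
    R_aug = np.vstack([dadt.T, np.zeros((d, r))])
    O = np.linalg.lstsq(D_aug, R_aug, rcond=None)[0].T    # stacked operators (r, d)
    A, H, B, c = np.split(O, [r, r + r * r, r + r * r + m], axis=1)
    return A, H, B, c[:, 0]

def fit_operator_polynomials(xis, operators, deg):
    """Fit every operator entry as a degree-`deg` polynomial in the parameter.

    operators: list of equally shaped arrays, one per training parameter.
    Returns coefficients of shape (deg + 1, *operator_shape), highest degree first.
    """
    Y = np.stack([op.ravel() for op in operators])        # (n_train, n_entries)
    coeffs = np.polyfit(xis, Y, deg)                      # (deg + 1, n_entries)
    return coeffs.reshape((deg + 1,) + operators[0].shape)
```

In this sketch, `pod_basis` would be called once on the snapshots of all training parameters stacked column-wise, `project` and `infer_operators` once per training parameter ξi, and `fit_operator_polynomials` once per operator (A, H, B, c) across the training parameters. Because the full Kronecker product contains duplicate columns, the regularization term is what makes the least-squares problem well posed here.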

The online stage uses an LLM as a natural‑language front‑end. A user issues a plain‑language instruction such as “Solve the viscous Burgers equation with ν = 0.03 and Dirichlet boundary conditions …”. The LLM parses the request, extracts the PDE type, parameter values, and boundary specifications, and then calls three pre‑registered tool functions: (1) polynomial_regression to obtain A(ξ∗), H(ξ∗), B(ξ∗), c(ξ∗); (2) ode_integration to integrate the low‑dimensional ODE system forward in time; and (3) reconstruction to map the modal solution back to the full‑order field using Φ. Because the online computation only evaluates a few polynomial expressions and integrates a small ODE system, its cost is low, and runtime failures are far less likely than in code‑generation pipelines, which must compile and execute a newly generated solver.
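The three tool calls can be illustrated with a short NumPy sketch. The tool names `polynomial_regression`, `ode_integration`, and `reconstruction` come from the summary above, but their signatures, the coefficient layout (highest degree first, one array per operator), the fixed-step RK4 integrator, and the `solve` dispatcher with its `parsed` dictionary are assumptions made here for illustration. The LLM parsing step itself is not modeled.

```python
import numpy as np

def polynomial_regression(coeffs, xi_star):
    """Evaluate fitted per-entry polynomials at an unseen parameter xi_star.

    coeffs: dict mapping "A", "H", "B", "c" to arrays of shape
    (deg + 1, *operator_shape), highest degree first (assumed layout).
    """
    ops = {}
    for name, c in coeffs.items():
        deg = c.shape[0] - 1
        powers = xi_star ** np.arange(deg, -1, -1)
        ops[name] = np.tensordot(powers, c, axes=1)
    return ops

def ode_integration(ops, a0, t_eval, u=None):
    """Integrate da/dt = A a + H (a⊗a) + B u + c with classical RK4.

    RK4 on the grid t_eval is a stand-in; any ODE solver could be used.
    Returns modal coefficients of shape (r, len(t_eval)).
    """
    A, H, B, c = (ops[k] for k in ("A", "H", "B", "c"))

    def rhs(t, a):
        ut = np.zeros(B.shape[1]) if u is None else u(t)
        return A @ a + H @ np.kron(a, a) + B @ ut + c

    traj = [np.asarray(a0, dtype=float)]
    for t0, t1 in zip(t_eval[:-1], t_eval[1:]):
        h, a = t1 - t0, traj[-1]
        k1 = rhs(t0, a)
        k2 = rhs(t0 + h / 2, a + h / 2 * k1)
        k3 = rhs(t0 + h / 2, a + h / 2 * k2)
        k4 = rhs(t1, a + h * k3)
        traj.append(a + h / 6 * (k1 + 2 * k2 + 2 * k3 + k4))
    return np.array(traj).T

def reconstruction(Phi, a):
    """Map modal coefficients back to the full-order field, x ≈ Phi a."""
    return Phi @ a

def solve(parsed, coeffs, Phi):
    """Hypothetical dispatcher: `parsed` holds what the LLM extracted from the request."""
    ops = polynomial_regression(coeffs, parsed["xi"])
    a = ode_integration(ops, parsed["a0"], parsed["t_eval"], parsed.get("u"))
    return reconstruction(Phi, a)
```

A request such as the Burgers example above would then reduce to a call like `solve({"xi": 0.03, "a0": a0, "t_eval": t}, coeffs, Phi)`, where `a0` is the projected initial condition. Since every step is a fixed, pre-registered function, the LLM only has to supply arguments rather than generate solver code.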

Experimental evaluation covers three benchmark PDE families: the heat equation, Burgers’ equation, and the lid‑driven cavity flow (Navier‑Stokes). For each family, the authors train OpInf‑LLM on 5–10 parameter settings and test on unseen parameters across a wide range (e.g., ν ∈
