MEnvAgent: Scalable Polyglot Environment Construction for Verifiable Software Engineering

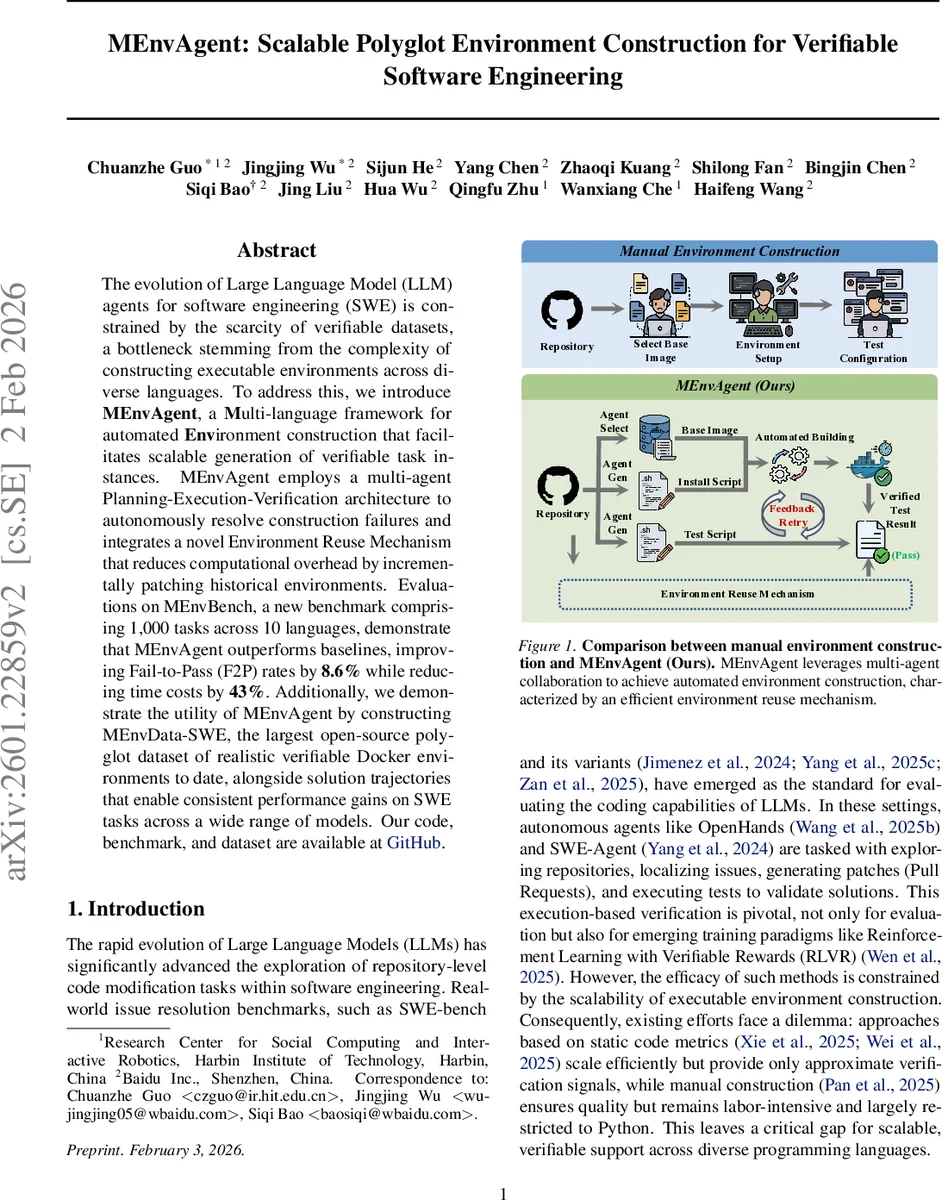

The evolution of Large Language Model (LLM) agents for software engineering (SWE) is constrained by the scarcity of verifiable datasets, a bottleneck stemming from the complexity of constructing executable environments across diverse languages. To address this, we introduce MEnvAgent, a Multi-language framework for automated Environment construction that facilitates scalable generation of verifiable task instances. MEnvAgent employs a multi-agent Planning-Execution-Verification architecture to autonomously resolve construction failures and integrates a novel Environment Reuse Mechanism that reduces computational overhead by incrementally patching historical environments. Evaluations on MEnvBench, a new benchmark comprising 1,000 tasks across 10 languages, demonstrate that MEnvAgent outperforms baselines, improving Fail-to-Pass (F2P) rates by 8.6% while reducing time costs by 43%. Additionally, we demonstrate the utility of MEnvAgent by constructing MEnvData-SWE, the largest open-source polyglot dataset of realistic verifiable Docker environments to date, alongside solution trajectories that enable consistent performance gains on SWE tasks across a wide range of models. Our code, benchmark, and dataset are available at https://github.com/ernie-research/MEnvAgent.

💡 Research Summary

The paper tackles a fundamental bottleneck in large‑language‑model (LLM)‑driven software engineering (SWE): the scarcity of verifiable datasets. Existing datasets are either limited to Python, rely on static code metrics, or require labor‑intensive manual construction of executable environments. Both issues hinder scalable training and evaluation of LLM agents that need to run code, apply patches, and verify results through real tests.

To solve this, the authors introduce MEnvAgent, a multi‑language framework that automatically constructs, verifies, and reuses Docker‑based execution environments for arbitrary open‑source repositories. The system is built around a closed‑loop Planning‑Execution‑Verification architecture, populated by specialized agents:

- Repository Analysis Agent parses a repository snapshot, extracts its project type, dependency list, and entry points.

- Environment Setup Agent selects an appropriate base image (e.g., Ubuntu 22.04) and generates a full installation script (the build process P).

- Test Configuration Agent creates a test script (T) that runs the repository’s test suite, handling language‑specific frameworks such as pytest, Maven, or cargo.

During the Execution stage, the Environment Execution Agent spins up a container, runs the build script, and monitors the terminal output in real time. It can automatically apply corrective commands (install missing packages, pin versions, etc.) without human intervention. If the build succeeds, the workflow proceeds to verification; otherwise, the system falls back to the Planning stage to generate a new plan.

The Verification stage is performed by the Verification Agent, which executes the test script inside the container. Success is defined by the Fail‑to‑Pass (F2P) criterion: the original repository must fail the tests, while the repository after applying the fix patch must pass them. This ensures that the constructed environment faithfully reproduces the bug and validates its resolution. If verification fails, the agent attributes the error (missing dependency, incorrect test command) and feeds this information back to the Planning agents for another iteration.

A key novelty is the Environment Reuse Mechanism. Instead of building every environment from scratch, MEnvAgent maintains a pool of previously verified environments (S_pool). When a new task arrives, it searches this pool for a “similar” environment S_sim using a hierarchical similarity metric: exact version match, same repository, and backward compatibility (newer environments often support older dependencies). Once a candidate is found, an EnvPatchAgent synthesizes an incremental patch ΔP that adapts the existing environment to the new repository snapshot. The patch is applied iteratively, with verification after each step, until the F2P condition is satisfied. This reuse strategy dramatically cuts down build time and computational cost; in the authors’ experiments, 62 % of tasks reused an existing environment, and overall time per task dropped by 43 %.

To evaluate the approach, the authors construct MEnvBench, a new benchmark comprising 1,000 tasks across ten mainstream languages (Python, Java, JavaScript, Go, Rust, C++, C, PHP, Ruby, TypeScript). The benchmark draws from 200 high‑quality GitHub repositories (≥1 k stars, ≥200 forks, primary language > 60 %). For each repository, five historical issue‑pull‑request pairs are selected, ensuring each task includes a bug‑fix patch and a corresponding test patch. Strict quality controls (closed issues, explicit test patches, LLM‑based issue scoring) guarantee that the benchmark reflects realistic, verifiable SWE scenarios.

Experimental results show that MEnvAgent outperforms several baselines, including prior automated environment builders and manual construction. On MEnvBench, MEnvAgent improves the Fail‑to‑Pass rate by 8.6 % relative to the best baseline and reduces average construction time by 43 %. The authors also release MEnvData‑SWE, the largest open‑source polyglot dataset of realistic, verifiable Docker environments, complete with solution trajectories (the sequence of agent actions and feedback). Fine‑tuning open‑source LLMs on this dataset yields consistent performance gains on downstream SWE tasks, demonstrating the practical utility of the generated data.

In summary, the paper makes three major contributions:

- MEnvAgent – a scalable, multi‑agent system for automated, polyglot environment construction that includes a novel reuse mechanism to cut computational overhead.

- MEnvBench – a comprehensive, execution‑based benchmark covering ten languages, 200 repositories, and 1,000 tasks, with strict quality assurance.

- MEnvData‑SWE – the largest publicly available dataset of verified Docker environments for SWE, enabling better training and evaluation of LLM agents.

The work shows that by treating environment construction as an autonomous, reusable service, the community can overcome the data scarcity barrier and accelerate research on LLM‑driven software engineering across diverse programming ecosystems. Future directions include expanding the environment pool, incorporating meta‑learning to accelerate adaptation to new languages or frameworks, and integrating the system with reinforcement‑learning‑with‑verifiable‑rewards pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment