Gated Relational Alignment via Confidence-based Distillation for Efficient VLMs

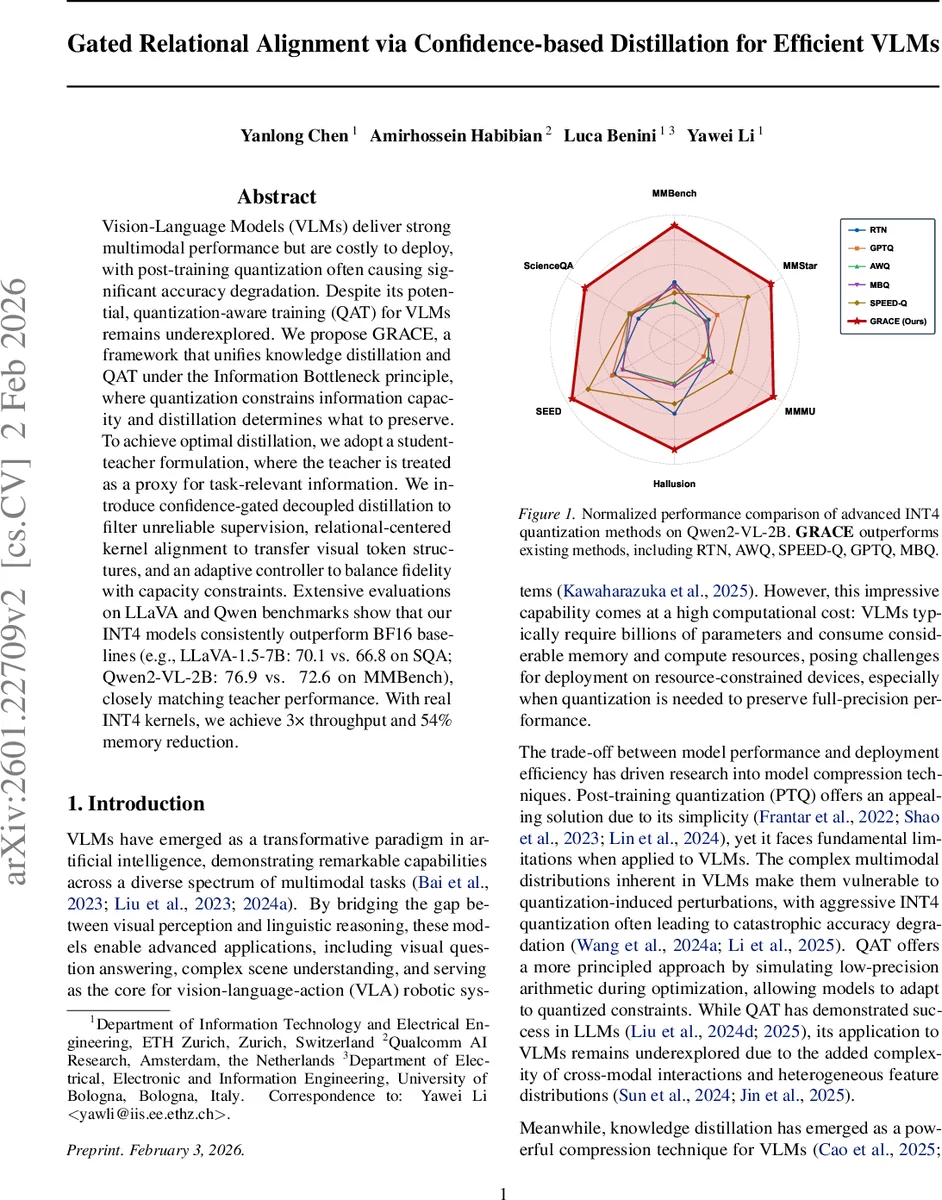

Vision-Language Models (VLMs) achieve strong multimodal performance but are costly to deploy, and post-training quantization often causes significant accuracy loss. Despite its potential, quantization-aware training for VLMs remains underexplored. We propose GRACE, a framework unifying knowledge distillation and QAT under the Information Bottleneck principle: quantization constrains information capacity while distillation guides what to preserve within this budget. Treating the teacher as a proxy for task-relevant information, we introduce confidence-gated decoupled distillation to filter unreliable supervision, relational centered kernel alignment to transfer visual token structures, and an adaptive controller via Lagrangian relaxation to balance fidelity against capacity constraints. Across extensive benchmarks on LLaVA and Qwen families, our INT4 models consistently outperform FP16 baselines (e.g., LLaVA-1.5-7B: 70.1 vs. 66.8 on SQA; Qwen2-VL-2B: 76.9 vs. 72.6 on MMBench), nearly matching teacher performance. Using real INT4 kernel, we achieve 3$\times$ throughput with 54% memory reduction. This principled framework significantly outperforms existing quantization methods, making GRACE a compelling solution for resource-constrained deployment.

💡 Research Summary

The paper tackles the pressing challenge of deploying large Vision‑Language Models (VLMs) under strict resource constraints. While post‑training quantization (PTQ) is simple, it often catastrophically degrades performance for VLMs, especially at aggressive INT4 precision. Quantization‑aware training (QAT) offers a more principled solution but has been under‑explored for VLMs due to the added complexity of cross‑modal interactions and heterogeneous feature distributions.

To bridge this gap, the authors formulate model compression as an Information Bottleneck (IB) problem: quantization imposes a hard bit‑budget (capacity constraint) and knowledge distillation determines which information should be retained within that budget. The teacher model serves as a dense proxy for task‑relevant information, guiding the student (quantized) model to allocate its limited capacity wisely.

GRACE (Gated Relational Alignment via Confidence‑based Distillation) introduces three synergistic components:

-

Confidence‑Gated Decoupled Knowledge Distillation (GDKD).

- The authors empirically demonstrate a strong positive correlation between teacher output entropy and error rate (Pearson r = 0.484, R² = 0.901 on ScienceQA).

- They decompose the classic KL‑distillation loss into Target‑Class Knowledge Distillation (TCKD) and Non‑target‑Class Knowledge Distillation (NCKD), following the Decoupled Knowledge Distillation (DKD) paradigm.

- A confidence gate gᵢ = exp(‑ĥᵢ) (where ĥᵢ is normalized entropy) down‑weights tokens with high uncertainty, effectively filtering noisy supervision. The gated loss L_GDKD = ∑ᵢ gᵢ · L_DKD⁽ⁱ⁾/∑ᵢ gᵢ implements a bottleneck on the supervision signal, allocating more distillation capacity to confident teacher predictions.

-

Relational Centered Kernel Alignment (RCKA).

- Visual reasoning in VLMs relies heavily on the relational structure among visual tokens. The authors compute centered kernel matrices K_T and K_S for teacher and student visual token embeddings at the penultimate LLM layer, then maximize their Centered Kernel Alignment (CKA) similarity.

- This loss transfers fine‑grained intra‑sample token‑to‑token similarity patterns (e.g., attention maps) rather than only batch‑level relationships, enabling the student to inherit the teacher’s superior visual attention dynamics.

-

Adaptive Information‑Bottleneck Controller.

- Because the student’s capacity to absorb information evolves during training, a static distillation weight β is suboptimal. The controller monitors an EMA‑smoothed version of the gated DKD loss (L_GDKD). If L_GDKD exceeds a predefined threshold τ, β is increased to strengthen teacher guidance; if it falls below τ, β is decreased to avoid over‑regularization. This dynamic adjustment balances fidelity to the teacher against the quantization‑induced capacity limit.

Quantization itself uses group‑wise learned step‑size quantization (LSQ), jointly updating both weights and per‑group scales. The total loss combines cross‑entropy, gated DKD, and RCKA terms, with the adaptive controller modulating the DKD weight in real time.

Theoretical contributions include:

- Theorem 3.1 proving that confidence gating introduces a negative covariance between token loss and gating weight, formally justifying the reduction of noisy supervision.

- Proposition 3.2 interpreting the KL divergence as an information gap: minimizing L_GDKD maximizes the mutual information I(Z_S; Y_T) between the student’s representation and the teacher’s pseudo‑labels, providing an IB‑consistent objective.

Empirical evaluation spans two major VLM families: LLaVA‑1.5 and Qwen2‑VL. INT4‑quantized 7B LLaVA‑1.5 achieves 70.1 % on ScienceQA (vs. 66.8 % for BF16) and an average accuracy of 69.0 %—nearly matching the 13B teacher. Qwen2‑VL‑2B in INT4 surpasses its full‑precision baseline across all benchmarks (e.g., SQA 79.1 % vs. 73.7 %). Compared against state‑of‑the‑art quantization methods (RTN, AWQ, SPEED‑Q, GPTQ, MBQ), GRACE consistently delivers higher accuracy. Real‑hardware INT4 kernels yield a 3× inference speedup and a 54 % reduction in memory footprint.

In summary, GRACE unifies quantization‑aware training and knowledge distillation under an Information Bottleneck lens, introducing confidence‑gated decoupled distillation, relational kernel alignment, and an adaptive capacity controller. This combination enables aggressive INT4 quantization of VLMs with negligible performance loss, paving the way for efficient multimodal AI deployment on edge and mobile platforms.

Comments & Academic Discussion

Loading comments...

Leave a Comment