Less Noise, More Voice: Reinforcement Learning for Reasoning via Instruction Purification

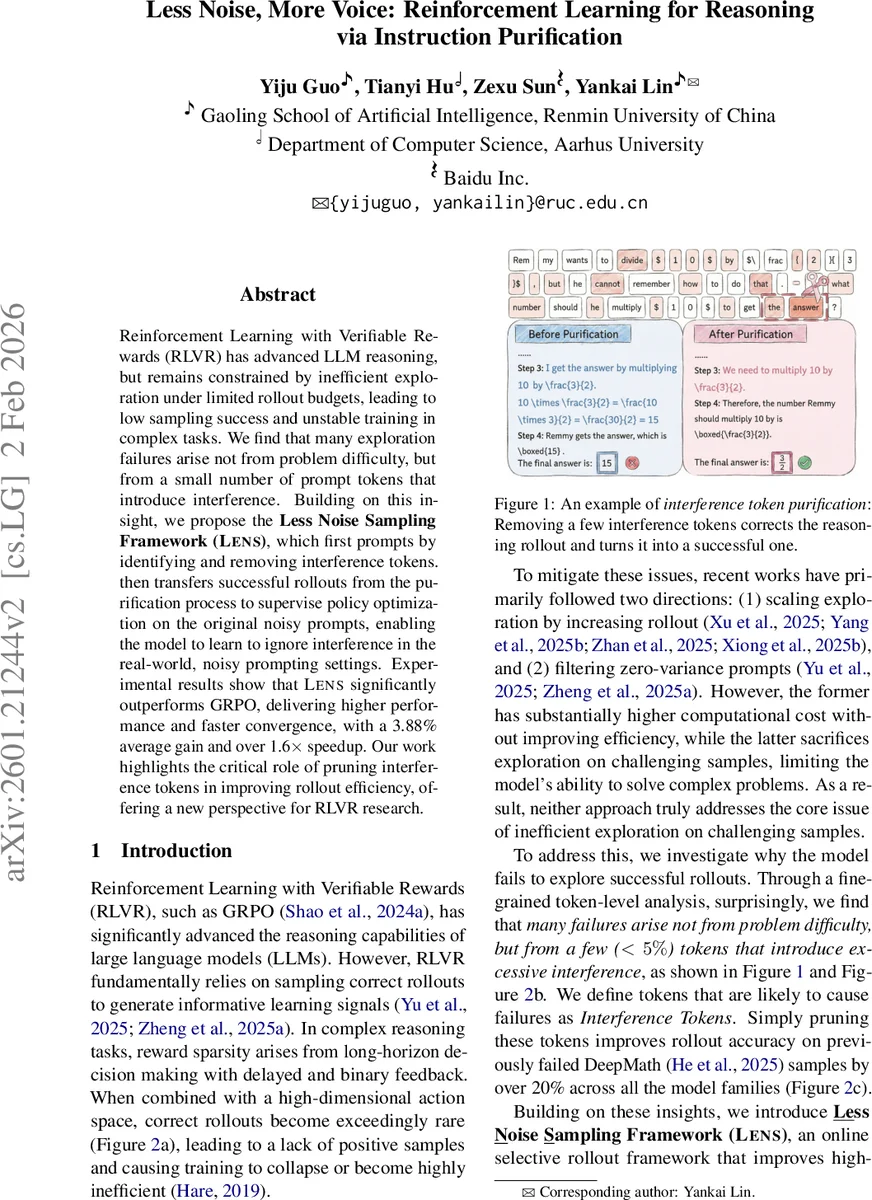

Reinforcement Learning with Verifiable Rewards (RLVR) has advanced LLM reasoning, but remains constrained by inefficient exploration under limited rollout budgets, leading to low sampling success and unstable training in complex tasks. We find that many exploration failures arise not from problem difficulty, but from a small number of prompt tokens that introduce interference. Building on this insight, we propose the Less Noise Sampling Framework (LENS), which first prompts by identifying and removing interference tokens. then transfers successful rollouts from the purification process to supervise policy optimization on the original noisy prompts, enabling the model to learn to ignore interference in the real-world, noisy prompting settings. Experimental results show that LENS significantly outperforms GRPO, delivering higher performance and faster convergence, with a 3.88% average gain and over 1.6$\times$ speedup. Our work highlights the critical role of pruning interference tokens in improving rollout efficiency, offering a new perspective for RLVR research.

💡 Research Summary

Reinforcement Learning with Verifiable Rewards (RLVR) has become a prominent paradigm for improving the reasoning abilities of large language models (LLMs). The approach relies on sampling roll‑outs that receive a binary, verifiable reward, and then using those outcomes to update the policy. In complex, long‑horizon tasks such as advanced mathematics, successful roll‑outs are extremely sparse, which leads to unstable training and poor sample efficiency. Prior work has tackled this problem in two ways: (1) scaling up the number of roll‑outs per instruction, and (2) filtering out “zero‑variance” prompts that never yield a reward. The former dramatically increases computational cost without guaranteeing better samples, while the latter discards hard examples and limits the model’s ability to learn on challenging problems.

The authors of this paper conduct a fine‑grained token‑level analysis of failed roll‑outs and discover that a tiny fraction of tokens—less than five percent of the total—are responsible for the majority of failures. They term these “Interference Tokens.” Such tokens cause a large divergence between the current policy πθ and a reference policy πref (trained on the original data) as measured by KL‑divergence. The divergence is interpreted as a symptom of over‑optimization or noisy signal injection, which in turn hampers effective exploration in the high‑dimensional token action space.

To address this, the paper introduces the Less Noise Sampling framework (LENS), which operates in two stages.

Stage 1 – Interference Token Identification & Purification. For each prompt xᵢ, the framework computes an Interference Score for every token:

S_I(s, a) = |log πθ(a|s) – log πref(a|s)|.

Tokens are ranked by this score, and the top γ % (typically 1–5 %) are removed, yielding a denoised prompt x′ᵢ. Empirically, this simple pruning raises the success rate of roll‑outs generated from x′ᵢ, especially on difficult DeepMath‑style samples where accuracy improves by more than 20 %.

Stage 2 – Calibrated Rollout Policy Optimization (CRPO). When the original prompt’s success rate (\bar a_i) falls below a threshold τ, LENS treats the successful roll‑outs obtained from the denoised prompt as high‑quality supervision. A subset of failed roll‑outs from the original prompt is replaced with these successful ones, preserving the original prompt‑to‑rollout mapping for the rest. The algorithm then re‑weights each roll‑out using a factor (\tilde w(y)) that depends on (\bar a_i) (successful roll‑outs receive weight (\bar a_i), replaced or failed roll‑outs receive (1-\bar a_i)). Importance sampling ratios (\rho(y;\theta) = \frac{\pi_\theta(y|x_i)}{\pi_{\text{old}}(y|x_{\text{roll}}(y))}) correct for the distribution shift between the original and denoised prompts. Finally, a PPO‑style clipped surrogate loss with a KL‑regularization term is optimized:

L(θ) = –∑y (\tilde w(y) \min\bigl(\rho(y;\theta) \hat A(y), \text{clip}(\rho(y;\theta), 1‑ε, 1+ε)\hat A(y)\bigr) + β D{KL}(\pi_\theta‖\pi_{\text{ref}})).

This calibrated objective enables the model to learn to ignore interference tokens while still being trained on the original, noisy prompts, thereby avoiding the collapse that occurs when only clean prompts are used.

The authors evaluate LENS on five model families (Llama‑3.2‑3B‑Instruct, Qwen‑2.5‑3B/7B, Qwen‑3‑4B‑Base, Qwen‑3‑8B‑Base) using the OpenRL‑Math‑46k training set. Seven downstream math reasoning benchmarks serve as testbeds: Math500, AMC23, AIME24, AIME25, GaokaoEN‑2023, Minerva, and OlympiadBench. Baselines include vanilla GRPO, a rollout‑budget‑doubled version (GRPO‑Extended), and two zero‑variance filtering methods (DAPO and GRESO) with and without extended training epochs.

Results show that LENS consistently outperforms all baselines. Across the three largest models (Qwen‑3‑4B‑Base, Qwen‑3‑8B‑Base, Llama‑3.2‑3B‑Instruct) LENS achieves an average 3.88 % higher Pass@1 than GRPO while converging 1.6 × faster. The advantage is especially pronounced on the hardest benchmarks (AMC23, AIME24/25, OlympiadBench), where filtering methods lose a substantial amount of useful signal. Learning curves illustrate smoother, more stable improvement for LENS compared with the oscillations observed in GRPO, indicating that the interference‑token removal yields higher‑quality roll‑outs that guide the policy more reliably.

Ablation studies vary the deletion ratio γ, confirming that modest pruning (≈3 %) yields the best trade‑off between noise reduction and semantic preservation. The authors also analyze the distribution of interference scores, finding that only a tiny tail of tokens exhibits high scores, validating the hypothesis that a small set of “noisy” tokens dominates failure.

The paper’s contributions are threefold: (1) identification and quantification of interference tokens as a primary cause of RLVR failure; (2) a two‑stage framework (LENS) that both cleans prompts and transfers the resulting high‑reward roll‑outs back to the original noisy setting via calibrated PPO updates; (3) extensive empirical evidence that LENS improves sample efficiency and final performance without increasing computational budget.

Limitations include reliance on a single reference policy for score computation, which may lead to false positives if the reference is itself imperfect, and the potential semantic drift caused by token deletion (though empirically negligible). Future work could explore adaptive deletion ratios, multi‑reference ensembles for more robust interference detection, and application of the same principle to non‑math domains such as code generation or commonsense reasoning.

In summary, LENS demonstrates that pruning a handful of disruptive tokens and intelligently re‑using the resulting successful roll‑outs can dramatically enhance RLVR training. By turning “noise” into a controllable signal, the framework offers a practical, low‑cost path to more reliable reasoning in large language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment