CUA-Skill: Develop Skills for Computer Using Agent

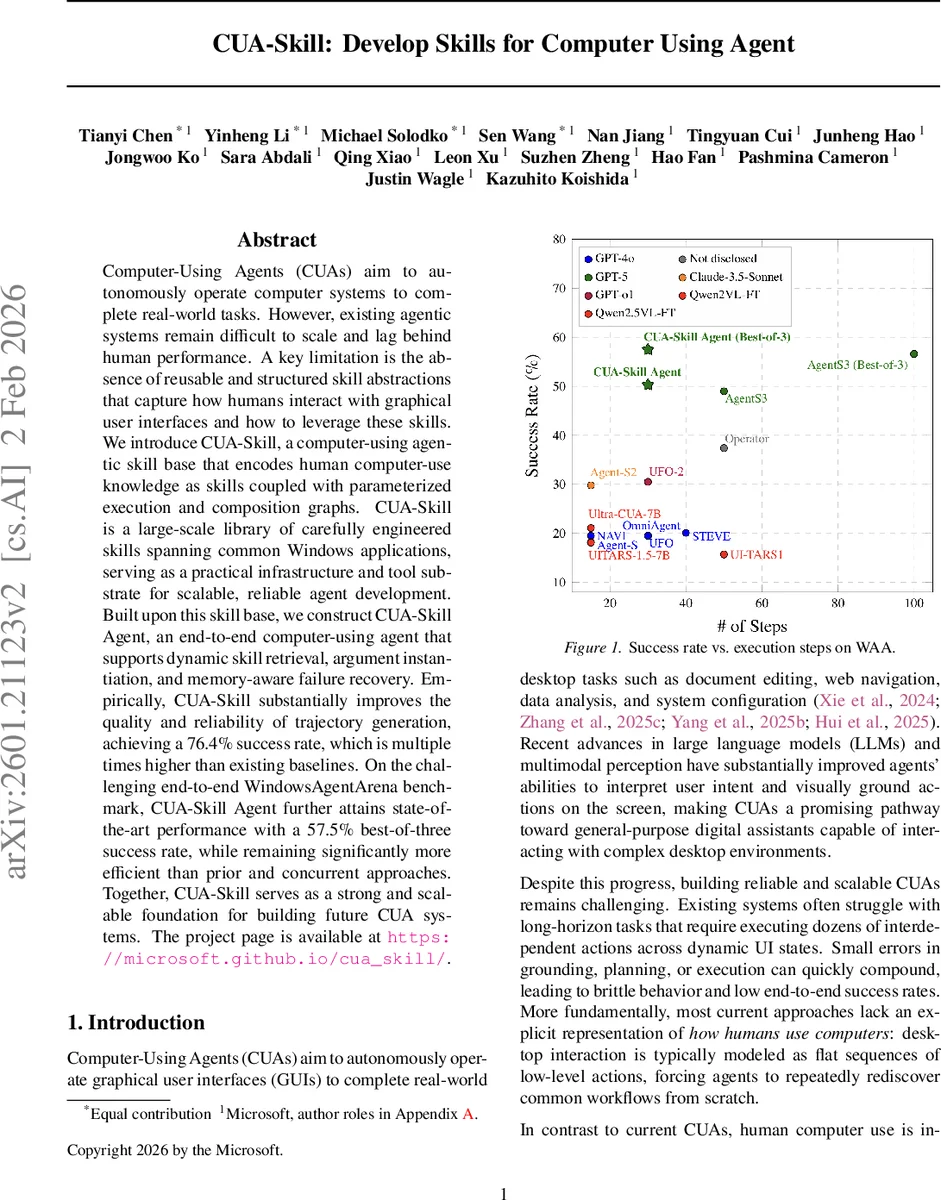

Computer-Using Agents (CUAs) aim to autonomously operate computer systems to complete real-world tasks. However, existing agentic systems remain difficult to scale and lag behind human performance. A key limitation is the absence of reusable, structured skill abstractions that capture how humans interact with graphical user interfaces and how these skills can be leveraged. We introduce CUA-Skill, a computer-using agentic skill base that encodes human computer-use knowledge as skills coupled with parameterized execution and composition graphs. CUA-Skill is a large-scale library of carefully engineered skills spanning common Windows applications, serving as a practical infrastructure and tool substrate for scalable, reliable agent development. Built upon this skill base, we construct CUA-Skill Agent, an end-to-end computer-using agent that supports dynamic skill retrieval, argument instantiation, and memory-aware failure recovery. Our results demonstrate that CUA-Skill substantially improves execution success rates and robustness on challenging end-to-end agent benchmarks, establishing a strong foundation for future computer-using agent development. On WindowsAgentArena, CUA-Skill Agent achieves a state-of-the-art success rate of 57.5% (best of three) while being significantly more efficient than prior and concurrent approaches. The project page is available at https://microsoft.github.io/cua_skill/.

💡 Research Summary

The paper addresses a fundamental bottleneck in current computer‑using agents (CUAs): the lack of reusable, structured abstractions that capture how humans interact with graphical user interfaces (GUIs). Existing agents typically model desktop interaction as flat sequences of low‑level actions, forcing them to rediscover common workflows for every new task and making them brittle on long‑horizon problems. To overcome this, the authors introduce CUA‑Skill, a library of parameterized “skills” together with execution and composition graphs that serve as an intermediate layer between high‑level user intent and primitive GUI operations.

Skill definition – Each skill S is defined by four elements: (i) the target application τ, (ii) a natural‑language description of the user intent I, (iii) an argument schema A (a set of typed slots), and (iv) a parameterized execution graph Gₑ. The argument schema is paired with a feasible domain D(A) that can be either finite (e.g., menu items, toggle states) or open (e.g., file paths, free‑form text). This separation allows the system to reason about argument validity independently of execution and to generate arguments using specialized generators (enumeration for finite domains, controlled sampling for open domains).
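The skill definition above can be sketched as a small Python data model. This is a minimal illustration, not the authors' code: the `FiniteDomain`/`OpenDomain` classes, the `generate_args` method, and the example Notepad skill are hypothetical names chosen here. Finite slots are enumerated exhaustively with `itertools.product`, while open slots are filled by a caller-supplied sampler and checked by a validator, mirroring the enumeration versus controlled-sampling split described in the text.

```python
import itertools
import random
from dataclasses import dataclass
from typing import Callable, Dict, Iterator, List, Union


@dataclass
class FiniteDomain:
    """Closed set of allowed values (e.g. menu items, toggle states)."""
    values: List[str]

    def contains(self, v: str) -> bool:
        return v in self.values


@dataclass
class OpenDomain:
    """Unbounded values (e.g. file names) described by a sampler and a validator."""
    sampler: Callable[[random.Random], str]
    validator: Callable[[str], bool]

    def contains(self, v: str) -> bool:
        return self.validator(v)


Domain = Union[FiniteDomain, OpenDomain]


@dataclass
class Skill:
    app: str                   # target application (tau)
    intent: str                # natural-language intent I
    schema: Dict[str, Domain]  # argument schema A, each slot mapped to its domain D(A)

    def is_valid(self, args: Dict[str, str]) -> bool:
        # Check arguments against D(A) without executing anything.
        return set(args) == set(self.schema) and all(
            self.schema[k].contains(v) for k, v in args.items()
        )

    def generate_args(self, rng: random.Random, n_open: int = 1) -> Iterator[Dict[str, str]]:
        # Enumerate every combination of finite slots; sample open slots n_open times each.
        finite = [k for k, d in self.schema.items() if isinstance(d, FiniteDomain)]
        open_slots = [k for k, d in self.schema.items() if isinstance(d, OpenDomain)]
        for combo in itertools.product(*(self.schema[k].values for k in finite)):
            for _ in range(n_open):
                args = dict(zip(finite, combo))
                for k in open_slots:
                    args[k] = self.schema[k].sampler(rng)
                yield args


# Hypothetical example skill; names and domains are illustrative only.
save_as = Skill(
    app="Notepad",
    intent="Save the current document under a given file name and encoding",
    schema={
        "encoding": FiniteDomain(["UTF-8", "ANSI"]),
        "filename": OpenDomain(
            sampler=lambda r: f"report_{r.randint(1, 99)}.txt",
            validator=lambda s: s.endswith(".txt") and len(s) > len(".txt"),
        ),
    },
)
```

Because validity is checked against D(A) alone, a malformed argument such as an unsupported encoding can be rejected before any GUI action is attempted.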

Execution graph – For each skill, an execution graph Gₑ = (V, E) encodes one or more concrete realizations of the intent. Nodes represent internal control states; directed edges are labeled with base actions such as mouse clicks, keystrokes, or script calls, optionally guarded by UI predicates. The graph is parameterized by concrete argument values, enabling the same skill to adapt to different UI layouts, dialog prompts, or alternative interaction affordances. Edge weighting can express preferences for particular paths, facilitating robustness to UI changes without redefining the skill.
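A minimal sketch of executing such a graph, assuming a greedy policy that follows the highest-weight edge whose UI guard holds. The `Edge` type, the string action format (`"click:File"`, `"type:{filename}"`), and the static `ui` snapshot are illustrative assumptions rather than the paper's implementation; a real executor would re-observe the screen after every action instead of checking guards against one fixed set of visible elements.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set

UIState = Set[str]  # assumed: names of UI elements currently visible


@dataclass
class Edge:
    src: str
    dst: str
    action: str  # base-action template, e.g. "type:{filename}"
    guard: Callable[[UIState], bool] = lambda ui: True  # UI predicate; default: always enabled
    weight: float = 1.0  # higher weight = preferred path


def run_graph(edges: List[Edge], start: str, goal: str, args: Dict[str, str],
              ui: UIState, max_steps: int = 20) -> List[str]:
    """Walk from start to goal, taking the highest-weight enabled edge at each node,
    and return the concrete base actions with arguments filled in."""
    node, actions = start, []
    for _ in range(max_steps):
        if node == goal:
            break
        enabled = [e for e in edges if e.src == node and e.guard(ui)]
        if not enabled:
            raise RuntimeError(f"no enabled edge out of {node!r}")
        best = max(enabled, key=lambda e: e.weight)
        actions.append(best.action.format(**args))
        node = best.dst
    if node != goal:
        raise RuntimeError("step budget exhausted before reaching goal")
    return actions


# Hypothetical save-as skill with two realizations: a shortcut and a menu path.
save_as_graph = [
    Edge("start", "dialog", "hotkey:ctrl+s", guard=lambda ui: "editor_focused" in ui, weight=2.0),
    Edge("start", "menu", "click:File"),
    Edge("menu", "dialog", "click:Save As"),
    Edge("dialog", "typed", "type:{filename}"),
    Edge("typed", "done", "key:enter"),
]
print(run_graph(save_as_graph, "start", "done", {"filename": "a.txt"}, {"editor_focused"}))
# ['hotkey:ctrl+s', 'type:a.txt', 'key:enter']
print(run_graph(save_as_graph, "start", "done", {"filename": "a.txt"}, set()))
# ['click:File', 'click:Save As', 'type:a.txt', 'key:enter']
```

The same skill and arguments yield different action sequences depending on which guards hold, which is the kind of layout adaptation the weighted, guarded edges are meant to provide.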

Composition graph – Skills are not isolated; they are typically chained into higher‑level workflows. The composition graph G_c = (V_c, E_c) captures permissible transitions between skills, both within a single application and across applications. A path in G_c corresponds to a multi‑step task (e.g., “open file → copy → paste into a document”), providing a reusable procedural knowledge base that mirrors human habits.
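To make "a path in G_c is a multi-step task" concrete, the sketch below enumerates simple paths between two skills in a toy composition graph stored as an adjacency list. The skill identifiers (`notepad.open_file`, `word.paste`, and so on) and the depth-first enumeration are illustrative assumptions; the summary does not describe how the authors traverse or sample G_c.

```python
from typing import Dict, List

# Hypothetical composition graph: each skill maps to the skills allowed to follow it.
CompositionGraph = Dict[str, List[str]]


def workflows(g: CompositionGraph, start: str, end: str, max_len: int) -> List[List[str]]:
    """Depth-first enumeration of simple skill paths start -> end with at most max_len skills."""
    found: List[List[str]] = []

    def dfs(path: List[str]) -> None:
        node = path[-1]
        if node == end:
            found.append(list(path))
            return
        if len(path) == max_len:
            return
        for nxt in g.get(node, []):
            if nxt not in path:  # skip cycles: each skill appears at most once
                path.append(nxt)
                dfs(path)
                path.pop()

    dfs([start])
    return found


g: CompositionGraph = {
    "notepad.open_file": ["notepad.copy_all", "notepad.close"],
    "notepad.copy_all": ["word.paste", "notepad.close"],  # cross-application edge
    "word.paste": ["word.save"],
}
print(workflows(g, "notepad.open_file", "word.paste", max_len=4))
# [['notepad.open_file', 'notepad.copy_all', 'word.paste']]
```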

CUA‑Skill Agent – Built on top of the skill base, the agent runs a loop that integrates a large language model (LLM) planner M_p, a retrieval module R, and a memory store M. At each iteration: (1) the planner generates K natural‑language queries from the current UI observation and memory; (2) the retrieval module returns the top L candidate skills from the library; (3) a reranker selects the most promising option from among the retrieved skills and a small set of basic GUI primitives; (4) a configurator instantiates the selected skill’s arguments within their feasible domains; (5) the skill is executed either via a GUI grounding model or a script executor; (6) the outcome is summarized and appended to memory, so that subsequent steps are informed by past successes and failures. When execution fails, the memory‑aware recovery mechanism consults prior traces to propose alternative skills, reducing error propagation.
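The six-step loop can be sketched with toy components. This is a hedged illustration under several assumptions: the word-overlap `retrieve` function stands in for the retrieval module R, the reranker simply takes the first candidate not already marked as failed in memory, and `plan`, `configure`, and `execute` are caller-supplied stubs rather than the LLM planner, configurator, and grounding model the paper uses. All skill names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class Memory:
    # Each entry: (skill name, succeeded?, short natural-language summary)
    entries: List[Tuple[str, bool, str]] = field(default_factory=list)

    def failed(self) -> Set[str]:
        return {skill for skill, ok, _ in self.entries if not ok}


def retrieve(queries: List[str], library: Dict[str, str], top_l: int) -> List[str]:
    """Toy retriever R: rank skills by word overlap between queries and descriptions."""
    words = {w for q in queries for w in q.lower().split()}
    ranked = sorted(library, key=lambda s: -len(words & set(library[s].lower().split())))
    return ranked[:top_l]


def agent_step(observation: str, memory: Memory,
               plan: Callable[[str, Memory], List[str]],
               library: Dict[str, str], primitives: List[str],
               configure: Callable[[str, str], Dict[str, str]],
               execute: Callable[[str, Dict[str, str]], bool],
               top_l: int = 3) -> str:
    queries = plan(observation, memory)                # (1) planner emits K queries
    candidates = retrieve(queries, library, top_l)     # (2) top-L skills from the library
    # (3) toy reranker: first candidate (retrieved skills, then primitives)
    #     that memory does not already record as failed
    pool = [s for s in candidates + primitives if s not in memory.failed()]
    if not pool:
        raise RuntimeError("no untried skill or primitive left")
    skill = pool[0]
    args = configure(skill, observation)               # (4) argument instantiation
    ok = execute(skill, args)                          # (5) grounding model or script executor
    status = "ok" if ok else "failed"
    memory.entries.append((skill, ok, f"{skill}({args}) {status}"))  # (6) update memory
    return skill


# Hypothetical toy components for a two-step run.
library = {
    "notepad.save_as": "save the notepad file via the file menu",
    "notepad.save_hotkey": "save the notepad file with a keyboard shortcut",
    "excel.sort": "sort an excel column",
}
memory = Memory()
for _ in range(2):
    agent_step("notepad window with unsaved text", memory,
               plan=lambda obs, mem: ["save notepad file"],
               library=library, primitives=["click", "type"],
               configure=lambda skill, obs: {"filename": "notes.txt"},
               execute=lambda skill, args: skill != "notepad.save_as",
               top_l=2)
print([(s, ok) for s, ok, _ in memory.entries])
# [('notepad.save_as', False), ('notepad.save_hotkey', True)]
```

In this toy run the first retrieved skill fails, and on the next iteration memory excludes it, so the agent falls back to the alternative save skill. This is far simpler than consulting full prior traces, but it shows how recorded failures can steer later selection.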

Empirical evaluation – The authors evaluate two aspects. First, in a “trajectory generation” setting, CUA‑Skill achieves a 76.4% success rate, outperforming prior baselines by a factor of 1.7× to 3.6×. Second, on the challenging WindowsAgentArena benchmark, a suite of real‑world desktop tasks, the CUA‑Skill Agent attains a best‑of‑three success rate of 57.5%, establishing a new state of the art. Moreover, the agent requires significantly fewer execution steps to reach the same success level, indicating that the skill abstraction reduces unnecessary search and improves robustness.
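The helper below sketches how a best-of-three figure relates to single-run success, assuming the usual reading of best-of-three (a task counts as solved if at least one of three independent runs succeeds). The result matrix is hypothetical and does not reproduce the paper's numbers; it only shows why best-of-n is never lower than the average single-run rate.

```python
from typing import List


def best_of_n(runs: List[List[bool]]) -> float:
    """Fraction of tasks solved by at least one run; runs[t][r] is run r on task t."""
    return sum(any(task_runs) for task_runs in runs) / len(runs)


def mean_single_run(runs: List[List[bool]]) -> float:
    """Average success over every (task, run) pair."""
    attempts = sum(len(task_runs) for task_runs in runs)
    return sum(sum(task_runs) for task_runs in runs) / attempts


# Hypothetical results for four tasks, three runs each.
runs = [
    [True, False, False],
    [False, False, False],
    [False, True, True],
    [True, True, True],
]
print(best_of_n(runs), mean_single_run(runs))  # 0.75 0.5
```

Separately, if the 1.7× to 3.6× factors in the trajectory-generation setting are read as ratios of success rates, the compared baselines would sit at roughly 44.9% (76.4 / 1.7) down to 21.2% (76.4 / 3.6); the summary does not state those rates directly.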

Key contributions –

- CUA‑Skill library: a systematic, parameterized collection of hundreds of atomic skills spanning dozens of popular Windows applications, each equipped with execution and composition graphs that enable millions of concrete task variants.

- Skill‑centric agent architecture: dynamic skill retrieval, argument instantiation, and memory‑driven failure recovery, allowing scalable expansion of the skill set without hard‑coding tools into prompts.

- Performance gains: demonstrable improvements in both success rates and efficiency across multiple agent tasks, confirming that structured procedural knowledge is crucial for reliable desktop automation.
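The claim that hundreds of skills yield millions of concrete task variants follows from combinatorics: a skill's instantiations multiply across its argument slots, and chaining skills along composition edges multiplies again. The sketch below illustrates that counting with made-up domain sizes; it assumes open domains are capped at a fixed number of sampled values and considers only two-skill chains, neither of which the summary specifies.

```python
from math import prod
from typing import Dict, List, Tuple


def per_skill_variants(domain_sizes: Dict[str, List[int]]) -> Dict[str, int]:
    """Instantiations of each skill = product of its argument-domain sizes
    (open domains counted at an assumed sampling cap; no arguments -> 1)."""
    return {skill: prod(sizes) for skill, sizes in domain_sizes.items()}


def two_step_variants(per_skill: Dict[str, int], edges: List[Tuple[str, str]]) -> int:
    """Each composition edge a -> b contributes per_skill[a] * per_skill[b] chains."""
    return sum(per_skill[a] * per_skill[b] for a, b in edges)


# Hypothetical domain sizes: font names x styles, no args, columns x sort order.
sizes = {"word.set_font": [20, 3], "word.paste": [], "excel.sort_column": [10, 2]}
counts = per_skill_variants(sizes)
edges = [("excel.sort_column", "word.paste"), ("word.paste", "word.set_font")]
print(counts, two_step_variants(counts, edges))
# {'word.set_font': 60, 'word.paste': 1, 'excel.sort_column': 20} 80
```

With hundreds of skills, larger domains, and longer chains, such totals can grow into the millions.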

Future directions – The paper suggests extending the skill base to other operating systems, automating skill generation from demonstration data, integrating multimodal inputs (speech, vision), and exploring richer memory‑graph structures for even longer‑horizon reasoning. By providing a reusable, transferable procedural layer, CUA‑Skill lays a solid foundation for the next generation of general‑purpose digital assistants capable of robustly interacting with complex desktop environments.
