AMA: Adaptive Memory via Multi-Agent Collaboration

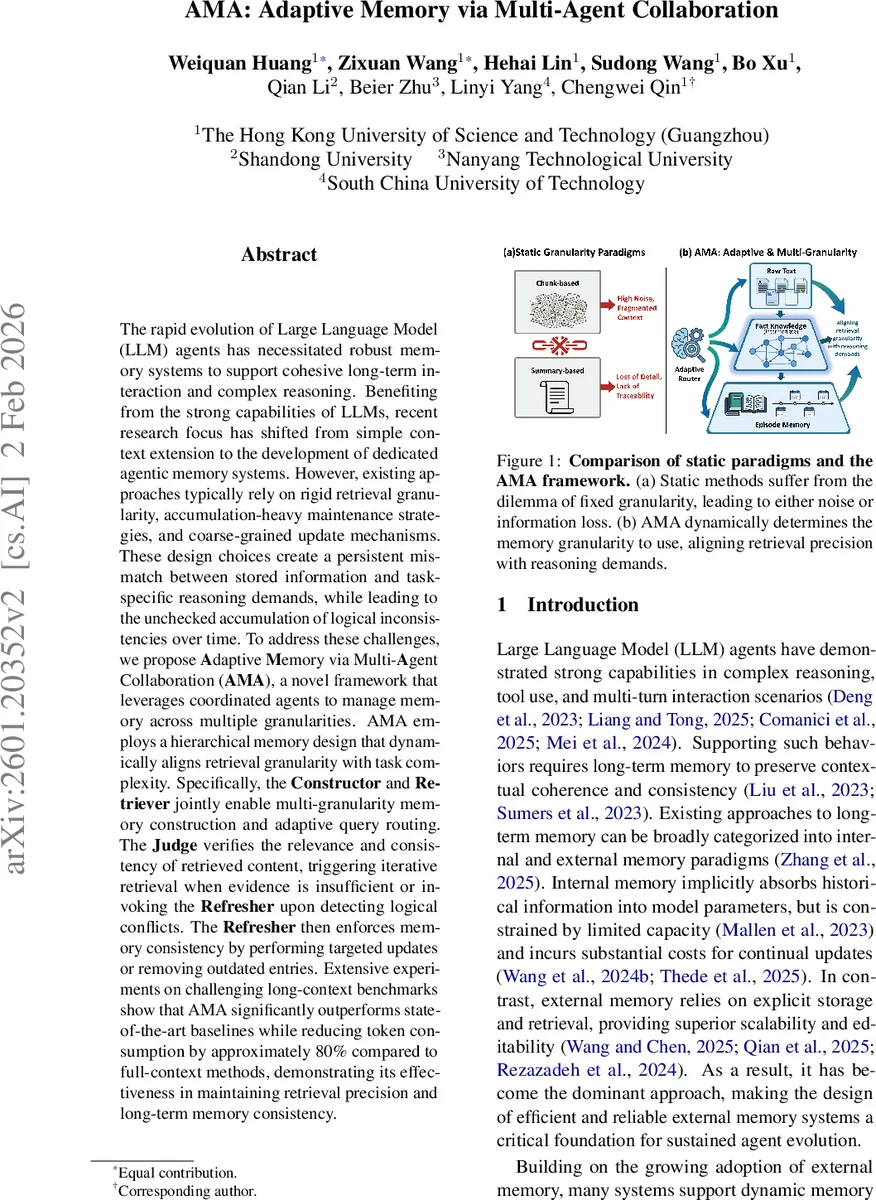

The rapid evolution of Large Language Model (LLM) agents has necessitated robust memory systems to support cohesive long-term interaction and complex reasoning. Benefiting from the strong capabilities of LLMs, recent research focus has shifted from simple context extension to the development of dedicated agentic memory systems. However, existing approaches typically rely on rigid retrieval granularity, accumulation-heavy maintenance strategies, and coarse-grained update mechanisms. These design choices create a persistent mismatch between stored information and task-specific reasoning demands, while leading to the unchecked accumulation of logical inconsistencies over time. To address these challenges, we propose Adaptive Memory via Multi-Agent Collaboration (AMA), a novel framework that leverages coordinated agents to manage memory across multiple granularities. AMA employs a hierarchical memory design that dynamically aligns retrieval granularity with task complexity. Specifically, the Constructor and Retriever jointly enable multi-granularity memory construction and adaptive query routing. The Judge verifies the relevance and consistency of retrieved content, triggering iterative retrieval when evidence is insufficient or invoking the Refresher upon detecting logical conflicts. The Refresher then enforces memory consistency by performing targeted updates or removing outdated entries. Extensive experiments on challenging long-context benchmarks show that AMA significantly outperforms state-of-the-art baselines while reducing token consumption by approximately 80% compared to full-context methods, demonstrating its effectiveness in maintaining retrieval precision and long-term memory consistency.

💡 Research Summary

The paper addresses the growing need for robust long‑term memory in large language model (LLM) agents that must sustain coherent multi‑turn interactions and complex reasoning. Existing external‑memory approaches typically rely on static chunking or coarse summaries, leading to a mismatch between the granularity of stored information and the granularity required for a given task. This mismatch causes either noisy retrieval when chunks are too large or fragmented logical dependencies when chunks are too fine, ultimately degrading reasoning performance.

To solve these problems, the authors propose Adaptive Memory via Multi‑Agent Collaboration (AMA), a framework that decomposes the memory lifecycle into four specialized agents: Constructor, Retriever, Judge, and Refresher.

Constructor converts the ongoing dialogue into three hierarchical memory levels: (1) Raw Text Memory, preserving the original utterance; (2) Fact Knowledge Memory, extracting structured subject‑verb‑object‑complement triples using predefined linguistic templates; and (3) Episode Memory, which is generated on demand when a topic shift, explicit request, or context‑window saturation is detected, yielding a high‑level summary of the conversation. Each entry is encoded into a dense vector for efficient similarity search.

Retriever first rewrites the user input into a self‑contained query and simultaneously produces a four‑dimensional intent vector (fine‑grained detail, abstract summary, event‑level, atomic fact). Based on this intent, a routing function selects the most appropriate memory granularity: raw text for fine‑detail queries, episode memory for abstract or event‑level queries, and fact knowledge for all other cases. The selected memory store is then queried using cosine similarity with the query embedding, returning a dynamic number of top‑k results.

Judge evaluates the relevance of the retrieved results and checks for logical conflicts (e.g., contradictory facts or temporal inconsistencies). If relevance is low, it triggers iterative retrieval with an adjusted k. If a conflict is detected, it signals the Refresher.

Refresher performs targeted updates: it may rewrite a conflicting fact, delete obsolete entries, or insert newly inferred knowledge. This logic‑driven maintenance loop prevents the unchecked accumulation of errors and keeps the memory consistent over long interactions.

The system operates in a closed loop: user input → Retriever → Judge → (optional Refresher) → Constructor → updated memory. By separating concerns into distinct agents, AMA achieves fine‑grained control over retrieval, verification, and evolution without the entanglement typical of monolithic controllers.

Experiments on several long‑context benchmarks—including multi‑turn question answering, extended chat simulations, and explicit knowledge‑update tasks—show that AMA consistently outperforms strong baselines such as MemGPT, Mem0, Nemori, and Zep. It achieves up to 90 % accuracy on knowledge‑update scenarios and reduces token consumption by roughly 80 % compared to feeding the full conversation context to the LLM. Ablation studies confirm that the Judge‑Refresher feedback loop and the multi‑granularity routing are the primary contributors to performance gains.

The paper also discusses limitations: dependence on carefully crafted prompts and LLM inference cost, scalability of vector‑based retrieval for massive memory stores, and sensitivity to the quality of episode summaries. Nonetheless, AMA demonstrates that a multi‑agent, hierarchical memory architecture can reconcile retrieval precision with long‑term consistency, offering a promising foundation for future LLM agents in domains such as medical counseling, legal assistance, and personalized education.

Comments & Academic Discussion

Loading comments...

Leave a Comment