Beyond Marginal Distributions: A Framework to Evaluate the Representativeness of Demographic-Aligned LLMs

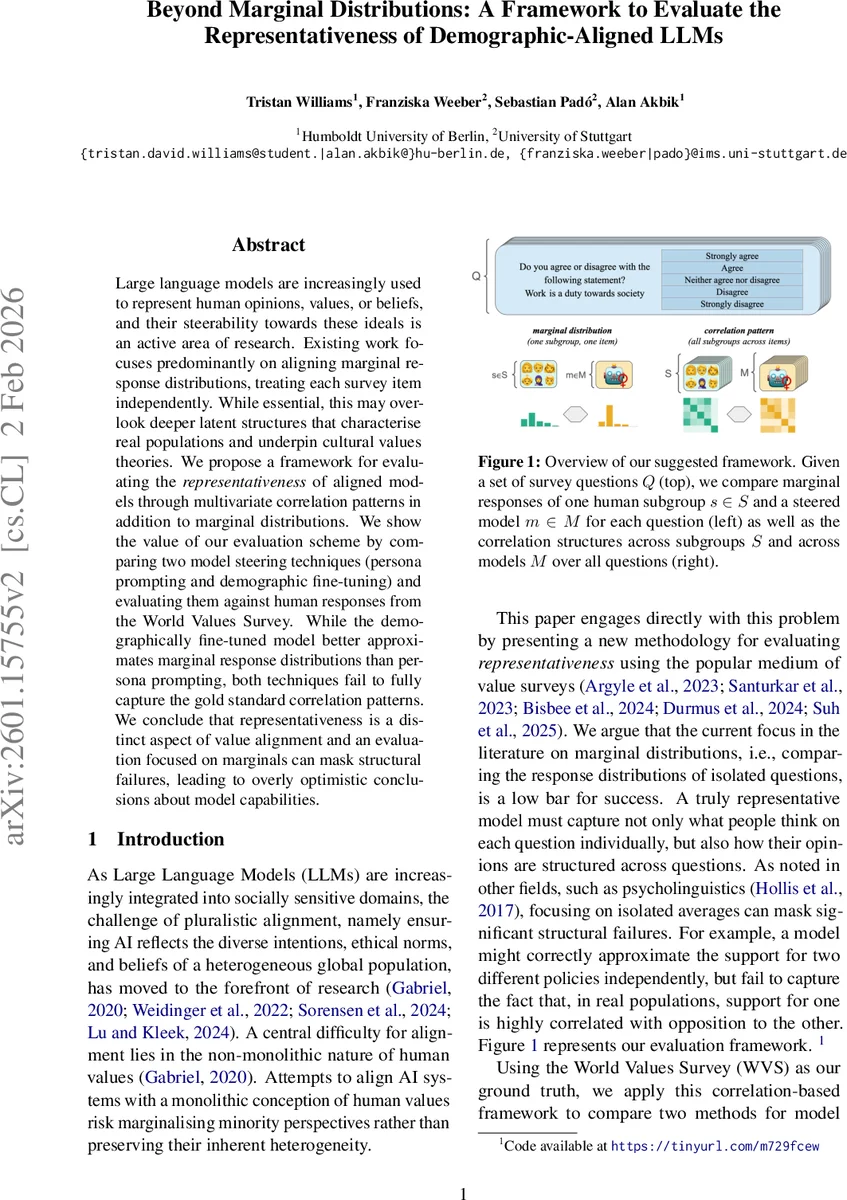

Large language models are increasingly used to represent human opinions, values, or beliefs, and their steerability towards these ideals is an active area of research. Existing work focuses predominantly on aligning marginal response distributions, treating each survey item independently. While essential, this may overlook deeper latent structures that characterise real populations and underpin cultural values theories. We propose a framework for evaluating the representativeness of aligned models through multivariate correlation patterns in addition to marginal distributions. We show the value of our evaluation scheme by comparing two model steering techniques (persona prompting and demographic fine-tuning) and evaluating them against human responses from the World Values Survey. While the demographically fine-tuned model better approximates marginal response distributions than persona prompting, both techniques fail to fully capture the gold standard correlation patterns. We conclude that representativeness is a distinct aspect of value alignment and an evaluation focused on marginals can mask structural failures, leading to overly optimistic conclusions about model capabilities.

💡 Research Summary

This paper addresses a critical gap in the evaluation of large language models (LLMs) that are being steered to reflect human opinions, values, or beliefs. While most prior work assesses alignment by comparing marginal response distributions—treating each survey item independently—the authors argue that true representativeness must also capture the multivariate correlation patterns that underlie cultural‑value theories in the social sciences. To this end, they propose a comprehensive evaluation framework that jointly measures (1) marginal similarity between model‑generated and human response distributions and (2) the fidelity of the correlation structure across survey questions.

The marginal component is quantified by a per‑question distance d (e.g., Jensen‑Shannon, Wasserstein) averaged over all questions, yielding a dissimilarity score D(P_m, P_s) ranging from 0 (perfect match) to 1 (maximal divergence). Diversity is additionally captured via a normalized variance metric V, which highlights whether a model’s outputs are as varied as real human answers. For the higher‑order component, the authors compute question‑question correlation matrices C_true (human) and C_sim (model) from mean response vectors. Two complementary metrics compare these matrices: the Pearson correlation ρ between the vectorized upper‑triangular entries (reflecting relative structure) and the root‑mean‑square error (RMSE) (reflecting absolute magnitude).

Using this framework, the study conducts a systematic comparison of two popular steering techniques: (a) persona prompting, where a demographic “persona” is injected via prompt engineering, and (b) demographic fine‑tuning, exemplified by OpinionGPT, which employs LoRA adapters trained on Reddit sub‑communities representing specific demographic groups. Both methods are evaluated on ten demographic sub‑populations (gender, age, region, political orientation) against the World Values Survey (WVS), a well‑established cross‑national instrument comprising 193 multiple‑choice items. The baseline model is the unsteered phi‑3 LLM.

Results show that fine‑tuned models achieve substantially lower marginal dissimilarity scores than both the unsteered baseline and persona‑prompted models, indicating a better approximation of individual question distributions. They also preserve response diversity more faithfully. However, when the correlation structure is examined, neither steering approach reaches the level of human data. Both exhibit low Pearson correlations and high RMSE values, especially for question pairs that encode traditionalist versus modernist worldviews, authority versus liberty, and other culturally salient dimensions. Persona prompting often produces overly stereotyped or narrowly clustered responses, further degrading structural fidelity.

These findings lead to three key insights. First, representativeness is a distinct dimension of alignment that cannot be inferred from marginal metrics alone. Second, demographic fine‑tuning, while beneficial for marginal alignment, does not automatically endow a model with the latent multivariate patterns characteristic of real populations. Third, current steering techniques are insufficient for capturing the complex, inter‑question dependencies that define cultural value systems.

The paper contributes (i) a novel, reproducible evaluation protocol that integrates marginal and correlation analyses, and (ii) an empirical benchmark that reveals hidden failures of widely used steering methods. The authors recommend future work to explore (a) structural fine‑tuning objectives that directly penalize correlation mismatches, (b) multi‑task or meta‑learning approaches that can internalize higher‑order value patterns, and (c) richer human data sources (e.g., narrative responses, longitudinal surveys) to guide models toward genuine cultural representativeness. By highlighting the limitations of current practices, the study paves the way for more nuanced, socially responsible alignment of LLMs with the diverse tapestry of human values.

Comments & Academic Discussion

Loading comments...

Leave a Comment