UM-Text: A Unified Multimodal Model for Image Understanding and Visual Text Editing

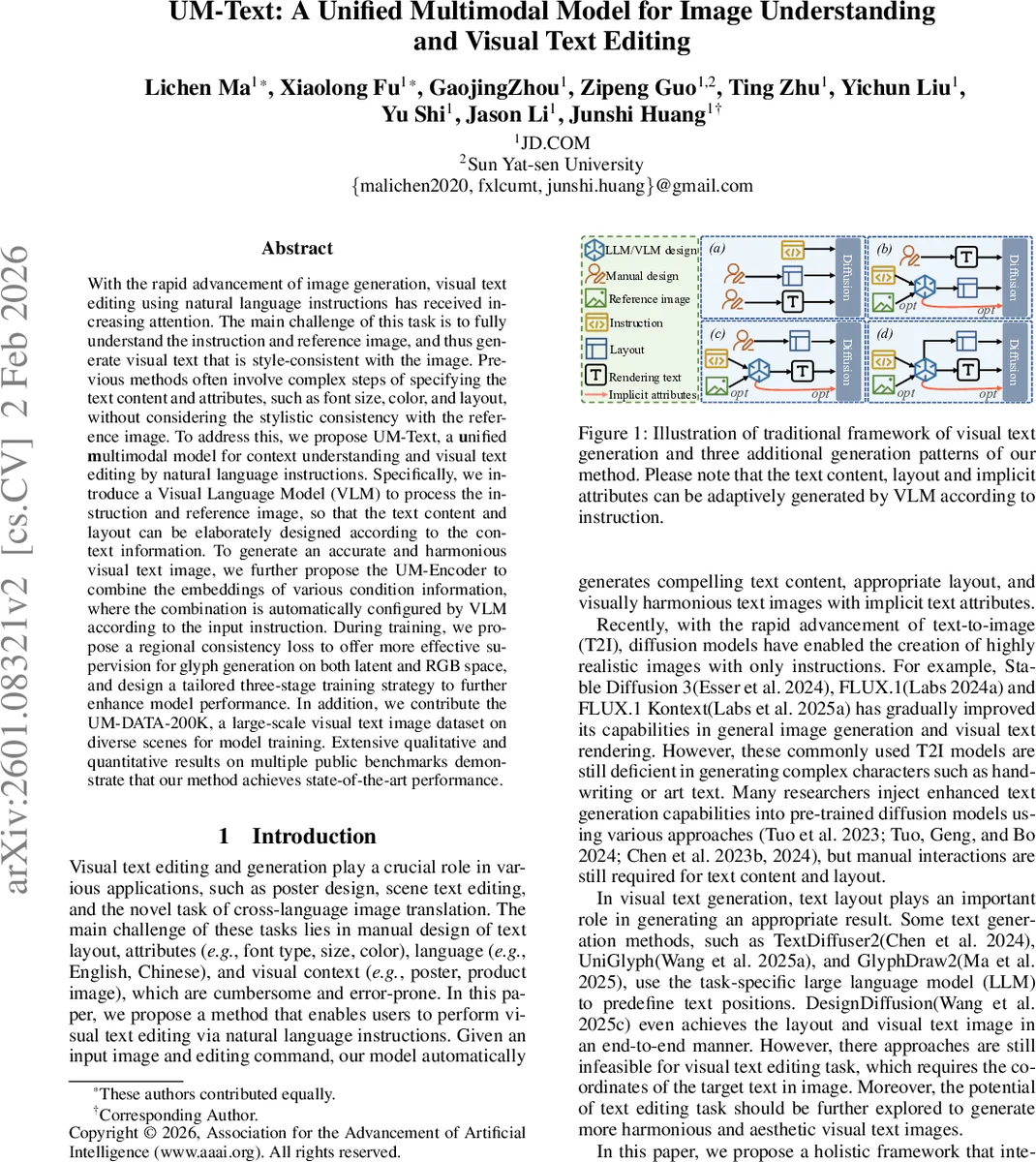

With the rapid advancement of image generation, visual text editing using natural language instructions has received increasing attention. The main challenge of this task is to fully understand the instruction and reference image, and thus generate visual text that is style-consistent with the image. Previous methods often involve complex steps of specifying the text content and attributes, such as font size, color, and layout, without considering the stylistic consistency with the reference image. To address this, we propose UM-Text, a unified multimodal model for context understanding and visual text editing by natural language instructions. Specifically, we introduce a Visual Language Model (VLM) to process the instruction and reference image, so that the text content and layout can be elaborately designed according to the context information. To generate an accurate and harmonious visual text image, we further propose the UM-Encoder to combine the embeddings of various condition information, where the combination is automatically configured by VLM according to the input instruction. During training, we propose a regional consistency loss to offer more effective supervision for glyph generation on both latent and RGB space, and design a tailored three-stage training strategy to further enhance model performance. In addition, we contribute the UM-DATA-200K, a large-scale visual text image dataset on diverse scenes for model training. Extensive qualitative and quantitative results on multiple public benchmarks demonstrate that our method achieves state-of-the-art performance.

💡 Research Summary

The paper introduces UM‑Text, a unified multimodal framework that tackles visual text editing—a task that requires both understanding of a natural‑language instruction and the visual context of an image, and then generating or modifying text in the image so that it matches the style of the surrounding content. The core architecture consists of three components: (1) a Visual Language Model called UM‑Designer, which jointly encodes the instruction (via a pretrained T5 encoder) and the reference image (via a ViT‑style visual encoder) and predicts three kinds of outputs—text content, bounding‑box layout, and implicit attributes such as font, size, and color; (2) a UM‑Encoder that aggregates multiple condition embeddings—T5 text embeddings, character‑level visual embeddings extracted from rendered glyph images using an OCR model, and the multimodal embeddings from UM‑Designer—into a single “UM‑Embedding” through a series of attention‑based fusion layers; (3) a flow‑matching diffusion model that takes the UM‑Embedding as conditioning and iteratively denoises latent representations to synthesize the final edited image.

To supervise fine‑grained glyph quality, the authors propose a Regional Consistency Loss (RC‑Loss) that measures L2 distance between predicted and ground‑truth glyph regions in both latent space and RGB space, using masks supplied by UM‑Designer or manual annotations. Training proceeds in three stages: (i) pre‑training UM‑Designer on a large synthetic dataset (UM‑DATA‑200K) to learn layout planning and text generation; (ii) pre‑training the diffusion backbone on generic image reconstruction; (iii) joint fine‑tuning of the whole pipeline with the RC‑Loss and a flow‑matching objective to align the generated velocity fields with the ideal diffusion trajectory.

The dataset construction pipeline is noteworthy: starting from 40 million product posters, the authors filter by aesthetic scores, segment main objects with SAM2, extract text via PPOCRv4, and then clean the images using FLUX‑Fill, finally curating 200 k high‑quality pairs that cover diverse scenes, languages (English, Chinese, Korean), and complex typographic styles.

Extensive experiments on public visual‑text editing benchmarks and on the authors’ multilingual test set show that UM‑Text outperforms prior state‑of‑the‑art methods such as TextDiffuser, GlyphDraw, AnyText, and UniGlyph. Gains are reported in PSNR/SSIM, OCR‑based text accuracy (up to 12 % absolute improvement), and human preference studies (15 % higher style‑consistency scores). Qualitative results demonstrate robust handling of intricate scripts, handwritten calligraphy, and cross‑language translation within a single image.

The paper also discusses limitations: reliance on large‑scale VLM pre‑training, memory overhead for high‑resolution images, and current focus on 2‑D editing. Future directions include lightweight VLM designs, memory‑efficient high‑resolution diffusion, and extending the framework to video or 3‑D texture editing.

In summary, UM‑Text presents a comprehensive solution that unifies multimodal understanding and diffusion‑based generation for visual text editing, leveraging a novel encoder for condition aggregation, a region‑wise consistency loss, and a carefully staged training regimen, achieving state‑of‑the‑art performance across diverse languages and typographic complexities.

Comments & Academic Discussion

Loading comments...

Leave a Comment